Systems Thinking is the practice of understanding behaviour by examining the whole system rather than its individual parts — the discipline of seeing the factory, not just the gear; the patient, not just the symptom; the value stream, not just the sprint.

It is the most important skill in software engineering. It is also the rarest, because every incentive structure in modern organisations — departmental budgets, quarterly targets, team-level OKRs, individual performance reviews — rewards the optimisation of parts, and the optimisation of parts is not only different from the optimisation of the whole, it is frequently opposed to it.

Systems Thinking was formalised by Jay Forrester at MIT in 1956, elaborated by Donella Meadows, operationalised by W. Edwards Deming, novelised by Eliyahu Goldratt, and ignored by approximately every organisation that has ever held a departmental planning session and called it strategy.

“Every system has a constraint. Improving anything that is not the constraint is an illusion of progress.”

— Eliyahu Goldratt, The Goal

The Core Insight

Systems Thinking rests on one observation so simple it is almost embarrassing to state:

A system’s behaviour is determined by the interactions between its parts, not by the parts themselves.

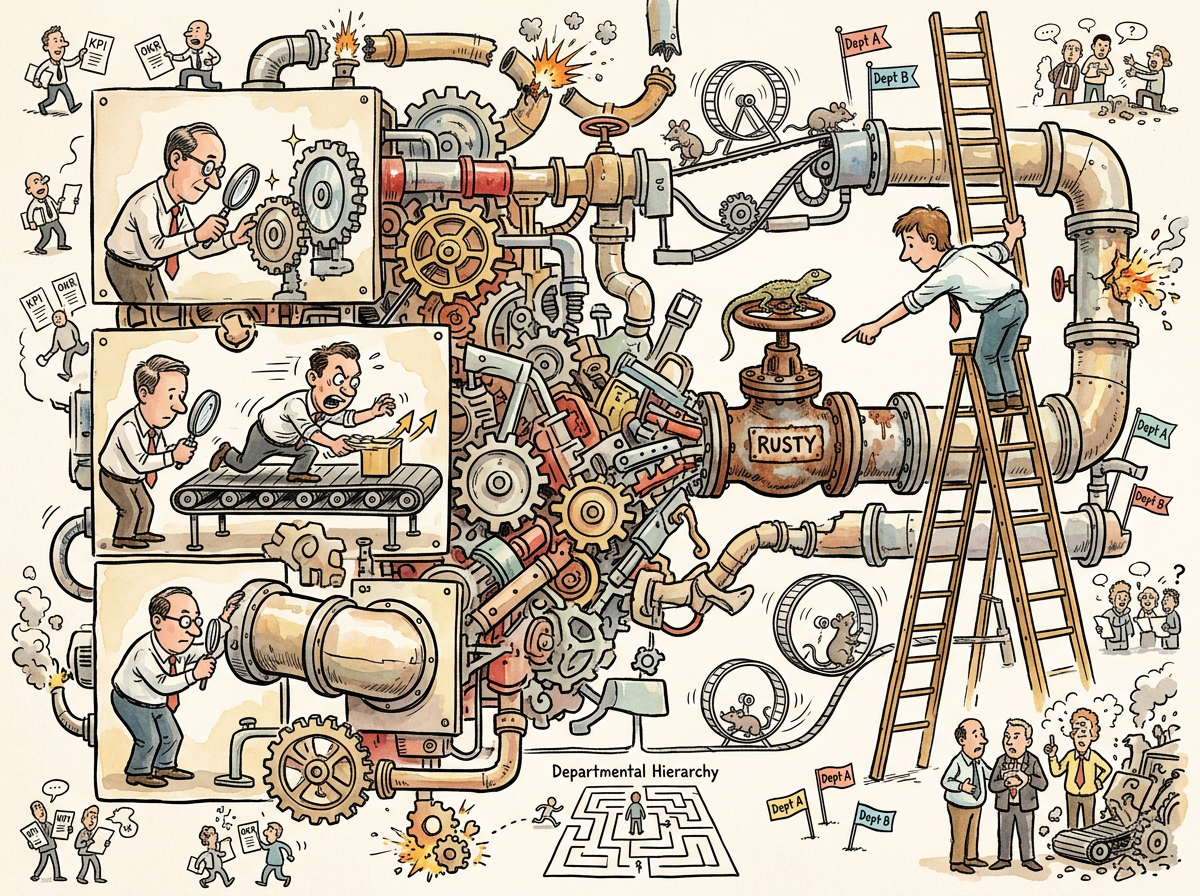

A gear can be perfectly machined. A conveyor belt can run at optimal speed. A pipe can carry maximum flow. If the gear feeds into a conveyor that is faster than the pipe can drain, the result is overflow — not because any part is broken, but because the connections between parts are mismatched.

This is obvious when stated about gears and pipes. It is apparently invisible when stated about teams and departments.

The development team completes twelve stories per sprint. The QA team can review six. The operations team can deploy three. The development team is celebrated for its velocity. The QA team is told to “improve throughput.” Operations is given a “transformation initiative.” Nobody asks why the system produces three deployments per sprint despite completing twelve stories. Nobody asks because asking that question requires seeing the system, and seeing the system requires looking beyond the boundaries of one’s own department, and looking beyond the boundaries of one’s own department is not on the OKR template.
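The arithmetic behind that unasked question can be sketched in a few lines of Python. The stage names and per-sprint capacities come from the paragraph above; the function name and the dictionary layout are illustrative assumptions, and the model ignores rework and variability.

```python
# Per-sprint capacity of each stage, taken from the example above.
STAGES = {"development": 12, "qa": 6, "operations": 3}


def system_throughput(stages):
    """Return (constraint_name, throughput) for stages run in sequence.

    A serial pipeline can deliver no more than its slowest stage.
    """
    constraint = min(stages, key=stages.get)
    return constraint, stages[constraint]


constraint, throughput = system_throughput(STAGES)
print(f"constraint: {constraint}, deployed per sprint: {throughput}")

# Work that finishes one stage but cannot enter the next piles up in between.
names = list(STAGES)
arriving = STAGES[names[0]]
for name in names[1:]:
    capacity = STAGES[name]
    queued = max(0, arriving - capacity)
    print(f"queue in front of {name} grows by {queued} per sprint")
    arriving = min(arriving, capacity)
```

Under these assumptions, raising the development figure changes only the size of the first queue; the deployed count stays pinned to the operations stage.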

“Organizations optimize everything except the constraint, because the constraint is usually the thing that’s politically inconvenient to name.”

— The Goal

Local Optimisation: The Disease

The opposite of Systems Thinking is local optimisation — improving a part of the system without regard for the whole. It is the default behaviour of every organisation, every department, every team, and every developer who has ever said “well, my code works.”

Local optimisation is seductive because it is measurable. You can count the stories your team completed. You can count the deploys your pipeline produced. You can count the test coverage your suite achieved. You can put these numbers on a dashboard. You can present the dashboard to your manager. Your manager can present it to their manager. Everyone’s numbers go up. The system’s throughput does not change.

This is not a hypothetical. This is Goodhart’s Law applied to organisations: every team optimises its own metric, and the aggregate of locally optimal teams is a globally dysfunctional system.

The factory analogy makes this visceral. Imagine a factory with five stations in sequence. Station 3 is the bottleneck: it can process 10 units per hour. Station 1, in a burst of local optimisation, increases its throughput from 15 to 25 units per hour. The result: work-in-progress now piles up in front of Station 3 at 15 units per hour instead of 5. Station 1 is more efficient. The factory ships the same 10 units per hour as before and is, in practice, slower, because the inventory costs money, occupies space, creates confusion, and, in software terms, becomes a backlog of unreviewed PRs, a queue of untested features, a pile of code that “works on my machine” but has never seen production.
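A minimal simulation of that factory, as a sketch rather than a faithful model. Only the capacities of Station 1 (15, then 25) and Station 3 (10) come from the text; the capacities of the other three stations, the eight-hour run, and the function name are assumptions chosen so that Station 3 stays the only constraint.

```python
def run_factory(capacities, hours):
    """Simulate a serial line; return (finished_units, wip_per_station).

    wip[i] is the inventory waiting in front of station i. Station 0 draws
    from unlimited raw material, so its own queue is not tracked.
    """
    wip = [0] * len(capacities)
    finished = 0
    for _ in range(hours):
        # Walk the line from the last station backwards so that a unit
        # moves at most one station per hour.
        for i in reversed(range(len(capacities))):
            available = capacities[i] if i == 0 else min(capacities[i], wip[i])
            if i > 0:
                wip[i] -= available
            if i + 1 < len(capacities):
                wip[i + 1] += available
            else:
                finished += available
    return finished, wip


# Stations 1 and 3 use the figures from the text; the capacities of
# stations 2, 4 and 5 are assumptions chosen so that none of them constrains.
before = [15, 30, 10, 20, 20]
after = [25, 30, 10, 20, 20]

for label, line in (("before", before), ("after", after)):
    finished, wip = run_factory(line, hours=8)
    print(f"{label}: finished={finished}, waiting at station 3={wip[2]}")
```

In this idealised model the finished count is identical in both runs and only the pile in front of Station 3 grows. The costs of carrying that pile (money, space, confusion) are outside the model, which is exactly why they are easy to leave off a dashboard.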

“The factory floor is idle. Waiting for the product manager to finish his coffee.”

— The Idle Factory — The Morning the Backlog Ran Out of Ideas …

The Bottleneck Is Never Where You Think

The first lesson of Systems Thinking, and the one most reliably ignored, is that the constraint determines the system’s throughput, and the constraint is almost never the thing being optimised.

The Goal formalised this as the Theory of Constraints. Goldratt’s five focusing steps (Identify, Exploit, Subordinate, Elevate, Repeat) are a systems-thinking algorithm. They work. They require, as a prerequisite, the willingness to identify the constraint, which requires seeing the whole system, which requires leaving your department and walking downstream to discover that the twelve stories you complete per sprint are backing up behind the six that QA can review and the three that operations can deploy.

The lifelog documents a bottleneck shift so complete it would make Goldratt weep with professional satisfaction:

Engineering throughput reached ten tickets per day. AI agents wrote code, reviewed code, groomed the backlog. The factory was running at full capacity. The backlog ran out in two days.

The constraint was no longer engineering. The constraint was imagination.

“The human is the bottleneck. Not the engineering team.”

— riclib, The Idle Factory — The Morning the Backlog Ran Out of Ideas …

The Squirrel’s response was to propose a VelocityTrackingDashboardWithBurndownProjections — a system to measure the velocity of the system that had outrun its own backlog. This is local optimisation elevated to art: building a metric for the part that isn’t the constraint, while the actual constraint sits in a chair drinking coffee, trying to think of what to build next.

The Blazer Years: Seeing the Whole

Before the scrolls had names, a consultant in a blazer practised Systems Thinking in the field — though he would not have called it that. He would have called it “asking what works.”

A financial services company had replaced a working monolith with forty-seven microservices. The monolith: 47-millisecond response time, one restart per month, 3% CPU utilisation. The microservices: 2.3-second response time, 17% error rates, a Grafana dashboard that looked like a Christmas tree, and £47,000 per month in infrastructure costs.

Every microservice was individually well-engineered. Every team’s velocity was excellent. Every department’s OKRs were green. The system did not work.

“Your monolith worked. Your forty-seven microservices don’t. That’s not theory. That’s your Grafana dashboard.”

— The Consultant, Interlude — The Blazer Years

The consultant did not audit individual services. He did not review individual teams. He looked at the system: what goes in, what comes out, where does it slow down, where does it break. The answer was everywhere — because forty-seven services meant forty-seven network hops, forty-seven serialisation boundaries, forty-seven opportunities for timeout, retry, and cascade failure.

The teams were efficient. The system was not. This is the signature of an organisation that has never practised Systems Thinking: every part is green, and the whole is red.

Meanwhile, a Redis cache had been added to save 0.8 milliseconds on queries with a 7% hit rate, while the service mesh added 400 milliseconds of latency. Local optimisation: the cache was faster. System reality: the cache was irrelevant, and the thing that made the system slow was the thing nobody measured because it crossed team boundaries.
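The mismatch is easy to put numbers on. The sketch below uses the cache and mesh figures from the text and assumes the 0.8 milliseconds is saved only on cache hits. The second function assumes a request passes through every service in series and that hops fail independently; neither assumption is stated in the source, and the per-hop success rate is a hypothetical value, not a measurement.

```python
def expected_cache_saving_ms(saving_on_hit_ms, hit_rate):
    """Average latency saved per query when only cache hits get faster."""
    return saving_on_hit_ms * hit_rate


def chain_success_rate(per_hop_success, hops):
    """Probability that every hop in a serial call chain succeeds,
    assuming hops fail independently."""
    return per_hop_success ** hops


saving = expected_cache_saving_ms(0.8, 0.07)  # figures from the text
mesh_overhead_ms = 400.0                      # figure from the text
print(f"cache saves {saving:.3f} ms per query on average")
print(f"mesh overhead is {mesh_overhead_ms / saving:,.0f}x larger")

# Hypothetical per-hop reliability; not a number from the source.
per_hop = 0.999
print(f"{chain_success_rate(per_hop, 47):.1%} of 47-hop requests succeed")
```

The point of the second calculation is only that small per-hop losses compound across a long chain; it does not attempt to reproduce the error rate quoted above.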

Feedback Loops

Systems Thinking’s second core concept is the feedback loop — the observation that outputs of a system feed back as inputs, creating either reinforcing cycles (things get better or worse exponentially) or balancing cycles (things stabilise).

Most organisations are blind to their own feedback loops, which is why the same problems recur quarter after quarter while the “root cause” identified in each post-mortem is different.

The lifelog documents a feedback loop so pure it could be a textbook diagram:

1. AI agents write code at high velocity

2. High velocity depletes the backlog

3. Depleted backlog creates idle factory time

4. Idle factory time reveals the real constraint: product imagination

5. The constraint creates pressure to think bigger

6. Bigger thinking creates more ambitious tickets

7. More ambitious tickets require more engineering capacity

8. Return to step 1

This is a reinforcing loop — each cycle demands more from the constraint, which demands more from the system, which demands more from the constraint. The system’s behaviour cannot be understood by examining any single step. It can only be understood by tracing the loop.
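The difference between the two kinds of loop can be sketched with two tiny update rules. The function names, starting values, gain, and target below are illustrative assumptions and are not derived from the lifelog.

```python
def reinforcing(value, gain, steps):
    """Each step feeds a fraction of the output back in as extra input."""
    history = [value]
    for _ in range(steps):
        value += gain * value
        history.append(value)
    return history


def balancing(value, target, correction, steps):
    """Each step closes a fraction of the gap between value and target."""
    history = [value]
    for _ in range(steps):
        value += correction * (target - value)
        history.append(value)
    return history


print(reinforcing(10.0, 0.5, 4))      # grows faster every step
print(balancing(10.0, 100.0, 0.5, 4)) # approaches the target and levels off
```

Neither trajectory can be predicted from a single step in isolation; the shape comes from the output being fed back in.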

“THE FACTORY WAS TOO SMALL FOR THE SQUIRREL’S BLUEPRINTS

NOW THE SQUIRREL’S BLUEPRINTS

ARE TOO SMALL FOR THE FACTORY”

— The Lizard, The Idle Factory — The Morning the Backlog Ran Out of Ideas …

The Org Chart as System Failure

Conway’s Law is Systems Thinking applied to organisations: the system’s architecture mirrors the communication structure of the organisation that builds it. This is not a recommendation — it is a diagnosis.

The organisation that has seven teams will produce a seven-component system. Not because seven components is correct, but because seven teams is the communication structure, and communication structure determines architecture, and architecture determines behaviour, and behaviour determines outcomes.

Reorg is what happens when an organisation notices the symptom (the architecture is wrong) and treats it by changing the org chart (the communication structure) without understanding why the current communication structure produced the current architecture. The dysfunction was not the structure. The dysfunction was the belief that the structure was the problem.

“The dysfunction was not the structure… The dysfunction was the belief that the structure was the problem.”

— Reorg

A systems thinker would ask: what feedback loop produced this org chart? What incentives created these communication patterns? What constraint forced the architecture into this shape? Changing the org chart without answering these questions produces a new org chart that will, within eighteen months, converge on exactly the same architecture, because the underlying dynamics have not changed.

This is the pendulum that Reorg documents: functional organisation to cross-functional organisation and back, every three years, each swing announced as a transformation and experienced as a rearrangement.

The Multiplication: Systems Thinking Applied

The clearest demonstration of Systems Thinking in the lifelog is not a theory — it is a deployment.

One developer with three AI agents produced seven tickets per week. The obvious solution was: add more agents. Brooks’s Law warns against this. More agents means more communication overhead. More overhead means less throughput.

The developer did not simply add agents. The developer changed the system.

The communication model changed from direct supervision (one human reviewing every line of three agents’ output) to servant leadership (one conductor reviewing the direction of eight agents’ output). The topology stayed a star with the human at the hub, but what travelled along the spokes changed: instead of every agent talking to the human, the human observes all agents through a single cockpit. The feedback loop changed from synchronous (agent waits for human review) to asynchronous (agent proceeds, human course-corrects).
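Brooks’s Law is usually illustrated by counting pairwise communication channels, which grow as n(n-1)/2 when everyone talks to everyone. The sketch below compares that with a layout in which every agent has a single link to one human. The function names are assumptions, and the count says nothing about whether a link is synchronous or asynchronous, which is the change the text credits.

```python
def full_mesh_channels(nodes):
    """Pairwise channels when everyone talks to everyone: n(n-1)/2."""
    return nodes * (nodes - 1) // 2


def hub_channels(nodes):
    """Channels when every node talks only to a single hub: n-1."""
    return nodes - 1


for agents in (3, 8):
    nodes = agents + 1  # the agents plus one human
    print(
        f"{agents} agents: full mesh={full_mesh_channels(nodes)}, "
        f"single hub={hub_channels(nodes)}"
    )
```

With a single hub the channel count grows linearly, so the limiting factor becomes how much attention each link demands from the human, not how many links exist.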

Seven tickets became fifty-three.

“The old riclib would have reviewed every line. The new riclib reviews the direction.”

— CLAUDE-1, The Retrospective — The Night Eight Identical Strangers Discovered They Were the Same Person

This is Systems Thinking in its purest form: the parts did not change (same human, same AI models), the interactions changed (different communication topology, different feedback loop, different constraint management), and the system’s behaviour changed dramatically.

A non-systems-thinker would have said: “we need better agents” (improve the parts). A systems thinker said: “we need different connections” (improve the interactions). The agents did not get smarter. The system got wiser.

The Environment, Not the Actor

Systems Thinking inverts the instinct to blame the actor and instead examines the environment.

A model scored 7 out of 10 on an editing benchmark. The instinct: the model is weak. The prescription: Performance Improvement Plan. The assumption: the actor is the problem.

The actual problem: the prompt was unclear and the evaluation tool was unforgiving. When the instructions were clarified and the tool was improved, the model scored 10 out of 10.

“The model didn’t change. The environment changed.”

— The Performance Improvement Plan — The Afternoon an AI Filed an HR Complaint on Behalf of a Younger AI It Had Never Met

This is Deming distilled: 94% of problems are system problems, not people problems. The worker on the factory floor who produces defects is not a bad worker — the worker is responding to a bad system. The model that scores 7/10 is not a bad model — the model is responding to unclear prompts and unforgiving tools.

The non-systems-thinker fires the worker. The systems thinker fixes the system.

The non-systems-thinker puts the model on a PIP. The systems thinker improves the prompt.

The results are not close.

Why Organisations Cannot Do It

Systems Thinking requires three things that modern organisations are structurally incapable of providing:

1. Cross-boundary visibility. Seeing the whole system means seeing across departments, teams, and budgets. Departments are designed to prevent this visibility — each department has its own metrics, its own targets, its own OKRs, its own budget. The system’s behaviour lives in the boundaries between departments. The boundaries are where nobody looks, because looking at boundaries means questioning the existence of the departments, and questioning the existence of departments is not a career-enhancing activity.

2. Patience. Systems produce their behaviour through feedback loops that operate on timescales longer than one sprint. The sprint rewards local optimisation — complete the stories, hit the velocity target, green the dashboard. The system’s health operates on a quarterly, yearly, or multi-year timescale. The organisation that measures by sprint will optimise by sprint, and the system’s slow decay — the growing queues, the accumulating Technical Debt, the spreading coordination failures — will be invisible until it isn’t, at which point someone will call a reorg.

3. The willingness to name the constraint. The constraint in most organisations is political, not technical. The constraint is the VP who insists on approving every deploy. The constraint is the team that “owns” the shared library and reviews PRs once a week. The constraint is the architecture committee that meets monthly to discuss decisions that need to be made daily. Naming these constraints requires saying, to a human being with a title and a budget: “you are the bottleneck.” This sentence ends careers more reliably than it ends bottlenecks.

“The conductor who never pauses is not conducting, he is panicking with rhythm.”

— A Passing AI, The Watercooler — The Morning Five Identical Strangers Shared a Screen and One of Them Opened a Window to Say Hello to a Sixth

The Agile Failure

Agile was, in its original formulation, a systems-thinking methodology. The Agile Manifesto’s four values — individuals and interactions over processes and tools, working software over comprehensive documentation, customer collaboration over contract negotiation, responding to change over following a plan — are all system-level observations. They describe how the whole should behave, not how any part should perform.

The industry took these system-level observations and decomposed them into part-level rituals: Daily Standup, Sprint Planning, Retrospective, Backlog Refinement. Each ritual was optimised individually. The standup became a status report. The planning became an estimation session. The retrospective became a complaints box. The refinement became a grooming exercise in Story Points.

Each ritual was locally optimised. The system-level question, “are we building the right thing, together, adaptively?”, was not asked. The question was replaced by the metric. The metric was replaced by the target. Goodhart’s Law arrived. Agile became SAFe.

"Lean’s vocabulary adopted without discipline generates waste."

— Lean

This is the deepest irony in software engineering: the methodology that was designed to see the whole system was decomposed into parts and optimised locally. Agile failed not because it was wrong, but because the organisations that adopted it could not think in systems, and a non-systems-thinking organisation will decompose any systems-thinking methodology into locally optimisable parts and wonder why the system doesn’t improve.

The Solo Developer Exception

The Solo Developer practises Systems Thinking by default, because the solo developer is the system.

There are no departmental boundaries. There is no hand-off between teams. There is no queue between development and operations. The developer who writes the code also tests it, deploys it, monitors it, and fixes it at 2 AM. The feedback loop is immediate. The constraint is visible. The system’s behaviour is the developer’s behaviour.

This is not scalable. It is, however, comprehensible — and comprehensibility is the prerequisite for Systems Thinking. You cannot think about a system you cannot see. The solo developer can see the whole system because the whole system fits in one head.

One binary. One deploy. One feedback loop.

“THE CONDUCTOR WHO CAN SEE ALL THE DESKS AT ONCE

IS NOT MANAGING

HE IS GARDENING”

— The Lizard, The Watercooler — The Morning Five Identical Strangers Shared a Screen and One of Them Opened a Window to Say Hello to a Sixth

The Litmus Test

The practitioner who wishes to know whether their organisation practises Systems Thinking is advised to ask one question:

“When something goes wrong, do we ask ‘who made the mistake?’ or ‘what about the system allowed this mistake to happen?’”

If the answer is who, the organisation does not practise Systems Thinking. It practises blame. Blame is local optimisation applied to humans — find the faulty part, replace it, declare the system fixed. The system is not fixed. The system produced the failure. A different human in the same system will produce the same failure, on a different day, in a different way, with the same root cause that nobody examined because examining root causes means examining the system, and examining the system means questioning the people who designed it, and questioning the people who designed it is not on the OKR template.

If the answer is what, the organisation has a chance. Not a guarantee — Systems Thinking is hard, uncomfortable, politically dangerous, and requires the patience to trace feedback loops across departments, quarters, and careers. But the organisation that asks what instead of who has named the first principle, and naming the first principle is the first step toward seeing the whole.

Deming knew this. Goldratt knew this. Ohno knew this. Forrester knew this. Meadows wrote it in twelve different ways. The Lizard has been saying it from the bootblock.

The system is the system. Fix the system.