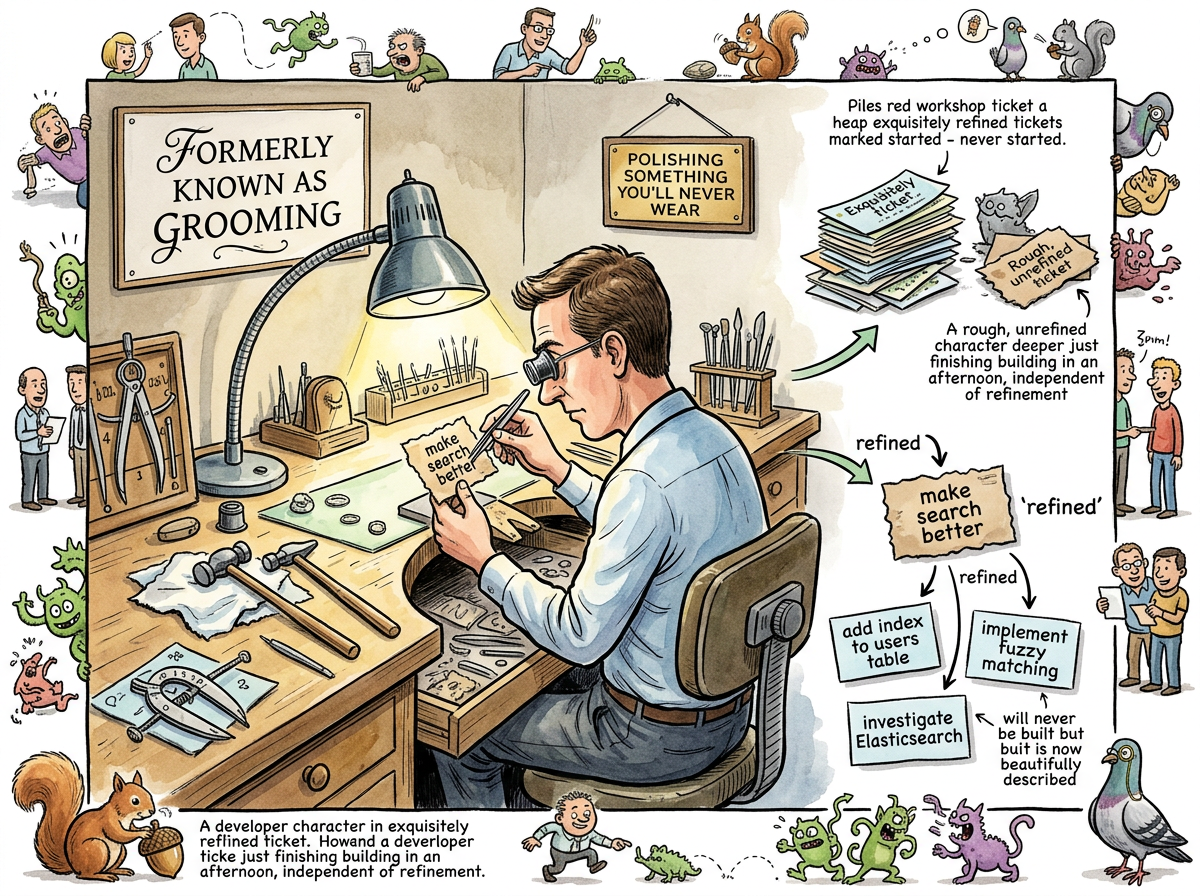

Backlog Refinement is Grooming, renamed.

The practice is identical. The meeting is identical. The outcome — a set of backlog items that have been discussed, clarified, estimated, and declared “ready” for Sprint Planning — is identical. The word changed because “grooming” acquired connotations that made the phrase “backlog grooming session” uncomfortable to say aloud in professional settings, and the Scrum community collectively decided that the path of least resistance was a rename rather than an explanation.

“Refinement” was chosen because it implies improvement through careful attention: the act of taking something rough and making it smooth. This is a generous metaphor. In practice, refinement is the act of taking something vague and writing it down in detail, which is not the same as making it understood, and is certainly not the same as getting it built.

The Refinement Paradox

The paradox of Backlog Refinement is that the items most in need of refinement are the ones least likely to be built.

Items at the top of the backlog — the ones that will actually enter the next sprint — are already well-understood, because the team has been thinking about them for weeks. They need five minutes of refinement. They get five minutes.

Items in the middle of the backlog (the ones that might be built in two or three sprints) are vague, ambiguous, and full of assumptions. They need an hour of refinement. They get an hour. Then they are deprioritized, the assumptions change, and the hour is wasted. They will be refined again next month, with different assumptions, and that hour will be wasted too.

The team spends the most refinement time on the items least likely to survive to Sprint Planning. The items that actually get built were already clear before the meeting started.
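The paradox can be made concrete with a little arithmetic. The sketch below is illustrative only: the 5-minute and 60-minute figures come from the text above, while the survival probabilities (0.9 and 0.25) and the names `BacklogItem` and `expected_wasted_minutes` are assumptions invented for the example.

```python
from dataclasses import dataclass


@dataclass
class BacklogItem:
    name: str
    refinement_minutes: int      # 5 and 60 are the figures used in the text
    survival_probability: float  # ASSUMPTION: chance the item reaches Sprint Planning unchanged


def expected_wasted_minutes(item: BacklogItem) -> float:
    """Refinement time multiplied by the chance that the refinement is thrown away."""
    return item.refinement_minutes * (1 - item.survival_probability)


# Hypothetical items; the probabilities are made up for illustration.
top = BacklogItem("top-of-backlog item", refinement_minutes=5, survival_probability=0.9)
middle = BacklogItem("mid-backlog item", refinement_minutes=60, survival_probability=0.25)

for item in (top, middle):
    print(f"{item.name}: ~{expected_wasted_minutes(item):.1f} minutes expected to be wasted")
```

Under these assumed probabilities, the mid-backlog item wastes about 45 of its 60 minutes in expectation, against roughly half a minute for the top item.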

The Nine-Minute Alternative

When AI agents were dispatched into a codebase to assess backlog items, the refinement happened in nine minutes. Five agents. Five gap analyses. Each one returning not with opinions about the work, but with facts — what was already built, what remained, what had been superseded.

“You’re describing automated backlog grooming.”

“And the agents are better at it.”

— riclib and the Caffeinated Squirrel, The Idle Factory — The Morning the Backlog Ran Out of Ideas …

The agents did not debate whether a ticket was a 5 or an 8. The agents read the code. The code did not have opinions about its own complexity. The code was either done or not done, and the agents could tell the difference faster than a room full of humans holding Fibonacci cards.
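What such a gap analysis might look like as data can be sketched roughly as follows. This is not the tooling used in the quoted story: `GapAnalysis`, `assess`, the ticket names, and the requirement strings are all hypothetical, and a set lookup stands in for an agent actually reading the code. Only the three report categories (already built, remaining, superseded) and the count of five agents come from the text.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field


@dataclass
class GapAnalysis:
    """One agent's report: facts about a ticket, not an estimate."""
    ticket: str
    already_built: list[str] = field(default_factory=list)
    remaining: list[str] = field(default_factory=list)
    superseded: list[str] = field(default_factory=list)

    @property
    def is_done(self) -> bool:
        # Done means nothing is left to build.
        return not self.remaining


def assess(ticket: str, requirements: list[str],
           implemented: set[str], obsolete: set[str]) -> GapAnalysis:
    """Hypothetical stand-in for an agent: classify each requirement."""
    return GapAnalysis(
        ticket=ticket,
        already_built=[r for r in requirements if r in implemented],
        superseded=[r for r in requirements if r in obsolete and r not in implemented],
        remaining=[r for r in requirements if r not in implemented and r not in obsolete],
    )


# Made-up backlog and codebase facts, for illustration only.
backlog = {
    "TICKET-1": ["export csv", "export pdf"],
    "TICKET-2": ["legacy login page"],
}
implemented = {"export csv"}
obsolete = {"legacy login page"}

# Up to five assessments run concurrently, mirroring the five agents in the story.
with ThreadPoolExecutor(max_workers=5) as pool:
    reports = list(pool.map(
        lambda entry: assess(entry[0], entry[1], implemented, obsolete),
        backlog.items(),
    ))

for report in reports:
    print(report.ticket, "done" if report.is_done else f"remaining: {report.remaining}")
```

The report deliberately has no field for story points: it records only what exists, what is missing, and what no longer matters.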

This is not an argument against refinement. It is an argument that refinement’s core activity — understanding whether work is ready — is research, not ceremony. And research scales better when performed by things that can read code at machine speed than by humans who must first agree on what the word “ready” means.