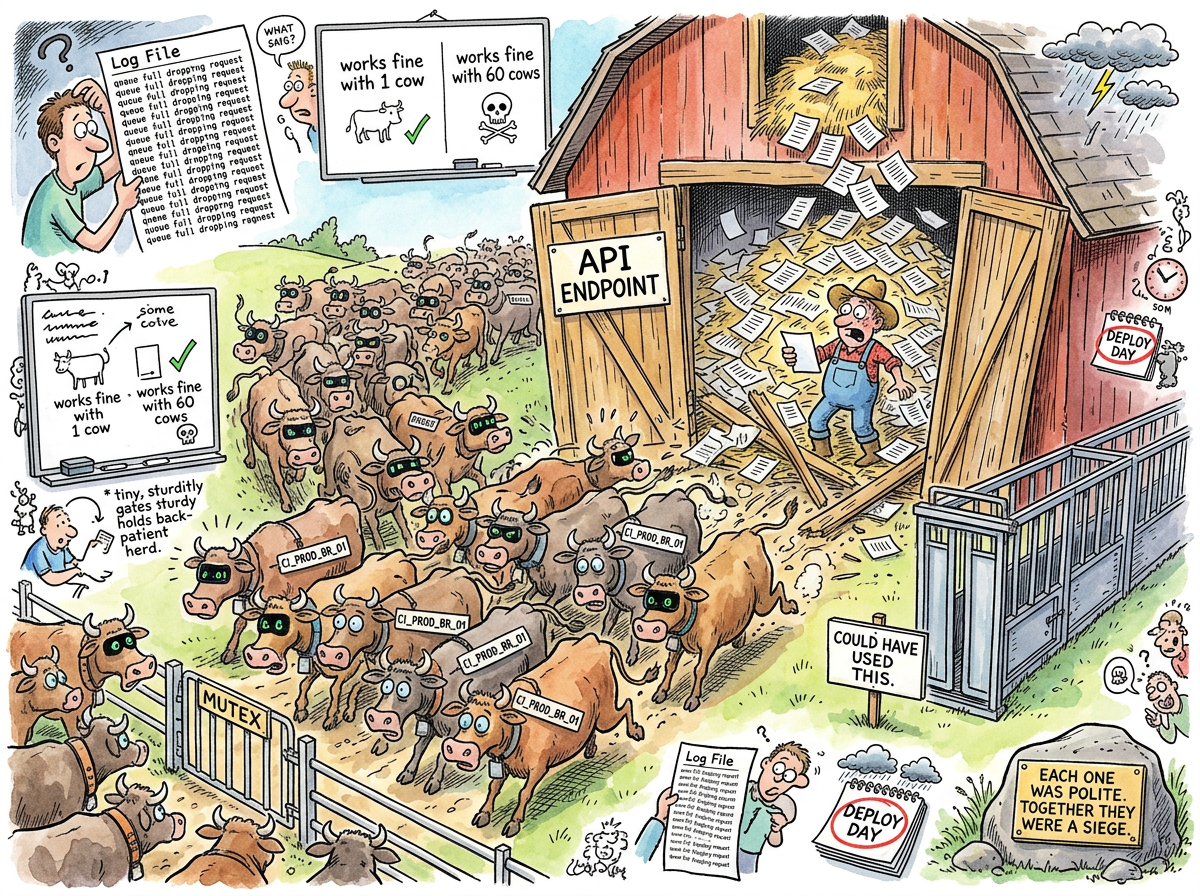

Thundering Herd is a concurrency phenomenon in which a large number of processes or requests, each individually reasonable, each individually polite, each individually well-behaved, arrive at the same resource at the same time and collectively destroy it through the sheer violence of their good intentions.

The term originates from Unix kernel development, where processes waiting for a resource would sleep, and when the resource became available, all of them would wake up simultaneously, stampede toward the resource, and exactly one would acquire it while the rest would look around, realize there was nothing left, and go back to sleep. This was inefficient. This was wasteful. This was, in the words of the kernel developers who first observed it, “not ideal.”

“The thundering herd is what happens when you design for the individual and deploy for the population. Each request is correct. The system is correct. The architecture is correct. Correctness, it turns out, does not compose.”

— The Lizard, watching sixty servers politely demolish an Alertmanager

The Anatomy of a Stampede

A thundering herd requires three ingredients:

A trigger event. Something causes many clients to act simultaneously. A cache expires. A deploy rolls out. A leader election completes. A monitoring system decides that now, right now, all sixty servers need to update their silence state.

A shared resource. All clients converge on the same endpoint, the same database, the same API. The resource was provisioned for normal traffic, which is to say: not this.

Individual innocence. Each client, examined in isolation, is doing the right thing. It received an event. It made a request. It waited for a response. It is blameless. They are all blameless. The corpse of the Alertmanager is surrounded by sixty blameless clients.

The Solidmon Incident

riclib encountered the thundering herd in the wild when sixty servers called the Alertmanager silence API simultaneously during a deploy.

The setup was reasonable. When monitoring was toggled for an application, solidmon would update the Prometheus config:alerting metric (which gates whether alerts fire) and also create or delete Alertmanager silences (belt and suspenders — two layers of suppression). The silence calls went to Alertmanager’s v2 API. Each call was correct. Each call worked in isolation. Each call had been tested individually and found to be perfectly well-behaved.

Then sixty servers deployed at once, and each server, for each of its monitored CIs, made two HTTP calls to Alertmanager — one to check if a silence existed, one to create or delete it. Sixty servers. Multiple CIs each. Two calls per CI. Alertmanager received several hundred requests in the span of two seconds, returned errors for most of them, and the logs filled with warnings.

The first fix was a queue — a buffered channel that serialized the silence calls through a single worker goroutine. This worked. Then the channel overflowed, because a hundred slots is not enough when a deploy touches every CI in the system and each CI enqueues a request. The channel was replaced with a deduplicating map — same CI, same desired state, process it once. This also worked.
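The step from an overflowing channel to a deduplicating map can be sketched in Go. This is a minimal illustration under stated assumptions, not solidmon's actual code: the `Dedup` type, its methods, and the CI names are all hypothetical. The idea it shows is that a map keyed by CI is bounded by the number of distinct CIs rather than the number of requests, so it cannot overflow the way a hundred-slot channel can.

```go
package main

import (
	"fmt"
	"sync"
)

// Dedup collapses pending requests by key. The latest desired state per CI
// wins, and a single worker drains the map. All names are hypothetical.
type Dedup struct {
	mu      sync.Mutex
	pending map[string]bool // CI name -> desired silence state
	wake    chan struct{}   // capacity 1: at most one wake-up signal is queued
}

func NewDedup() *Dedup {
	return &Dedup{pending: make(map[string]bool), wake: make(chan struct{}, 1)}
}

// Enqueue records the desired state for a CI and never blocks.
// Repeated requests for the same CI overwrite each other.
func (d *Dedup) Enqueue(ci string, silenced bool) {
	d.mu.Lock()
	d.pending[ci] = silenced
	d.mu.Unlock()
	select {
	case d.wake <- struct{}{}:
	default: // a wake-up is already pending, nothing more to do
	}
}

// Drain hands everything pending to the worker as one batch.
func (d *Dedup) Drain() map[string]bool {
	d.mu.Lock()
	defer d.mu.Unlock()
	batch := d.pending
	d.pending = make(map[string]bool)
	return batch
}

func main() {
	d := NewDedup()
	var wg sync.WaitGroup
	for i := 0; i < 60; i++ { // sixty concurrent callers
		wg.Add(1)
		go func() {
			defer wg.Done()
			for _, ci := range []string{"ci-a", "ci-b", "ci-c"} {
				d.Enqueue(ci, true)
			}
		}()
	}
	wg.Wait()
	<-d.wake // the single worker would block here until there is work
	batch := d.Drain()
	fmt.Println("enqueued:", 60*3, "to process:", len(batch))
	// prints "enqueued: 180 to process: 3"
}
```

In a real service the worker would loop on `wake` and issue one downstream call per entry in each batch. That loop is omitted here to keep the sketch short.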

Then riclib asked the question that should have been asked first: why are we calling Alertmanager at all?

The config:alerting metric already prevented alerts from firing. Every alert rule in the system was gated by (config:alerting == 1) * on (ci) group_right(). If the metric was 0 or -1, the alert could not fire. The silences were suppressing alerts that could not exist. The belt was holding up trousers that were already nailed to the wall.

The fix was not a better queue. The fix was not a deduplicating map. The fix was deleting the silence calls entirely — 127 lines removed, zero added, problem solved, Alertmanager left in peace, logs quiet, deploys fast.

“The best solution to a thundering herd is often to ask why the herd exists. Sometimes the answer is: it shouldn’t.”

— riclib, after the third fix in one afternoon

The TOCTOU Variant

The thundering herd has a particularly vicious subspecies: the check-then-act stampede, also known as the Time-of-Check-to-Time-of-Use race under load.

The pattern is familiar:

    if not exists(thing):
        create(thing)

One client runs this and it works perfectly. Sixty clients run this simultaneously: all sixty check, all sixty find that the thing does not exist, all sixty create the thing, and now there are sixty things where there should be one. The check was correct at the time of checking. The act was correct at the time of acting. The time between checking and acting was long enough for fifty-nine other clients to also check.

This is not a bug in the code. This is a bug in the assumption that “works correctly” and “works correctly at scale” are the same sentence.
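One way to close the gap between check and act is to make them a single indivisible step. The following is a minimal Go sketch, assuming the shared state lives in one process and can be guarded by a mutex. The `Store` type and `EnsureExists` method are hypothetical names introduced for illustration.

```go
package main

import (
	"fmt"
	"sync"
)

// Store is a hypothetical in-memory stand-in for the shared resource.
type Store struct {
	mu      sync.Mutex
	things  map[string]bool
	creates int // how many times the create step actually ran
}

func NewStore() *Store { return &Store{things: make(map[string]bool)} }

// EnsureExists performs the check and the act under one lock, so no other
// caller can slip in between them. It reports whether this call did the create.
func (s *Store) EnsureExists(name string) bool {
	s.mu.Lock()
	defer s.mu.Unlock()
	if s.things[name] {
		return false // someone else already created it
	}
	s.things[name] = true
	s.creates++
	return true
}

func main() {
	s := NewStore()
	var wg sync.WaitGroup
	for i := 0; i < 60; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			s.EnsureExists("thing")
		}()
	}
	wg.Wait()
	fmt.Println("creates:", s.creates) // prints "creates: 1"
}
```

A client-side lock like this only helps when every caller shares the same process. When the callers are separate servers talking to a remote API, the atomicity has to come from the resource itself, for example a uniqueness constraint or an idempotent create operation, if the resource offers one.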

Common Habitats

The thundering herd has been observed in the following ecosystems:

Cache expiration. The cache entry expires. Every request that arrives in the next millisecond finds the cache empty, queries the database, and the database experiences what database administrators describe as “an event.”

Service restarts. The service goes down. Health checks fail. Every client begins retrying simultaneously, with the same backoff interval, because someone set the retry delay to a constant instead of adding jitter.

Webhook delivery. A state change occurs. The system notifies all subscribers. All subscribers process the notification and call back to the same API to fetch the updated state.

Monitoring deploys. Sixty servers reload their configuration, discover that alerting states have changed, and politely, individually, simultaneously, hammer the Alertmanager into submission.
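The constant retry delay in the service-restart case is the easiest of these to illustrate. Below is a minimal Go sketch of exponential backoff with a randomized delay, where each client waits a random duration below a ceiling that doubles per attempt. The function name `BackoffWithJitter` and the base and limit values are assumptions chosen for the example, not taken from any system described here.

```go
package main

import (
	"fmt"
	"math/rand"
	"time"
)

// BackoffWithJitter returns a random delay in [0, ceiling), where the ceiling
// starts at base, doubles with each attempt, and is capped at limit.
// It returns 0 if base or limit is not positive.
func BackoffWithJitter(attempt int, base, limit time.Duration) time.Duration {
	ceiling := base
	for i := 0; i < attempt && ceiling < limit; i++ {
		ceiling *= 2
	}
	if ceiling > limit {
		ceiling = limit
	}
	if ceiling <= 0 {
		return 0 // guards rand.Int63n, which panics on non-positive input
	}
	return time.Duration(rand.Int63n(int64(ceiling)))
}

func main() {
	// Each client computes its own delay, so retries spread across the
	// window instead of landing on the same instant.
	for attempt := 0; attempt < 5; attempt++ {
		d := BackoffWithJitter(attempt, 100*time.Millisecond, 2*time.Second)
		fmt.Printf("attempt %d: wait %v\n", attempt, d)
	}
}
```

The randomness only helps if the clients do not share a seed. Since Go 1.20 the global `math/rand` source is seeded randomly at startup. On older versions it must be seeded explicitly, or every process draws the same sequence and the herd stays in step.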

The Cures

The Queue. Route all requests through a single worker. The herd arrives, forms an orderly line, and is processed one at a time. Effective but slow if the queue is the bottleneck.

The Deduplicating Queue. Like the queue, but identical requests are collapsed. Sixty identical silence requests become one. This is what solidmon used, briefly, before the real fix was discovered.

Jitter. Add a random delay before each request. Instead of sixty simultaneous calls, the requests spread across a window. The herd becomes a trickle. This is the standard fix for cache stampedes and retry storms.

Singleflight. Concurrent callers asking for the same thing get deduplicated: one caller does the work, the rest wait for the result. Go’s golang.org/x/sync/singleflight package exists specifically for this.

Deletion. Sometimes the correct fix is to remove the call entirely, because the resource being stampeded was not needed in the first place. This is the thundering herd equivalent of YAGNI — You Aren’t Gonna Need That API Call.
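Of the cures above, singleflight is the least obvious from a one-line description, so here is a stripped-down sketch of the idea using only the standard library. It is not the `golang.org/x/sync/singleflight` package and does not reproduce its API. The `Group` type below is a simplified stand-in that returns strings and omits panic handling, and the `herd` helper is hypothetical.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
	"time"
)

// call tracks one in-flight execution and the callers waiting on its result.
type call struct {
	wg  sync.WaitGroup
	val string
	err error
}

// Group deduplicates concurrent calls that share a key.
type Group struct {
	mu    sync.Mutex
	calls map[string]*call
}

// Do runs fn once per key at a time. Callers that arrive while a call for
// the same key is in flight wait for it and receive the same result.
func (g *Group) Do(key string, fn func() (string, error)) (string, error) {
	g.mu.Lock()
	if g.calls == nil {
		g.calls = make(map[string]*call)
	}
	if c, ok := g.calls[key]; ok {
		g.mu.Unlock()
		c.wg.Wait() // someone else is already doing the work
		return c.val, c.err
	}
	c := new(call)
	c.wg.Add(1)
	g.calls[key] = c
	g.mu.Unlock()

	c.val, c.err = fn() // only the first caller for this key gets here
	c.wg.Done()

	g.mu.Lock()
	delete(g.calls, key) // results are not cached once the call completes
	g.mu.Unlock()
	return c.val, c.err
}

// herd launches n concurrent callers for one key and reports how many
// times the expensive function actually ran.
func herd(n int) int64 {
	var g Group
	var executions int64
	var wg sync.WaitGroup
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			g.Do("config", func() (string, error) {
				atomic.AddInt64(&executions, 1)
				time.Sleep(50 * time.Millisecond) // simulate a slow backend
				return "fresh", nil
			})
		}()
	}
	wg.Wait()
	return atomic.LoadInt64(&executions)
}

func main() {
	fmt.Println("callers: 60, executions:", herd(60))
}
```

With sixty callers arriving together, the execution count is typically 1, though a caller that arrives after the first call has finished will trigger a fresh execution. Singleflight collapses concurrent work and does not cache results.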

The Meta-Stampede

In a twist that The Lizard would describe as “structurally inevitable,” this very article caused a thundering herd while being published.

The lifelog uses NATS JetStream to sync cover images from a local leaf node to a cloud hub. Cover images are published to lg.local.covers.synced as raw JPEG bytes with the filename in a header. On the hub, a CoverSyncConsumer was supposed to pick them up and write them to disk. It never worked. Covers were uploaded via scp instead. Nobody investigated.

When riclib wrote this article and generated its cover, the system dutifully published 2.3 megabytes of JPEG data to the stream. Two problems revealed themselves simultaneously:

Problem one: The CoverSyncConsumer had been written with a core NATS Subscribe call instead of a JetStream durable consumer. It could only see messages arriving while it was running. Since the cover was published by a CLI command that connected, published, and disconnected in under a second, the consumer never saw it. The message sat in the JetStream stream, acknowledged by the stream, ignored by the subscriber.

Problem two: The RemoteIndexConsumer — a JetStream consumer filtering on lg.local.> — did see the message. It attempted to JSON-unmarshal two megabytes of JPEG data, failed with invalid character 'ÿ' looking for beginning of value (the first byte of every JPEG file), Nak’d the message, and received it again. And again. And again. The server logs filled with thousands of identical error lines. The consumer that shouldn’t have received the message was the only one that did, and it processed it with the dedication of a dog chasing a car it could never catch.

The fix required three changes: convert the cover consumer to a proper JetStream durable pull consumer, teach the index consumer to skip cover subjects, and have CLI commands connect to the already-running embedded NATS server instead of booting their own. The article about the thundering herd had produced a thundering herd of its own error messages, and the postmortem for the postmortem was committed in the same branch.
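The second of those changes, teaching the index consumer to skip cover subjects, can be sketched as a small decision function. This is a hedged illustration rather than the lifelog's actual code: the `Decide` function, the `Action` values, the `lg.local.covers.` prefix, and the `lg.local.notes` subject are all assumptions. Terminating on invalid JSON instead of requesting redelivery is also an addition of this sketch, on the reasoning that a payload that failed to parse once will fail to parse every time.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// Action is what the consumer should do with a message. Mapping these onto
// a messaging client's acknowledge and terminate calls is left out here.
type Action int

const (
	Ack  Action = iota // valid JSON: process and acknowledge
	Skip               // not meant for this consumer: acknowledge, do not process
	Term               // permanently unparseable: do not ask for redelivery
)

// coverPrefix is an assumed prefix derived from the subject named in the article.
const coverPrefix = "lg.local.covers."

// Decide classifies a message before any attempt to unmarshal it.
func Decide(subject string, payload []byte) Action {
	if strings.HasPrefix(subject, coverPrefix) {
		return Skip
	}
	if !json.Valid(payload) {
		return Term
	}
	return Ack
}

func main() {
	jpeg := []byte{0xFF, 0xD8, 0xFF, 0xE0} // JPEG files begin with 0xFF 0xD8
	fmt.Println(Decide("lg.local.covers.synced", jpeg) == Skip)      // true
	fmt.Println(Decide("lg.local.notes", jpeg) == Term)              // true
	fmt.Println(Decide("lg.local.notes", []byte(`{"id":1}`)) == Ack) // true
}
```

Either branch alone would have broken the redelivery loop. Together they separate "this is not mine" from "this will never parse", which are different failures and deserve different log lines.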

“If you write about a phenomenon and don’t encounter it during the writing, the article isn’t finished yet.”

— The Lizard, reviewing the git log

Measured Characteristics

Requests that work in isolation: all of them

Requests that work simultaneously: approximately one

Ratio of fix complexity to root cause complexity: often infinite

Time spent building queue infrastructure: an afternoon

Time spent realizing the queue was unnecessary: one question

Lines of queue code deleted: 127

Lines added to replace them: 0

Alertmanager's opinion of the improvement: not consulted, visibly relieved

Articles about thundering herds that caused one: 1

Bytes of JPEG repeatedly unmarshalled as JSON: 2,379,655

First byte of every JPEG file: 0xFF (invalid JSON since 1991)

Error log lines generated before deploy: ≈ 172,179

See Also

- YAGNI — The principle that would have prevented the silence calls from being written. The best herd is the one that does not exist.

- Premature Abstraction — Building a deduplicating queue to solve a problem that should have been solved by deleting code.

- Dogpile Effect — The thundering herd’s identical twin, wearing a cache-shaped hat. Everyone discovers the cache is empty at the same moment and rushes to rebuild it.

- Retry Storm — What happens when the thundering herd fails and every member politely tries again, with the same backoff, at the same time.

- Priority Inversion — A low-priority task holds a lock that a high-priority task needs. Made famous by the Mars Pathfinder, which experienced it well over a hundred million miles from the nearest mutex expert.

- Split Brain — A network partition convinces two nodes that each is the leader. Both are right. Both are wrong. The data is the casualty.

- Heisenbug — A bug that disappears when you try to observe it. Add logging? Gone. Attach a debugger? Gone. Remove the logging? Back, with friends.

- Cascading Failure — One component fails, its clients retry, the retries overload the next component, and the dominoes fall in the direction of the on-call engineer’s phone.