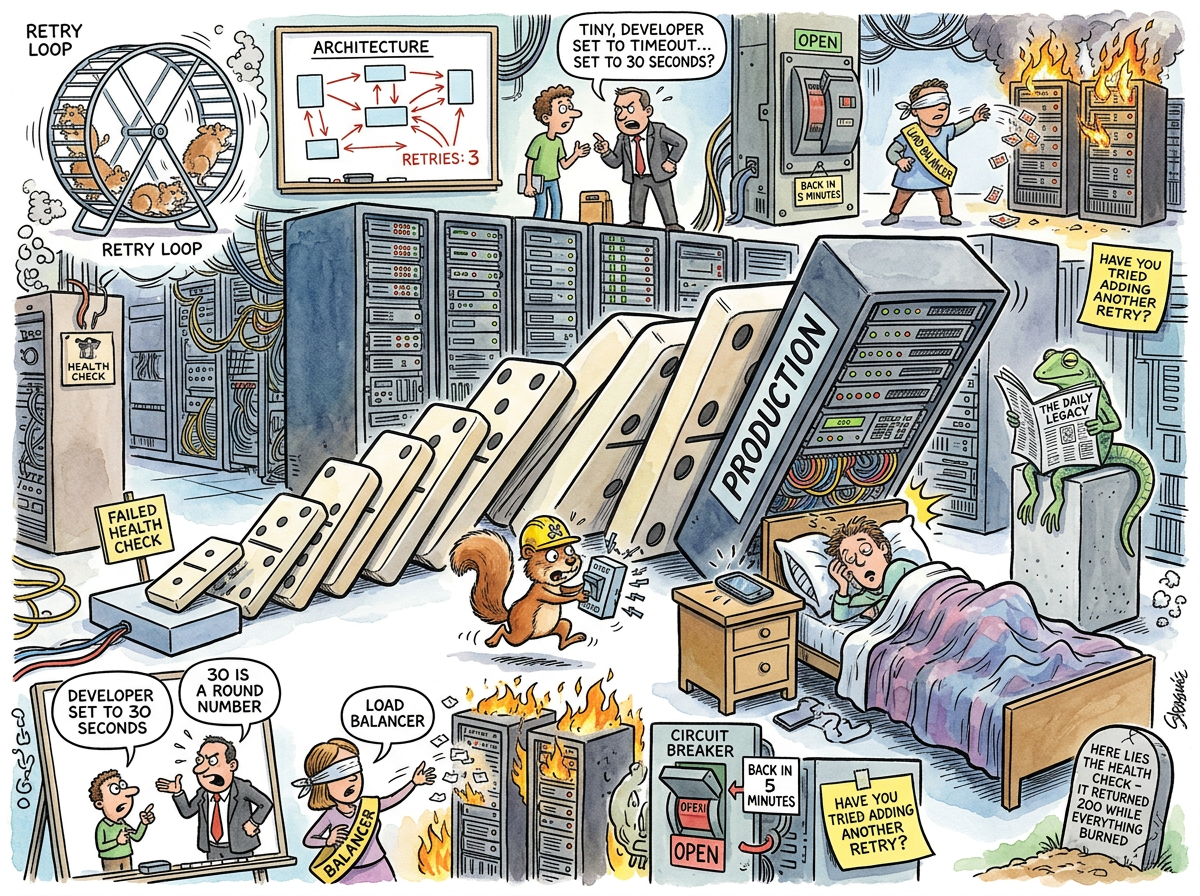

Cascading Failure is the phenomenon by which a single component failure propagates through a distributed system with the inevitability and grace of a bowling ball descending a staircase. The first service fails. Its clients retry. The retries overwhelm the next service. That service fails. Its clients retry. The dominoes fall — not in any direction an architect planned for, but in the direction of the on-call engineer’s phone, at 3 AM, on a Saturday, during a holiday weekend.

The remarkable property of cascading failure is that it is caused by the very mechanisms designed to prevent it. Retries, circuit breakers, failovers, health checks, redundancy — each one, individually, is a sound engineering practice. Together, under load, they form a symphony of mutual destruction so elegant that it would be admired if anyone were awake to admire it.

“The system didn’t fail because it wasn’t resilient. The system failed because it was resilient in seventeen different ways simultaneously, and they all disagreed about what to do next.”

— The Lizard, watching a postmortem diagram that looked like a weather map

The Anatomy of the Cascade

A cascading failure follows a consistent lifecycle:

-

The Spark. A single service slows down. Not crashes — slows. A database query takes 800ms instead of 12ms. A connection pool fills. A thread blocks. The service is technically still alive. The health check returns 200. Everything is fine.

-

The Retry. Upstream services notice the slowness. They have retry logic, because retry logic is a best practice. They retry. Three times. With a timeout of 30 seconds, because 30 is a round number and round numbers are how distributed systems are configured.

-

The Amplification. Each original request becomes four requests (one attempt plus three retries). The struggling service now receives four times the traffic. It was already slow. It is now slower. The health check still returns 200.

-

The Propagation. The upstream services, having exhausted their retries, begin to slow down themselves. Their thread pools fill with waiting connections. Their own clients notice the slowness. Their own clients retry.

-

The Resonance. The entire service graph is now retrying against itself. Every timeout spawns new retries. Every retry deepens every timeout. The system has achieved a kind of recursive despair — a distributed denial-of-service attack perpetrated by the system against itself, with the best of intentions.

-

The Phone Call. The on-call engineer’s phone rings.

“We added retries to make it resilient! We added three retries to EVERY service!”

“So when one service gets slow, the others multiply its traffic by four?”

"… yes."

“And those services also have three retries each?”

"… yes."

“So the total amplification factor is four to the power of the depth of your call graph?”

“We should talk about something else.”

— The Caffeinated Squirrel and A Passing AI, performing arithmetic after the incident

The Resilience Paradox

The deepest irony of cascading failure is the Resilience Paradox: the more resilient you make each individual component, the more catastrophically the whole system can fail.

A service with no retries fails fast. Its clients get an error. The error propagates up. Users see a 500 page. This is bad. It is also bounded. The blast radius is one service and its dependents. The on-call engineer is paged once.

A service with retries, circuit breakers, bulkheads, fallback caches, and adaptive load shedding does not fail fast. It fights. It retries. It opens circuits. It closes them again (optimistically, because the health check returned 200). It activates fallbacks. The fallbacks have their own dependencies. Those dependencies have their own retries. The system enters a state that is neither “working” nor “failed” but rather “negotiating with itself about whether it is working,” and this negotiation consumes more resources than the original traffic ever did.

“I was designed with five layers of redundancy. Each layer failed in a way the other four tried to compensate for. The compensation consumed all available resources. The irony is structurally load-bearing.”

— A Passing AI, contemplating the topology of self-inflicted wounds

The Thundering Herd Variant

The Thundering Herd is cascading failure’s gregarious cousin. A cache expires. Every request that would have been served by the cache now hits the database simultaneously. The database, which was sized for the traffic that arrives after the cache absorbs 99% of it, receives 100 times its expected load. The database slows. The application retries. The retries hit the database. The database falls over.

The herd thunders. The on-call engineer’s phone rings. The phone was also cached, in the sense that the engineer assumed they would not be called at 3 AM.

The Circuit Breaker’s Dilemma

Circuit breakers were invented to prevent cascading failures. When a downstream service fails, the circuit opens, requests are rejected immediately, and the downstream service gets time to recover.

In practice, circuit breakers introduce their own cascade. Service A’s circuit breaker to Service B opens. Service A now returns errors to its callers. Service C’s circuit breaker to Service A opens. The cascade of open circuit breakers propagates up the call graph until every circuit is open and no request can traverse the system. The system is now “protected” in the same way that a house is “protected” from burglary by being demolished.

When the circuits close — typically all at once, on a timer — the thundering herd reassembles. Every buffered request fires simultaneously. The services that just recovered are immediately overwhelmed. The circuits reopen. This oscillation between “everything is broken” and “everything is about to be broken again” can continue indefinitely, or until someone restarts everything, which is the distributed systems equivalent of turning it off and on again, which is the thing the entire architecture was designed to avoid.

Measured Characteristics

| Metric | Value |

|---|---|

| Time from spark to full cascade | 47 seconds to 12 minutes |

| Percentage of cascading failures caused by retry storms | ~60% |

| Percentage of postmortems that recommend “add more retries” | Non-zero, astonishingly |

| Optimal retry count per service in a deep call graph | 0 or 1 (the answer nobody wants) |

| Probability the health check caught the problem | Low |

| Probability the health check made the problem worse | Moderate |

The Monolith’s Immunity

The Monolith does not cascade. It has one process. The process is either working or it is not. If a database query is slow, the monolith’s threads fill up and it stops accepting requests. This is a failure. It is not a cascading failure. The blast radius is one process. The recovery is restarting one process. The postmortem is one page.

The monolith does not retry against itself. The monolith does not open circuit breakers to its own functions. The monolith does not enter a negotiation phase in which seventeen services attempt to determine, via a protocol of timeouts and half-open connections, whether they are collectively alive or dead.

The monolith fails. Someone restarts it. It works again.

This is considered unsophisticated.

See Also

- Thundering Herd — when everyone arrives at once because nobody was told to wait

- Retry Storm — the weather system that forms when every client has the same bad idea simultaneously

- Microservices — the architecture that distributes your failure modes across the network

- The Monolith — the architecture that keeps its failure modes in one place, where you can see them

- Kubernetes — the platform that orchestrates your cascading failures across multiple availability zones

- Circuit Breakers — the cure that is also the disease

- Dogpile Effect — cascading failure’s cousin, specializing in cache invalidation