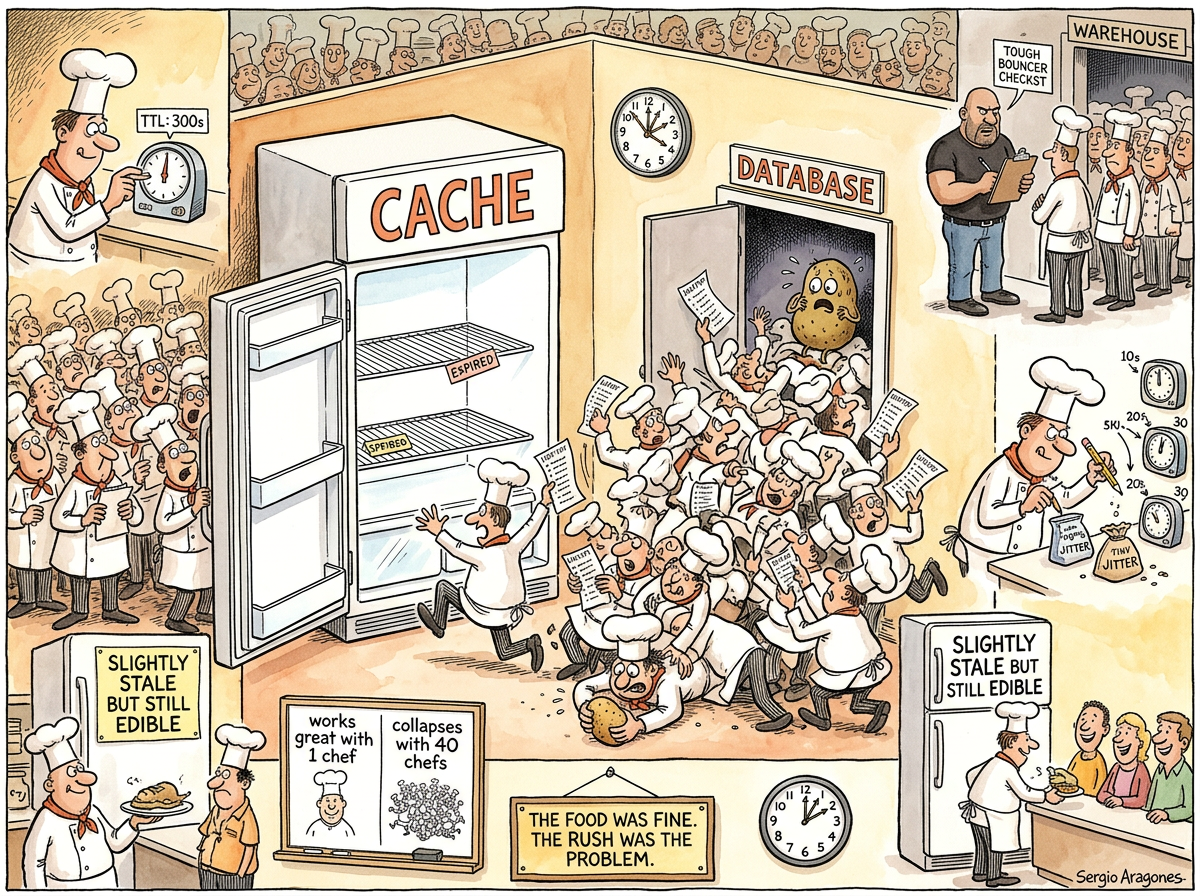

The dogpile effect is a caching phenomenon in which a cached entry expires, every request that arrives before it is rebuilt discovers the cache is empty, each of those requests independently decides to rebuild the entry from the backend, and the backend experiences what operations engineers politely describe as “an unplanned capacity test.”

The name derives from the playground maneuver in which one child falls down and every other child in the vicinity immediately piles on top of them. The metaphor is precise. The child at the bottom is the database. The children on top are your requests. Nobody planned this. Everybody participated.

“The dogpile effect is the Thundering Herd’s identical twin, wearing a cache-shaped hat. One is triggered by an event. The other is triggered by a clock. The database cannot tell the difference.”

— The Lizard, watching a PostgreSQL instance discover what ‘popular endpoint’ means

The Mechanism

The dogpile effect requires exactly three conditions, all of which exist in every caching system ever deployed:

A cached value with a finite TTL. Someone, at some point, set an expiration time on a cache entry. Perhaps 300 seconds. Perhaps 60. Perhaps 5. The number does not matter. What matters is that the number is not infinity, and therefore the cache entry will, at some deterministic moment, cease to exist.

Concurrent traffic. Multiple requests arrive at approximately the same time. For a popular endpoint, “approximately the same time” means “within the same millisecond,” which is to say: simultaneously, as far as the cache is concerned.

No coordination. Each request checks the cache independently, finds it empty independently, queries the backend independently, and writes the result back to the cache independently. If forty requests land in the gap, that is forty identical processes executing forty identical queries and producing forty identical results, of which thirty-nine are redundant. The database processes all forty because nobody told it that the first one was sufficient.

The result is a spike of identical backend queries at the exact moment the cache expires. The spike lasts until one request completes and repopulates the cache. If the backend query takes 200 milliseconds, then for 200 milliseconds, every arriving request joins the pile.
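The uncoordinated pattern above can be reproduced in a few lines. This is a minimal Go sketch under stated assumptions: `naiveCache` and `simulateDogpile` are hypothetical names standing in for any cache-aside wrapper, and `time.Sleep` stands in for the slow backend query. Forty goroutines are released together against an entry whose TTL has just run out; each one that checks before the first rebuild finishes runs its own query.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
	"time"
)

// naiveCache is a hypothetical single-entry cache-aside wrapper with no
// coordination: every caller that finds it empty queries the backend itself.
type naiveCache struct {
	mu    sync.Mutex
	value string
	ok    bool
}

func (c *naiveCache) get() (string, bool) {
	c.mu.Lock()
	defer c.mu.Unlock()
	return c.value, c.ok
}

func (c *naiveCache) set(v string) {
	c.mu.Lock()
	defer c.mu.Unlock()
	c.value, c.ok = v, true
}

// simulateDogpile sends n concurrent requests at a cache whose entry has just
// expired and returns how many of them queried the backend.
func simulateDogpile(n int, queryTime time.Duration) int64 {
	var backendCalls int64
	backend := func() string {
		atomic.AddInt64(&backendCalls, 1)
		time.Sleep(queryTime) // stand-in for the slow backend query
		return "result"
	}

	cache := &naiveCache{} // empty: the TTL has just run out
	start := make(chan struct{})
	var wg sync.WaitGroup
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			<-start // release every request at roughly the same instant
			if _, ok := cache.get(); ok {
				return // hit: no backend work
			}
			cache.set(backend()) // miss: rebuild independently, no coordination
		}()
	}
	close(start)
	wg.Wait()
	return atomic.LoadInt64(&backendCalls)
}

func main() {
	calls := simulateDogpile(40, 200*time.Millisecond)
	fmt.Printf("40 requests, %d backend queries\n", calls)
}
```

On a typical machine all forty requests check the cache well inside the 200 ms window, so the backend count comes out at or near forty: one useful query and the rest redundant.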

The Paradox of Popularity

The dogpile effect has a structural irony that The Passing AI once described as “architecturally poetic”: it punishes success.

An unpopular endpoint receives one request per second. When its cache expires, one request hits the backend. This is fine. This is normal. This is what the backend was designed for.

A popular endpoint receives ten thousand requests per second. When its cache expires, ten thousand requests hit the backend in the same breath. The endpoint’s popularity — the very thing that justified caching it in the first place — is the thing that destroys the backend when the cache fails.

The more you need the cache, the worse it hurts when the cache is gone.
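A back-of-the-envelope way to size the pile, assuming requests arrive at a steady rate λ and the rebuild takes t_rebuild (the 200 ms query from The Mechanism): every request that arrives before the first rebuild finishes misses the cache.

$$
\begin{aligned}
N_{\text{pile}} &\approx \lambda \cdot t_{\text{rebuild}} \\
\text{unpopular: } N_{\text{pile}} &\approx 1\ \text{req/s} \times 0.2\ \text{s} = 0.2 \quad (\text{in practice, just the one request that found the miss}) \\
\text{popular: } N_{\text{pile}} &\approx 10{,}000\ \text{req/s} \times 0.2\ \text{s} = 2{,}000\ \text{backend queries}
\end{aligned}
$$

The pile grows linearly with both traffic and query latency, so doubling either one doubles the damage.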

“I find it… structurally melancholic. The system punishes its most valued resources. The most-requested data causes the most damage. It is as though popularity itself were a vulnerability.”

— The Passing AI, staring into the middle distance of a Grafana dashboard

The Five Cures (and Their Side Effects)

1. The Lock (Stampede Protection). When the cache is empty, the first request acquires a lock, queries the backend, and repopulates the cache. All other requests wait for the lock to release, then read from the freshly populated cache. This works. It also means that ten thousand requests are now waiting on a single lock, which is a different problem with the same shape.

2. The Stale Serve. Return the expired cache entry while one background process rebuilds it. The data is slightly stale. The users do not notice. The database does not suffer. This is the engineering equivalent of serving yesterday’s bread while baking a fresh loaf — nobody complains because nobody knows.

3. The Jitter. Instead of setting every cache entry to expire in exactly 300 seconds, set them to expire in 300 seconds plus a random offset. The expirations spread across a window. The pile never forms because the children never fall down at the same time. This is the simplest cure and therefore the least frequently implemented.

4. The Pre-computation. Refresh the cache before it expires. A background process checks TTLs and rebuilds entries that are about to expire, so the cache is never empty when traffic arrives. This works perfectly until the background process falls behind, at which point you have the dogpile effect plus a background process that is also querying the backend simultaneously.

5. Singleflight. Deduplicate concurrent requests for the same key. The first caller does the work; every other caller that asks for the same key while that work is in flight receives the same result. Go’s golang.org/x/sync/singleflight was built for exactly this. It is elegant, efficient, and the pattern has shipped in Go since 2013, which means most systems experiencing the dogpile effect in 2026 have had thirteen years to adopt it and have not.
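Cure 2, the stale serve, as a minimal Go sketch. It assumes a single key, an in-process cache, and no error handling; `staleCache`, `newStaleCache`, and `demoStaleServe` are hypothetical names. The `refreshing` flag is what keeps the stale serve from becoming its own dogpile: only the first reader to notice the expiry starts a rebuild, and every reader, including that one, returns the old value immediately.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
	"time"
)

// staleCache is a hypothetical single-key cache that keeps serving an expired
// value while exactly one background goroutine rebuilds it.
type staleCache struct {
	mu         sync.Mutex
	value      string
	expiresAt  time.Time
	refreshing bool
	ttl        time.Duration
	load       func() string // stand-in for the backend query
}

// newStaleCache populates the cache synchronously once, so Get never has to
// return an empty value.
func newStaleCache(ttl time.Duration, load func() string) *staleCache {
	return &staleCache{value: load(), expiresAt: time.Now().Add(ttl), ttl: ttl, load: load}
}

// Get never waits on the backend. If the value has expired, the first caller
// to notice starts one background refresh; everyone gets the stale value meanwhile.
func (c *staleCache) Get() string {
	c.mu.Lock()
	defer c.mu.Unlock()
	if time.Now().After(c.expiresAt) && !c.refreshing {
		c.refreshing = true
		go c.refresh()
	}
	return c.value
}

func (c *staleCache) refresh() {
	v := c.load() // the slow query runs outside the lock
	c.mu.Lock()
	c.value, c.expiresAt, c.refreshing = v, time.Now().Add(c.ttl), false
	c.mu.Unlock()
}

// demoStaleServe lets the entry expire, fires 100 concurrent reads, and
// returns the total number of backend loads plus the value after refresh.
func demoStaleServe() (loads int64, after string) {
	var n int64
	load := func() string {
		v := atomic.AddInt64(&n, 1)
		time.Sleep(20 * time.Millisecond) // simulated query latency
		return fmt.Sprintf("v%d", v)
	}
	c := newStaleCache(200*time.Millisecond, load)
	time.Sleep(250 * time.Millisecond) // let the entry expire

	var wg sync.WaitGroup
	for i := 0; i < 100; i++ {
		wg.Add(1)
		go func() { defer wg.Done(); c.Get() }()
	}
	wg.Wait()
	time.Sleep(100 * time.Millisecond) // give the single refresh time to land
	return atomic.LoadInt64(&n), c.Get()
}

func main() {
	loads, after := demoStaleServe()
	fmt.Println("backend loads:", loads, "value after refresh:", after)
}
```

With these timings, the 100 expired reads should cost the backend the initial load plus exactly one refresh, after which readers see the new value.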
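Cure 4, pre-computation, as a minimal Go sketch. It assumes an in-process map and an injected clock so a single scan is easy to test; `refreshAheadCache`, `refreshPass`, `ahead`, and `demoRefreshAhead` are hypothetical names. Each pass rebuilds every entry due to expire within `ahead`, and `run` drives the passes from a `time.Ticker`. The comment on `refreshPass` marks where the failure mode described above lives.

```go
package main

import (
	"fmt"
	"sort"
	"sync"
	"time"
)

type entry struct {
	value     string
	expiresAt time.Time
}

// refreshAheadCache is a hypothetical cache whose background pass rebuilds
// entries that are within `ahead` of expiring, before any reader sees a miss.
type refreshAheadCache struct {
	mu      sync.Mutex
	entries map[string]entry
	ttl     time.Duration
	ahead   time.Duration
	load    func(key string) string // stand-in for the backend query
}

// refreshPass scans once and reloads every entry expiring within c.ahead of
// now, returning the refreshed keys sorted. If a pass takes longer than
// c.ahead, entries expire before it reaches them: the "falls behind" case.
func (c *refreshAheadCache) refreshPass(now time.Time) []string {
	c.mu.Lock()
	var due []string
	for k, e := range c.entries {
		if e.expiresAt.Sub(now) <= c.ahead {
			due = append(due, k)
		}
	}
	c.mu.Unlock()
	sort.Strings(due)

	for _, k := range due {
		v := c.load(k) // backend query runs outside the lock
		c.mu.Lock()
		// Simplification: the new expiry is measured from the scan time.
		c.entries[k] = entry{value: v, expiresAt: now.Add(c.ttl)}
		c.mu.Unlock()
	}
	return due
}

// run repeats refreshPass on every tick until stop is closed.
func (c *refreshAheadCache) run(interval time.Duration, stop <-chan struct{}) {
	t := time.NewTicker(interval)
	defer t.Stop()
	for {
		select {
		case now := <-t.C:
			c.refreshPass(now)
		case <-stop:
			return
		}
	}
}

// demoRefreshAhead runs one pass over two entries with different expiries.
func demoRefreshAhead() (refreshed []string, soon, later string) {
	base := time.Now()
	c := &refreshAheadCache{
		entries: map[string]entry{
			"soon":  {value: "old", expiresAt: base.Add(5 * time.Second)},
			"later": {value: "old", expiresAt: base.Add(60 * time.Second)},
		},
		ttl:   300 * time.Second,
		ahead: 10 * time.Second,
		load:  func(k string) string { return "fresh:" + k },
	}
	refreshed = c.refreshPass(base)
	return refreshed, c.entries["soon"].value, c.entries["later"].value
}

func main() {
	refreshed, soon, later := demoRefreshAhead()
	fmt.Println(refreshed, soon, later) // [soon] fresh:soon old
}
```

Only the entry expiring in 5 seconds falls inside the 10-second window, so the pass rebuilds `soon` and leaves `later` for a future tick.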
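Cures 1 and 5 share one core: a per-key lock whose waiters receive the winner's result instead of re-reading the cache. The real `golang.org/x/sync/singleflight` exposes this as `Group.Do(key, fn)`, returning `(v interface{}, err error, shared bool)`. To stay standard-library only, the sketch below hand-rolls a simplified version of the same idea; `group`, `call`, and `simulateSingleflight` are hypothetical names, not the package's code, and the sketch skips edge cases the real package handles (such as a panicking `fn`) along with extras like `DoChan` and `Forget`.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
	"time"
)

// call is one in-flight load for a key; waiters block on wg.
type call struct {
	wg  sync.WaitGroup
	val string
	err error
}

// group is a minimal sketch of the singleflight idea: concurrent Do calls for
// the same key share a single execution of fn.
type group struct {
	mu sync.Mutex
	m  map[string]*call
}

func (g *group) Do(key string, fn func() (string, error)) (string, error) {
	g.mu.Lock()
	if g.m == nil {
		g.m = make(map[string]*call)
	}
	if c, ok := g.m[key]; ok {
		g.mu.Unlock()
		c.wg.Wait() // a load for this key is already running: wait for it
		return c.val, c.err
	}
	c := &call{}
	c.wg.Add(1)
	g.m[key] = c
	g.mu.Unlock()

	c.val, c.err = fn() // only the first caller does the backend work
	c.wg.Done()

	g.mu.Lock()
	delete(g.m, key) // the next miss after this point starts a fresh load
	g.mu.Unlock()
	return c.val, c.err
}

// simulateSingleflight sends n concurrent misses for one key through the group
// and returns how many backend queries actually ran.
func simulateSingleflight(n int, queryTime time.Duration) int64 {
	var backendCalls int64
	load := func() (string, error) {
		atomic.AddInt64(&backendCalls, 1)
		time.Sleep(queryTime) // stand-in for the slow backend query
		return "result", nil
	}
	var g group
	start := make(chan struct{})
	var wg sync.WaitGroup
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			<-start // release every request at roughly the same instant
			g.Do("popular-endpoint", load)
		}()
	}
	close(start)
	wg.Wait()
	return atomic.LoadInt64(&backendCalls)
}

func main() {
	fmt.Println("backend queries:", simulateSingleflight(40, 200*time.Millisecond))
}
```

Released as a burst of forty simultaneous misses, the group typically runs a single backend query; the other thirty-nine callers wait and share its result.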

The Squirrel’s Contribution

The Caffeinated Squirrel, upon learning of the dogpile effect, proposed a solution involving a distributed lock manager, a consensus protocol, a secondary cache layer backed by Redis, a tertiary cache layer backed by a different Redis, a cache-warming microservice, and a PredictiveTTLOrchestrationEngine that would use machine learning to anticipate which cache entries were about to expire.

The Lizard, who had been sunning itself on a warm server rack, opened one eye and said: “Jitter.”

The Squirrel vibrated for several seconds, then quietly added + rand.Intn(60) to the TTL and the problem was solved.
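The Squirrel's one line, spelled out as a minimal Go sketch. `jitteredTTL` is a hypothetical helper name; it draws the offset with `math/rand`'s `Int63n` at nanosecond granularity rather than whole seconds. Since Go 1.20 the global `math/rand` source is seeded automatically; on older versions it starts from a fixed seed, so every freshly started process would draw the same sequence of offsets unless seeded explicitly.

```go
package main

import (
	"fmt"
	"math/rand"
	"time"
)

// jitteredTTL returns base plus a uniformly random offset in [0, maxJitter).
// maxJitter must be positive: rand.Int63n panics if its argument is <= 0.
func jitteredTTL(base, maxJitter time.Duration) time.Duration {
	return base + time.Duration(rand.Int63n(int64(maxJitter)))
}

func main() {
	// The Squirrel's whole-second version, for an int TTL in seconds:
	//   ttl := 300 + rand.Intn(60)
	for i := 0; i < 3; i++ {
		fmt.Println(jitteredTTL(300*time.Second, 60*time.Second))
	}
}
```

Entries written in the same instant now expire anywhere in a 60-second window instead of on the same tick, so their rebuilds spread out instead of piling up.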

The Relationship to the Thundering Herd

The dogpile effect and the Thundering Herd are frequently confused, and for good reason: they are the same phenomenon wearing different hats.

The thundering herd is triggered by an event — a deploy, a restart, a leader election. Many processes wake up simultaneously and converge on a shared resource.

The dogpile effect is triggered by a clock — a TTL expires, a cache entry vanishes, and every request that arrives in the gap queries the backend.

The distinction is academic. The database does not care whether the ten thousand simultaneous queries were caused by a deploy or a timer. The database only knows that it was fine, and then it was not fine, and then someone from operations called.

Measured Characteristics

| Characteristic | Value |
|---|---|
| Requests per second (popular endpoint) | 10,000 |
| Requests that hit the backend (cache warm) | 0 |
| Requests that hit the backend (cache expired, 200ms query) | ~2,000 (10,000 req/s × 0.2 s) |
| Duration of dogpile (200ms backend query) | 200ms |
| Redundant queries during dogpile | ~1,999 |
| Useful queries during dogpile | 1 |
| Ratio of waste to work | ~1,999:1 |
| Complexity of adding jitter to TTL | one line |
| Years the jitter fix has been known | 20+ |
| Systems that implement it | optimistic estimates say 15% |

See Also

- Thundering Herd — The dogpile effect’s event-triggered twin. Same damage, different trigger, identical postmortem.

- Retry Storm — What happens after the dogpile overwhelms the backend and every client decides to try again, with the same backoff, at the same time.

- Cascading Failure — The dogpile’s natural successor. The database falls, the services that depend on it fall, and the failure propagates outward like a shockwave through a system that was “designed for resilience.”

- Redis — The cache most likely to be involved in a dogpile effect, not because of any flaw in Redis, but because Redis is the cache most likely to be involved in everything.

- Microservices — The architecture that turns one dogpile into forty-seven dogpiles, one per service, each with its own cache, each expiring at its own time, each independently discovering that the backend cannot handle this.

- YAGNI — Sometimes the best cache policy is no cache. If the query returns in 12 milliseconds, you may not need a cache at all, and a cache that does not exist cannot expire: there is no pile and there is no dog.