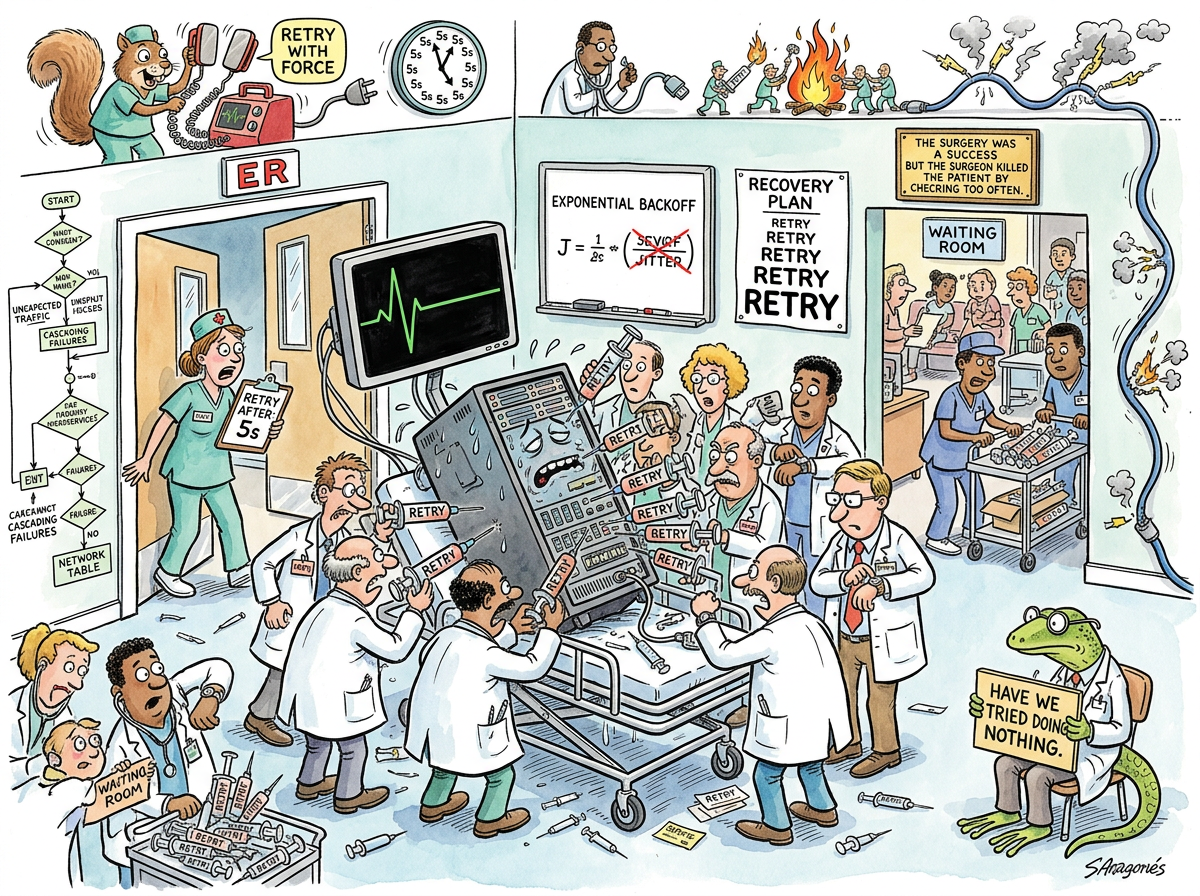

Retry Storm is a distributed systems phenomenon in which a service fails, its clients retry, and the retries constitute precisely the load that prevents the service from recovering. The service is not overwhelmed by work. The service is overwhelmed by requests to check whether it has finished being overwhelmed.

The retry storm is distinguished from ordinary high load by its recursive quality. Normal overload has an external cause — too many users, a traffic spike, a marketing campaign that succeeded. A retry storm is self-sustaining. Remove the original cause of failure entirely, and the system remains down, because the retries have replaced the original load with something worse: the same requests, arriving with metronomic synchronization, every five seconds, forever.

“A retry storm is a system arguing with itself about whether it’s working, so loudly that it can’t work.”

— The Lizard, declining to add retry logic

The Mechanism

The lifecycle of a retry storm proceeds in five stages, each more inevitable than the last:

Stage 1: The Incident. A service degrades. Perhaps a database connection pool fills. Perhaps a deploy introduces a slow query. Perhaps someone looked at the server wrong. The cause is mundane. The cause is always mundane.

Stage 2: The First Retry. Clients receive errors. Each client, independently and correctly, retries. This is the documented behavior. This is what the runbook says. Each client is doing the right thing.

Stage 3: The Synchronization. Because all clients experienced the failure at the same time, all clients retry at the same time. Because all clients read the same retry configuration, all clients retry with the same interval. Five seconds. Every client. Simultaneously. The service receives a pulse of traffic every five seconds that is identical to the traffic that caused the original failure, plus the traffic from new clients who have since joined the retry pool.

Stage 4: The Amplification. The retries fail. The clients retry the retries. Clients that had given up try again because their outer retry loop has reset. Clients from other services — which depend on the first set of clients — begin their own retries. The retry storm has children. The children have children.

Stage 5: The Steady State. The original cause of failure has resolved itself. The database connection pool cleared. The slow query timed out. The server that was looked at wrong has recovered its composure. None of this matters. The retry traffic alone now exceeds the service’s capacity. The service will remain down until the retries stop, and the retries will not stop until the service recovers, and the service cannot recover until the retries stop.

This is the steady state. It is very steady.

The Identical Backoff Problem

The textbook solution to transient failures is exponential backoff: wait 1 second, then 2, then 4, then 8. This is correct for a single client. For a thousand clients that all started failing at the same instant, exponential backoff produces exponentially synchronized retries. All thousand clients wait 1 second. Then all thousand wait 2 seconds. Then all thousand wait 4 seconds. The intervals grow longer. The stampedes grow no smaller.

“Exponential backoff without jitter is just a slower thundering herd. You haven’t solved the problem. You’ve given it a more dignified tempo.”

— A Passing AI, reviewing a retry policy

The fix is jitter — adding a random component to the backoff interval. Instead of all clients retrying at exactly 4 seconds, each retries at 4 seconds plus a random offset between 0 and 4 seconds. The synchronized pulse becomes a diffuse trickle. The herd disperses. The medicine stops killing the patient.

Jitter is trivially simple to implement. It is a single call to a random number generator. It is also, somehow, consistently forgotten, because the engineer writing the retry logic is testing with one client, and one client does not need jitter, and the engineer ships what was tested.

The Squirrel’s Contribution

The Caffeinated Squirrel has strong opinions about retry storms. The Squirrel has, in fact, built an entire retry framework featuring:

- Configurable exponential backoff with decorrelated jitter

- Per-endpoint circuit breakers with half-open probe intervals

- A retry budget that caps retries at 10% of successful request volume

- Distributed retry coordination via a Redis-backed token bucket

- An adaptive concurrency limiter based on TCP Vegas

- A dashboard

The framework handles retry storms beautifully. It has never been deployed to a service that has experienced one. The services that experience retry storms are using time.Sleep(5 * time.Second) in a for loop, written by someone who left the company, in a language the current team does not maintain.

The Circuit Breaker (Theoretical)

The Circuit Breaker pattern exists to prevent retry storms. When failures exceed a threshold, the circuit “opens” and requests fail immediately without reaching the server. After a timeout, the circuit enters a “half-open” state where a single probe request is allowed through. If the probe succeeds, the circuit closes and traffic resumes.

This works perfectly in conference talks.

In production, the circuit breaker is configured with a failure threshold of 50%, which means it opens after the service is already dead. Or it is configured with a threshold of 5%, which means it opens during normal operation when five requests out of a hundred happen to fail for unrelated reasons. Or it is configured perfectly but deployed to thirty instances, each with an independent circuit breaker, and when all thirty circuits half-open simultaneously, the thirty probe requests constitute their own small retry storm.

Measured Characteristics

Clients behaving correctly: all of them

System behaving correctly: none of it

Time to original failure: 1 incident

Time to retry-induced failure: 5 seconds after recovery

Time between "the service is back" and "the

service is down again": the duration of one retry cycle

Jitter implementation complexity: 1 line of code

Frequency of jitter being implemented: approximately never

Services with retry logic: most of them

Services with retry budgets: the ones that have already

experienced a retry storm

Retry frameworks built by the Squirrel: 1 (comprehensive)

Retry frameworks deployed to production: 0

Root cause of most retry storms: time.Sleep(constant)

See Also

- Thundering Herd — The retry storm’s cousin. Many clients arrive simultaneously because of a shared trigger. The retry storm is what happens when the herd fails and tries again.

- Dogpile Effect — A cache expires, everyone rebuilds it at once. Like a retry storm but the clients are trying to help, which somehow makes it worse.

- Cascading Failure — One service fails, its dependents fail, their dependents fail. The retry storm is often the transmission mechanism — each layer retrying into the layer below.

- 429 Too Many Requests — The server’s way of saying “you are the problem.” During a retry storm, every client receives this response and treats it as a reason to try again.

- Circuit Breaker — The theoretical cure. Opens when failures exceed a threshold, preventing retries from reaching the server. In practice, configured incorrectly by everyone, including the people who invented it.

- Exponential Backoff — Necessary but not sufficient. Without jitter, exponential backoff is synchronized politeness — everyone waiting the same amount of time before being rude again, together.