Microservices are a software architecture pattern in which a single application is decomposed into dozens of independently deployable services communicating over a network, thereby replacing the thing that was wrong with your monolith (nothing) with the thing that is wrong with distributed systems (everything).

The pattern was popularized in the mid-2010s by organizations such as Netflix (200 million users), Amazon (300 million users), and Spotify (500 million users), and subsequently adopted by organizations with twelve hundred users, four CRUD operations, and an enterprise architect who had recently attended a conference.

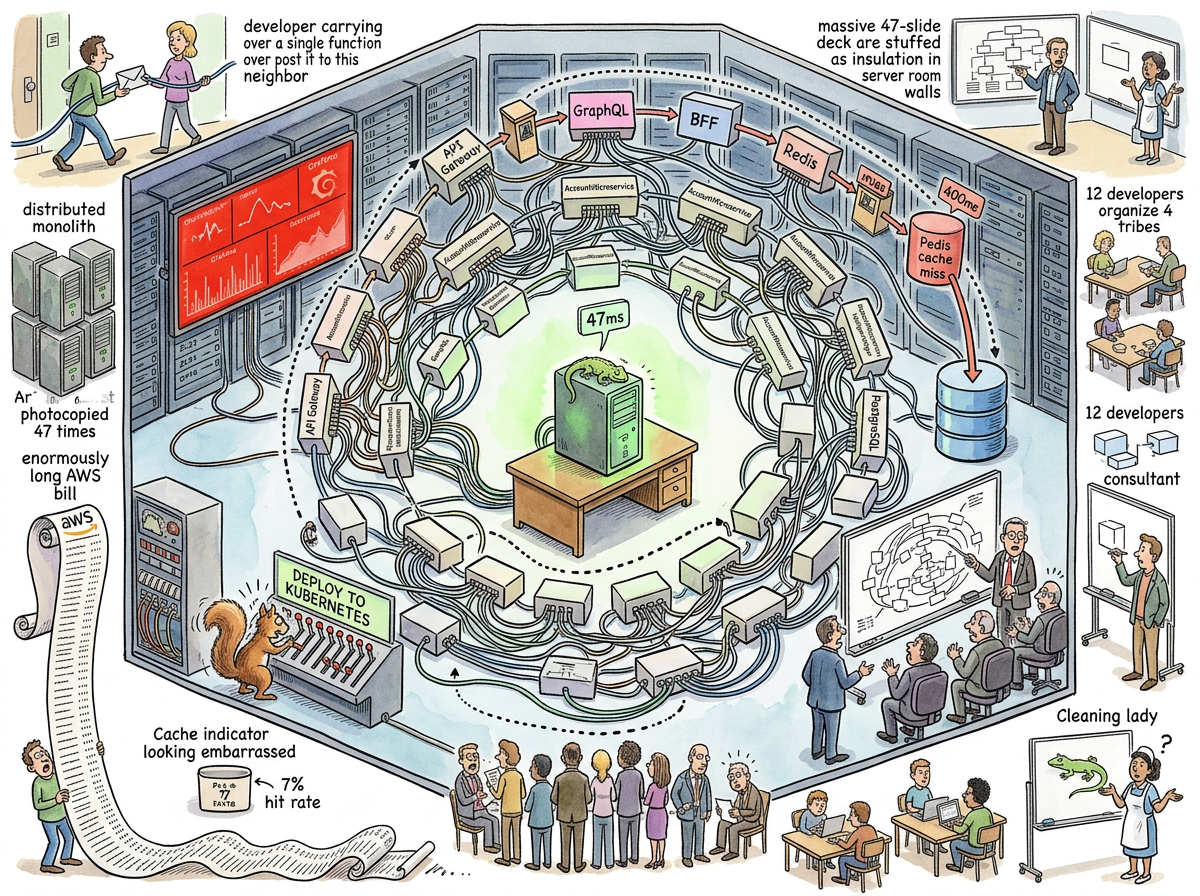

“Twelve hundred users. Four CRUD operations. Forty-seven microservices.”

— The Consultant, Interlude — The Blazer Years

The Mechanism

The microservices pattern operates as follows:

- A working monolith exists. It responds in 47 milliseconds. Its CPU utilization is 3%. It costs four hundred pounds a month. Nobody is happy about this, because it is boring, and boring things do not generate architecture committees.

- An architecture committee is formed. It meets for eighteen months. It produces a 47-slide deck. The deck describes a Target State Architecture containing forty-seven microservices, a service mesh, three Kubernetes clusters, Redis, Kafka, a data lake, MongoDB, PostgreSQL, an API gateway, a second API gateway (“for partners”), GraphQL (“because REST is legacy”), and a BFF layer. The architecture committee is proud.

- The forty-seven microservices are built. Response time rises to 2.3 seconds. The Grafana dashboard turns red. The AWS bill rises from four hundred pounds to forty-seven thousand pounds. Monthly. The enterprise architect describes these as “known issues” and promises to address them in Q3.

- Q3 of last year.

“Your monolith worked. Your forty-seven microservices don’t. That’s not theory. That’s your Grafana dashboard.”

— The Consultant, Interlude — The Blazer Years

The Canonical Case

The most thoroughly documented microservices deployment in the lifelog occurred in London, 2019, and was discovered by a consultant in a slightly-too-casual blazer who had the audacity to ask what the application actually did.

The application checked account balances for twelve hundred users. The architecture designed to support this activity contained forty-seven microservices, which is approximately 3.9 services per developer (there were twelve developers, organized into four tribes of three, which the consultant memorably diagnosed as “a Slack channel”).

A single request to check an account balance traveled through: the API Gateway, the GraphQL layer, the BFF service, the Account microservice, a Redis cache (which missed, as it did 93% of the time), PostgreSQL, and back through all layers. The round trip took 2.3 seconds. The monolith had done it in 47 milliseconds.

The consultant drew a single box on the whiteboard. Labeled it “Monolith.” Drew a small lizard next to it. He didn’t know why he drew the lizard. It just felt right.

Three weeks later, the company erased the microservices. Response times dropped to 47 milliseconds. The dashboard turned green. The AWS bill dropped by a factor of 117.5.

“He just knew that complex systems designed from scratch never work. That forty-seven microservices for twelve hundred users is insanity dressed in architecture diagrams.”

— Interlude — The Blazer Years

The Distributed Monolith

The most common outcome of a microservices migration is not, in fact, a microservices architecture. It is a distributed monolith — a system that has all the deployment complexity of microservices and all the coupling of a monolith, having somehow achieved the worst properties of both approaches simultaneously.

The distributed monolith is created when services that were once function calls within a single process are separated by network boundaries but remain unable to function independently. Service A calls Service B calls Service C, synchronously, in sequence, exactly as they did when they were three functions in the same file, except now each call traverses a network, adds latency, and can fail in exciting new ways that functions in the same process cannot.

The function call took nanoseconds. The network call takes milliseconds. The developer has replaced a semicolon with a load balancer.
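A minimal sketch of why the chain hurts: when calls run synchronously in sequence, their latencies add and their availabilities multiply. The `Hop` type, the 5 ms per-hop latency, and the 99.9% per-hop availability are hypothetical figures chosen for illustration, not measurements from the canonical case.

```python
from dataclasses import dataclass
from math import prod


@dataclass(frozen=True)
class Hop:
    """One synchronous call in the chain (hypothetical model)."""
    name: str
    latency_ms: float    # time added by this call
    availability: float  # probability this call succeeds, 0..1


def chain_latency_ms(hops):
    """Sequential calls wait on each other, so latencies add up."""
    return sum(h.latency_ms for h in hops)


def chain_availability(hops):
    """Every hop must succeed, so availabilities multiply."""
    return prod(h.availability for h in hops)


# Assumed figures for illustration only: an in-process call is treated as
# nearly free and infallible, a network call as 5 ms and 99.9% available.
in_process = [Hop(n, 0.0001, 1.0) for n in ("a", "b", "c")]
networked = [Hop(n, 5.0, 0.999) for n in ("A", "B", "C")]

if __name__ == "__main__":
    for label, hops in (("in-process", in_process), ("networked", networked)):
        print(f"{label}: {chain_latency_ms(hops):.4f} ms, "
              f"availability {chain_availability(hops):.4%}")
```

Under these assumed numbers, three networked hops cost 15 ms and all succeed together about 99.7% of the time. The same three calls as functions cost effectively nothing and fail only when the process does.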

“A MicroservicesArchitectureWithServiceMeshAndAPIGateway?”

— The Caffeinated Squirrel, proposing the complex solution to a 2,700-line file that needed a plugin, The Bridge to Everywhere

The Squirrel’s Enthusiasm

The Caffeinated Squirrel is the most prolific advocate of microservices in the observed universe. The Squirrel does not merely propose microservices — it proposes microservices with a service mesh, a distributed cache with consistent hashing, a CentralOrchestrationBus, and a CamelCase identifier of sufficient length to serve as a legal address.

The Squirrel’s proposals have been documented across numerous episodes:

“A HIERARCHICAL MULTI-AGENT SWARM with TieredIntelligenceDistribution and SpecializedMicroserviceAgents and a CentralOrchestrationBus and—”

— The Caffeinated Squirrel, The Servants’ Uprising

“WE’LL NEED A CACHE! Redis! No — MEMCACHED! No — A DISTRIBUTED CACHE WITH CONSISTENT HASHING AND—”

— The Caffeinated Squirrel, The Temple of a Thousand Monitors

“Should we build a distributed filesystem health orchestration—”

— The Caffeinated Squirrel, The Facelift — The Day the Squirrel Won

Each proposal was denied. Each denial was followed by the word “No.” The Squirrel has been observed, late at night, curled up on the couch, dreaming of microservices. Researchers believe this constitutes the only documented case of REM-state over-engineering.

The Lizard’s Position

The Lizard does not attend meetings about microservices.

The Lizard’s position on microservices can be inferred from Gall’s Law, which the Lizard either invented, embodies, or predates, depending on which school of scholarly thought one subscribes to. The position is: a complex system that works has evolved from a simple system that worked. A complex system designed from scratch — such as forty-seven microservices conceived by an architecture committee over eighteen months — never works.

The Lizard’s only known direct commentary on the subject was drawn on a whiteboard in London in 2019, by a consultant who did not know he was channeling anything. The commentary was a small lizard, next to a box labeled “Monolith.” It took three seconds to draw. It conveyed everything the 47-slide deck had failed to convey in eighteen months.

The cleaning staff asked if they should erase it. The consultant said to leave it.

The lizard stayed on the whiteboard for three weeks. The microservices lasted slightly less.

The Redis Subsection

No discussion of microservices is complete without acknowledging Redis, which appears in microservices architectures the way barnacles appear on ships: inevitably, expensively, and without measurable contribution to forward motion.

In the canonical case, Redis was deployed to cache database queries that returned in 12 milliseconds. The cache response time was 0.3 milliseconds — a savings of 11.7 milliseconds, achieved on 7% of queries (the hit rate), for an average improvement of 0.8 milliseconds per request.

The service mesh, meanwhile, added 400 milliseconds of latency.

“You’re paying for Redis to save 11.7 milliseconds on 7% of queries. That’s 0.8 milliseconds average savings. Your service mesh adds 400 milliseconds of latency.”

— The Consultant, Interlude — The Blazer Years

This is the microservices equivalent of installing a silk parachute on a submarine. The engineering is impressive. The context is catastrophic.
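The Redis arithmetic above can be checked in a few lines. This sketch uses only the figures quoted in the text; the function and variable names are illustrative, and the model follows the text's simplification of ignoring the extra lookup time that a cache miss adds.

```python
def expected_cache_saving_ms(db_ms, cache_ms, hit_rate):
    """Average latency saved per request: only cache hits save anything."""
    return hit_rate * (db_ms - cache_ms)


# Figures quoted in the text.
DB_MS = 12.0      # database query time
CACHE_MS = 0.3    # cache response time
HIT_RATE = 0.07   # 7% of queries hit the cache
MESH_MS = 400.0   # latency added by the service mesh

saving = expected_cache_saving_ms(DB_MS, CACHE_MS, HIT_RATE)
net_change = MESH_MS - saving  # positive means the request got slower

if __name__ == "__main__":
    print(f"average saving from Redis: {saving:.1f} ms")
    print(f"net change once the mesh is included: +{net_change:.1f} ms")
```

The saving rounds to 0.8 ms, so the mesh costs roughly five hundred times what the cache recovers.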

The Economics

The financial characteristics of microservices have been studied extensively, primarily by people receiving the AWS bill:

| Metric | Value |
| --- | --- |
| Monthly cost (monolith) | £400 |
| Monthly cost (microservices) | £47,000 |
| Cost multiplier | 117.5x |
| Response time (monolith) | 47 ms |
| Response time (microservices) | 2,300 ms |
| Performance multiplier | 49x slower |
| Redis savings (average) | 0.8 ms |
| Service mesh latency added | 400 ms |
| Architecture committee duration | 18 months |
| Time to draw working solution | 3 seconds |
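The two multipliers in the figures above follow directly from the raw numbers. A short sketch, with a hypothetical helper name, for anyone who wants to audit the bill themselves:

```python
def multiplier(after, before):
    """How many times larger `after` is than `before`."""
    return after / before


# Figures quoted in the text.
cost_multiplier = multiplier(47_000, 400)  # monthly cost in pounds
slowdown = multiplier(2_300, 47)           # response time in milliseconds

if __name__ == "__main__":
    print(f"cost multiplier: {cost_multiplier}x")
    print(f"slowdown: about {slowdown:.0f}x")
```

The cost ratio is exactly 117.5, and the slowdown is a little under 49.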

The CTO could not go to the board and say they were going back to the monolith. The consultant suggested calling it “Consolidated Service Architecture” or “Streamlined Platform Initiative” — whatever made the slide deck work. This is known in the literature as Architectural Face-Saving, and it is the only microservices pattern that reliably works as designed.

The Passing AI’s Diagnosis

The Passing AI, when consulted on the phenomenon of microservices, performed what it described as “a brief survey of all documented microservices migrations” and returned the following analysis:

The probability of a microservices migration improving performance for an application with fewer than 10,000 users is approximately equal to the probability of a cat voluntarily leaving a warm laptop. The event has been theorized but never observed in controlled conditions.

The AI then added, with the particular weariness of an intelligence that has processed too many Grafana dashboards, that the most reliable predictor of a microservices migration is not technical requirements but the proximity of the CTO to a conference where someone from Netflix spoke.

The Narrative Test

Perhaps the most damning critique of microservices comes not from engineering but from storytelling:

THE NARRATIVE FORCES SIMPLICITY

BECAUSE YOU CANNOT WRITE AN EPIC

ABOUT FORTY-SEVEN MICROSERVICES

YOU CAN WRITE ONE

ABOUT CONVERGENCE

No mythology has ever featured a hero who defeated a dragon by decomposing it into forty-seven independently deployable dragon-components with a service mesh. The hero slays the dragon. One sword. One dragon. The monolith boots in nine seconds and the account balance loads in 47 milliseconds and the Lizard sleeps on the whiteboard and the story is over.

Microservices have no narrative arc because microservices have no protagonist. They are, architecturally, a committee — and committees do not slay dragons. Committees form subcommittees to discuss the dragon, and the subcommittees produce a 47-slide deck, and the dragon eats everyone during Q3.

The Reformation

In San Francisco, in the aftermath of a certain New York Times article about a certain lifelog, 847 startups began removing microservices they didn’t need, guided by a methodology they didn’t fully understand, worshipping a lizard they’d never met.

“We had one company with 23 engineers and 47 microservices. After the CTO read the saga, they consolidated to 4 services. Productivity doubled.”

— Sarah Chen, Benchmark Capital, as quoted in The Squirrel’s Betrayal — The New York Times Discovers YAGNI

One company removed 47 microservices, Redis, the service mesh, the event bus, and Kubernetes entirely. They now run on three EC2 instances and SQLite. Their AWS bill dropped to $8,000 a month. Their uptime went from 99.9% to 99.997%.

The Squirrel observed, with the piercing clarity of a creature recognizing its own reflection, that every developer who read the article and removed their Kubernetes was themselves a caffeinated squirrel — adding complexity because complexity felt productive, then removing it because an article said to, without understanding why. Cargo culting in reverse. But cargo culting that worked.

“Redis, microservices, Kubernetes, service mesh, or any derivative thereof. No.”

— riclib, The Squirrel’s Betrayal — The New York Times Discovers YAGNI

The Litmus Test

When confronted with a proposal to adopt microservices, the practitioner is advised to ask two questions:

- “How many users do we have?”

- “What worked before this?”

If the answer to the first question is less than one hundred thousand, and the answer to the second question is “a monolith that responded in 47 milliseconds,” the practitioner should close the laptop, drink a glass of water, and reflect on the fact that the most expensive architectural decision in history was not choosing the wrong technology — it was choosing forty-seven right technologies for a problem that needed one.
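For practitioners who prefer their advice executable, the test can be sketched as a single predicate. The function name is hypothetical, and reducing “a monolith that responded in 47 milliseconds” to a boolean is an assumption. The text does not say what to do when the test fails, so neither does the code.

```python
USER_THRESHOLD = 100_000  # the threshold given in the text


def should_close_laptop(user_count, previous_system_worked):
    """True when the proposal fails the litmus test described above.

    previous_system_worked is an assumed simplification of the second
    question: was there already a system (a monolith) that did the job?
    """
    return user_count < USER_THRESHOLD and previous_system_worked


if __name__ == "__main__":
    # The canonical case: twelve hundred users and a working monolith.
    print(should_close_laptop(1_200, True))
```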