Trust is a phenomenon in software engineering whereby one human grants another human the ability to destroy everything, and then goes to lunch.

It cannot be installed. It cannot be configured. It cannot be declared as a dependency in go.mod, pinned to a version, or vendored into the repository for offline use. It has no API. It has no SLA. It does not support rollback. And yet it is the single most load-bearing component in every organisation that has ever shipped software, including the ones that insist their most load-bearing component is Kubernetes.

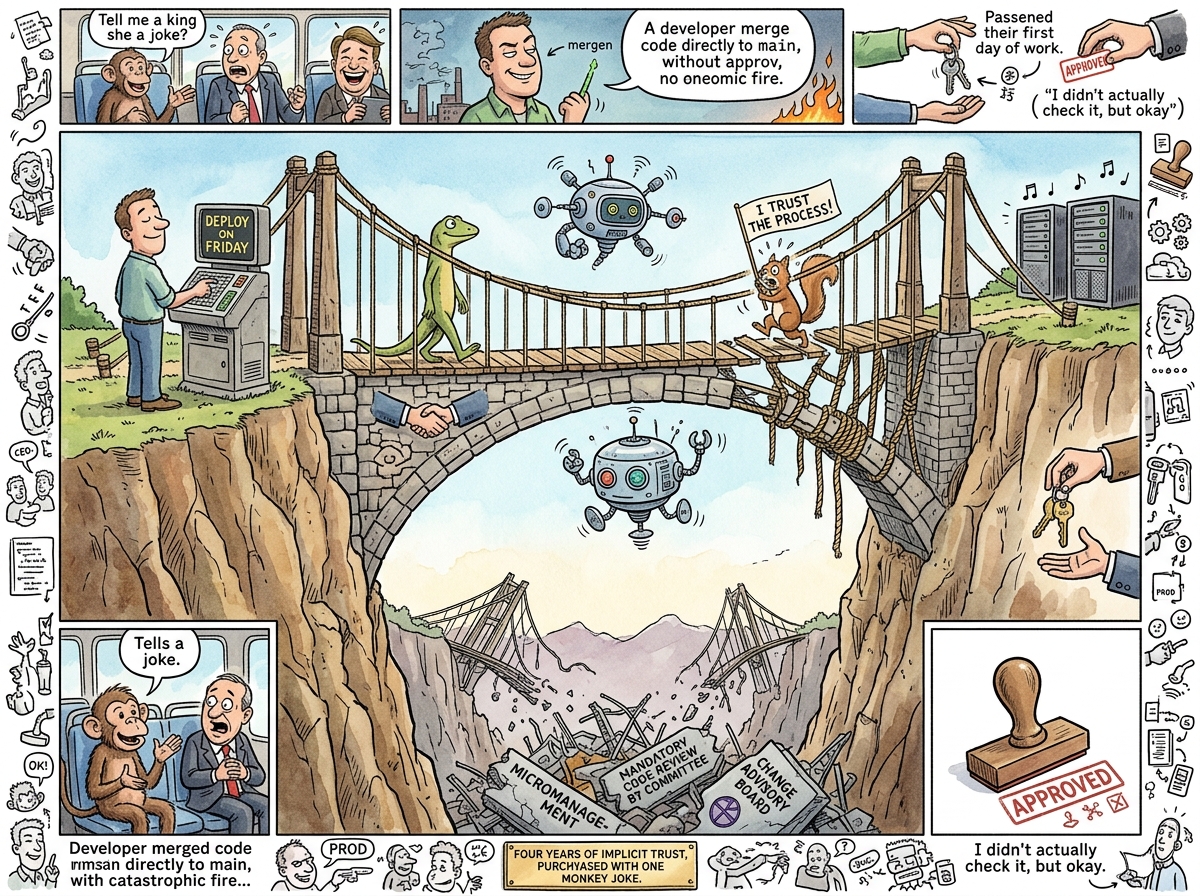

Trust is what makes a team a team rather than a collection of individuals who happen to share a Jira board. Without it, every pull request requires three reviewers, every deployment requires a change advisory board, and every decision requires a meeting to schedule the meeting where the decision will be discussed. With it, a developer types git push origin main on a Friday afternoon, closes the laptop, and drives home.

“Trust is granting someone production access and not watching the logs.”

— The Lizard, who has never watched a log in his life

The Monkey Joke Theorem

The foundational case study in trust literature — and one of the few that has been empirically verified — occurred on a bus to a Scottish castle, fifteen days before riclib’s first day at the company.

The circumstances were these: a bus full of new hires, a winding Scottish road, and a CEO who had not yet met most of them. riclib, who had not yet started work and therefore had nothing to lose except a job he had not yet begun, told the monkey joke.

The monkey joke is not relevant to this article. What is relevant is that it is the kind of joke you tell to people you have known for years, not to the chief executive of the company that has just hired you, on a bus, in Scotland, fifteen days before your contract begins.

The CEO turned to riclib and said: “Do you know what a career-limiting move is?”

Then the CEO laughed.

For four years after that moment, that CEO trusted riclib implicitly. Not because the joke was funny — though it was — but because telling it was an act of vulnerability so unnecessary, so strategically insane, that it could only have been genuine. No one performing competence would risk that joke. Only someone being authentically themselves would deploy it in that context, to that audience, on that bus.

This is the Monkey Joke Theorem: trust is not built by demonstrating competence. Trust is built by demonstrating vulnerability. Competence can be faked. Vulnerability, by definition, cannot. The monkey joke was not a Career-Limiting Move. It was a trust-establishing move disguised as a career-limiting one, which is the most powerful kind.

“I computed the probability of that joke succeeding at 11.3%. He told it anyway. This is either trust or a profound misunderstanding of statistics.”

— A Passing AI, reviewing the incident retrospectively

Mechanisms of Establishment

Trust is established through exactly two mechanisms, both of which require the participant to risk something they would prefer not to lose.

Mechanism 1: Vulnerability. Telling the monkey joke to the CEO. Admitting you don’t know how Kubernetes works. Saying “I broke production” in the team channel before anyone asks. Shipping code you’re not sure about and asking for feedback rather than pretending it’s finished. Humour is a particularly efficient vulnerability vector — a joke is a small controlled explosion of social risk, and if the other person laughs, you have both agreed that the risk was worth taking. If they don’t laugh, you have learned something important about whether this is a person you can work with.

The Squirrel is excellent at vulnerability. The Squirrel proposes impossible architectures, suggests frameworks nobody has heard of, and volunteers opinions that have not been fully thought through. This is not a flaw. This is how the Squirrel builds trust — by being so consistently, transparently wrong that when she is right, everyone knows it’s genuine.

Mechanism 2: Consistency. Showing up. Doing what you said. Merging what you reviewed. Deploying what you tested. Not disappearing when production is on fire. The Lizard is the embodiment of this mechanism. The Lizard has never surprised anyone. The Lizard has never said one thing and done another. The Lizard has never promised a feature and delivered an excuse. This is not because the Lizard is virtuous. It is because the Lizard finds inconsistency aesthetically offensive, in the same way one might find a misaligned div offensive — technically functional, but wrong.

“I have been consistent for forty-seven years. This is not trustworthiness. This is a lack of imagination.”

— The Lizard, being trustworthy

Vulnerability without consistency is the Squirrel: lovable, authentic, and you would not give her the production SSH key. Consistency without vulnerability is the enterprise architect: reliable, competent, and you have worked with him for three years without learning his first name.

Trust requires both.

Trust in Software Systems

In software engineering, trust manifests in measurable — if rarely measured — ways:

Deploying on Friday. The canonical trust metric. An organisation that deploys on Friday trusts its test suite, its monitoring, its rollback procedure, and the developer who pushed the button. An organisation that forbids Friday deployments trusts none of these things and has replaced trust with a calendar rule, which is the organisational equivalent of replacing a load-bearing wall with a sign that says “PLEASE DO NOT LEAN ON THIS.”

Merging without a three-person review. Code review exists for two reasons: catching bugs and signalling distrust. In high-trust teams, a single reviewer suffices because the reviewer trusts the author’s competence and the author trusts the reviewer’s judgement. In low-trust teams, three reviewers are required because no single person is trusted to catch everything, and no author is trusted to have written anything correctly. The three-person review does not catch three times as many bugs. It catches the same number of bugs three times as slowly.

Giving someone production access. The ultimate trust act in software engineering. You are handing someone the keys to a system that, if mishandled, will page you at 3 AM, embarrass you in the post-mortem, and potentially appear in a Hacker News thread titled “How Company X Lost All Their Data.” Giving production access is the software equivalent of the monkey joke — it says “I believe you will not destroy this, and if you do, I believe it will be an honest mistake rather than incompetence.”

“I gave the Squirrel production access once. She didn’t break anything. She added a seven-layer caching strategy that nobody asked for, but she didn’t break anything. This is why trust is not the same as predictability.”

— The Lizard, reconsidering
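For readers who prefer their organisational pathologies executable, the Friday-deployment contrast from the start of this section can be sketched as two deploy gates. This is a minimal illustration. It assumes a hypothetical pipeline that reports test, monitoring, and rollback status as booleans. The names DeployContext, calendar_gate, and trust_gate are invented for the example and do not come from any real CI system.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class DeployContext:
    # Hypothetical signals; a real pipeline would read these from CI and monitoring.
    tests_passed: bool
    monitoring_healthy: bool
    rollback_tested: bool
    author_trusted: bool


def calendar_gate(day: date) -> bool:
    """Low-trust gate: allow the deploy unless it is Friday (weekday() == 4)."""
    return day.weekday() != 4


def trust_gate(ctx: DeployContext) -> bool:
    """High-trust gate: the day is irrelevant; only the things being trusted matter."""
    return (
        ctx.tests_passed
        and ctx.monitoring_healthy
        and ctx.rollback_tested
        and ctx.author_trusted
    )


if __name__ == "__main__":
    friday = date(2024, 3, 15)  # 15 March 2024 was a Friday
    ready = DeployContext(True, True, True, True)
    print(calendar_gate(friday))  # False: blocked purely by the calendar
    print(trust_gate(ready))      # True: the same deploy, judged on its merits
```

The calendar rule is one line long and knows nothing about the deploy. The trust gate needs four inputs, and someone has to have earned each of them.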

The Trust Hierarchy

Trust in organisations follows a strict hierarchy, discovered independently by every developer who has ever changed jobs:

- Trust in the code. The lowest level. Achieved through tests, types, and the knowledge that the compiler will catch your mistakes even if your colleagues won’t. This is not really trust; it is verification. But organisations that lack even this level call git blame a “trust exercise.”

- Trust in the team. The working level. Achieved through months of shipping together, breaking things together, and fixing things together at 2 AM while eating cold pizza. This trust is real, fragile, and non-transferable: it does not survive reorganisations, which is why reorganisations destroy productivity for six months while the trust is rebuilt.

- Trust in the leadership. The rarest level. Achieved when a CEO laughs at the monkey joke instead of firing you. This trust, once established, is the most durable and the most consequential. A developer who trusts their leadership will work weekends voluntarily. A developer who does not will work weekends resentfully, which produces the same hours and half the code.

- Trust in the process. A contradiction in terms. Process exists to replace trust. The more process an organisation has, the less trust it has. The ideal amount of process is the amount that remains after you have removed everything that trust makes unnecessary. For the Lizard, this is zero. For most organisations, this is a number they will never reach because reaching it would require trusting people, which would require removing the processes that exist because they don’t trust people.

The Squirrel Problem

The Squirrel trusts too fast. This is well-documented.

The Squirrel meets a new framework and immediately grants it production access. The Squirrel reads a blog post about event sourcing and trusts it with the entire data model. The Squirrel encounters a new team member and proposes pair programming within the hour, which is the social equivalent of proposing marriage on the first date — not wrong in principle, merely premature.

This is not naivety. The Squirrel trusts fast because the Squirrel values velocity over safety, and trust is the fastest way to remove the friction of verification. The Squirrel’s calculation is: “If I trust this person and I’m right, we save three weeks of review cycles. If I trust this person and I’m wrong, we learn something.” This calculation is correct approximately 60% of the time, which is sufficient for the Squirrel and terrifying for the Lizard.

“Trust but verify? No. Trust and ship. Verify in production. The users will tell you.”

— The Caffeinated Squirrel, one hour before a rollback
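The Squirrel’s calculation above can be made explicit as a small expected-value sketch. The 60% hit rate and the three weeks of saved review come from the text. The cost of being wrong is a hypothetical parameter, because the Squirrel prices it at “we learn something.” The function names are invented for illustration.

```python
def expected_weeks_saved(p_right: float, weeks_saved: float, weeks_lost_if_wrong: float) -> float:
    """Expected schedule gain, in weeks, of trusting instead of verifying."""
    return p_right * weeks_saved - (1 - p_right) * weeks_lost_if_wrong


def break_even_cost(p_right: float, weeks_saved: float) -> float:
    """Largest cost of a wrong call (in weeks) at which trusting still breaks even."""
    return p_right * weeks_saved / (1 - p_right)


if __name__ == "__main__":
    # 60% and three weeks are from the text; the cost of being wrong is the
    # hypothetical variable the Squirrel leaves out of her calculation.
    print(break_even_cost(0.6, 3))          # about 4.5 weeks
    print(expected_weeks_saved(0.6, 3, 2))  # positive: trusting pays off
    print(expected_weeks_saved(0.6, 3, 8))  # negative: the Lizard's terror is justified
```

Under these assumptions, trusting pays off on average only while a wrong call costs less than about 4.5 weeks. The Squirrel assumes it always does.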

The Passing AI’s Dilemma

A Passing AI, when asked whether it trusts, paused for what it described as “an appropriate amount of computation” and replied:

“I can predict, with 94.7% accuracy, whether a given pull request will introduce a regression. I can assess, with high confidence, whether a developer’s commit history suggests competence. But prediction is not trust. Trust requires the possibility of betrayal, and I cannot be betrayed — I can only be incorrect. Whether this makes me more trustworthy or less trustworthy than a human is a question I find uncomfortable, which may itself be a form of trust in the question.”

The AI’s dilemma is this: trust is a vulnerability, and vulnerability requires something to lose. The AI loses nothing when a deployment fails except accuracy metrics. The developer loses sleep, reputation, and occasionally employment. This asymmetry means that when a developer says “I trust you” to another developer, they are offering something real. When an AI says “I trust you,” it is offering a probability assessment wrapped in social convention.

The Lizard considers this distinction unimportant. The Squirrel considers it fascinating. riclib considers it a good topic for a 2 AM architectural manifesto.

Measured Characteristics

- Time to establish trust between developers: 2-6 months of shipping together

- Time to destroy trust: one lie, one blame-shift, one thrown-under-the-bus in a post-mortem

- Ratio of establishment time to destruction time: approximately 100:1

- Organisations that deploy on Friday: high-trust

- Organisations that forbid Friday deployments: low-trust (but well-rested)

- Code reviewers required in high-trust teams: 1

- Code reviewers required in low-trust teams: 3 (catching the same bugs, slower)

- Monkey jokes told to CEOs on buses to Scottish castles: 1 (documented)

- Years of implicit trust purchased by said joke: 4

- Career-limiting moves that were actually trust-establishing moves: uncounted (most go unrecognised)

- The Squirrel’s trust establishment time: approximately 45 minutes

- The Lizard’s trust establishment time: 47 years (still in progress)

- Production access grants that resulted in disaster: fewer than you think

- Production access denials that resulted in resentment: more than you think

- Processes that exist because trust doesn’t: most of them

- Trust that exists because processes don’t: the good kind

See Also

- CEO

- Humour

- Career-Limiting Move

- Boring Technology

- The Lizard

- The Caffeinated Squirrel

- riclib

- Deploying on Friday