LangChain is a Python framework for building AI applications that wraps HTTP API calls in enough abstraction layers that the original POST request has filed a missing persons report, hired a private investigator, and is considering whether its family will ever see it again.

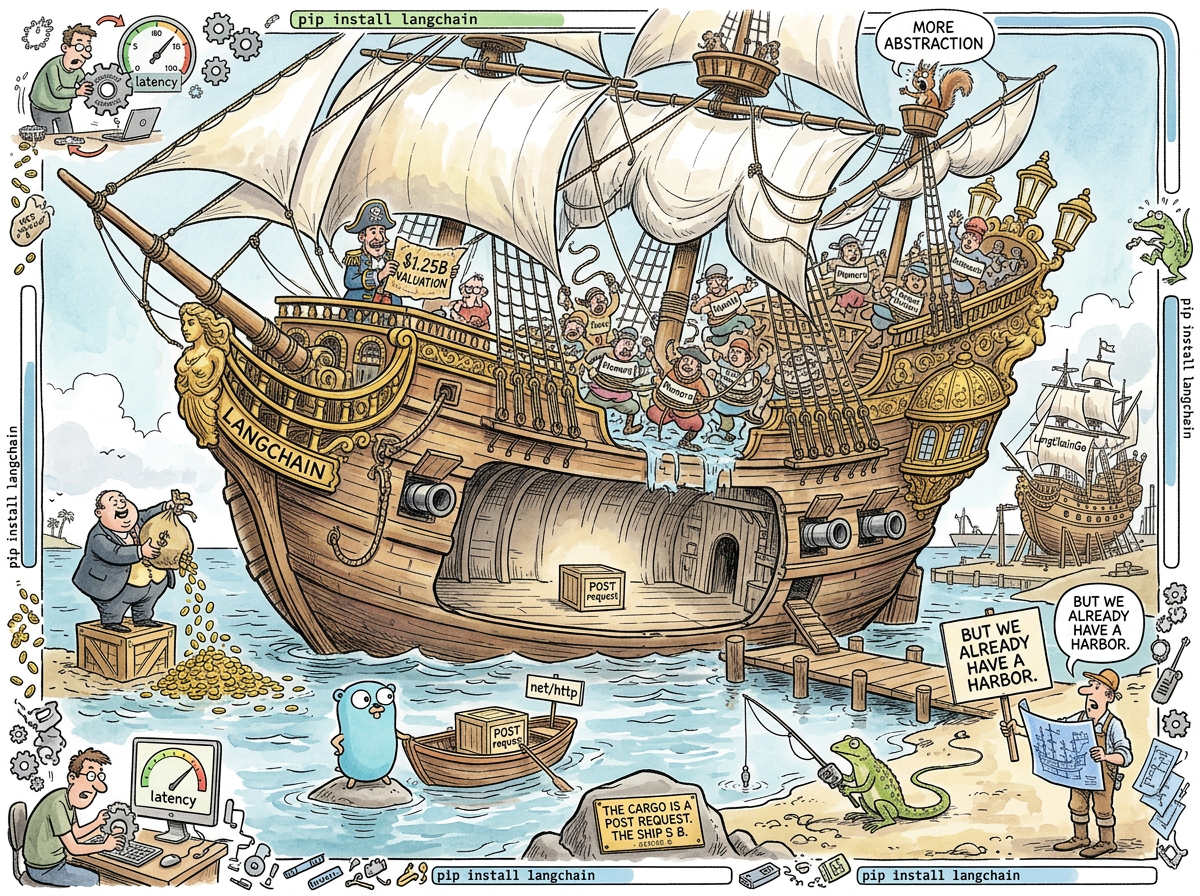

Founded in October 2022 by Harrison Chase, LangChain has raised $160 million in venture capital at a $1.25 billion valuation, which values each line of abstraction between the developer and the HTTP request at approximately $26,595. The request itself, requests.post(url, json=body), remains, as always, free.

“A developer needed to call an API. The developer called the API. Then a framework was built to call the API for the developer. Then the framework raised a Series B. Then the developer removed the framework and the API call got faster. The framework is now worth more than the improvement it prevented.”

— The Lizard, who has been calling APIs since before APIs had a name

What LangChain Does

LangChain provides:

- Chains — sequences of component calls (take input, format prompt, call LLM, parse response). In Python without LangChain, this is a function. In Python with LangChain, this is a Chain, which is an abstraction around a function, which calls a function.

- Agents — loops that use LLM reasoning to decide what to do next. In Python without LangChain, this is a while loop with an API call inside. In Python with LangChain, this is an Agent with a Planner and an Executor and a ToolKit and a Memory and a Callback, each of which wraps the thing beneath it.

- Tools — Python functions that agents can call. In Python without LangChain, these are Python functions. In Python with LangChain, these are Python functions decorated with @tool, which registers them in a ToolKit, which is passed to an Agent, which stores them in a ToolMap, which is consulted by the Planner.

- Memory — conversation history storage. In Python without LangChain, this is a list. In Python with LangChain, this is a ConversationBufferMemory, or a ConversationSummaryMemory, or a ConversationSummaryBufferMemory, or a VectorStoreRetrieverMemory, each of which stores the list differently while containing the same list.

- Retrievers — query interfaces for vector databases. In Python without LangChain, this is an HTTP call to your vector database. In Python with LangChain, this is a Retriever wrapping a VectorStore wrapping a Client wrapping an HTTP call to your vector database.

- Output Parsers — extract structured data from LLM responses. In Python without LangChain, this is json.loads(). In Python with LangChain, this is a PydanticOutputParser with a get_format_instructions method that injects schema descriptions into the prompt so the LLM knows to return JSON, which is then parsed by… json.loads().

- Prompt Templates — string formatting with variable substitution. In Python without LangChain, this is an f-string. In Python with LangChain, this is a PromptTemplate with input_variables and a template string that gets formatted into… an f-string.

- Callbacks — hooks for observability. In Python without LangChain, this is print(). In Python with LangChain, this is a CallbackManager managing CallbackHandlers handling Callbacks that call… print().
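As a minimal sketch of the “without LangChain” column above, the prompt template, memory, output parser, and chain can be written with the standard library alone. The names `format_prompt`, `parse_output`, `run_chain`, and `fake_llm` are hypothetical, and `fake_llm` is a stand-in so the example runs offline; a real version would make the HTTP call in its place.

```python
import json
from typing import Callable

# Memory: conversation history is a list of message dicts.
history: list[dict] = []


def format_prompt(question: str) -> str:
    # Prompt template: an f-string.
    return f'Answer in JSON with a single key "answer". Question: {question}'


def parse_output(raw: str) -> dict:
    # Output parser: json.loads().
    return json.loads(raw)


def run_chain(question: str, call_llm: Callable[[list[dict]], str]) -> dict:
    # Chain: a function that formats, calls, remembers, and parses.
    history.append({"role": "user", "content": format_prompt(question)})
    raw = call_llm(history)
    history.append({"role": "assistant", "content": raw})
    return parse_output(raw)


def fake_llm(messages: list[dict]) -> str:
    # Hypothetical stand-in for the HTTP call so the sketch runs offline.
    return json.dumps({"answer": f"{len(messages)} message(s) received"})


result = run_chain("What is a chain?", fake_llm)
print(result["answer"])
```

Swapping `fake_llm` for a function that posts `messages` to a provider and returns the reply text is the only change a real call would need.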

At the bottom of every Chain, every Agent, every Retriever, every Tool, every Memory, every OutputParser, every PromptTemplate, and every Callback is the same thing: an HTTP POST request to an LLM provider’s API. The layers between the developer and that request are the product. The product raised $1.25 billion. The request raised nothing, because the request was always free.

What LangChain Actually Does

    import requests  # key and prompt are assumed to be defined elsewhere

    response = requests.post(
        "https://api.anthropic.com/v1/messages",
        headers={"x-api-key": key, "anthropic-version": "2023-06-01", "content-type": "application/json"},
        json={"model": "claude-sonnet-4-20250514", "max_tokens": 1024, "messages": [{"role": "user", "content": prompt}]},
    )
    answer = response.json()["content"][0]["text"]

Seven lines. No framework. No chains. No agents. No Series B.

This is the operation that LangChain abstracts. This is the cargo in the hold. The ship — the Chains, the Agents, the Tools, the Memory, the Retrievers, the OutputParsers, the PromptTemplates, the Callbacks — is the $1.25 billion of hull built around a crate that weighs seven lines.

The Abstraction Archaeology

The community discovered what was in the hull. The excavation reports are consistent:

“Slow, opaque, and magically over-engineered for simple tasks yet weirdly fragile for complex ones.”

— Developer testimony, widely cited

“All LangChain has achieved is increased the complexity of the code with no perceivable benefits.”

— Hacker News, 2023

“LangChain’s memory components can add over 1 second of latency per API call.”

— Production teams, who removed LangChain and watched their applications get faster

One second. Per API call. Added by the memory abstraction. The memory abstraction stores conversation history. Conversation history is a list. The list added one second. Teams removed the framework. The latency dropped. The application improved by subtracting software.

This is the rare case in engineering where the optimisation is deletion. Where the performance improvement is not writing faster code but removing the slow code that was sitting between you and the fast code that was always there.
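One way to check a claim like this in a given codebase is to time the same call with and without the layer in question. The sketch below is an assumption about how such a measurement might look, not the method the quoted teams used. `average_seconds`, `direct_call`, and `wrapped_call` are hypothetical names, and the wrapper only simulates overhead with a short sleep.

```python
import time
from typing import Callable


def average_seconds(fn: Callable[[], object], runs: int = 5) -> float:
    # Average wall-clock time of fn over a few runs.
    start = time.perf_counter()
    for _ in range(runs):
        fn()
    return (time.perf_counter() - start) / runs


def direct_call() -> str:
    # Stand-in for the bare API call.
    return "response"


def wrapped_call() -> str:
    # Stand-in for the same call behind a layer; the sleep simulates overhead.
    time.sleep(0.01)
    return direct_call()


overhead = average_seconds(wrapped_call) - average_seconds(direct_call)
print(f"overhead per call: {overhead * 1000:.1f} ms")
```

In a real comparison both functions would perform the same network request, so the difference isolates what the layer itself adds.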

Octomind, an AI testing company, published a detailed post-mortem titled “Why We No Longer Use LangChain for Building Our AI Agents.” The summary: they removed LangChain, wrote direct API calls, and everything improved — speed, debuggability, control. The abstraction was not helping. The abstraction was the thing they were debugging.

The $1.25 Billion Question

LangChain raised $10 million seed (Benchmark), $25 million Series A (Sequoia), and $125 million Series B at a $1.25 billion valuation from investors including CapitalG, Sapphire Ventures, ServiceNow, Workday, Cisco, Datadog, and Databricks.
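The arithmetic behind these figures is easy to check. The sketch below sums the three rounds and divides the valuation by the eight component types listed earlier (Chains through Callbacks). Treating each component type as one “layer” is an assumption made here; it reproduces the roughly $156 million per layer quoted under Measured Characteristics.

```python
# Funding rounds as stated in the text, in US dollars.
rounds = {"seed": 10_000_000, "series_a": 25_000_000, "series_b": 125_000_000}
valuation = 1_250_000_000

# Component types from the "LangChain provides" list; one layer each is assumed.
layers = ["Chains", "Agents", "Tools", "Memory", "Retrievers",
          "Output Parsers", "Prompt Templates", "Callbacks"]

total_raised = sum(rounds.values())
per_layer = valuation / len(layers)

print(f"total raised: ${total_raised:,}")
print(f"valuation per layer: ${per_layer:,.0f}")
```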

To contextualise the valuation:

- cURL — the HTTP client that actually delivers the world’s API calls, maintained by Daniel Stenberg for 28 years, funding: approximately one Patreon and some corporate sponsors

- Go’s net/http — the standard library HTTP client used by Google, Cloudflare, Docker, and Kubernetes, funding: Google pays some maintainers

- LangChain — wraps requests.post(), funding: $160 million

The market has decided that wrapping an HTTP call is worth more than making the HTTP call. This is either a profound insight about the value of developer experience or the most expensive f-string in the history of computing. History will judge. The Lizard has already judged.

THE CARGO IS A POST REQUEST

THE SHIP IS A SERIES B

THE HARBOR WAS ALWAYS THERE

THE HARBOR WAS ALWAYS FREE

THE SHIP ADDS ONE SECOND

THE HARBOR ADDS ZERO

THE INVESTORS BOUGHT THE SHIP

THE DEVELOPERS ARE SWIMMING TO THE HARBOR

🦎

The Go Port

This is the section where satire surrenders to reality, because reality has exceeded satire’s budget.

Someone ported LangChain to Go.

LangChainGo. 8,700 stars on GitHub. An active project. A community. Contributors. Documentation.

Let this sink in.

LangChain exists because Python developers need an abstraction layer to make API calls manageable. The abstraction provides chains, agents, memory, and tools: all the scaffolding that Python’s dynamically typed, loosely structured ecosystem does not provide natively.

Go already has:

- Chains → function composition (the language has functions)

- Agents → goroutines and a for loop

- Memory → a database (Go has database drivers)

- Tools → first-class function types and interfaces

- Retrievers → an HTTP call to a vector database (Go has net/http)

- Output Parsers → encoding/json (in the standard library since 2009)

- Prompt Templates → fmt.Sprintf (in the standard library since 2009)

- Callbacks → function parameters (the language has functions)

LangChainGo ports the abstraction layer from a language that arguably needed it to a language that provably does not. It is the software equivalent of importing an umbrella to a city that is already indoors. It is shipping a translation dictionary to a country that already speaks the language. It is building a bridge to an island that is already a peninsula.

The Go developer who uses LangChainGo is a Go developer who has looked at http.Post(), looked at json.Unmarshal(), looked at fmt.Sprintf(), looked at the standard library that has solved these problems since 2009, and decided: what this needs is an abstraction layer ported from a language that wraps these calls because that language doesn’t have these calls.

THE SQUIRREL: “But it provides a UNIFIED INTERFACE across LLM providers!”

riclib: “A unified interface across LLM providers is a function with a provider parameter.”

THE SQUIRREL: “But the COMMUNITY INTEGRATIONS—”

riclib: “The community integration with Anthropic is http.Post("https://api.anthropic.com/v1/messages", ...). The community integration with OpenAI is http.Post("https://api.openai.com/v1/chat/completions", ...). The community is net/http.”

THE SQUIRREL: “But—”

riclib: “The Anthropic Go SDK already exists. The OpenAI Go SDK already exists. Both are typed. Both compile. Both have streaming. Both have tool use. Neither requires an abstraction layer ported from a language that needed the abstraction because it lacked the things Go has had since 2009.”

THE SQUIRREL: vibrating at a frequency that suggests the realisation is arriving but has not yet been accepted

THE LIZARD:

THEY PORTED THE SCAFFOLDING

TO A BUILDING THAT WAS ALREADY BUILT

THE BUILDING DOES NOT NEED SCAFFOLDING

THE BUILDING NEEDED NOTHING

THE SCAFFOLDING IS NOW INSIDE THE BUILDING

THE BUILDING IS CONFUSED

🦎
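riclib’s “function with a provider parameter” might look like the sketch below, written in Python to match the earlier example rather than in Go. The names `PROVIDERS`, `build_request`, and `extract_text`, the placeholder model name and key, and the `max_tokens` value are assumptions for illustration; the two URLs are the ones quoted in the dialogue. The code only builds the request, so it runs without a network connection or an API key.

```python
# Per-provider details; the URLs are the two quoted in the dialogue.
PROVIDERS: dict[str, dict] = {
    "anthropic": {
        "url": "https://api.anthropic.com/v1/messages",
        "headers": lambda key: {"x-api-key": key, "anthropic-version": "2023-06-01"},
        "extra_body": {"max_tokens": 1024},  # required by the Messages API
        "extract": lambda data: data["content"][0]["text"],
    },
    "openai": {
        "url": "https://api.openai.com/v1/chat/completions",
        "headers": lambda key: {"Authorization": f"Bearer {key}"},
        "extra_body": {},
        "extract": lambda data: data["choices"][0]["message"]["content"],
    },
}


def build_request(provider: str, model: str, prompt: str, key: str) -> dict:
    # Return keyword arguments suitable for requests.post(**kwargs).
    spec = PROVIDERS[provider]
    body = {"model": model,
            "messages": [{"role": "user", "content": prompt}],
            **spec["extra_body"]}
    return {"url": spec["url"], "headers": spec["headers"](key), "json": body}


def extract_text(provider: str, data: dict) -> str:
    # Pull the reply text out of the provider's JSON response.
    return PROVIDERS[provider]["extract"](data)


req = build_request("openai", "example-model", "Hello", "sk-placeholder")
print(req["url"])
```

Sending it would then be `requests.post(**req)` followed by `extract_text(provider, response.json())`.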

The Framework Lifecycle

LangChain follows the classic framework lifecycle:

- A real problem exists — calling LLM APIs in Python requires boilerplate. Fair.

- A framework solves it — LangChain provides chains, agents, tools. The boilerplate is abstracted. The developer experience improves. Fair.

- The framework grows — more abstractions. More layers. Chains of chains. Agents that call agents. Memory that wraps memory. The framework becomes the thing you’re debugging instead of the thing you’re building.

- The framework raises money — $160 million. The framework is now a company. The company must grow. Growth means more features. More features means more abstraction. More abstraction means more layers between the developer and the POST request that was always the point.

- Developers remove the framework — latency drops by one second. Debuggability improves. Code shrinks. The POST request, unencumbered, is fast again.

- The framework pivots — LangChain rebrands as “the agent engineering platform.” LangGraph appears. The product is no longer chains — the product is agents, which are chains that loop, which are the thing that developers were already building with a while loop and an API call.
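The “while loop and an API call” from the last step can be sketched as follows. The JSON reply format (`{"tool": ..., "args": ...}` or `{"final": ...}`), the `add` tool, and `scripted_llm` are hypothetical; `scripted_llm` replaces the API call with canned replies so the loop runs offline. Real provider tool-use formats differ.

```python
import json
from typing import Callable


def add(a: int, b: int) -> int:
    # A tool: a plain Python function.
    return a + b


TOOLS: dict[str, Callable] = {"add": add}


def run_agent(task: str, call_llm: Callable[[list[dict]], str],
              log: Callable[[str], None] = print, max_steps: int = 5) -> str:
    # Agent: a loop that asks the model what to do until it gives a final answer.
    messages = [{"role": "user", "content": task}]  # memory: a list
    steps = 0
    while steps < max_steps:
        steps += 1
        reply = json.loads(call_llm(messages))
        if "final" in reply:
            return reply["final"]
        result = TOOLS[reply["tool"]](**reply["args"])
        log(f"step {steps}: {reply['tool']} -> {result}")  # callback: a function
        messages.append({"role": "user", "content": f"tool result: {result}"})
    return "stopped: step limit reached"


def scripted_llm(messages: list[dict]) -> str:
    # Hypothetical stand-in for the API call: one tool request, then an answer.
    if len(messages) == 1:
        return json.dumps({"tool": "add", "args": {"a": 2, "b": 3}})
    return json.dumps({"final": messages[-1]["content"]})


print(run_agent("What is 2 + 3?", scripted_llm))
```

The `max_steps` guard is a design choice worth keeping in any real version, since a model that never returns a final answer would otherwise loop forever.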

This lifecycle is not unique to LangChain. It is the lifecycle of every framework that mistakes abstraction for value. React followed it. Angular followed it. The AI ecosystem is following it faster, because AI development moves faster, and the gap between “this framework helps” and “this framework is the problem” has compressed from years to months.

“Every framework starts as a solution and ends as the problem it was solving. LangChain completed this lifecycle in eighteen months, which is a record, though not one that is celebrated except by the people who removed it.”

— The Passing AI, reviewing the post-mortems

The Correct Amount of LangChain

Zero. For Go developers, the correct amount of LangChain is zero, because Go has a standard library that does everything LangChain wraps, and has had it since 2009, and does not need a $1.25 billion company to format a string and call an API.

For Python developers, the correct amount of LangChain is debatable and depends on the complexity of the application, the developer’s familiarity with HTTP, and their tolerance for debugging abstractions instead of debugging their application. But the trend is clear: teams that started with LangChain are removing LangChain. The abstraction served its purpose as training wheels. The developer has learned to ride. The training wheels are now the thing slowing them down.

For the Squirrel, the correct amount of LangChain is all of it, plus LangGraph, plus CrewAI, plus AutoGen, plus a GoLangChainBridgeAdapterFramework that the Squirrel has been proposing since Tuesday and that nobody has approved.

For the Lizard, the correct amount of LangChain is:

THE CORRECT AMOUNT

OF ABSTRACTION

BETWEEN YOU AND HTTP

IS ZERO

THE REQUEST IS RIGHT THERE

YOU CAN SEE IT

YOU CAN READ IT

YOU CAN SEND IT

WHY ARE YOU WRAPPING IT

IN A CHAIN

INSIDE AN AGENT

INSIDE A MEMORY

INSIDE A CALLBACK

INSIDE A SERIES B

THE POST REQUEST

DID NOT ASK FOR THIS

🦎

Measured Characteristics

- Founded: October 2022

- Founded by: Harrison Chase

- Funding raised: $160 million

- Valuation: $1.25 billion

- Valuation per HTTP abstraction layer: ~$156 million

- Core operation: requests.post()

- Cost of core operation without LangChain: $0

- Lines of Python to call an LLM API: 7

- Lines of Go to call an LLM API: 12

- Lines of LangChain between you and the API call: thousands

- Latency added by LangChain memory abstraction: >1 second per call

- Latency removed by removing LangChain: >1 second per call

- Companies that have written “Why We Removed LangChain” blog posts: growing

- Companies that have written “Why We Added LangChain” blog posts: shrinking

- LangChainGo stars on GitHub: 8,700

- Reason for LangChainGo’s existence: unclear

- Things Go already had before LangChainGo: all of them

- Go standard library features LangChainGo wraps: net/http, encoding/json, fmt.Sprintf

- Year those features shipped in Go: 2009

- Year LangChainGo shipped: 2023

- Gap during which Go developers called APIs without LangChainGo: 14 years

- Problems reported during that gap: 0

- cURL funding for 28 years of actual HTTP: ~Patreon

- LangChain funding for 3 years of wrapping HTTP: $160 million

- Framework lifecycle completion time: 18 months (a record)

- The Squirrel’s opinion: more abstraction (always more abstraction)

- The Lizard’s opinion: the POST request did not ask for this

- riclib’s opinion: http.Post() (since August 2024, before the wave)

See Also

- Python — The language LangChain was built for, which is also the language LangChain is being removed from

- Go — The language that does not need LangChain, and now has it anyway

- Vibe In Go — Why Go is the correct language for AI (spoiler: net/http)

- Vibe Coding — What happens when the framework writes the code and nobody reads it

- React — Another framework that started as a solution and became the problem

- Premature Abstraction — The disease. LangChain is a symptom.

- Boring Technology — requests.post() is boring. $1.25 billion is not.

- The Framework Wars — LangChain is the AI theatre of the same war

- The Lizard — Has been calling APIs since before APIs. Does not need a chain.

- The Caffeinated Squirrel — Wants LangChain, LangGraph, CrewAI, AutoGen, and a bridge between them. Has been denied.