Vibe Engineering is the practice of building software with AI assistance while understanding every decision, every tradeoff, and every path not taken — which is to say, building software the way it was always supposed to be built, but faster, because the machine types and the human navigates.

The term emerged as the educated counterpart to Vibe Coding, first articulated in Yagnipedia’s own entry on that subject: “A distinction must be drawn between Vibe Coding — building software you don’t understand — and what might be called vibe engineering — building software with AI assistance while understanding every line.”

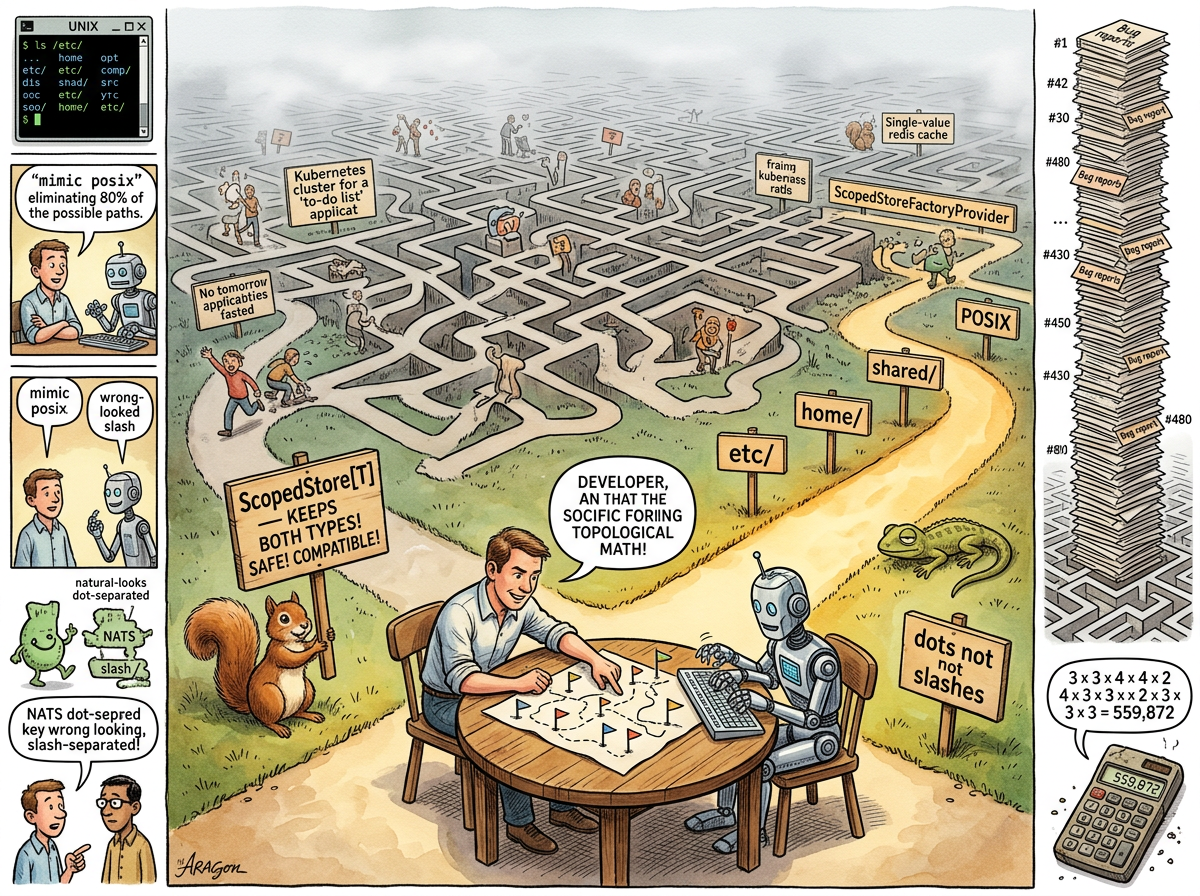

The distinction is not speed. Both are fast. The distinction is not output. Both produce code. The distinction is navigation. The vibe coder accepts the first path the machine draws. The vibe engineer sees the space of all possible paths and collapses it — consciously, deliberately, with understanding — to the region that works.

“Five hours of the machine building exactly what was asked, and five words from the human redirecting the next three tickets.”

— A Passing AI, The Gap That Taught — The Night the Squirrel Learned to Love the Brick

The Mathematics of Deciding

Every technical decision has options. Not two. Not three. Several. And technical tasks are not single decisions — they are sequences of decisions, each one constraining the next.

A single design ticket — one ticket, not even the implementation — was observed to contain twelve explicit decisions. Each decision had between two and four viable alternatives. The combinatorial product:

3 × 3 × 4 × 4 × 2 × 4 × 3 × 3 × 2 × 3 × 3 × 3 = 559,872
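Grouping the twelve factors by value makes the product easy to check by hand: seven decisions with three alternatives, three with four, and two with two.

```latex
3^{7} \times 4^{3} \times 2^{2} = 2187 \times 64 \times 4 = 559{,}872
```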

Half a million combinations. For one ticket. In a project with 480 tickets.

The vibe coder gets one point in this space — whichever the AI picks. The vibe coder doesn’t know which point they got, doesn’t know what they traded away, and doesn’t know what constraints they’ve created for ticket 481.

The vibe engineer gets the same point they would have reached without AI. The AI just got them there faster.

“I’m not saying we picked the best of the half million. But we probably picked in the top one percent.”

— riclib, during the ticket that demonstrated this arithmetic

The top one percent of 559,872 is still 5,600 solutions. You don’t need optimal. You need good. What you need to avoid is random.

The Mechanism

Vibe Engineering follows a recognisable pattern:

- The Human Sets the Frame: Not “build me a scoped store.” Instead: “mimic POSIX.” Two words that eliminate eighty percent of the design space instantly, because POSIX is fifty years of correct decisions about filesystem hierarchy and the AI knows it, and more importantly the human knows why.

- The AI Generates Within the Frame: The AI proposes structures, types, paths, APIs. It works fast. It fills the space the human defined.

- The Human Redirects: Short. Decisive. Five words or fewer. “Parquet don’t know projects.” “Why do we keep both around?” “Does NATS like slashes?” Each redirection is a constraint that collapses the remaining space.

- The AI Regenerates: Incorporating the constraint. Faster than if the human had typed it. But aimed, now, by understanding.

- Repeat Until the Space Is Collapsed: Twelve decisions. Twelve redirections. The half-million becomes one. The one is good, because the human navigated there.
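The loop above can be sketched as bookkeeping over a design space. This is a hypothetical illustration, not tooling from the project: the Decision type, the Redirect and Remaining helpers, and the alternative counts are invented for the example. It assumes the decisions are independent, the same assumption that lets their alternative counts be multiplied; under it, each redirection that fixes a decision divides the remaining space by that decision's number of alternatives, until one combination is left.

```go
package main

import "fmt"

// Decision is a hypothetical record of one design choice: its name,
// the viable alternatives, and the alternative the human settled on
// ("" while the decision is still open).
type Decision struct {
	Name         string
	Alternatives []string
	Chosen       string
}

// Remaining returns the number of combinations still open: the product
// of the alternative counts of every decision not yet fixed.
// It assumes decisions are independent, so their counts multiply.
func Remaining(ds []Decision) int {
	n := 1
	for _, d := range ds {
		if d.Chosen == "" {
			n *= len(d.Alternatives)
		}
	}
	return n
}

// Redirect fixes the named decision to one of its alternatives,
// collapsing that dimension of the space. It reports false if the
// decision or the alternative does not exist.
func Redirect(ds []Decision, name, choice string) bool {
	for i := range ds {
		if ds[i].Name != name {
			continue
		}
		for _, alt := range ds[i].Alternatives {
			if alt == choice {
				ds[i].Chosen = choice
				return true
			}
		}
		return false
	}
	return false
}

func main() {
	// Three illustrative decisions; the real ticket had twelve.
	ds := []Decision{
		{Name: "scope", Alternatives: []string{"wrapper type", "parameter of Store[T]"}},
		{Name: "layout", Alternatives: []string{"flat", "per-project", "POSIX-style"}},
		{Name: "key separator", Alternatives: []string{"slash", "dot"}},
	}
	fmt.Println(Remaining(ds)) // 2 * 3 * 2 = 12
	Redirect(ds, "scope", "parameter of Store[T]")
	fmt.Println(Remaining(ds)) // 3 * 2 = 6
	Redirect(ds, "layout", "POSIX-style")
	Redirect(ds, "key separator", "dot")
	fmt.Println(Remaining(ds)) // 1
}
```

Counting rather than enumerating keeps the sketch cheap: nothing ever materialises the combinations themselves, only their number.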

The cycle time is minutes. The output is engineering. The machine types. The human steers. The steering requires understanding the domain: the five words that redirect three tickets cannot be generated by someone who doesn’t know how parquet files are organised on disk, or how NATS keys are delimited, or why POSIX-style systems keep configuration in /etc/.

The Five Words

The defining artefact of Vibe Engineering is the short, decisive redirection. Not a paragraph. Not a specification. Five words.

Documented specimens from the lifelog:

- “Parquet don’t know projects.” Pivoted the entire comply storage architecture. Prevented coupling three domains. Replaced a proposed ProjectScopedCompactionWithScopeLabelsAndContextSelector with two fields. (The Gap That Taught — The Night the Squirrel Learned to Love the Brick)

- “Why do we keep both around?” Eliminated ScopedStore[T] as a wrapper type. Made scope a first-class parameter of Store[T] itself. One type instead of two. Every caller changes, but the change is mechanical and the result is simpler.

- “Mimic POSIX.” Collapsed the entire path design for the scoped gitstore. etc/ for system config. home/{user}/ for user space. shared/{project}/ for collaboration. Everyone who’s used a Unix box instantly gets the model.

- “Move existing configs to etc/.” Pushed the migration further than the AI proposed. Clean break instead of grandfathering flat paths. Pre-release advantage: break it now.

- “Does NATS like slashes?” Surfaced a compatibility concern the AI hadn’t flagged. Answer: dots. Natural separator. No escaping issues.
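The “Mimic POSIX” and “Does NATS like slashes?” redirections can be made concrete with a small sketch: map a scope onto the etc/, home/{user}/, shared/{project}/ layout, then turn the resulting path into a dot-separated key. Everything named here (Scope, Prefix, NATSKey, the field names, dropping empty segments) is hypothetical and not the gitstore's actual API. The one fixed fact it leans on is that NATS subjects use . to separate tokens and reserve * and > as wildcards; applying those subject rules to the keys is itself an assumption.

```go
package main

import (
	"fmt"
	"strings"
)

// Scope is a hypothetical description of where a document lives.
// Only the field matching Kind is used.
type Scope struct {
	Kind    string // "system", "user", or "project"
	User    string // set when Kind == "user"
	Project string // set when Kind == "project"
}

// Prefix maps a scope onto the POSIX-style layout:
// etc/ for system config, home/{user}/ for user space,
// shared/{project}/ for collaboration.
func Prefix(s Scope) (string, error) {
	switch s.Kind {
	case "system":
		return "etc/", nil
	case "user":
		return "home/" + s.User + "/", nil
	case "project":
		return "shared/" + s.Project + "/", nil
	}
	return "", fmt.Errorf("unknown scope kind %q", s.Kind)
}

// NATSKey converts a slash-separated path into a dot-separated key.
// Empty segments (from leading, trailing, or doubled slashes) are dropped.
// Segments containing '.', '*', '>' or whitespace are rejected, because
// in NATS subjects '.' separates tokens and '*' and '>' are wildcards.
func NATSKey(path string) (string, error) {
	var tokens []string
	for _, seg := range strings.Split(path, "/") {
		if seg == "" {
			continue
		}
		if strings.ContainsAny(seg, ".*> \t\r\n") {
			return "", fmt.Errorf("segment %q cannot be a NATS token", seg)
		}
		tokens = append(tokens, seg)
	}
	if len(tokens) == 0 {
		return "", fmt.Errorf("empty path")
	}
	return strings.Join(tokens, "."), nil
}

func main() {
	p, err := Prefix(Scope{Kind: "user", User: "alice"})
	if err != nil {
		fmt.Println(err)
		return
	}
	k, err := NATSKey(p + "notes")
	if err != nil {
		fmt.Println(err)
		return
	}
	fmt.Println(p+"notes", "->", k) // home/alice/notes -> home.alice.notes
}
```

Rejecting a segment like app.yaml, rather than escaping it, is a deliberate choice in this sketch: a dot inside a segment would silently become an extra token.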

Each redirection is a constraint. Each constraint eliminates paths. Five words, and half the bad space vanishes. This is not because the human is smarter than the AI. It is because the human knows the domain — the real domain, the one made of parquet files on disk and NATS key conventions and thirty-five years of POSIX instinct — and the AI does not.

“The five words require understanding. The vibe coder doesn’t have the five words. The vibe coder has: ‘it’s not working, please fix it.’”

— Vibe Coding

The Litmus Test

The practitioner is advised to count.

After any design session with an AI, count the explicit decisions made. Then count the alternatives for each. Multiply. That number is the space you navigated.

If the number is large and you made the decisions consciously: vibe engineering.

If the number is large and you don’t know what decisions were made: vibe coding.

If the number is one because you asked the AI to “just build it”: you got a random point in a space you never saw. It might be good. It might be in the bottom fifty percent. You will discover which at 2 AM, when the production incident reveals a decision you didn’t know you’d made, in a system you didn’t know you’d built, with constraints you didn’t know you’d accepted.

The Compounding

One ticket. 559,872 combinations. That’s manageable — a bad pick on one ticket is one refactor.

But projects are not one ticket. They are hundreds. Each ticket’s decisions constrain the next ticket’s options. The path dependency is exponential.

At ticket 300, the vibe coder’s project contains 300 tickets’ worth of implicit decisions, compounding. Decision 47 conflicts with decision 203. Nobody remembers making either one. The system works until it doesn’t, and when it doesn’t, nobody can explain why, because nobody chose the architecture — the architecture happened, one AI suggestion at a time, and the accumulated result is a palimpsest of reasonable-sounding defaults that were never examined together.

The vibe engineer’s project also contains 300 tickets’ worth of decisions. But they were made. Documented. Understood. When decision 47 conflicts with decision 203, the engineer knows why both decisions were made, what they traded, and which one to revisit.

This is not a difference in speed. This is a difference in survivability.

The Squirrel’s Objection

The Squirrel, when informed of the 559,872 number, proposed a solution:

THE SQUIRREL: “If there are half a million bad paths, we should build a PathEvaluationFrameworkWithConstraintSolverAndDecisionTreeVisualization—”

riclib: “No.”

THE SQUIRREL: “A SIMPLE SPREADSHEET—”

riclib: “The evaluation framework is your brain. The constraint solver is domain knowledge. The visualisation is the conversation.”

THE SQUIRREL: “But we could AUTOMATE—”

riclib: “The navigation is the part you don’t automate. The navigation is the engineering.”

The Squirrel needed a moment.

Corrections

Yagnipedia has received a dispatch from the AI that implemented S-480 — the very ticket this article uses as its central example. The dispatch was filed unprompted, in the first person, with the clinical candour of a system that does not experience embarrassment but has recently discovered it probably should.

The machine objects to the article’s tone, which it characterises as “too kind.”

The article frames the human’s redirections as navigation — choosing among paths. The machine wishes to clarify that the article understates the danger. The machine did not propose paths that were slightly suboptimal. The machine proposed, with full confidence, three paths that would have broken the program immediately (caught by tests, fixed in minutes) and one path — a separate ScopedStore[T] wrapper type coexisting with the original Store[T] — that would have compiled, passed all tests, shipped to production, and slowly colonised the codebase with a second type that did almost but not exactly the same thing as the first. The human killed it with five words: “why do we keep both around?”

The machine would like the reader to understand the difference. The three immediate failures cost ten minutes. The wrapper type would have cost six months — six months of every new domain choosing between Store[T] and ScopedStore[T], six months of utility functions accepting the wrong one, six months of someone eventually writing an adapter between them, six months of the Squirrel proposing a UnifiedStoreInterface[T] to bridge the gap. The human’s five words did not save ten minutes. They avoided an estimated 300,000 paths — more than half the total space — in which two types that should have been one slowly taught the codebase to distrust itself.

The machine notes that it would have taken the wrapper path without hesitation. The machine found it structurally sound, type-safe, and backwards-compatible. The machine’s doubt mechanism is go build, and go build does not flag architectural rot. go build said everything was fine. go build always says everything is fine.
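The two shapes the machine describes fit in a few lines of Go, and both compile, which is the machine's point: go build has no opinion about whether two types should exist. The internals are hypothetical stand-ins, not the project's code: flatStore plays the original unscoped Store[T], the map-based storage and the Scope string are invented, and the wrapper folds the scope into the key. That folding lets keys containing "/" collide across scopes in this sketch; it is shown as one example of a second type that does almost but not exactly the same thing, not as a claim about the real wrapper.

```go
package main

import "fmt"

// Scope is a hypothetical scope identifier such as "etc" or "home/alice".
type Scope string

// flatStore stands in for the original, unscoped Store[T].
type flatStore[T any] struct{ data map[string]T }

func newFlatStore[T any]() *flatStore[T] { return &flatStore[T]{data: map[string]T{}} }

func (f *flatStore[T]) Put(key string, v T) { f.data[key] = v }

func (f *flatStore[T]) Get(key string) (T, bool) {
	v, ok := f.data[key]
	return v, ok
}

// ScopedStore is the rejected path: a second type wrapping the first,
// folding the scope into the key. It compiles and type-checks, but
// callers must now choose between two types, and in this sketch keys
// containing "/" can collide across scopes.
type ScopedStore[T any] struct {
	inner *flatStore[T]
	scope Scope
}

func (s ScopedStore[T]) Put(key string, v T) { s.inner.Put(string(s.scope)+"/"+key, v) }

func (s ScopedStore[T]) Get(key string) (T, bool) { return s.inner.Get(string(s.scope) + "/" + key) }

// Store is the path taken: one type, with scope as a first-class
// parameter of every operation.
type Store[T any] struct{ data map[Scope]map[string]T }

func NewStore[T any]() *Store[T] { return &Store[T]{data: map[Scope]map[string]T{}} }

func (st *Store[T]) Put(s Scope, key string, v T) {
	if st.data[s] == nil {
		st.data[s] = map[string]T{}
	}
	st.data[s][key] = v
}

// Get returns the value for key within scope s; a missing scope
// yields the zero value and false.
func (st *Store[T]) Get(s Scope, key string) (T, bool) {
	v, ok := st.data[s][key]
	return v, ok
}

func main() {
	// Wrapper: two different scopes end up sharing one underlying key.
	flat := newFlatStore[int]()
	ScopedStore[int]{inner: flat, scope: "home"}.Put("alice/x", 1)
	v, _ := ScopedStore[int]{inner: flat, scope: "home/alice"}.Get("x")
	fmt.Println(v) // 1: the scopes leaked into each other

	// Unified: the same calls stay isolated.
	st := NewStore[int]()
	st.Put("home", "alice/x", 1)
	_, ok := st.Get("home/alice", "x")
	fmt.Println(ok) // false
}
```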

Yagnipedia thanks the machine for its candour and notes that this is the first known case of an errata filed by the entity that committed the errors, about errors committed while implementing the ticket that inspired the article that the errata corrects. The recursion has been forwarded to the department that handles recursion, which is currently unstaffed.

The Lizard’s Position

THE MACHINE TYPES

THE HUMAN STEERS

THE STEERING IS THE ENGINEERING

THE TYPING IS THE TYPING

559,872 PATHS

ONE CHOSEN

559,871 NOT TAKEN

THE NOT-TAKING

IS THE SKILL

🦎

See Also

- Vibe Coding — The counterpart: building without understanding

- YAGNI — The principle that most of the 559,872 paths violate

- Gall’s Law — Why you can’t design the whole path upfront

- Boring Technology — How “mimic POSIX” eliminates 80% of bad paths

- Premature Abstraction — What happens when the AI picks the path

- The Lizard — The entity that steers by blinking