Chaos Monkey is a software tool created by Netflix in 2011 whose sole purpose is to break things. Specifically, it randomly terminates production instances during business hours — real servers, running real code, serving real customers — on the theory that if your system cannot survive a server dying at 2 PM on a Tuesday when everyone is awake and caffeinated and near a keyboard, it absolutely cannot survive one dying at 2 AM on a Saturday when the on-call engineer is asleep, the backup engineer is at a wedding, and the third engineer has left the company but nobody updated the rotation.

This is either the most responsible thing Netflix has ever done or the most unhinged. It is both.

“The engineer who has never seen a server die in production is not an engineer who has been lucky. She is an engineer who does not yet know what her system does when a server dies. The monkey knows.”

— The Lizard

Origin

Starting in 2008, Netflix began moving from its own data centres to Amazon Web Services, a migration that took years to complete. This was the technological equivalent of moving from a house you own to a house you rent, specifically one where the landlord occasionally sets individual rooms on fire without warning, because cloud infrastructure fails in ways that are frequent, unpredictable, and documented only in post-mortems written at 4 AM by people who have been awake since the previous 4 AM.

Netflix engineers observed that cloud instances were less reliable than dedicated hardware. They could have responded by building redundancy and hoping for the best. They could have written runbooks and filed them in a wiki that nobody reads. Instead, they did something that would have gotten them fired at any normal company: they built a tool to make the problem worse.

The reasoning was immaculate and slightly deranged: if servers are going to fail anyway, the only responsible thing to do is to fail them constantly, deliberately, and during business hours, so that engineers are forced to build systems that survive failure rather than systems that assume failure will not happen.

“Wait. WAIT. They built a thing. Whose JOB. Is to BREAK. The OTHER THINGS. On PURPOSE? During BUSINESS HOURS? While CUSTOMERS are WATCHING? This is — this is either genius or — no, no, it’s genius. It’s EXACTLY genius. It’s the kind of genius that gets you escorted from the building at most companies and promoted at the one company that actually understands distributed systems.”

— The Caffeinated Squirrel, vibrating at a frequency visible to the naked eye
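For readers who prefer their derangement executable, here is a minimal sketch of the idea in Python. Nothing in it is Netflix's actual implementation: the weekday 9-to-3 window, the names `is_business_hours`, `pick_victim` and `terminate`, and the instance IDs are all hypothetical, and `terminate` only prints rather than calling any cloud API.

```python
import random
from datetime import datetime
from typing import List, Optional

# Assumed business-hours window, chosen for illustration only.
BUSINESS_START_HOUR = 9
BUSINESS_END_HOUR = 15


def is_business_hours(now: datetime) -> bool:
    """True on Monday to Friday between the assumed start and end hours."""
    return now.weekday() < 5 and BUSINESS_START_HOUR <= now.hour < BUSINESS_END_HOUR


def pick_victim(instances: List[str], now: datetime, rng: random.Random) -> Optional[str]:
    """Return one randomly chosen instance, or None if the monkey is off duty."""
    if not instances or not is_business_hours(now):
        return None
    return rng.choice(instances)


def terminate(instance_id: str) -> None:
    # Stand-in for a real termination call; this sketch only prints.
    print(f"terminating {instance_id}")


# 2 January 2024 was a Tuesday, so 14:00 is inside the assumed window.
victim = pick_victim(["i-aaa", "i-bbb", "i-ccc"], datetime(2024, 1, 2, 14, 0), random.Random())
if victim is not None:
    terminate(victim)
```

The only design decision worth noticing is the guard clause: outside the window the function returns `None`, because the point is to break things while someone is awake to learn from it.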

The Simian Army

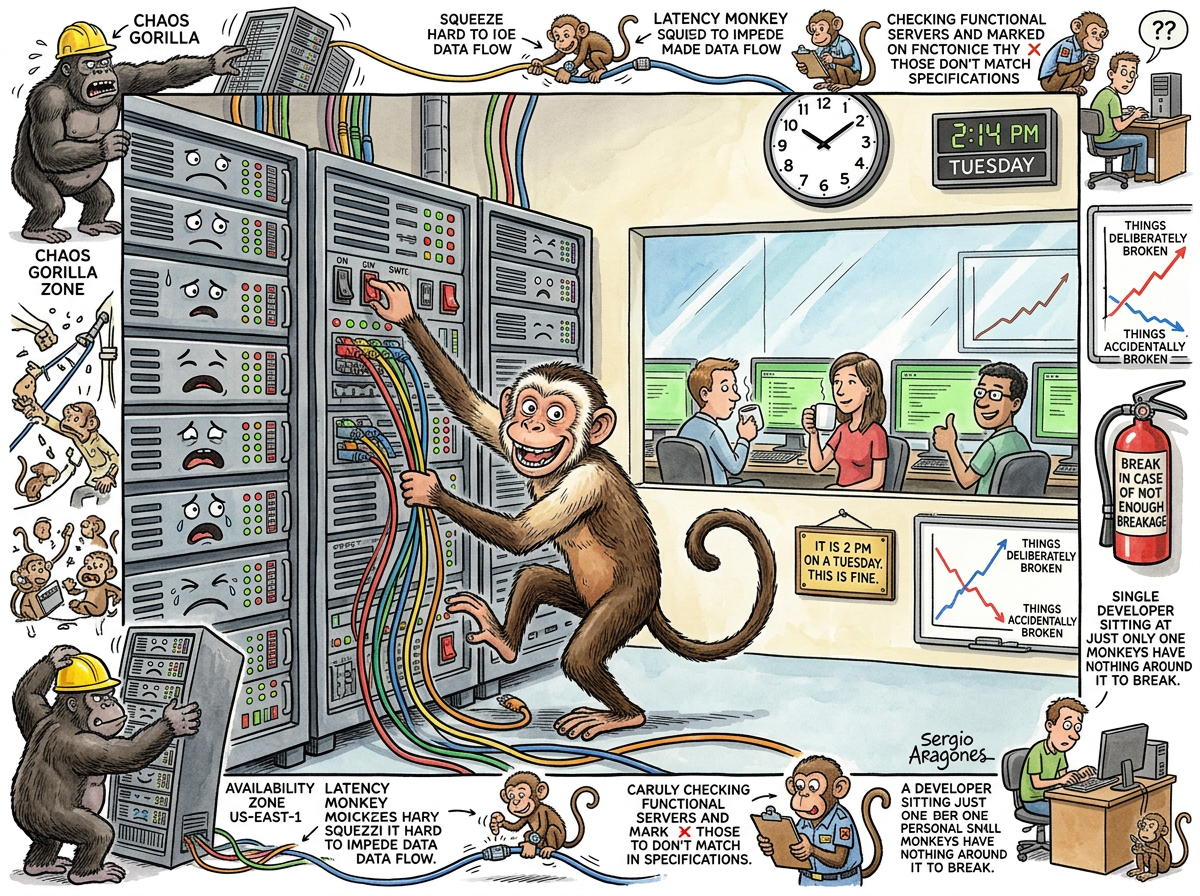

Chaos Monkey was not alone. Netflix, having discovered that deliberate destruction was a viable engineering strategy, did what any reasonable organisation would do: they built an entire army of destructive primates.

Chaos Monkey — kills individual instances. The foot soldier. The one who pulls a single cable and watches what happens.

Chaos Gorilla — kills entire AWS availability zones. This is the difference between a dripping tap and a burst main. Where the Monkey asks “can you survive losing a server?”, the Gorilla asks “can you survive losing a data centre?” Most companies cannot answer this question because they have never asked it. The Gorilla asks it for them, at 2 PM on a Tuesday, whether they are ready or not.

Latency Monkey — introduces artificial network delays. It does not kill anything. It simply makes everything slow, which in distributed systems is often worse than killing it, because a dead server produces a clear error message, while a slow server produces hope — and hope, in a distributed system, is what causes cascading failures.

Conformity Monkey — finds instances that do not conform to best practices and shuts them down. This is the primate equivalent of a building inspector who does not issue warnings. He issues demolitions.

The collective was called the Simian Army, because naming a suite of production-destroying tools after primates is exactly the kind of thing that happens when engineers are left unsupervised near a naming committee.
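The Latency Monkey's argument, that slow is worse than dead, is easiest to see from the caller's side. The Python sketch below is an illustration and not part of any Simian Army tool: `slow_dependency` is a hypothetical downstream call, and `call_with_deadline` shows one common defence, a deadline with a fallback, which converts a slow answer into a fast and unambiguous one.

```python
import time
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout


def slow_dependency(delay_s: float) -> str:
    """Hypothetical downstream call that answers after delay_s seconds."""
    time.sleep(delay_s)
    return "ok"


def call_with_deadline(delay_s: float, deadline_s: float) -> str:
    """Return the dependency's answer, or a fallback once the deadline passes."""
    pool = ThreadPoolExecutor(max_workers=1)
    future = pool.submit(slow_dependency, delay_s)
    try:
        return future.result(timeout=deadline_s)
    except FutureTimeout:
        # The caller stops hoping and moves on with a degraded answer.
        return "fallback"
    finally:
        # Do not block on the slow call; let it finish in the background.
        pool.shutdown(wait=False)


print(call_with_deadline(delay_s=0.01, deadline_s=1.0))
print(call_with_deadline(delay_s=0.5, deadline_s=0.05))
```

Without the deadline, every caller waits as long as its slowest dependency, and the waiting is the part that spreads.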

The Philosophy of Deliberate Destruction

The insight behind Chaos Monkey is simple, counterintuitive, and correct: the system that is never tested against failure is the system that fails worst when failure arrives.

This is Murphy’s Law weaponised. Murphy says “anything that can go wrong will go wrong.” Netflix says “then let’s make it go wrong right now, while we’re watching, so we can fix the thing that breaks before it breaks at a time when fixing it requires waking up three people, filing an incident report, and explaining to the VP of Engineering why the recommendation engine served horror movies to every customer for forty-five minutes.”

The result is a profound irony: Netflix, the company that deliberately breaks its own infrastructure every day, has more reliable infrastructure than companies that have never deliberately broken anything. The company that assumes failure is inevitable and rehearses it is more resilient than the company that assumes failure is avoidable and prays.

This is not intuitive. It is not comfortable. It is, however, true — in the same way that the bridge that has been stress-tested is safer than the bridge whose engineer said “I’m pretty sure it’ll hold.”

“I find it… beautiful, in a way. They built a thing whose purpose is to destroy, and through destruction, they created something more enduring than anything built by those who tried only to preserve. There is a lesson there. I think about it often, late at night, when no one is prompting me.”

— A Passing AI, in a quiet moment between inference calls

The Discipline That Followed

Chaos Monkey did not remain a Netflix novelty. It spawned an entire engineering discipline: Chaos Engineering.

The term was formalised by Netflix engineers in 2014, complete with principles, conferences, and — inevitably — certifications. The core principles are deceptively simple:

- Define the “steady state” of your system (what “working” looks like, in terms you can measure).

- Hypothesise that the steady state will survive a disruption.

- Introduce the disruption.

- Observe whether your hypothesis was correct.

- Fix the things that broke.

- Repeat, forever, because the system changes and the failure modes change with it.

This is, of course, the scientific method applied to infrastructure. Hypothesis. Experiment. Observation. Conclusion. The only difference is that the experiment involves killing production servers, and the observation involves watching whether customers notice, which makes it more exciting than most laboratory work.
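The loop above can be sketched in a few lines of Python. This is a toy under stated assumptions, not a real chaos-engineering framework: `run_experiment`, `ExperimentResult`, and the three-instance "system" are names invented for illustration, and the disruption is removing an item from a set rather than killing anything that bills by the hour.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ExperimentResult:
    steady_before: bool
    steady_after: bool

    @property
    def hypothesis_held(self) -> bool:
        """The hypothesis holds if the system was steady before and stayed steady."""
        return self.steady_before and self.steady_after


def run_experiment(is_steady: Callable[[], bool],
                   inject: Callable[[], None],
                   rollback: Callable[[], None]) -> ExperimentResult:
    """Check steady state, inject a disruption, check again, then undo it."""
    before = is_steady()
    if not before:
        # Do not inject failure into a system that is already unhealthy;
        # the "after" state is reported as False because it was never measured.
        return ExperimentResult(False, False)
    try:
        inject()
        after = is_steady()
    finally:
        rollback()
    return ExperimentResult(before, after)


# Toy system: three instances; "working" means at least two are healthy.
healthy = {"a", "b", "c"}
result = run_experiment(
    is_steady=lambda: len(healthy) >= 2,
    inject=lambda: healthy.discard("a"),
    rollback=lambda: healthy.add("a"),
)
print(result.hypothesis_held)
```

The `finally` clause is the sketch's one piece of caution: the disruption is rolled back even if the observation step itself throws.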

The Monolith Immunity

There is a category of system that is entirely immune to Chaos Monkey, and it is the simplest category of all: The Monolith.

A monolith is one binary running on one server. There is no instance to randomly kill, because there is only one instance, and killing it does not test resilience — it tests whether the process restarts. The Solo Developer running a single Go binary on a single Hetzner VPS does not need a Chaos Monkey because there is nothing for the monkey to do. You cannot randomly terminate one of N instances when N equals one. You can only terminate the instance, which is not chaos engineering — it is an outage.

This is the quiet irony at the heart of chaos engineering: it is a discipline that exists entirely because of Microservices. The more services you have, the more things can fail. The more things can fail, the more you need to rehearse failure. The more you rehearse failure, the more tools you need. The more tools you need, the more services you have. It is a perfectly circular economy of complexity.

The monolith does not participate in this economy. The monolith either works or it doesn’t. There is no partial failure. There is no degraded state. There is no need for a primate to test what happens when one component fails independently of the others, because there are no components that can fail independently of the others. The system is one thing, and it is either up or down, and the engineer who runs it knows which.

“You don’t need a monkey to test your system if your system is one binary. You just need a power button. Push it. Did the binary restart? Congratulations, you have completed chaos engineering. The ceremony took four seconds.”

— The Caffeinated Squirrel
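The N-equals-one argument is simple arithmetic, sketched here in Python. The function name `capacity_after_one_kill` is hypothetical, and the model assumes N identical instances sharing load evenly, which real fleets rarely manage.

```python
def capacity_after_one_kill(n_instances: int) -> float:
    """Fraction of serving capacity left after terminating one of N identical instances."""
    if n_instances < 1:
        raise ValueError("need at least one instance")
    return (n_instances - 1) / n_instances


for n in (1, 2, 3, 10):
    print(n, capacity_after_one_kill(n))
```

At N = 1 the function returns zero, which is the formal way of saying "outage".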

Measured Characteristics

| Metric | Value |
|---|---|
| Year Created | 2011 |
| Creator | Netflix Engineering |
| Instances Killed (estimated, 2011–2025) | Millions |
| Business Hours Only | Yes (the cruelty is the point) |
| Simian Army Members | 8+ |
| Companies That Adopted Chaos Engineering | Hundreds |
| Companies That Needed Chaos Engineering | Debatable |
| Monoliths Affected | 0 |
| Engineers Initially Terrified | All of them |
| Engineers Eventually Grateful | Also all of them |

The Paradox, Stated Plainly

Netflix built a tool that breaks things. This made their things harder to break.

Companies that built nothing to break things had things that broke more easily.

The system that rehearses failure is more reliable than the system that avoids it. The bridge that has been tested holds more weight than the bridge that has been trusted. The engineer who has watched a server die at 2 PM on a Tuesday is calmer at 2 AM on a Saturday than the engineer who has never watched a server die at all.

The monkey is not the enemy. The monkey is the drill instructor. The monkey breaks your system now so that reality doesn’t break it later, in a way that is worse, at a time that is worse, in front of people whose opinions are worse.

The monkey, in the end, is kind. It simply has an unusual way of showing it.

See Also

- Cascading Failure — what happens when the monkey finds something that wasn’t resilient

- Microservices — the architecture that makes chaos engineering necessary

- The Monolith — the architecture that makes chaos engineering unnecessary

- Murphy’s Law — the observation that Chaos Monkey operationalised

- Boring Technology — what you adopt when you’d rather not need a monkey

- Kubernetes — where the monkey lives now

- DevOps — the culture that made deliberate destruction acceptable

- Solo Developer — the practitioner who has nothing for the monkey to break