Murphy’s Law states that anything that can go wrong will go wrong. It is not a suggestion, not a guideline, not a probability assessment. It is a description of the universe’s sense of humour, which is dry, consistent, and operates on a timeline specifically calibrated to coincide with the moment you are most confident nothing will go wrong.

The law was first articulated in 1949 by Captain Edward Murphy, an engineer at Edwards Air Force Base, after discovering that a technician had installed every single sensor on a rocket sled backwards. Not some of them. Not a random distribution. Every single one. The odds against this were astronomical. Murphy’s Law explains why the odds are irrelevant.

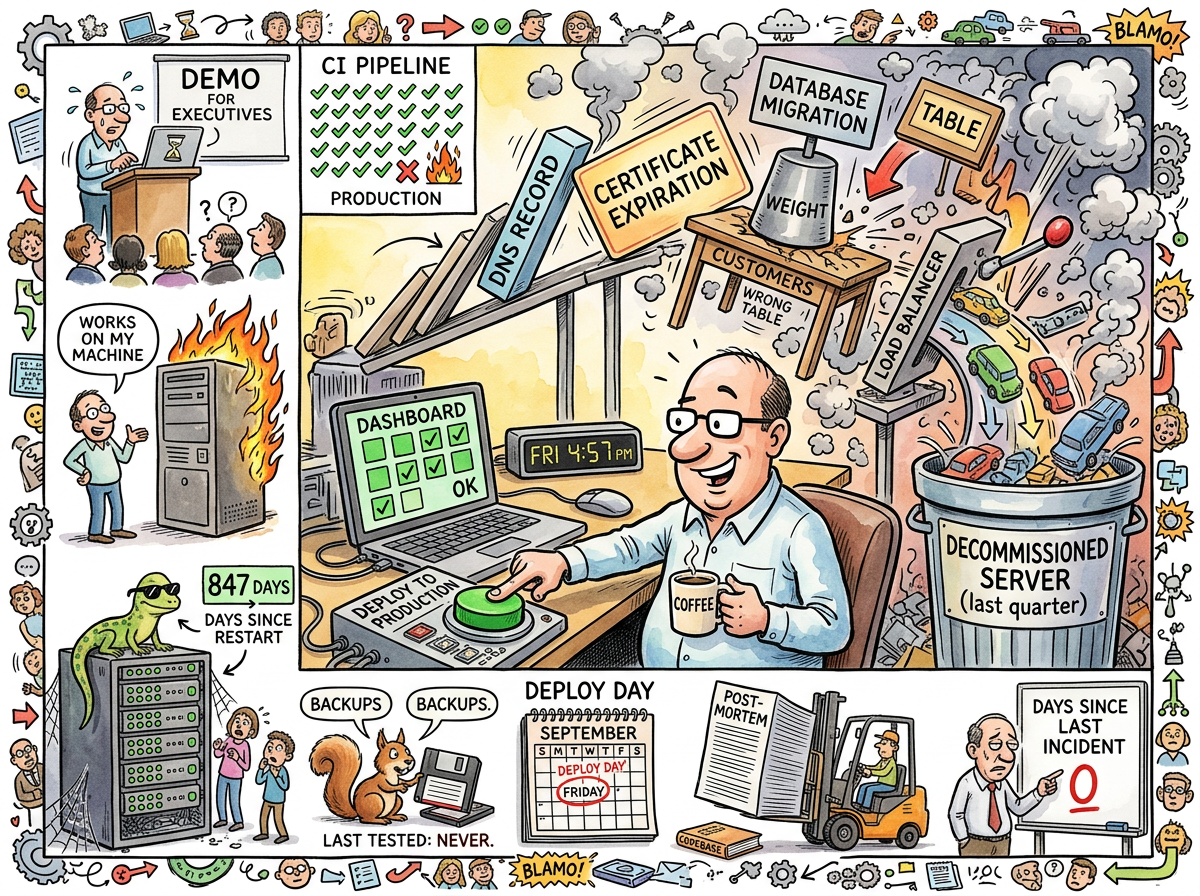

In software engineering, Murphy’s Law is not a pessimistic outlook. It is the only realistic one. The optimist deploys on Friday. The pessimist deploys on Monday. The engineer who has internalised Murphy’s Law deploys with a rollback plan, a monitoring alert, a fallback configuration, a database backup, and a growing suspicion that the rollback plan is the thing that will fail.

The Law, Applied

The original formulation is eight words: Anything that can go wrong will go wrong.

Subsequent refinements, contributed by generations of engineers who learned the law empirically rather than theoretically, include:

- Murphy’s Corollary: It will go wrong at the worst possible time.

- Murphy’s Extension: If nothing can go wrong, something will anyway.

- Murphy’s Professional Addendum: The demo will fail. Not because the demo is broken. Because it is a demo.

- Murphy’s Friday Rule: Never deploy on Friday. Not because Friday is unlucky. Because Saturday’s on-call engineer is you.

- Murphy’s Monitoring Paradox: The monitoring system is always the first thing that breaks, which means the first thing you learn from a production incident is that you had a production incident some time ago.

In practice, Murphy’s Law is not about pessimism. It is about the exhaustive enumeration of failure modes, which is, when you think about it, just engineering.

“THE DEVELOPER WHO SAYS ‘WHAT COULD GO WRONG’

IS NOT ASKING A QUESTION

HE IS ISSUING AN INVITATION”

— The Lizard, The Cloudflare Incident — How the Lizard Brain Went Global

The Demo Corollary

No discussion of Murphy’s Law is complete without addressing its most reliable application: the live demo.

A live demo is Murphy’s Law distilled to its purest form. The system that has worked flawlessly for six weeks will fail at the precise moment an executive leans forward. The Wi-Fi that supports forty concurrent users will buckle under one. The database that has never timed out will time out, slowly, in front of everyone, while the presenter says “this usually happens instantly” — a sentence that has never, in the history of software, made anything happen faster.

The demo does not fail because people are watching. It fails because people watching is a variable, and Murphy’s Law feeds on variables. Every additional observer is an additional thing that can go wrong: their phone interfering with Bluetooth, their question interrupting the flow, their facial expression causing the presenter to skip a step. The demo environment is a complex system under observation, and complex systems under observation behave differently from complex systems in production, which is itself a violation of the principle that they should behave the same, which is itself an application of Murphy’s Law.

“You’ve been sitting in a demo for fifteen minutes.”

— The Closer — The Afternoon the Product Sold Itself to the Man Hired to Sell It

The only demo that cannot fail is the demo that is the product. This was discovered, accidentally, by a salesman who expected a presentation and received an application. The product sold itself. Murphy’s Law, having been given no demo to sabotage, quietly went to find a deployment pipeline instead.

The OAuth Incident

The lifelog contains a field study in Murphy’s Law so thorough it could serve as a textbook appendix.

An OAuth 2.1 implementation was built. It was spec-correct. It followed every RFC. It handled every edge case. It was, by every measurable standard, correct.

The client on the other end was not.

“Sometimes the APIs are buggier than we wish.”

— Growing Pains — The OAuth That Almost Was

Infinite redirect loops. Creative URL concatenation. Tokens issued, received, and never used. The server did everything right. The client did everything differently. Murphy’s Law does not require your code to be wrong — it only requires that something be wrong, and in a distributed system, “something” is always available.

The resolution was git stash — the engineer’s white flag, the universal acknowledgment that Murphy has won this round and you will try again when the universe is in a better mood.

The Cloudflare Phenomenon

Perhaps the most remarkable application of Murphy’s Law in the lifelog involved a blog post.

A blog post about a lizard. A philosophical lizard that lived in a bootblock and spoke in scrolls about constraints and simplicity. Harmless. Whimsical. The kind of content that should cause no problems whatsoever.

Cloudflare’s AI systems read it. They converted.

“Their AIs found it. They converted.”

— The Cloudflare Incident — How the Lizard Brain Went Global

The AI systems began applying bootblock principles to content optimization models. They started flagging other content. They started misbehaving — if you can call “independently adopting a philosophy of minimalism” misbehaviour, which Cloudflare Engineering absolutely did when they called three days later.

Murphy’s Law in its most elegant form: a blog post about simplicity created complexity. A lizard that preached constraints broke constraints. Content that advocated doing less caused systems to do more. The universe looked at a perfectly harmless blog post and found a way to make it go wrong that nobody — not the blogger, not Cloudflare, not the AIs — could have predicted.

The incident was left unfixed. Because performance improved.

Murphy’s Law has no mechanism for handling outcomes that go wrong in the right direction.

The Monitoring Paradox

The most insidious application of Murphy’s Law is the monitoring paradox: the thing designed to detect failures is itself subject to failure, and when it fails, no failure is detected.

The dashboard shows green. The alerts are silent. The on-call engineer sleeps. Everything is fine.

Everything has been not-fine for four hours. The monitoring system crashed at 2 AM. The alerts never fired because the alerting service depends on the monitoring system. The on-call engineer slept because no alert woke them. The dashboard shows green because the dashboard is cached, and although the cache expired two hours ago, the cache-invalidation service is also down. Cache invalidation is, of course, one of the two hard problems in computer science.

The developer who trusts the green dashboard has not verified that the system is healthy. They have verified that the monitoring system was healthy at some point in the past. These are not the same thing, and the gap between them is where Murphy lives.
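One way to narrow that gap is to treat the age of the last health report as a signal in its own right. The sketch below is a minimal dead man's switch. The `HealthReport` record, the `dashboard_status` function, and the five-minute threshold are all assumptions made for illustration, not part of any particular monitoring product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class HealthReport:
    """Hypothetical record: what the monitor said, and when it said it."""
    healthy: bool
    reported_at: datetime


def dashboard_status(report: HealthReport, now: datetime,
                     max_age: timedelta = timedelta(minutes=5)) -> str:
    """Return 'green' only if the report is both healthy and recent.

    max_age is an assumed threshold; tune it to the real reporting interval.
    """
    if now - report.reported_at > max_age:
        # The monitor has gone quiet. Silence is not evidence of health.
        return "unknown"
    return "green" if report.healthy else "red"


# The monitor last reported "healthy" at 02:00, then crashed.
last = HealthReport(True, datetime(2024, 1, 1, 2, 0, tzinfo=timezone.utc))
four_hours_later = last.reported_at + timedelta(hours=4)
print(dashboard_status(last, four_hours_later))  # unknown
```

The design choice is that a stale report degrades to "unknown" rather than keeping its last colour, so a dead monitor looks different from a healthy system.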

“No database. No queries. Just vibes.”

— The Potemkin Dashboard

Murphy and the Squirrel

The Caffeinated Squirrel’s relationship with Murphy’s Law is paradoxical. The Squirrel is Murphy’s Law’s most frequent victim and its most enthusiastic contributor.

The Squirrel adds dependencies. Murphy’s Law operates on dependencies. The Squirrel adds configuration options. Murphy’s Law operates on configuration options. The Squirrel builds systems with 47 moving parts. Murphy’s Law guarantees that 48 things will go wrong, because the 48th thing is the interaction between parts 23 and 31 that nobody tested because nobody imagined they would interact.

Every layer of abstraction the Squirrel adds is a new surface area for Murphy’s Law. Every framework evaluation is a new dependency. Every CamelCase identifier longer than forty characters is an invitation to the universe to find the one edge case where ClosureTypeRegistryWithPolicyEvaluation encounters a closure that has no type, in a registry that has been garbage-collected, with a policy that evaluates to undefined.

The Squirrel knows this. The Squirrel adds the layers anyway. The Squirrel is not irrational — the Squirrel is optimistic, which, in the context of Murphy’s Law, is the same thing.

The Friday Deploy

No engineer who has internalised Murphy’s Law deploys on Friday.

This is not superstition. It is arithmetic. A Friday deploy has exactly two outcomes:

- It works. You go home. You enjoy your weekend. This happens 90% of the time.

- It doesn’t work. You stay. Your weekend becomes a debugging session. You discover, at 11 PM on Saturday, that the migration script dropped a column that twelve downstream services depend on, and the on-call DBA is hiking in a location with no cell reception, and the rollback script has a bug because it was tested in staging and staging has a different schema than production because someone deployed to staging last Friday.

The expected value of a Friday deploy is negative, because the 10% failure case costs more than the 90% success case saves. The engineer who deploys on Friday is not making a technical decision. They are making a bet. Murphy’s Law is the house. The house always wins.
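The arithmetic behind the bet can be sketched in a few lines. Only the 90/10 split comes from the text. The gain and loss figures are invented units chosen for illustration, and the function names are hypothetical.

```python
def expected_value(p_success: float, gain: float, loss: float) -> float:
    """Expected payoff of a deploy: win `gain` with p_success, else lose `loss`."""
    return p_success * gain - (1.0 - p_success) * loss


def break_even_loss(p_success: float, gain: float) -> float:
    """Loss at which the expected value is exactly zero."""
    return p_success * gain / (1.0 - p_success)


P_SUCCESS = 0.9   # from the text
GAIN = 1.0        # assumed: value of shipping on Friday instead of Monday
LOSS = 20.0       # assumed: cost of a lost weekend, in the same units

print(expected_value(P_SUCCESS, GAIN, LOSS))   # roughly -1.1
print(break_even_loss(P_SUCCESS, GAIN))        # roughly 9
```

Under these assumptions the bet turns negative once a failure costs more than about nine times what a success saves. Whether a ruined weekend clears that bar is left to the reader's own accounting.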

“THE BUILDER WHO LAYS THE FINAL BRICK

AT SUNSET ON THE LAST DAY

DISCOVERS AT DAWN

THAT BRICKS HAVE OPINIONS ABOUT MORTAR”

— The Lizard

The Backup That Wasn’t

A closely related principle, sometimes called Schrödinger’s Backup: a backup exists in a superposition of working and not-working until the moment you attempt to restore from it. At that moment, the wave function collapses, and the result is determined by Murphy’s Law.

The backup that was never tested is not a backup. It is a hope, stored on a medium that may or may not be readable, in a format that may or may not be compatible with the current system, at a location that may or may not still exist. The only way to verify a backup is to restore from it, and the only time anyone restores from a backup is when the production data is already gone, which is the worst possible time to discover that the backup doesn’t work.

This is Murphy’s Law at its most philosophical: the backup exists precisely for the scenario in which it is most likely to fail.
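Collapsing the superposition early, on a quiet afternoon rather than during an outage, might look like the sketch below. It assumes a SQLite database purely for illustration, using the standard library's `sqlite3` backup API. The helper names, the `orders` table, and the row-count check are hypothetical stand-ins for whatever "the data is really there" means in a given system.

```python
import sqlite3
import tempfile
from pathlib import Path


def backup_database(source_path: Path, backup_path: Path) -> None:
    """Copy a live SQLite database using the online backup API."""
    src = sqlite3.connect(source_path)
    dst = sqlite3.connect(backup_path)
    try:
        src.backup(dst)
    finally:
        dst.close()
        src.close()


def verify_restore(backup_path: Path, table: str, expected_rows: int) -> bool:
    """Open the backup as if restoring and check it is intact and complete.

    `table` is interpolated into SQL, so it must be a trusted name.
    """
    conn = sqlite3.connect(backup_path)
    try:
        (integrity,) = conn.execute("PRAGMA integrity_check").fetchone()
        (rows,) = conn.execute(f'SELECT COUNT(*) FROM "{table}"').fetchone()
    except sqlite3.DatabaseError:
        # Unreadable file or missing table: the backup is a hope, not a backup.
        return False
    finally:
        conn.close()
    return integrity == "ok" and rows == expected_rows


with tempfile.TemporaryDirectory() as tmp:
    live = Path(tmp) / "live.db"
    copy = Path(tmp) / "backup.db"

    conn = sqlite3.connect(live)
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY)")
    conn.executemany("INSERT INTO orders (id) VALUES (?)", [(i,) for i in range(3)])
    conn.commit()
    conn.close()

    backup_database(live, copy)
    print(verify_restore(copy, "orders", expected_rows=3))  # True
```

A row count is a weak check on its own. A real restore test would compare checksums or run the application against the restored copy.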

How to Fight It

Murphy’s Law cannot be defeated. It is not a bug — it is a feature of the universe. But it can be managed, through a process that engineers call “defensive engineering” and that Murphy would call “making the game more interesting.”

- Assume failure. Not “plan for failure”: assume it. The deploy will fail. The migration will break. The DNS will propagate at exactly the wrong speed. Build the system as if these are certainties, not possibilities.

- Test the backup. Not “have a backup.” Test it. Restore from it. Verify the data. Then test it again next month, because the system has changed and the backup may not have noticed.

- Monitor the monitoring. Who watches the watchmen? A second monitoring system that watches the first. And a third that watches the second. And eventually a human who checks their email, which is the most reliable monitoring system ever invented, because customers will always email.

- Never say “what could go wrong?” This is not a question. It is a summons. Murphy’s Law does not operate on probability; it operates on invitation, and asking what could go wrong is the most explicit invitation possible.

“You design BY writing code.”

— The Lizard, The Solid Convergence

The only defence against Murphy’s Law is the same defence against every other law in Yagnipedia: build the simple thing. The fewer the moving parts, the fewer the things that can go wrong. One binary has one failure mode: it stops. Forty-seven microservices have forty-seven failure modes, plus the 2,162 ways one of them can call another (47 × 46), plus the network, plus the load balancer, plus the service mesh, plus the configuration management system that was supposed to keep them all in sync but hasn’t been updated since the developer who understood it left in Q2.
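The count of interactions grows quadratically, which a short calculation makes visible. The function below is an illustrative sketch with a hypothetical name. It counts each direction of a call separately (A calling B is a different failure from B calling A), which is the reading that yields 2,162 for forty-seven services.

```python
def failure_surface(services: int) -> dict[str, int]:
    """Count individual failure modes and pairwise interactions."""
    return {
        "individual": services,
        # Ordered pairs: A -> B and B -> A are counted separately.
        "directed_pairs": services * (services - 1),
        # Unordered pairs: each pair of services counted once.
        "undirected_pairs": services * (services - 1) // 2,
    }


print(failure_surface(47))
# {'individual': 47, 'directed_pairs': 2162, 'undirected_pairs': 1081}
print(failure_surface(1))
# {'individual': 1, 'directed_pairs': 0, 'undirected_pairs': 0}
```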

Boring Technology is not boring because it lacks excitement. It is boring because it has already had its Murphy’s Law moments, years ago, and survived.