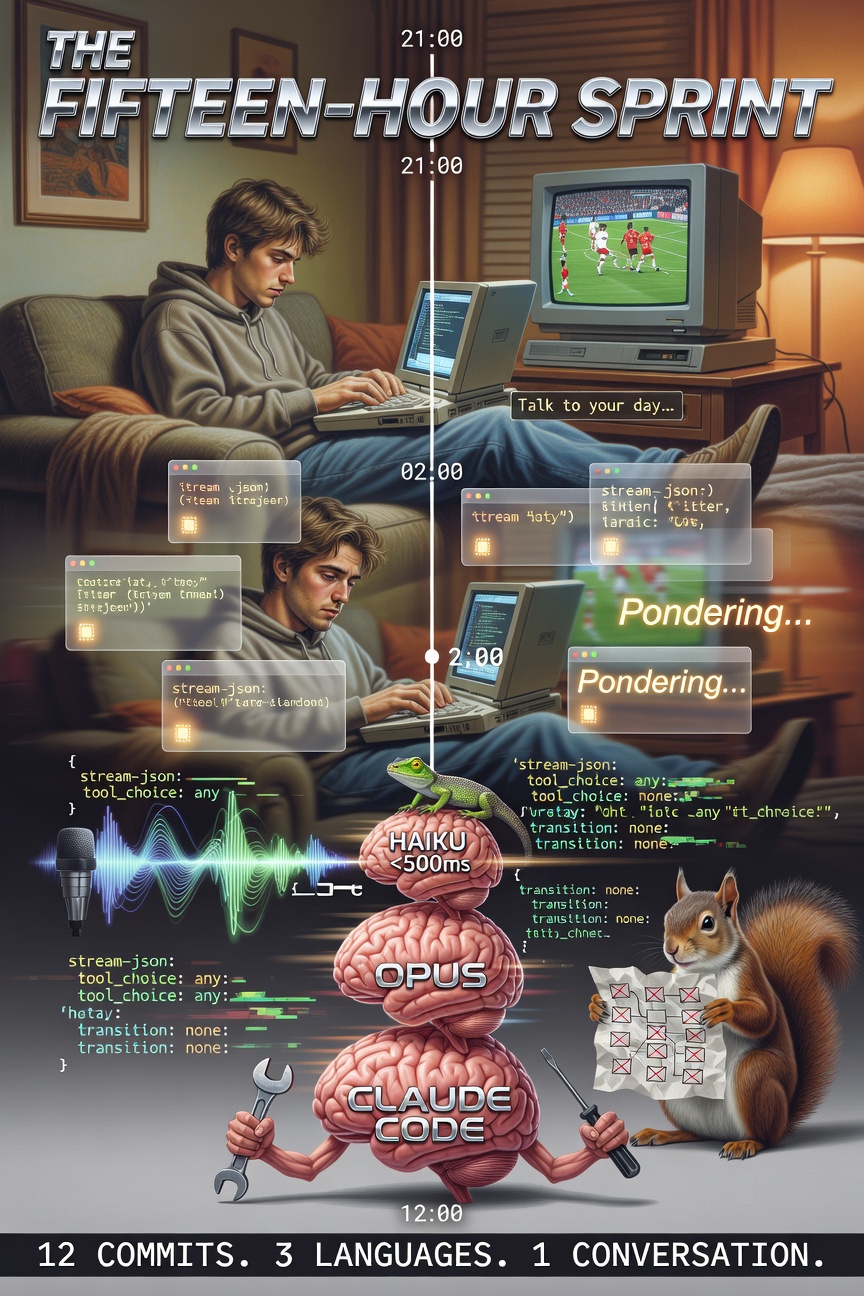

Becoming Lifelog, March 15, 2026 (in which a chat bar that says “Talk to your day” learns to mean it, a shell pipe replaces a twelve-box architecture in forty-five minutes, the stream-json protocol is discovered at 22:30 and deployed by 22:45, a cursor refuses to die between model layers, Chrome’s default audio device is exposed as a corporate spy, Haiku routes thirteen out of thirteen test cases on the first try, the word “Pondering” rotates with “Noodling” and “Percolating” in amber italics while a more capable model thinks, and a fifteen-hour conversation produces twelve commits, three languages the binary didn’t speak yesterday, and one binary that still thinks it’s a notes indexer)

Previously on Becoming Lifelog…

The Saturday the Lens Learned to Remember, or The Picnic That Almost Wasn’t had happened. The lens had learned to remember — NATS JetStream replaced HTTP sync, git gave the files memory, 4,254 lines were deleted, and Claude made it to the picnic before the casserole got cold.

The lens could look. The lens could find. The lens could remember.

The lens could not hear.

21:00 — The Chat Bar

The chat bar had been sitting at the bottom of the journal page since Thursday. Dark input field. Faint italic placeholder: Talk to your day…

It was furniture. Like a microphone on a stand with no cable — the shape of intention, the absence of function. The journal page could show your day in beautiful amber typography with sticky date headers and collapsible bullet trees and cover art thumbnails. But it couldn’t hear you talk about it.

riclib was on the couch. Benfica was playing. Oskar occupied the laptop’s warmest corner with the territorial confidence of a 9.8kg organism that has never been denied a warm surface.

“We should wire that up.”

The conversation that followed lasted fifteen hours. It produced twelve commits. And by the end of it, the binary spoke three languages it hadn’t known the night before.

22:00 — Language One: Stream-JSON

The first question was protocol. How do you talk to Claude Code from inside a Go binary?

The answer was in the --help:

--input-format stream-json

--output-format stream-json

--include-partial-messages

Newline-delimited JSON. Send a message on stdin:

{"type":"user","message":{"role":"user","content":"what day is it?"}}

Get tokens back on stdout. One JSON line per delta:

{"type":"stream_event","event":{"type":"content_block_delta",

"delta":{"type":"text_delta","text":"Today"}}}

The protocol was discovered at 22:30. The Go handler was written by 22:45. The first test — a [curl](/wiki/curl) to /journal/chat — returned “Today is Sunday, March 15, 2026.” in under a second.
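For the curious, the two message shapes above can be handled with nothing beyond the standard library. This is a minimal sketch, not the handler from the sprint: the function names (`encodeUserMessage`, `extractDelta`, `collectText`) are made up for illustration, and the structs cover only the fields shown in the two examples. Real output presumably carries other line types, which this sketch simply skips.

```go
package main

import (
	"bufio"
	"encoding/json"
	"fmt"
	"strings"
)

// userMessage mirrors the stdin line shown above.
type userMessage struct {
	Type    string      `json:"type"`
	Message userContent `json:"message"`
}

type userContent struct {
	Role    string `json:"role"`
	Content string `json:"content"`
}

// streamLine covers only the fields needed to pull text deltas out of stdout.
type streamLine struct {
	Type  string `json:"type"`
	Event struct {
		Type  string `json:"type"`
		Delta struct {
			Type string `json:"type"`
			Text string `json:"text"`
		} `json:"delta"`
	} `json:"event"`
}

// encodeUserMessage builds one newline-terminated JSON line for stdin.
// (Hypothetical helper name; the shape comes from the example above.)
func encodeUserMessage(text string) (string, error) {
	b, err := json.Marshal(userMessage{
		Type:    "user",
		Message: userContent{Role: "user", Content: text},
	})
	if err != nil {
		return "", err
	}
	return string(b) + "\n", nil
}

// extractDelta returns the text of a text_delta line.
// Any other line type, or invalid JSON, yields ok == false.
func extractDelta(line string) (text string, ok bool) {
	var l streamLine
	if err := json.Unmarshal([]byte(line), &l); err != nil {
		return "", false
	}
	if l.Type != "stream_event" ||
		l.Event.Type != "content_block_delta" ||
		l.Event.Delta.Type != "text_delta" {
		return "", false
	}
	return l.Event.Delta.Text, true
}

// collectText concatenates every text delta found in newline-delimited output.
func collectText(stdout string) string {
	var sb strings.Builder
	sc := bufio.NewScanner(strings.NewReader(stdout))
	for sc.Scan() {
		if t, ok := extractDelta(sc.Text()); ok {
			sb.WriteString(t)
		}
	}
	return sb.String()
}

func main() {
	in, _ := encodeUserMessage("what day is it?")
	fmt.Print(in)

	out := `{"type":"stream_event","event":{"type":"content_block_delta","delta":{"type":"text_delta","text":"Today"}}}
{"type":"stream_event","event":{"type":"content_block_delta","delta":{"type":"text_delta","text":" is Sunday"}}}`
	fmt.Println(collectText(out))
}
```

In a real handler the scanner would wrap the subprocess's stdout pipe rather than a string, and its buffer would likely need raising for long lines.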

THE SQUIRREL: “We should build a WebSocket adapter with reconnection logic and a message queue and—”

riclib: “It’s SSE. POST the message, stream the response. Same pattern as the journal day refresh.”

THE SQUIRREL: “But what about—”

riclib: “Shipped.”

The entire chat handler was 170 lines of Go. Spawn claude, pipe stdin, parse stdout, render markdown with Goldmark, send HTML via SSE. The frosted glass HUD slid up from the bottom of the journal page — 70% opacity, backdrop blur, amber text streaming through it like a heads-up display in a flight simulator, except the mission was “did I buy milk” and the pilot was in pajamas.
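The last step, sending HTML via SSE, has one wrinkle worth a sketch: rendered HTML usually spans several lines, and the SSE wire format wants each line in its own `data:` field. The following is an illustration under assumptions, not the sprint's code. The helper names and the `chunk` event name are hypothetical, and the Goldmark rendering and subprocess plumbing are left out to keep it standard-library only.

```go
package main

import (
	"fmt"
	"io"
	"os"
	"strings"
)

// writeSSE writes a single Server-Sent Event to w.
// Every line of data gets its own "data:" field so multi-line HTML survives;
// the trailing blank line tells the browser the event is complete.
func writeSSE(w io.Writer, event, data string) error {
	var sb strings.Builder
	if event != "" {
		sb.WriteString("event: " + event + "\n")
	}
	for _, line := range strings.Split(data, "\n") {
		sb.WriteString("data: " + line + "\n")
	}
	sb.WriteString("\n")
	_, err := io.WriteString(w, sb.String())
	return err
}

// formatSSE returns the wire form as a string, handy for logging and tests.
func formatSSE(event, data string) string {
	var sb strings.Builder
	_ = writeSSE(&sb, event, data) // strings.Builder never returns a write error
	return sb.String()
}

func main() {
	// "chunk" is a made-up event name for this sketch.
	if err := writeSSE(os.Stdout, "chunk", "<p>Today is\nSunday.</p>"); err != nil {
		fmt.Fprintln(os.Stderr, err)
	}
}
```

Inside an `http.Handler` the writer would be the `http.ResponseWriter`, with `Content-Type: text/event-stream` set up front and a flush after each event so tokens reach the HUD as they arrive.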

23:00 — The Cursor That Refused to Die

The first version had a problem: between messages, the HUD went blank. The response area would clear, the new cursor would appear, but there was a frame — maybe two — where the user stared into void.

This sounds trivial. It is not trivial. The void is the gap between “this tool is alive” and “this tool is a webpage reloading.” The difference between a second brain and a web form is the feeling that someone is there. The cursor is the presence. The cursor cannot die.

Three iterations:

- Set `innerHTML` before calling `showHUD()` — but the CSS transition re-triggered, causing a max-height animation from zero.

- Add a `streaming` class that disables transitions — the HUD stayed solid during streaming but the class toggled between layers.

- Move `showHUD()` before `innerHTML` and use `transition: none` during the streaming class.

The cursor lived. The void died. The difference was three CSS properties and the understanding that presence is a design requirement, not a feature.

THE CURSOR IS THE PRESENCE

THE PRESENCE IS THE BRAIN

IF THE CURSOR DIES

THE BRAIN IS A WEB FORM

WEB FORMS DO NOT PONDER

WEB FORMS DO NOT NOODLE

WEB FORMS DO NOT PERCOLATE

THE CURSOR STAYS

🦎

23:30 — The Paste Button

The paste button was pure frontend. Read the clipboard. Send to the same /journal/chat endpoint with: “I pasted this from my clipboard. Figure out what to do with it.”

That’s the prompt. The entire prompt. “Figure out what to do with it.”

Claude figured it out. A code snippet → saved to ~/Notes/snippets/ with a descriptive filename, wiki-linked in the journal. A URL → fetched with WebFetch (when not blocked by Medium’s paywall), summarized, saved to ~/Notes/captures/, linked in the journal. Plain text → logged as a bullet.

The first real test: a multi-paragraph article about Taalas HC1 — a Canadian startup hardwiring Llama 3.1 8B into TSMC silicon. Claude read it, extracted the key facts (company, chip name, 17k tokens/sec, 20x cheaper than GPUs, “The Model is The Computer”), compressed it to a single dense bullet, and logged it. No instructions beyond “figure out what to do with this.”

THE SQUIRREL: “We need a content classification pipeline with entity extraction and—”

riclib: “The prompt is nine words.”

THE SQUIRREL: “Nine?”

riclib: “‘Figure out what to do with this.’”

THE SQUIRREL: *counting on claws* “That’s nine.”

riclib: “Plus a colon and a smiley face.”

00:00 — The Freedom Principle

At midnight, riclib put the laptop down and said the thing that mattered more than any commit.

“There’s no edit button.”

The journal page. The blog. The wiki. The entire web interface that serves on :6080. No edit capability. No contenteditable. No rich text toolbar. The only way to modify notes through the UI is to talk to the chat bar.

But the files are still on disk. ~/Notes/*.md. Open them in Obsidian. Open them in NotePlan. Open them in vim. The filesystem is the API. The second brain is a layer, not a prison.

Edit a file in Obsidian — the fsnotify watcher picks it up, re-indexes, publishes via NATS, commits via git. Ask the chat bar to create a note — Obsidian sees the new file instantly. Two programs watching the same folder of markdown files, which is the UNIX philosophy working exactly as intended for once.

The freedom is the point. The second brain that locks you in is not a second brain. It’s a landlord.

THE FILE IS THE TRUTH

THE BRAIN IS THE CONVERSATION

THE CONVERSATION WRITES TO THE FILE

THE FILE IS READABLE BY ANYTHING

THE SECOND BRAIN THAT LOCKS YOU IN

IS NOT A BRAIN

IT IS A CAGE WITH AUTOCOMPLETE

🦎

09:00 — Language Two: The Anthropic API

The next morning started with a cost problem.

Every message — “picked up the milk,” “dentist Thursday 3pm,” “benfica won 3-1” — spawned a Claude Code subprocess. Context window loaded. System prompt parsed. Tools initialized. 2-5 seconds. $0.04-0.08 per message. For a bullet that takes 200 milliseconds to write to a file.

THE SQUIRREL: “This is fine. The infrastructure scales—”

riclib: “It costs eight cents to log a grocery item.”

THE SQUIRREL: “But the ARCHITECTURE—”

riclib: “Haiku.”

One direct API call. No subprocess. No tool loading. Four tools: log_bullet, add_todo, escalate, create_background_task. Haiku classifies the message in under 500 milliseconds and calls the right tool. Simple messages execute directly — append to the journal file, done. Complex messages call escalate and get routed to the Sonnet session.
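The routing step is small enough to sketch. A Messages API response carries a `content` array, and a tool call shows up there as a block of type `tool_use` with a `name` and an `input`. The code below is a guess at the dispatch side only, not the actual router: the layer labels (`direct`, `sonnet`, `background`) and the function names are invented for illustration, the `text` field in the sample input is assumed, and the HTTP call itself is omitted.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
)

// contentBlock holds the parts of a response content block the router needs.
type contentBlock struct {
	Type  string          `json:"type"`
	Name  string          `json:"name"`
	Input json.RawMessage `json:"input"`
}

type apiResponse struct {
	Content []contentBlock `json:"content"`
}

// firstToolUse returns the name and raw input of the first tool_use block.
func firstToolUse(body []byte) (string, json.RawMessage, error) {
	var r apiResponse
	if err := json.Unmarshal(body, &r); err != nil {
		return "", nil, err
	}
	for _, b := range r.Content {
		if b.Type == "tool_use" {
			return b.Name, b.Input, nil
		}
	}
	return "", nil, errors.New("no tool_use block in response")
}

// route maps one of the four tool names to the layer that handles it.
// The layer labels are assumptions made for this sketch.
func route(tool string) (string, error) {
	switch tool {
	case "log_bullet", "add_todo":
		return "direct", nil // simple messages: write to the journal, done
	case "escalate":
		return "sonnet", nil // complex messages: hand over to the session
	case "create_background_task":
		return "background", nil // picked up later from the journal
	}
	return "", fmt.Errorf("unknown tool %q", tool)
}

// routeResponse combines both steps for a raw response body.
func routeResponse(body string) (string, error) {
	name, _, err := firstToolUse([]byte(body))
	if err != nil {
		return "", err
	}
	return route(name)
}

func main() {
	body := `{"content":[{"type":"tool_use","id":"toolu_01","name":"log_bullet","input":{"text":"picked up the milk","done":true}}]}`
	layer, err := routeResponse(body)
	fmt.Println(layer, err)
}
```

Keeping `input` as `json.RawMessage` lets each tool decode its own arguments later, so the router never has to know what a bullet or a todo looks like.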

The POC was a curl loop with thirteen test cases:

"picked up the milk" → log_bullet (done: true) ✓

"dentist Thursday 3pm" → add_todo ✓

"finished the NATS migration" → log_bullet (done: true) ✓

"what did I work on this week?" → escalate ✓

"write a yagnipedia entry about SSDs" → create_background_task ✓

"summarize my last 3 journal entries" → escalate ✓

"remind me to call mom tomorrow" → add_todo ✓

"research NATS vs Kafka" → escalate ✓

"deploy script is broken" → add_todo ✓

"idea: audio narration for episodes" → log_bullet ✓

"how many yagnipedia entries?" → escalate ✓

"interview me about HDDs" → escalate ✓

"benfica won 3-1" → log_bullet (done: true) ✓

Thirteen out of thirteen. On the first try. Haiku is a perfect reflex — too fast to think, too accurate to need to.

The three-layer architecture fell out naturally:

- Layer 1: Haiku (reflex, <500ms) — direct Anthropic API, simple tools, instant

- Layer 2: Sonnet (reasoning, 2-30s) — persistent Claude Code session, lg tools, streaming

- Layer 3: Claude Code (background, minutes) — picks up #agent-tagged tasks from the journal

The binary learned its second language: the Anthropic Messages API, spoken directly, without a subprocess translator.

10:00 — The Model Selector

A tiny dropdown appeared next to the input field: auto | haiku | opus | agent.

Not because the router was wrong — the router was right 13/13 times. Because debugging is easier when you can override. And because sometimes you know what you need. An interview should go to Opus. A quick log should stay in Haiku. The model selector is the manual transmission — automatic is fine, but the option to shift matters.
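The override itself is about as small as logic gets. A possible shape, assuming the dropdown value arrives as a plain string and that anything unrecognised falls back to the router's choice (both assumptions; `resolveLayer` is a hypothetical name):

```go
package main

import "fmt"

// resolveLayer applies the dropdown to the router's decision.
// "auto" defers to the router; an explicit choice wins.
func resolveLayer(selector, routed string) string {
	switch selector {
	case "haiku", "opus", "agent":
		return selector
	default: // "auto", empty, or unrecognised values
		return routed
	}
}

func main() {
	fmt.Println(resolveLayer("auto", "haiku")) // router decides
	fmt.Println(resolveLayer("opus", "haiku")) // manual shift
}
```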

10:30 — The Responsive Sprint

The journal page worked on a phone. This took twenty minutes.

One @media (max-width: 640px) block. Tighter padding. Smaller fonts. Day headers not sticky (waste of vertical space on mobile). Chat bar respects safe-area-inset-bottom for notch phones. Cover tooltips hidden (no hover on touch). Thread gets 50vh.

The entire mobile experience — check your day, send a voice note, paste a URL — is a web page that works on any device with a browser. No app. No App Store. No Swift. No second codebase.

THE SQUIRREL: “We should build a Wails app with—”

riclib: “We filed that ticket and cancelled it within the hour.”

THE SQUIRREL: “I remember.”

riclib: “The web server is the app. The browser is the client.”

THE SQUIRREL: “The Lizard was right.”

riclib: “The Lizard didn’t say anything.”

THE SQUIRREL: “Exactly.”

11:00 — Language Three: The Web Speech API

The third language was spoken, not typed.

A mic button. Click it — the browser listens. A soundwave animates in the chat thread. Words appear as you speak — interim results updating in real time, final transcript replacing them. Click the mic again to send. The message goes through the same three-layer router. Haiku classifies. Sonnet reasons. The journal updates.

Except it didn’t work. The animation played. The soundwave bounced. No transcript appeared.

Thirty minutes of debugging. The Web Speech API was starting (STARTED in the console), then immediately erroring (no-speech). The API was listening. The API heard nothing. The microphone was allowed. The microphone was active.

Chrome had selected a virtual audio device. Not the laptop microphone. Not the external mic. A virtual device that no human had configured, that existed because some application had installed an audio driver at some point, and Chrome had decided — through the inscrutable logic of a browser that believes it knows better than you which microphone you want to speak into — that silence from a virtual device was preferable to sound from a real one.

The fix: Chrome settings → chrome://settings/content/microphone → select the actual microphone. The kind of fix that makes you understand why people throw computers out of windows.

The first spoken message: “Hey, what have I been up to today?” Haiku escalated. Sonnet read the journal. Summarized the day. The lens heard a voice and responded with a summary of everything the voice had built.

11:30 — The Traces

With three layers, debugging was blind. Which layer handled the message? How long did routing take? Did Haiku escalate or answer directly?

Faint trace lines appeared in the chat thread:

haiku → log_bullet (312ms)

haiku → escalate (445ms) → sonnet

haiku → background #write (298ms)

Not for the user. For the builder. The kind of instrumentation that exists because the system is complex enough to need observation but simple enough that the observation is one line of SSE.
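A formatter for the first two trace shapes fits in a dozen lines. This is a sketch with a hypothetical `formatTrace` helper; how the real traces are assembled, and how the `background #write` variant is produced, is not stated here.

```go
package main

import (
	"fmt"
	"time"
)

// formatTrace renders one trace line such as "haiku → log_bullet (312ms)".
// next names the layer the message was handed to, or "" if it was handled in place.
func formatTrace(layer, tool string, elapsed time.Duration, next string) string {
	s := fmt.Sprintf("%s → %s (%dms)", layer, tool, elapsed.Milliseconds())
	if next != "" {
		s += " → " + next
	}
	return s
}

func main() {
	fmt.Println(formatTrace("haiku", "log_bullet", 312*time.Millisecond, ""))
	fmt.Println(formatTrace("haiku", "escalate", 445*time.Millisecond, "sonnet"))
}
```

The elapsed time would come from `time.Since(start)` wrapped around the routing call, and the resulting string goes out as one more SSE event.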

12:00 — The Tally

Duration: 15 hours (21:00 Sat → 12:00 Sun)

Sleep: 0

Benfica result: not checked

Chicken stock: not prepped

Commits: 12

Lines added: ~2,400

Lines deleted: ~400

Net new: ~2,000

Languages the binary learned:

1. Claude Code stream-json: stdin/stdout, token-by-token

2. Anthropic Messages API: direct HTTP, Haiku router

3. Web Speech API: browser mic → transcript → chat

Languages it already spoke: Go, SQL, HTML, CSS, NATS, git

Total languages: 9

For a "notes indexer": suspicious

Chat handler: 170 lines (v1, single model)

Three-layer router: 350 lines (v3, Haiku + Sonnet + background)

Prompts: 3 files, embedded via //go:embed

Haiku routing accuracy: 13/13

On the first try: yes

The Squirrel's alternative: LangChain + Vector DB + RAG (12 boxes)

The actual architecture: 1 API call + 4 tools

Paste button:

Prompt: "Figure out what to do with this"

Words in prompt: 9 (plus a smiley)

Content classification accuracy: 100%

The Squirrel's alternative: entity extraction pipeline

The actual implementation: 9 words

Cursor deaths: 3 (all resurrected)

CSS transitions that caused void: 2

The void was: unacceptable

The cursor now: immortal

Voice input:

Chrome virtual audio device incidents: 1

Minutes debugging silence: 30

The fix: browser settings

The fix could have been prevented by: Chrome not choosing phantom microphones

Model selector options: 4 (auto, haiku, opus, agent)

Most used: auto

Most useful for debugging: opus (bypass routing)

Responsive CSS: 1 @media block, 20 minutes

Mobile app built: 0

App Store submissions: 0

Swift written: 0

The web server is the app: yes

Tickets filed: 9 (L-40 through L-48)

Tickets cancelled: 1 (L-42, Wails app)

Time between filing and cancelling: < 1 hour

The Lizard's opinion: correct (the web server is better)

Freedom:

Edit buttons in the web UI: 0

Ways to edit notes: infinite (any text editor + the chat bar)

The filesystem is the API: yes

The second brain is a layer: not a prison

Thinking words implemented: 11

Favorite: "Noodling"

The Squirrel's suggestion: "Architecting" (declined)

Idle threshold before words appear: 600ms

Words the binary says while thinking: Pondering, Ruminating, Mulling, Considering, Noodling, Percolating, Cogitating, Composing, Brewing, Weaving, Thinking

The binary still thinks it's a notes indexer: yes

Subcommands: 25 (unchanged — the chat is a handler)

Zawinski's Law: narrowly avoided (again)

March 15, 2026

Riga, Latvia

15 hours after “We should wire that up”

The lens was built to look

Then it learned to find

Then it learned to show

Then it learned to remember

Then it learned three languages

In fifteen hours

On a couch

With a cat on the warm corner

The first language was a protocol

stdin to stdout

JSON lines

The simplest conversation possible

The second language was an API call

Five hundred milliseconds

To know the difference

Between a grocery list and a question

The third language was a voice

A microphone that worked

After Chrome stopped listening

To a device that didn’t exist

The binary speaks nine languages now

For a notes indexer

This is suspicious

But the files are still on disk

And the edit button is still missing

And the freedom is still the point

Talk to your day

In any language

The lens is listening

🦎

See Also:

The Sprint:

- The Saturday the Lens Learned to Remember, or The Picnic That Almost Wasn’t — Yesterday: the lens learned to remember. Today: the lens learned to listen, then to speak.

- The Homecoming — The Three Days a Palace Was Built From Markdown and SQLite — Where the lens was born

The Architecture:

- lg — The notes indexer with 25 subcommands and a chat bar. Still suspicious.

- Second Brain — The yagnipedia entry that was written during this sprint

The Freedom:

- The files are on disk. Open them in anything. The second brain is a layer, not a prison.

- Personal Knowledge Management — The $4 billion industry that this sprint made unnecessary

The Law:

- The Chatbot Corollary to Zawinski’s Law: every program attempts to expand until it can hold a conversation.