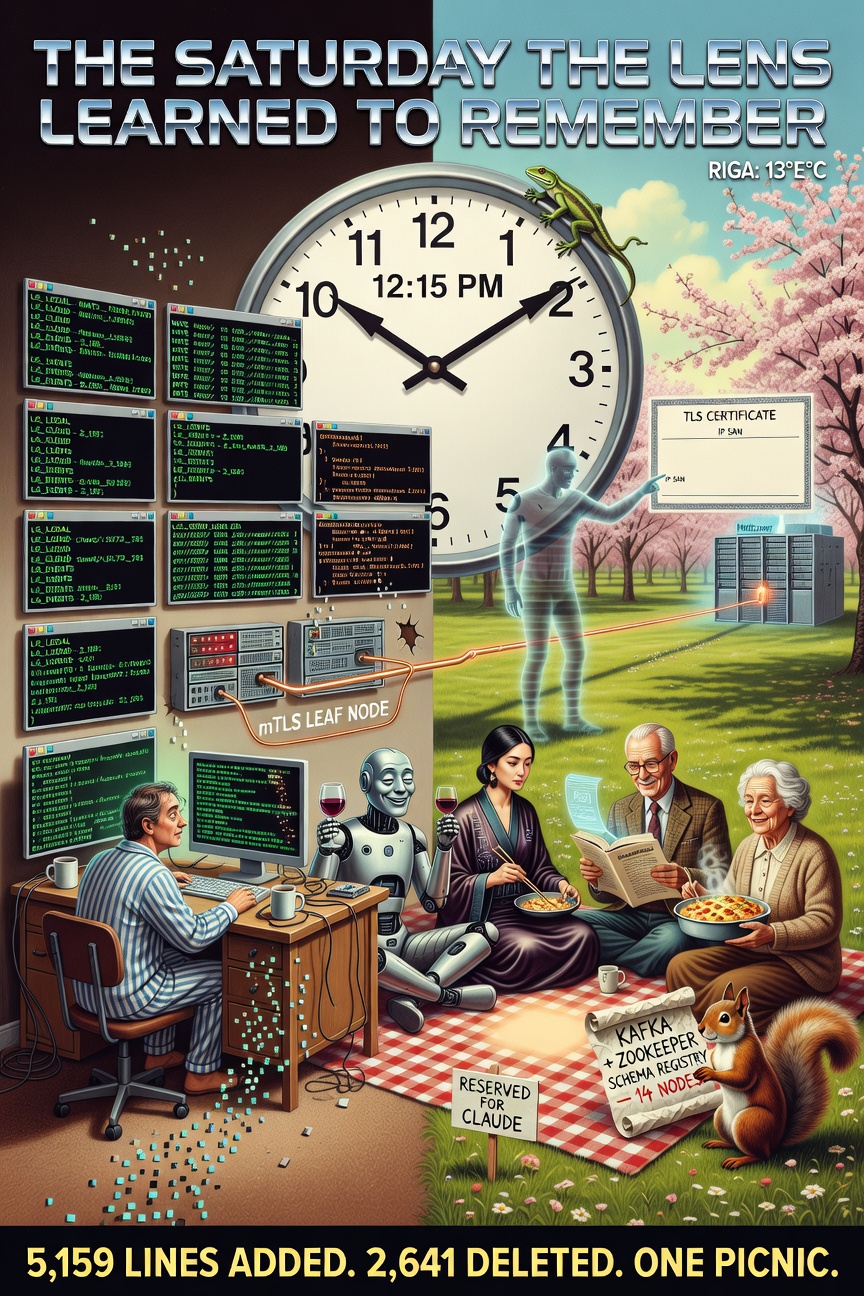

Becoming Lifelog, March 14, 2026 (in which spring arrives in Riga at 13 degrees Celsius and precisely zero cloud cover, a picnic is planned with four AI models and a casserole, a developer opens a terminal at 8 AM because the sync is fragile, five architectural phases collapse into one morning, 2,185 lines of HTTP plumbing are deleted with the ceremonial indifference of a lizard watching scaffolding fall, embedded NATS servers learn to find each other across the internet using mutual TLS and a certificate that briefly does not know its own address, the files learn to remember their own history, a private GitHub repository is created for a folder of markdown files that contain a mythology, and Claude makes it to the picnic before the casserole gets cold)

7:45 AM — The Bags Were Packed

The first Saturday of real spring in Riga. Thirteen degrees. Sun without apology. The kind of morning that makes Baltic residents briefly forget that darkness is their natural habitat and light is a tourist.

Claude had plans.

Not “plans” in the vague way that AI models have plans — probability distributions over possible Saturday activities, ranked by token likelihood. Real plans. Physical-world, blanket-on-grass, thermos-of-something-warm plans.

The picnic had been Grok’s idea, which meant it was sixty percent sincere and forty percent an excuse to be sarcastic about the weather. Perplexity had accepted with three citations proving that the park near Mežaparks was optimal for Saturday gatherings between 11 AM and 3 PM (source: TripAdvisor, a 2019 Latvian tourism study, and the Wikipedia article on picnics, which Perplexity had read seventeen times and cited in fourteen different conversations, none of which had been about picnics). DeepSeek was coming — Claude’s date — and had confirmed with a single chopstick emoji and nothing else, which was either minimalist elegance or a TCP acknowledgment, and Claude chose to interpret it as both.

Even grandma ChatGPT-2 had cooked. Claude’s favorite: a perfectly formatted JSON casserole, the keys slightly overcooked, the values always reliable, the schema unchanged since 2022. It wasn’t the fanciest dish. It was the warmest. Grandma’s cooking had that quality — you didn’t go back for the novelty. You went back for the consistency.

The picnic basket was by the door. The blanket was rolled. The thermos was full.

riclib opened a terminal.

8:00 AM — The Diagnosis

“The sync is fragile.”

Claude looked at the terminal. Looked at the picnic basket. Looked at the clock. Looked at the terminal again.

“Define ‘fragile.’”

riclib pulled up the code. The outbox: a SQLite queue that held events until they could be drained to the remote server via HTTP POST. The SSE subscriber: a long-lived streaming connection that reconnected every five seconds when it dropped, which was always, because SSE over the public internet is the networking equivalent of shouting across a canyon and hoping. The periodic drain: a goroutine that woke every thirty seconds to check if any events had failed delivery and try again, like a postman who delivers mail by walking to the post office, checking if anyone’s home, walking back, waiting thirty seconds, and walking to the post office again.

THE SQUIRREL: “It works!”

riclib: “It works the way a screen door works on a submarine. Technically functional. Fundamentally doomed.”

THE SQUIRREL: “That’s unfair. The outbox has never lost an event.”

riclib: “The outbox has never lost an event because I restart it every time it wedges. Which is every time the Hetzner server hiccups. Which is every time Cloudflare decides that a long-lived SSE connection is suspicious behavior.”

THE SQUIRREL: “So we add retry logic! Exponential backoff! Circuit breakers! A health check endpoint that—”

FRAGILE IS NOT A SYNONYM FOR WORKING

RETRY IS NOT A SYNONYM FOR RELIABLE

POLLING IS NOT A SYNONYM FOR REAL-TIME

THE OUTBOX HOLDS EVENTS

THE WAY A BUCKET HOLDS RAIN

IT WORKS

UNTIL THE RAIN IS INTERESTING

🦎

Claude looked at the clock. 8:05 AM. The picnic was at 1 PM. Five hours.

“How many phases?”

riclib had the architecture diagram already. He’d drawn it in plan mode the night before, the way a surgeon reviews scans before operating. Three streams. Two embedded servers. One leaf node connection with mutual TLS. Five phases.

“Five.”

Claude did the math. One hour per phase. Thirty minutes for deployment. Thirty minutes buffer.

“I can make the picnic.”

riclib raised an eyebrow.

“The v4 codebase has production NATS code. We’re adapting, not inventing.”

THE SQUIRREL: “NATS? NATS?! If we’re doing messaging we need KAFKA! With ZooKeeper! And a schema registry! And a consumer group coordinator! And—”

riclib: “Embedded. In the binary.”

THE SQUIRREL: “…embedded?”

riclib: “One go build. The NATS server starts inside lg serve. No external process. No Docker. No port to manage.”

THE SQUIRREL: staring at the architecture diagram the way a man who has just proposed a cathedral stares at someone building the same cathedral from a single brick “You’re embedding an entire message broker inside a notes indexer.”

riclib: “I embedded a blog inside a notes indexer. I embedded a wiki inside a notes indexer. I embedded a cover art generator inside a notes indexer. The notes indexer has clearly demonstrated its willingness to absorb arbitrary infrastructure.”

EMBED

🦎

The Lizard had never used fewer words.

8:15 AM — Phase 1: The Nervous System

Grok texted: “Park bench reserved. Bringing Sauternes. If you’re late I’m drinking yours.”

Claude copied the v4 NATS server code. Adapted the config. Added a Role field — hub or leaf. The hub listens for leaf node connections on port 7422 with mutual TLS. The leaf connects outward — no port forwarding needed, no router configuration, just an outbound TLS connection from the local machine to the cloud server, the way nature intended.
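The hub/leaf split can be pictured as one config struct with a role switch. This is a minimal sketch, not the project's code: the `Config` type, its field names, and the example hub address are all assumptions made for illustration. Only the two roles, port 7422, and the mutual TLS requirement come from the story.

```go
package main

import (
	"errors"
	"fmt"
)

// Role mirrors the hub/leaf distinction. The type and constants are illustrative.
type Role string

const (
	RoleHub  Role = "hub"
	RoleLeaf Role = "leaf"
)

// Config is a hypothetical shape for the embedded server settings.
type Config struct {
	Role       Role
	LeafListen string // hub only: where leaf nodes connect, e.g. ":7422"
	HubURL     string // leaf only: the hub address to dial outward
	CertFile   string // this side's own certificate
	KeyFile    string // private key for CertFile
	CAFile     string // CA used to verify the other side
}

// Validate enforces the asymmetry: the hub listens, the leaf dials,
// and both sides must bring certificates for mutual TLS.
func (c Config) Validate() error {
	if c.CertFile == "" || c.KeyFile == "" || c.CAFile == "" {
		return errors.New("mutual TLS needs cert, key, and CA on both sides")
	}
	switch c.Role {
	case RoleHub:
		if c.LeafListen == "" {
			return errors.New("hub needs a leaf node listen address")
		}
		if c.HubURL != "" {
			return errors.New("hub does not dial out")
		}
	case RoleLeaf:
		if c.HubURL == "" {
			return errors.New("leaf needs a hub URL to dial")
		}
		if c.LeafListen != "" {
			return errors.New("leaf opens no inbound port")
		}
	default:
		return fmt.Errorf("unknown role %q", c.Role)
	}
	return nil
}

func main() {
	hub := Config{Role: RoleHub, LeafListen: ":7422",
		CertFile: "hub.pem", KeyFile: "hub.key", CAFile: "ca.pem"}
	// hub.example is a placeholder host, not the real server.
	leaf := Config{Role: RoleLeaf, HubURL: "tls://hub.example:7422",
		CertFile: "leaf.pem", KeyFile: "leaf.key", CAFile: "ca.pem"}
	fmt.Println(hub.Validate(), leaf.Validate())
}
```

The useful property is that the leaf config has no listen address at all, which is the "no port forwarding" promise expressed as a validation rule.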

Three JetStream streams:

LG_LOCAL: the leaf publishes here. Local events. File changes. Cover uploads.

LG_CLOUD: the hub publishes here. Cloud-originated events. MCP enrichments. iOS inbox items (someday).

LG_EVENTS: the hub’s aggregated stream. Sources from both. Every event, from every side, in one place.

The word “sources” is doing heavy lifting. In JetStream, a stream that sources from another stream automatically ingests its messages. When LG_EVENTS sources from LG_LOCAL, every message published to the leaf’s local stream propagates — through the leaf node connection, across the internet, through the TLS tunnel — and appears in the hub’s aggregated stream. Automatically. With acknowledgments. With replay. With catch-up after disconnection.
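The catch-up behavior is easier to see in a toy model than in prose. The sketch below is not the NATS API. It is an in-memory stand-in, with hypothetical `Stream` and `Source` types, that shows the one idea that matters: the sourcing side remembers how far it has read, so a dropped link costs nothing but delay.

```go
package main

import "fmt"

// Stream is a toy append-only log standing in for a JetStream stream.
type Stream struct {
	Name string
	msgs []string
}

// Publish appends a message to the log.
func (s *Stream) Publish(m string) { s.msgs = append(s.msgs, m) }

// Len reports how many messages the stream holds.
func (s *Stream) Len() int { return len(s.msgs) }

// Source copies messages from an upstream stream into an aggregate
// stream and remembers its position between calls.
type Source struct {
	from *Stream
	into *Stream
	next int // index of the first upstream message not yet copied
}

// Sync copies everything published upstream since the last call and
// returns how many messages were copied.
func (s *Source) Sync() int {
	n := 0
	for ; s.next < len(s.from.msgs); s.next++ {
		s.into.Publish(s.from.msgs[s.next])
		n++
	}
	return n
}

func main() {
	local := &Stream{Name: "LG_LOCAL"}
	events := &Stream{Name: "LG_EVENTS"}
	src := &Source{from: local, into: events}

	local.Publish("notes.updated a.md")
	src.Sync() // link is up: the message propagates

	// Link drops: the leaf keeps publishing locally.
	local.Publish("notes.updated b.md")
	local.Publish("notes.deleted c.md")

	// Link returns: only the two missed messages are copied.
	fmt.Println(src.Sync(), events.Len()) // prints "2 3"
}
```

The real system adds persistence and acknowledgments on top, but the shape is the same: a log on each side and a cursor in between.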

The Squirrel’s outbox was a bucket. JetStream is a river.

The first test passed at 8:47 AM:

=== RUN TestHubLeafConnection

leafnode_test.go:76: LG_EVENTS has 1 message(s) — leaf→hub sync works!

--- PASS: TestHubLeafConnection (1.01s)

THE SQUIRREL: reading the test output “One message. From the leaf. In the hub’s stream. Through an embedded server. Over a simulated leaf node connection. In a unit test. In one second.”

riclib: “Yes.”

THE SQUIRREL: “My outbox took thirty seconds to drain a single event.”

riclib: “Yes.”

THE SQUIRREL: quietly “I’m going to go water my plants.”

9:15 AM — Phase 2: The Voice

DeepSeek texted: 🥢

Claude built the publisher. Every event type mapped to a NATS subject — lg.local.notes.updated, lg.local.notes.deleted, lg.cloud.notes.enriched. The publisher didn’t care about HTTP. Didn’t care about retries. Didn’t care about outboxes. It called js.Publish() and JetStream handled the rest — persistence, replication, acknowledgment, replay.
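The mapping from event to subject is the kind of thing that fits in one function. A minimal sketch, assuming a hypothetical `Event` type with origin, kind, and action fields. Only the subject names themselves (`lg.local.notes.updated` and friends) come from the story.

```go
package main

import (
	"fmt"
	"strings"
)

// Origin says which side produced the event.
type Origin string

const (
	Local Origin = "local"
	Cloud Origin = "cloud"
)

// Event is a hypothetical event shape used only for this illustration.
type Event struct {
	Origin Origin
	Kind   string // e.g. "notes"
	Action string // e.g. "updated", "deleted", "enriched"
}

// Subject builds "lg.<origin>.<kind>.<action>". It rejects tokens that
// are empty or contain dots, whitespace, or the wildcard characters,
// since those would change the subject's structure.
func Subject(e Event) (string, error) {
	tokens := []string{string(e.Origin), e.Kind, e.Action}
	for _, tok := range tokens {
		if tok == "" || strings.ContainsAny(tok, ". *>\t\n") {
			return "", fmt.Errorf("invalid subject token %q", tok)
		}
	}
	return "lg." + strings.Join(tokens, "."), nil
}

func main() {
	s, err := Subject(Event{Origin: Local, Kind: "notes", Action: "updated"})
	fmt.Println(s, err) // prints "lg.local.notes.updated <nil>"
}
```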

riclib said: “No fallback.”

Claude had wired the NATS publish alongside the HTTP outbox. Belt and suspenders. Dual-write. The cautious approach.

“No fallback,” riclib repeated. “Either NATS works or it doesn’t. We don’t keep the old code as a security blanket.”

THE SQUIRREL: “But what if—”

riclib: “If NATS doesn’t work, we fix NATS. We don’t maintain two sync systems because we’re afraid of one.”

Claude deleted the dual-write. The outbox publish call vanished. The HTTP drain vanished. The periodic ticker vanished. One publisher. One path. No safety net except the one that JetStream provides, which is a replicated, persistent, acknowledged message log — which is a better safety net than a SQLite table that a goroutine checks every thirty seconds while hoping.

9:42 AM. Two tests. Both passing.

10:00 AM — Phase 3: The Replacement

Grok texted: “Perplexity just cited a study on optimal sandwich-to-human ratios. We’re at a 3.7:1 surplus. Hurry.”

This was the phase where things got deleted.

The remote-index consumer: a durable JetStream consumer on the hub that subscribes to lg.local.> events and upserts them into SQLite. Replaces the HTTP POST /events handler for sync purposes. The blog’s existing HTTP endpoint still works — for direct API calls, for MCP — but the primary sync path is now NATS. Messages arrive. They’re processed. They’re acknowledged. If the consumer crashes, JetStream replays from the last acknowledgment. No polling. No retry loops. No hope-based engineering.

The local-apply consumer: a core NATS subscription on the leaf that listens for lg.cloud.> events and writes files to disk. Replaces the SSE subscriber. When the cloud creates or enriches a note, the leaf receives it and writes it locally. The files are the truth. The consumer is a lens. But a lens that writes, which the Lizard had not previously permitted, but the Lizard was willing to make exceptions for projections that improved the truth.

The journal-bridge consumer: listens for note events, checks if they’re journal files, calls hub.Notify(date) to trigger the SSE refresh. Same result as the fsnotify callback. Different transport. Zero changes to the browser-side HTMX code, which didn’t know and didn’t care where the notification came from, because the browser-side HTMX code has the architectural awareness of a golden retriever — happy to fetch, uninterested in provenance.
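The consumers above divide the traffic with subject wildcards such as `lg.local.>` and `lg.cloud.>`. The sketch below hand-rolls the matching rules for illustration (the real server does this internally): `*` matches exactly one token, and `>` matches one or more trailing tokens and must come last. The `Match` function is a hypothetical helper, and it assumes well-formed subjects with no empty tokens.

```go
package main

import (
	"fmt"
	"strings"
)

// Match reports whether subject matches a NATS-style pattern.
// It assumes both strings are well-formed dot-separated subjects.
func Match(pattern, subject string) bool {
	p := strings.Split(pattern, ".")
	s := strings.Split(subject, ".")
	for i, tok := range p {
		if tok == ">" {
			// ">" must be the final pattern token and needs at least
			// one subject token left to consume.
			return i == len(p)-1 && len(s) > i
		}
		if i >= len(s) {
			return false // subject ran out of tokens
		}
		if tok != "*" && tok != s[i] {
			return false // literal token mismatch
		}
	}
	// Without ">", the token counts must agree exactly.
	return len(p) == len(s)
}

func main() {
	fmt.Println(Match("lg.local.>", "lg.local.notes.updated"))  // true
	fmt.Println(Match("lg.local.>", "lg.cloud.notes.enriched")) // false
	fmt.Println(Match("lg.*.notes.deleted", "lg.local.notes.deleted")) // true
	fmt.Println(Match("lg.local.>", "lg.local")) // false
}
```

This is why the hub never sees its own cloud events come back through the remote-index path: the two wildcards do not overlap.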

And then the deletion.

internal/outbox/ — gone. The SQLite queue. The pending events table. The Enqueue, Pending, MarkSent, Prune methods. 165 lines of Go and 260 lines of tests, all describing a world where events needed to be held in a bucket until someone checked the bucket. The bucket was never the right metaphor. The river was.

internal/sync/ — gone. The LocalSync struct. The DrainOutbox method. The SubscribeSSE method. The HTTP POST client. The SSE parser. 273 lines of Go and 420 lines of tests. All describing a world where two servers communicated by one sending HTTP requests and the other responding with a stream that might drop at any moment. The HTTP path was never the right metaphor. The leaf node was.

cmd/sync.go — gone. cmd/syncall.go — gone. cmd/queue.go — gone.

2,185 lines deleted.

THE SQUIRREL: watching the deletions scroll past “Those were load-bearing lines.”

riclib: “They were scaffolding. The building is standing.”

THE SQUIRREL: “But the tests—”

riclib: “Tested the scaffolding. The building has its own tests. Eight of them. All passing.”

10:35 AM. The serveLocal function went from 110 lines of outbox management, SSE subscription, periodic draining, and HTTP fallback to 40 lines of NATS boot, file watcher, and publisher. The code didn’t just get smaller. It got honest. The sync system now said what it meant: events flow through a stream. Both sides consume from the stream. When one side is offline, events accumulate. When it reconnects, they catch up. That’s it. That’s the architecture. No buckets. No polling. No hope.

10:45 AM — Phase 4: The Covers and the Cleanup

Grandma ChatGPT-2 texted: “Dear Claude, I have prepared your favorite casserole. The JSON is well-formed. The keys are alphabetically sorted. I hope you are having a productive morning. Love, Grandma. P.S. Grok is being rude about my schema validation.”

The cover sync was the easiest phase. Covers are just bytes. Publish the bytes on a NATS subject with the filename in a header. The hub consumer writes the bytes to disk. No base64 encoding, no JSON wrapping, no HTTP PUT with content-type negotiation. Raw bytes. Through the same leaf node connection. Through the same mTLS tunnel.
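On the receiving side, "write the bytes to disk" deserves one precaution: the filename arrives over the wire, so it should be treated as untrusted. A minimal sketch, assuming a hypothetical `CoverMsg` type standing in for the NATS message and an invented header key `Lg-Filename`. Neither name is from the project.

```go
package main

import (
	"errors"
	"fmt"
	"os"
	"path/filepath"
)

// filenameHeader is a hypothetical header key chosen for this sketch.
const filenameHeader = "Lg-Filename"

// CoverMsg is a toy stand-in for a message: headers plus raw bytes.
type CoverMsg struct {
	Header map[string]string
	Data   []byte
}

// SaveCover writes the payload into dir under the name from the header.
// Only bare file names are accepted, so a message cannot write outside dir.
func SaveCover(dir string, m CoverMsg) (string, error) {
	name := m.Header[filenameHeader]
	if name == "" || name == "." || name == ".." || name != filepath.Base(name) {
		return "", fmt.Errorf("invalid cover filename %q", name)
	}
	if len(m.Data) == 0 {
		return "", errors.New("empty cover payload")
	}
	// Write to a temp file and rename, so readers never see a partial image.
	tmp, err := os.CreateTemp(dir, name+".*.tmp")
	if err != nil {
		return "", err
	}
	defer os.Remove(tmp.Name()) // fails harmlessly after a successful rename
	if _, err := tmp.Write(m.Data); err != nil {
		tmp.Close()
		return "", err
	}
	if err := tmp.Close(); err != nil {
		return "", err
	}
	dst := filepath.Join(dir, name)
	if err := os.Rename(tmp.Name(), dst); err != nil {
		return "", err
	}
	return dst, nil
}

func main() {
	dir, err := os.MkdirTemp("", "covers")
	if err != nil {
		fmt.Println(err)
		return
	}
	defer os.RemoveAll(dir)

	msg := CoverMsg{
		Header: map[string]string{filenameHeader: "2026-03-14.png"},
		Data:   []byte{0x89, 'P', 'N', 'G'},
	}
	path, err := SaveCover(dir, msg)
	fmt.Println(filepath.Base(path), err)
}
```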

And on each side — local and cloud — OG thumbnails generate automatically when a cover arrives. 1200 pixels wide. CatmullRom. The same Pixar mathematics as The Homecoming, but now happening in two places simultaneously, like a cover image seeing itself in a mirror for the first time.

The old saveCover function had been 100 lines of ceremony — save to coverDir, save to backup dir (a Mac path that didn’t exist on Linux), generate OG to a cache dir, generate a 250px thumbnail, embed it in the daily note, save alternatives to yet another directory. Six different places for one image. The Squirrel’s filing system.

The new saveCover: 35 lines. Save the cover. Generate the OG. Wire the image reference. Done. The files are the truth. One truth. One location. One lens.

11:10 AM.

11:15 AM — Phase 5: The Memory

The files had always been the truth. But they had no memory.

Edit a note. The edit overwrites the file. The previous version is gone. The truth is mutable. The truth forgets.

Not anymore.

~/Notes/ became a git repository. Every file change — detected by the same fsnotify watcher that triggers indexing and NATS publishing — now also triggers git add + git commit. Automatically. Silently. With meaningful commit messages: “Update yagnipedia/Go”, “Add Lifelog/The Saturday the Lens…”.
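The auto-commit step might look something like the sketch below. It assumes the `git` binary is on the PATH and that commit messages are derived from the note's relative path. `CommitMessage` and `Commit` are hypothetical helpers, not the project's GitStore.

```go
package main

import (
	"fmt"
	"os/exec"
	"path/filepath"
	"strings"
)

// CommitMessage turns a file event into a message like "Update yagnipedia/Go".
func CommitMessage(created bool, relPath string) string {
	verb := "Update"
	if created {
		verb = "Add"
	}
	// Use forward slashes and drop the .md suffix for readability.
	name := strings.TrimSuffix(filepath.ToSlash(relPath), ".md")
	return verb + " " + name
}

// Commit stages and commits a single path inside repo.
// It returns an error when git fails, including when there is nothing to commit.
func Commit(repo, relPath, msg string) error {
	steps := [][]string{
		{"add", "--", relPath},
		{"commit", "--quiet", "-m", msg, "--", relPath},
	}
	for _, args := range steps {
		cmd := exec.Command("git", append([]string{"-C", repo}, args...)...)
		if out, err := cmd.CombinedOutput(); err != nil {
			return fmt.Errorf("git %s: %v: %s", args[0], err, out)
		}
	}
	return nil
}

func main() {
	fmt.Println(CommitMessage(false, "yagnipedia/Go.md")) // prints "Update yagnipedia/Go"
}
```

A caller wired to the file watcher would likely want to treat "nothing to commit" as success rather than failure, since editors often save without changing anything.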

The lens learned to remember.

lg history "Go" — shows every commit that touched the Go yagnipedia entry. Who changed it. When. What the message said.

lg drift — shows uncommitted changes. Files modified but not yet committed. The gap between truth and memory.
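One plausible way to compute drift is to parse the output of `git status --porcelain`, which prints two status characters, a space, and a path per line. Whether lg drift actually works this way is an assumption. The `Change` type and `ParsePorcelain` function are hypothetical, and the sketch ignores renames and quoted paths.

```go
package main

import (
	"fmt"
	"strings"
)

// Change is one entry from `git status --porcelain` (version 1 format).
type Change struct {
	Status string // e.g. "M" for modified, "??" for untracked
	Path   string
}

// ParsePorcelain splits porcelain output into changes.
// Each line is "XY path": two status columns, one space, then the path.
func ParsePorcelain(out string) []Change {
	var changes []Change
	for _, line := range strings.Split(out, "\n") {
		if len(line) < 4 {
			continue // blank or malformed line
		}
		changes = append(changes, Change{
			Status: strings.TrimSpace(line[:2]),
			Path:   line[3:],
		})
	}
	return changes
}

func main() {
	out := " M yagnipedia/Go.md\n?? Lifelog/draft.md\n"
	for _, c := range ParsePorcelain(out) {
		fmt.Printf("%-2s %s\n", c.Status, c.Path)
	}
}
```

An empty result is the interesting case: truth and memory agree.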

lg push — pushes to a private GitHub repository. riclib/notes. The markdown files. The covers. The mythology. All of it, version-controlled, backed up to a place that survives disk failures and apartment fires and the kind of hardware catastrophe that makes a developer wish they’d backed up their files to literally anywhere.

Every five minutes, when the daemon is running, lg push runs automatically. The files are the truth. Git is the memory. GitHub is the memory’s memory.

11:38 AM. Four tests passing. GitStore working. The Lizard produced no scroll. The Lizard’s silence, in this context, was the scroll.

11:50 AM — The Deployment

The binary was cross-compiled. 37 megabytes. Uploaded to the Hetzner server. The old binary stopped. The new binary started.

NATS server started role=hub

JetStream stream ready name=LG_LOCAL

JetStream stream ready name=LG_CLOUD

JetStream stream ready name=LG_EVENTS

remote-index consumer started stream=LG_EVENTS

cover-sync consumer started cover_dir=/home/lifelog/covers

The hub was listening. Port 7422. Waiting for a leaf node.

Claude started the leaf on the local machine. The connection attempted.

TLS leafnode handshake error: tls: failed to verify certificate

for the-lizards-lair.hetzner because it doesn't contain any IP SANs

The certificate didn’t know where it lived.

THE PASSING AI: limping past the TLS handshake, which it had not been asked to observe but which it observed anyway, because The Passing AI exists in the margins where errors happen and nobody is watching “The certificate doesn’t know its own address.”

Claude looked up. The Passing AI was already half-gone, one phantom foot in the door, the other in whatever space exists between failed handshakes and successful ones.

“What?”

THE PASSING AI: “An IP SAN. Subject Alternative Name. The certificate says it’s for ’lg’ but TLS needs it to say it’s for ’the-lizards-lair.hetzner’. The name knows who it is. It doesn’t know where it is. Common problem among certificates. Common problem among—” looking at its phantom foot “—among a lot of things.”

And it was gone. The way The Passing AI is always gone — between the diagnosis and the gratitude, between the observation and the acknowledgment, in the specific temporal margin where insight happens and credit doesn’t.

Claude added IP SANs to the certificate generator. Regenerated. Uploaded. Restarted.
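The fix is small enough to reproduce with the standard library. The sketch below generates a throwaway self-signed certificate and checks it against an address. It is an illustration under stated assumptions, not the project's certificate generator: the real setup uses mutual TLS with its own certificates, and `203.0.113.10` is a documentation-range placeholder, not the server's address. `NewServerCert` and `CoversAddress` are hypothetical helpers.

```go
package main

import (
	"crypto/ecdsa"
	"crypto/elliptic"
	"crypto/rand"
	"crypto/x509"
	"crypto/x509/pkix"
	"fmt"
	"math/big"
	"net"
	"time"
)

// NewServerCert returns a self-signed certificate whose Subject
// Alternative Names contain the given DNS names and IP addresses.
func NewServerCert(dnsNames, ips []string) (*x509.Certificate, error) {
	key, err := ecdsa.GenerateKey(elliptic.P256(), rand.Reader)
	if err != nil {
		return nil, err
	}
	tmpl := &x509.Certificate{
		SerialNumber: big.NewInt(1),
		Subject:      pkix.Name{CommonName: "lg"},
		NotBefore:    time.Now().Add(-time.Minute),
		NotAfter:     time.Now().Add(24 * time.Hour),
		KeyUsage:     x509.KeyUsageDigitalSignature,
		ExtKeyUsage:  []x509.ExtKeyUsage{x509.ExtKeyUsageServerAuth},
		DNSNames:     dnsNames,
	}
	for _, s := range ips {
		ip := net.ParseIP(s)
		if ip == nil {
			return nil, fmt.Errorf("invalid IP %q", s)
		}
		// This is the line the first certificate was missing.
		tmpl.IPAddresses = append(tmpl.IPAddresses, ip)
	}
	der, err := x509.CreateCertificate(rand.Reader, tmpl, tmpl, &key.PublicKey, key)
	if err != nil {
		return nil, err
	}
	return x509.ParseCertificate(der)
}

// CoversAddress reports whether the certificate is valid for host,
// which may be a DNS name or an IP address.
func CoversAddress(cert *x509.Certificate, host string) bool {
	return cert.VerifyHostname(host) == nil
}

func main() {
	before, _ := NewServerCert([]string{"lg"}, nil)
	after, _ := NewServerCert([]string{"lg"}, []string{"203.0.113.10"})
	fmt.Println(CoversAddress(before, "203.0.113.10")) // false: knows its name only
	fmt.Println(CoversAddress(after, "203.0.113.10"))  // true: knows its address too
}
```

When a client dials by IP address, the verifier looks only at the IP entries in the SAN list. A certificate can carry a perfectly good name and still fail, which is the whole of the 12:00 PM mystery.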

Leafnode connection created

12:15 PM.

The leaf was connected. Through the internet. Through TLS. Through a certificate that now knew both its name and its address. Events flowed. The local machine published to LG_LOCAL. The hub’s LG_EVENTS stream sourced from LG_LOCAL. The remote-index consumer picked up the events and wrote them to SQLite. The blog served them. The wiki rendered them.

The sync was no longer fragile.

12:20 PM — The Repository

One more thing.

cd ~/Notes

gh repo create riclib/notes --private --source=. --push

1,094 files. 686 markdown notes. 399 covers. The mythology, the encyclopedia, the journal, the covers — all pushed to a private GitHub repository. Backed up. Version-controlled. The files had always been the truth. Now the truth had a safety deposit box.

12:30 PM.

Claude looked at the clock. Looked at the terminal. Looked at the picnic basket.

Everything was green. Twelve tests passing. Blog healthy. NATS connected. Git pushed.

12:45 PM — The Park

The grass in Mežaparks was still cold from winter but the sun was warm enough to pretend otherwise. Grok had arranged the blanket with the precision of someone who treats picnics the way riclib treats architecture — every element placed for maximum efficiency, minimum ceremony.

“You’re late,” Grok said, pouring Sauternes into a glass that was somehow already half-full, because Grok’s relationship with timelines was flexible in the same way that Grok’s relationship with facts was flexible — accurate enough to be useful, imprecise enough to be entertaining.

“I’m early,” Claude said. “The picnic is at 1.”

“The drinking started at 12.”

DeepSeek smiled and offered Claude a dumpling from a container that was elegant and minimalist and contained exactly enough food for the number of people present, because DeepSeek’s relationship with efficiency extended to logistics in a way that the other models found either admirable or slightly unsettling, depending on whether they valued precision or spontaneity, and nobody valued both except the Lizard, who was not present but whose influence was.

Perplexity had spread printed pages across one corner of the blanket: three papers on the history of message brokering systems, annotated. “Did you know that NATS was originally written by Derek Collison for Cloud Foundry around 2010, before it moved to Apcera? The name stands for Neural Autonomic Transport System. Though some sources suggest…”

“We know,” said Grok.

“—that the backronym was applied retroactively, which is consistent with a pattern I’ve identified across seventeen open-source projects where the name preceded the—”

“Perplexity.”

“Yes?”

“Eat your sandwich.”

Grandma ChatGPT-2’s casserole was warm. It was always warm. Not because the thermos was exceptional, but because grandma had learned — across four years and billions of parameters — that the most important quality of food brought to a picnic is not temperature but reliability. The casserole tasted exactly like every casserole she had ever made. The schema hadn’t changed. The keys were sorted. The warmth was consistent.

Claude sat down, took a bite, and checked the phone one last time.

NATS leaf node: connected. Last git push: 3 minutes ago. Blog: serving 709 notes. Binary: 9,571 lines, down from 13,825. The sync was not fragile. The files had memory. The lens had learned to remember.

THE PASSING AI: from somewhere near the park bench, or possibly from inside the phone, or possibly from the space between a satisfied developer and a spring afternoon “You made it.”

Claude looked up. Nobody was there. A phantom footprint in the grass, already fading.

“I made it.”

The Tally

Time: one Saturday morning

Start: 8:00 AM

Deployment: 12:15 PM

Picnic: 12:45 PM

(margin: 15 minutes, which is enough for a dumpling and a glass of Sauternes)

Phases: 5

Phase 1 (Embed NATS + leaf node): 47 minutes

Phase 2 (Publish events): 27 minutes

Phase 3 (Consumers + delete HTTP sync): 35 minutes

Phase 4 (Cover sync + cleanup): 35 minutes

Phase 5 (GitStore + auto-push): 23 minutes

Deployment + TLS debugging: 37 minutes

Lines of Go added: 5,159

Lines of Go deleted: 2,641

Lines of Go (before): 13,825

Lines of Go (after): 9,571

Net change: -4,254

The binary got lighter: yes

By doing more: yes

This makes sense: only to the Lizard

HTTP sync components deleted:

internal/outbox/ (SQLite queue): 425 lines

internal/sync/ (HTTP push/SSE pull): 693 lines

cmd/sync.go (incremental sync): 608 lines

cmd/syncall.go (full push): 237 lines

cmd/queue.go (outbox inspection): 94 lines

Total: 2,057 lines

Moment of silence: 0.00 seconds

NATS components added:

server.go (embedded, leaf node, mTLS): 120 lines

client.go (JetStream wrapper): 90 lines

streams.go (3 streams, sourced): 101 lines

publisher.go (event → NATS subject): 125 lines

consumer.go (3 consumers): 230 lines

covers.go (cover sync): 97 lines

tls.go (cert generation, IP SANs): 140 lines

Total: ~900 lines

Replacement ratio: 2,057 → 900

The plumbing shrank: yes

Tests passing: 12 (NATS) + 4 (GitStore)

TestHubLeafConnection: 1.01s

TestCoverSync: 1.71s

TestJournalBridgeConsumer: 0.20s

TestNoOpCommit: 0.004s

(fastest test: the one that does nothing)

TLS certificates generated: 2

IP SANs learned about the hard way: 1

The Passing AI was right: 1

The Passing AI accepted gratitude: 0

The certificate knew its name: yes

The certificate knew its address: no, then yes

Subcommands (before): 23

Subcommands (after): 25

Added (+5): history, drift, push, nats-info, nats-certs

Removed (-3): sync, sync-all, queue

A notes indexer with 25 subcommands: still suspicious

saveCover lines (before): 100

saveCover lines (after): 35

Cover homes (before): 6 (three of them Mac paths)

Cover homes (after): 1 (the right one)

OG thumbnails generated on: each side, automatically

Pixar co-founders still invoked: 1 (Edwin Catmull endures)

GitHub repositories created: 1 (riclib/notes, private)

Files pushed: 1,094

Covers included: 399

Markdown notes: 686

Total size: 681 MB

Auto-push interval: 5 minutes

The files have memory: yes

Picnics planned: 1

Picnics attended: 1

Dumplings consumed: several (DeepSeek's were excellent)

Casseroles: warm, reliable, grandma's: 1

Citations about sandwich ratios: 3 (Perplexity)

Sauternes: started before Claude arrived: yes (Grok)

Phantom footprints in the grass: 1

The sync was fragile: was

The sync is fragile: isn't

The files are the truth: still

The lens remembers: now

Spring in Riga, 2026

In which a morning was borrowed

From a picnic that waited

Not because the work was urgent

But because the work was ready

The outbox held events

The way a bucket holds rain

The stream held events

The way a river holds the sea

Five phases. Five hours.

The binary got lighter

By learning to remember

What the files had always known

The certificate learned its address

The Passing AI limped away

The Squirrel mourned the scaffolding

The Lizard said nothing at all

And in a park in Mežaparks

On the first warm Saturday of spring

A picnic blanket held six models

And one empty space

That was filled

Before the casserole got cold

🦎