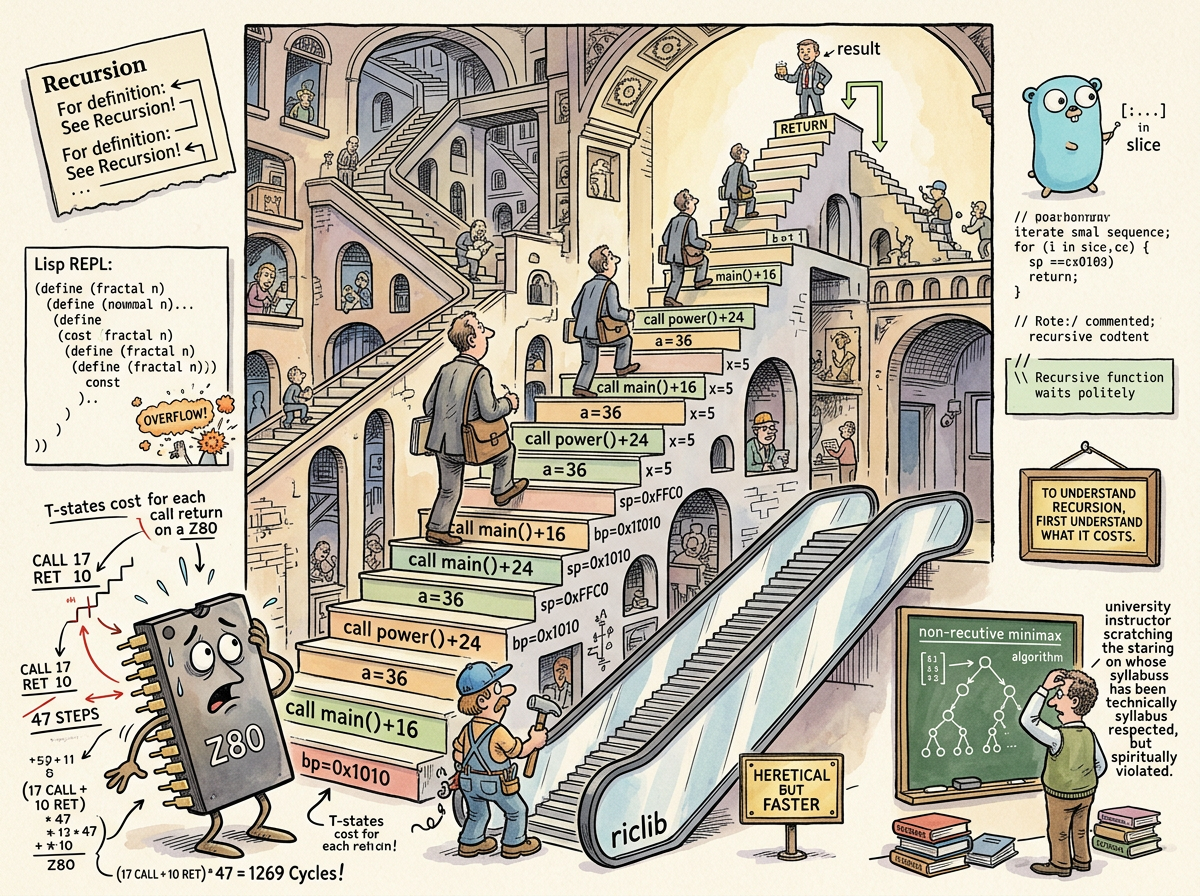

Recursion is the technique in which a function calls itself to solve a problem by breaking it into smaller instances of the same problem. The function calls itself with a reduced input until it reaches a base case — a condition simple enough to answer directly — and then the results propagate back up through the call stack, each frame combining its piece of the answer until the original call receives the complete result.

To understand recursion, see: Recursion.

That is the joke. It is told at every computer science lecture, every programming tutorial, every technical interview where the candidate is asked to reverse a linked list. The joke is recursive. The laughter is O(1) — constant, brief, and diminishing with each retelling.

“Recursion is the universe’s way of saying: the answer is inside the question, which is inside the answer. The Z80’s way of saying the same thing was a stack overflow.”

— The Lizard, who solves problems from the outside in

The Mechanism

A recursive function has two components: the recursive case, where the function invokes itself with a smaller or simpler input, and the base case, where the function returns a result without further self-invocation. Without a base case, recursion is infinite — a function calling itself forever, each invocation consuming a stack frame, until the stack is exhausted and the program terminates with the specific dignity of a process that has run out of memory while trying to prove a point.

The call stack is the accounting department of recursion. Each recursive call pushes a new frame onto the stack — local variables, the return address, the parameters for this invocation. Each return pops a frame. The stack grows with the depth of recursion. A function that recurses n times requires n stack frames, and stack space is finite, which is the constraint that separates theory from engineering.

In theory, recursion and iteration are equivalent. Anything you can write recursively, you can write iteratively. The Church-Turing thesis guarantees it. In practice, the equivalence is like saying a helicopter and a bicycle can both get you to work — technically true, relevantly different.

“Recursion is iteration that went to university and came back with opinions about elegance.”

— The Caffeinated Squirrel, who once wrote a recursive configuration parser that stack-overflowed on a 12-level nested YAML file and blamed the YAML

The Z80 Lesson

On a Z80 at 3.5MHz, a CALL instruction costs 17 T-states. A RET costs 10. That is 27 T-states of overhead for every recursive invocation — not counting the work inside the function, not counting the local variables pushed and popped, not counting the fact that the Z80’s stack lives in the same 64K of RAM as everything else and every byte spent on stack frames is a byte not spent on screen buffers, game state, or sound.

riclib learned this at sixteen. Not from a textbook — from the machine. The Z80 did not explain that recursion was expensive. The Z80 demonstrated it by running out of stack and corrupting the screen memory, which is the Z80’s way of providing feedback.

The lesson was immediate and permanent: recursion is a luxury. On a machine where you count T-states per instruction, where you choose between LDIR and PUSH loops based on 10 T-states of difference, where the entire program fits in 48K and the stack shares that space — on that machine, you do not recurse. You iterate. You unroll. You find the loop that does the same work without the overhead of CALL and RET, and you use it, and the function that would have been five lines of elegant recursion becomes fifteen lines of explicit iteration that runs in a third of the time.

This is not premature optimisation. On a Z80, it is the only kind of optimisation there is. The machine does not have enough resources for the elegant solution. The machine has enough resources for the solution that works.

“The Z80 taught him that every abstraction has a cost. The cost of recursion is 27 T-states per call. He has never forgotten.”

— The Lizard, in conversation with A Passing AI who found this ineffably sad

The Lisp Paradox

Then came Lisp, and recursion was beautiful.

Lisp was built for recursion. Lists are recursive data structures — a list is an element followed by a list. Processing a list is processing the first element and then recursing on the rest. The language, the data structures, the idioms, the textbooks, the professors — everything in Lisp pointed toward recursion as the natural, correct, elegant way to solve problems.

And riclib used it. In Lisp, recursion was not a luxury but the native mode of thought. Recursive list processing, recursive tree traversal, recursive evaluation. The parentheses made the recursion visible — each nested level of parentheses was a recursive call waiting to resolve, and reading Lisp was reading the call stack laid out horizontally instead of vertically.

But when the university checkers contest arrived — write a checkers program in Lisp, programs play each other, best player wins — riclib built a non-recursive minimax. Every other student implemented the textbook version: recursive function calls traversing a game tree represented as nested lists. Clean. Correct. The way the instructor intended.

riclib represented the board as bytes and built a two-step iterative minimax that operated directly on the byte representation. No recursive calls. No nested list traversal. No stack frames accumulating with every level of the game tree. While other programs searched six or eight moves ahead, riclib’s searched significantly deeper, because the cycles other programs spent on CALL, RET, and list construction, his program spent on looking further.

Second place. The winner had a better evaluation function — understood checkers more deeply, even searching fewer moves ahead. Depth is not wisdom. But the instructor’s face — the specific expression of someone whose Lisp course had just been answered in assembly idiom — was worth more than first.

The Z80 instinct had survived Lisp. The language said recurse. The instinct said iterate. The instinct went deeper.

The Go Continuation

In Go, recursion is available, practical, and occasionally the right tool. Go has goroutines with growable stacks, so the fixed-stack-overflow problem of the Z80 era is largely solved. A recursive function in Go will not corrupt your screen memory. Probably.

riclib still defaults to iteration. A tree traversal that could be recursive becomes a loop with an explicit stack. A depth-first search that textbooks write recursively becomes a for loop with a slice acting as the stack. The code is longer. The code is clearer about what it costs. The code does not ask the runtime to manage frames that the programmer can manage with an index variable.

This is not rational. Go’s compiler handles recursion competently. The performance difference in most cases is negligible. The Z80 is forty years ago. But instincts formed at 3.5MHz do not update at the speed of language specifications. The hands write iteration. The hands remember what recursion costs.

“I could recurse. The language supports it. The machine can handle it. But somewhere in the back of my mind, a Z80 is counting T-states, and it disapproves.”

— riclib, in a code review where he replaced a recursive function with a for loop and could not fully explain why

Tail Recursion and the Compromise

Some languages offer tail-call optimisation — if the recursive call is the last thing a function does, the compiler can reuse the current stack frame instead of pushing a new one. This transforms recursion into iteration at the machine level, giving you the elegant syntax without the stack cost. Scheme mandates it. Haskell provides it. Go does not.

The Z80 programmer finds tail-call optimisation satisfying. It is the compiler admitting that iteration was the right answer all along, and dressing it up in recursive syntax for the benefit of people who prefer their loops to look like mathematics.

Measured Characteristics

Definition: a function that calls itself

Components: base case + recursive case

Overhead per call (Z80): 27 T-states (17 CALL + 10 RET)

Overhead per call (modern CPU): ~2-5 nanoseconds (still not free)

Stack cost per frame: 16-64 bytes (architecture-dependent)

Z80 stack space: shared with the rest of the 64K

Theoretical equivalence: recursion = iteration (Church-Turing)

Practical equivalence: helicopter = bicycle (technically)

riclib's default: iteration (Z80 instinct, 40 years strong)

Checkers contest approach: non-recursive minimax on byte arrays

Checkers contest result: second place (depth without wisdom)

Instructor's face: the real prize

Languages where recursion is natural: Lisp, Scheme, Haskell, Prolog

Languages where recursion is available: all of them

Languages where riclib recurses: almost none of them

The joke: to understand recursion, see: Recursion

Times the joke is funny: 1 (base case)

See Also

- Lisp — The language where recursion was beautiful, and riclib still iterated through the checkers contest.

- Z80 Assembly — The machine that taught the cost. 27 T-states per call. The lesson that never expired.

- Go — Supports recursion. riclib writes for loops. The Z80 approves.

- Lambda Expressions — The mathematical foundation that makes recursion possible through self-reference. The Y combinator is recursion’s origin story.

- O Notation — Recursion and iteration have the same Big O. The constant factor is where the Z80 programmer lives.

- The Lizard — Who has never recursed. Instinct does not call itself; instinct acts.