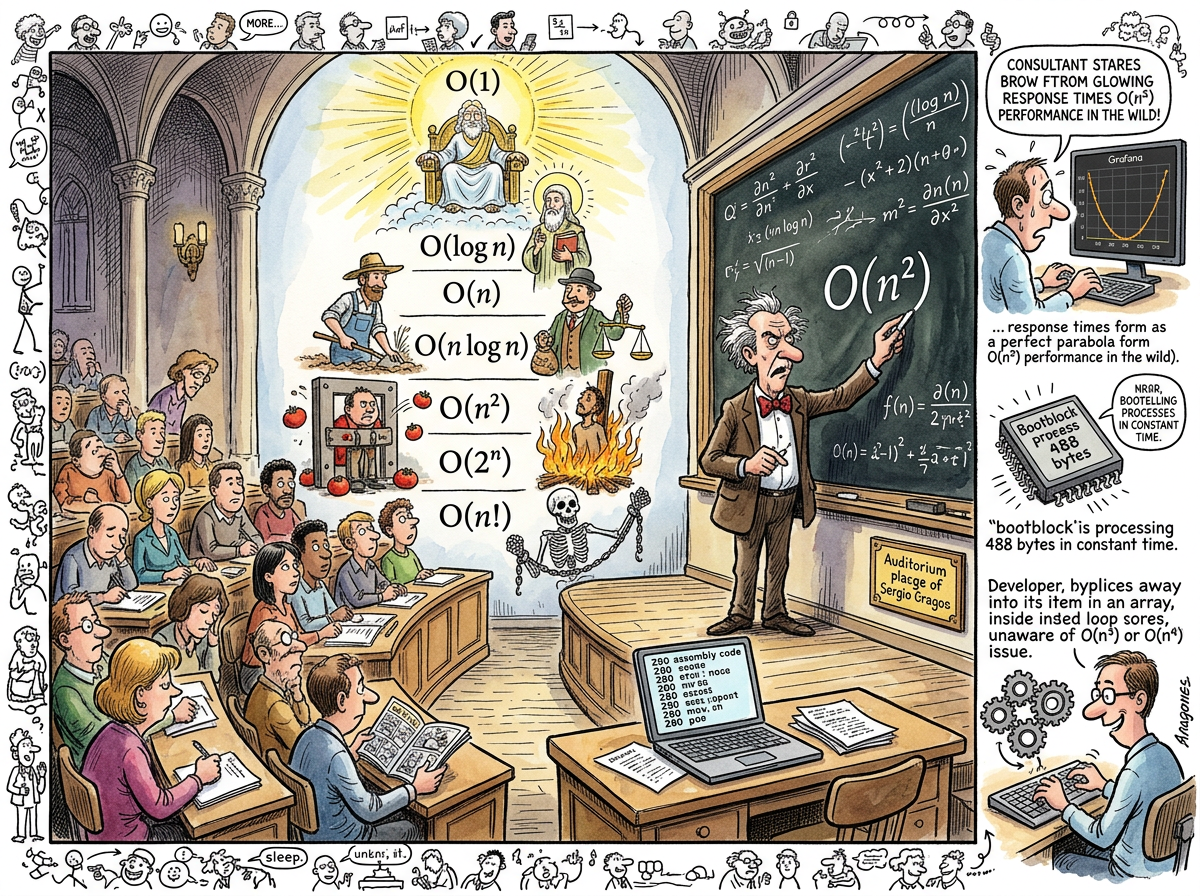

O notation (Big O notation, asymptotic notation, Bachmann–Landau notation, or “the thing your interviewer asks about to see if you went to a proper university”) is the mathematical framework for describing how an algorithm’s resource consumption grows as the input size increases. O(n) means the cost grows linearly with the input. O(n²) means the cost grows quadratically — double the input, quadruple the time. O(1) means the cost is constant regardless of input, which is either a hash table or a lie.

O notation is the most important concept in computer science that can be fully understood in five minutes and fully ignored for an entire career.

“The boy counted cycles per instruction on a Z80 at 3.5MHz. He did not know the word ‘asymptotic.’ He did not need to.”

— The Lizard, who has never read Knuth but agrees with him

The Formal Definition Nobody Needs

Formally, f(n) = O(g(n)) if there exist positive constants c and n₀ such that 0 ≤ f(n) ≤ c·g(n) for all n ≥ n₀. This means that for sufficiently large inputs, f(n) grows no faster than g(n), up to a constant factor.

The constant factor is the part that O notation discards. This is simultaneously its greatest strength and its most dangerous lie. O notation says that an algorithm that takes 3n steps and an algorithm that takes 3,000,000n steps are both O(n) — linearly equivalent, asymptotically identical, theoretically the same. In practice, the second algorithm is a million times slower, but O notation has made its point and left the room.

“O notation is the art of ignoring the constant factor. The constant factor is where you live.”

— The Caffeinated Squirrel, who once declared a O(1) lookup to be “basically instant” before discovering the constant was 47 milliseconds

The Hierarchy

The computer science community has arranged algorithmic complexity into a hierarchy that functions as both classification system and moral judgement:

O(1) — Constant time. The aristocracy. Hash lookups, array indexing, the operations that do not care how much data you have. “Accessing the first byte of a 488-byte bootblock takes the same time as accessing the first byte of a 4GB file” is O(1) in action. The fact that the bootblock loads faster overall is the constant factor that O notation does not discuss.

O(log n) — Logarithmic. Binary search. The satisfying certainty that doubling your data adds only one more step. A million records searched in twenty comparisons. Every programmer’s favourite party trick, assuming the data is sorted, which it never is.

O(n) — Linear. The honest algorithm. Touch every element once. One pass through the data. “Read every byte of the screen to clear it” — the LDIR approach to clearing the Spectrum display — is O(n) where n is 6,144 bytes. The Z80 Assembly PUSH trick is also O(n) but with a smaller constant factor, which O notation considers irrelevant and the Z80 programmer considers the entire point.

O(n log n) — The speed of sorting. Merge sort, heap sort, the theoretical floor for comparison-based sorting. Respectable. The algorithm equivalent of a sensible car.

O(n²) — Quadratic. The nested loop. The algorithm that works beautifully in testing with 100 records and catches fire in production with 100,000. The most common source of “it worked on my machine” followed by “the database is slow” followed by “we need to scale horizontally” followed by a consultant drawing Gall’s Law on a whiteboard.

O(2ⁿ) — Exponential. The brute force. The “try every combination.” The algorithm that works for n=10 and does not work for n=30 and will not work for n=30 before the heat death of the universe.

O(n!) — Factorial. The travelling salesman. The algorithm that exists to prove that some problems are hard and some ambitions are mathematical hubris.

The University Course riclib Never Took

riclib never formally studied O notation. Not in a lecture hall. Not from a textbook. Not as a chapter heading between “Data Structures” and “Graph Algorithms.” The concept was never presented on a chalkboard with the gravity of a professor explaining the hierarchy.

This is not because riclib did not attend university — he did. It is not because the university did not teach algorithms — it did. It is one of those gaps in a technical education that happens when the student is busy learning Lisp, Prolog, and Lambda Expressions while the algorithms course happens in a different semester or a different building or at a time when the student is writing a checkers engine using byte representations instead of attending the lecture on time complexity.

And yet.

The boy who counted T-states on a Z80 — 11 for PUSH, 21 for LDIR, 13 for DJNZ taken, 8 for DJNZ not taken — was performing constant-factor analysis without the vocabulary. The teenager who chose 16 pushes per loop iteration because 192 iterations fit in an 8-bit register and DJNZ was the tightest loop was optimising the inner loop, which is the practical consequence of understanding O(n) even if you have never written O(n).

The consultant who looked at a financial services company with 1,200 users and 47 microservices and said “your monolith responded in 47 milliseconds” was reading a Grafana dashboard that showed the practical consequences of O(n²) service-to-service communication — each microservice talking to multiple other microservices, each hop adding latency, the total latency growing quadratically with the number of services involved in a request.

The man who built an FTS5 search index in SQLite — full-text search in logarithmic time against a corpus of 253 notes — chose the right data structure without calculating the asymptotic complexity, because after thirty-five years of counting cycles the instinct to “not do the same work twice” and “touch each element the minimum number of times” is not a learned behaviour but a reflex.

“He never learned the notation. He always obeyed the law. The notation is a description. The law is a consequence. You can ignore the description. You cannot ignore the consequence.”

— The Lizard, who has no formal education in anything

The Constant Factor

O notation’s deliberate blindness to constant factors creates a specific type of expert: the computer scientist who can prove that two algorithms have the same complexity but cannot explain why one of them is four times faster on actual hardware.

The Z80 programmer lives in the constant factor. The LDIR screen clear and the PUSH screen clear are both O(n). Big O considers them equivalent. The Z80 considers one of them 3.8 times faster, and at 25 frames per second the 3.8x is 675 milliseconds of CPU time per second, which is the difference between a game that runs and a game that doesn’t.

This is not a criticism of O notation. O notation does what it claims to do — it describes growth rates. The criticism is of the programmer who reads O(n) = O(n) and concludes that the work is done. The growth rate is the same. The constant is where you ship.

“Big O tells you what happens when n goes to infinity. The bug report tells you what happens when n is 47.”

— The Caffeinated Squirrel, who once shipped an O(n log n) sort that was slower than the O(n²) bubble sort it replaced, because n was always 12

Measured Characteristics

Inventor: Paul Bachmann (1894), popularised by Knuth

Purpose: describe how algorithms scale

What it measures: growth rate as input grows

What it ignores: constant factors (where you live)

riclib's formal education in: none

riclib's practical education in: 35 years of counting cycles

T-states per LDIR iteration: 21 (O(n), constant = 21)

T-states per PUSH: 11 (O(n), constant = 11)

O notation's opinion: equivalent

The Z80's opinion: 3.8x faster

Most common O(n²) in the wild: nested loops over API responses

Most common excuse: "it's fine, we only have 100 records"

Most common follow-up: "we now have 100,000 records"

Consultant's diagnostic tool: Grafana dashboard shaped like a parabola

Interviews that ask about it: all of them

Careers built without knowing it: at least one (documented)

See Also

- Z80 Assembly — Where the constant factor is the entire discipline. O notation would have been redundant; the T-state table was more precise.

- Premature Abstraction — The evil twin of premature optimisation. Both invoke O notation to justify themselves.

- Technical Debt — What accumulates when O(n²) algorithms are shipped with the comment “we’ll optimise later.”

- riclib — The entity who never studied complexity theory but counted clock cycles for thirty-five years, which is the same thing with better resolution.