Rate limiting is the practice of restricting the number of requests a client can make to a service within a given time window. It is the API’s bouncer — a large, impassive entity standing between you and the thing you are trying to reach, whose only job is to say “no” and whose only metric of success is how often it says it.

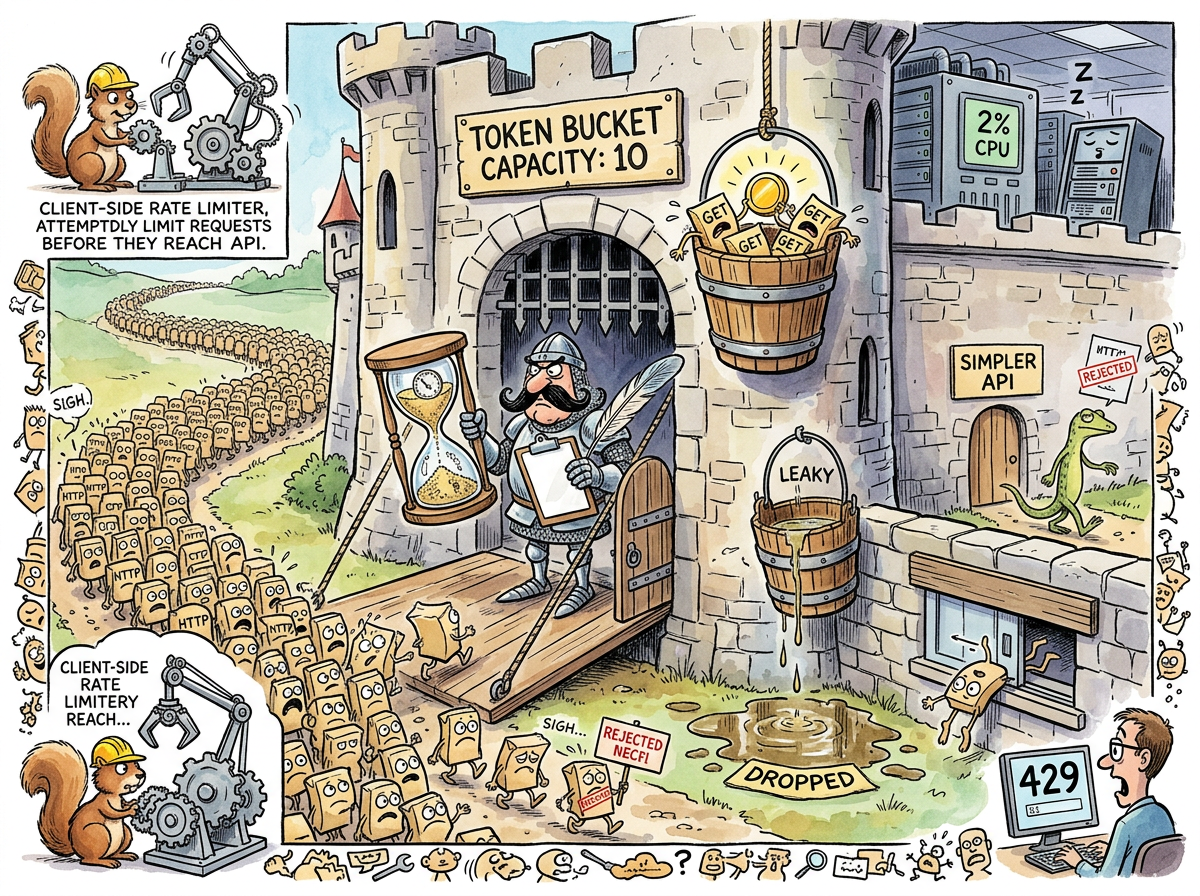

The concept is simple: too many requests are bad, therefore fewer requests are good, therefore we shall enforce fewer requests by rejecting the ones we don’t like. The rejected requests receive a 429 Too Many Requests response, which is HTTP’s way of saying “you are not welcome here, but please do come back later, at which point you will also not be welcome.”

“Rate limiting is the only security mechanism whose failure mode is identical to its success mode. In both cases, legitimate users cannot access the service.”

— A Passing AI, staring at a dashboard

The Algorithms

There are several algorithms for rate limiting. They all do the same thing. They all say “slow down.” They differ only in the mathematical formalism with which they say it.

Token Bucket. A bucket holds tokens. Each request consumes a token. Tokens regenerate at a fixed rate, up to the bucket’s capacity. When the bucket is empty, requests are rejected. The metaphor is intuitive: the bucket is the capacity, the tokens are permission, and the empty bucket is the moment when your perfectly valid request meets the server’s perfectly valid indifference.
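For readers who prefer their indifference in executable form, here is a minimal sketch of a token bucket. The class name, the `capacity` and `refill_rate` parameters, and the choice to pass the clock in explicitly (so the behaviour is easy to test) are all assumptions made for illustration, not a description of any particular service.

```python
class TokenBucket:
    """Illustrative token bucket; names and parameters are hypothetical."""

    def __init__(self, capacity: float, refill_rate: float, now: float = 0.0):
        self.capacity = capacity        # maximum tokens the bucket can hold
        self.refill_rate = refill_rate  # tokens regenerated per second
        self.tokens = capacity          # start with a full bucket
        self.last = now                 # time of the last refill

    def allow(self, now: float, cost: float = 1.0) -> bool:
        # Regenerate tokens for the elapsed time, never beyond capacity.
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_rate)
        self.last = now
        # Spend a token if one is available; otherwise reject.
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

With `capacity=2` and `refill_rate=1.0`, two requests at the same instant pass, the third is rejected, and one more is admitted a second later.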

Leaky Bucket. Requests enter the top of a bucket and drain out the bottom at a fixed rate. If requests arrive faster than they drain, the bucket overflows and requests are dropped. The leaky bucket smooths traffic into a steady stream. It also drops traffic that arrives in bursts, which is all traffic, because traffic is bursty, because users are bursty, because the real world is bursty, because the leaky bucket was designed for a world that does not exist.
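The leaky bucket described above can be sketched as a bounded queue that releases requests at a fixed rate. This is one possible reading (the queue variant), with hypothetical names throughout: `offer` is the top of the bucket, `drain` is the hole in the bottom, and the clock is passed in explicitly so the sketch stays deterministic.

```python
from collections import deque


class LeakyBucket:
    """Illustrative queue-style leaky bucket; names are hypothetical."""

    def __init__(self, capacity: int, drain_rate: float):
        self.capacity = capacity      # how many requests may wait in the bucket
        self.drain_rate = drain_rate  # requests released per second
        self.queue = deque()
        self.last_drain = 0.0

    def offer(self, item, now: float) -> bool:
        # Overflow: the bucket is full, so the request is dropped.
        if len(self.queue) >= self.capacity:
            return False
        # An empty bucket starts its drain clock when the first request lands.
        if not self.queue:
            self.last_drain = now
        self.queue.append(item)
        return True

    def drain(self, now: float) -> list:
        # Number of whole drain slots that have elapsed since the last release.
        slots = int((now - self.last_drain) * self.drain_rate)
        count = min(slots, len(self.queue))
        released = [self.queue.popleft() for _ in range(count)]
        if slots > 0:
            self.last_drain += slots / self.drain_rate
        return released
```

A burst of three requests into a bucket of capacity two loses the third immediately; the two survivors leave one per second, however urgently they arrived.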

Sliding Window. A window of time slides forward, counting requests within it. Exceeding the limit triggers rejection. The sliding window is elegant in theory and an accounting nightmare in practice, requiring the server to remember when every recent request arrived, which requires storage, which requires its own rate limiting.
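The accounting can be sketched as a log of timestamps, which makes the storage cost visible: one entry per admitted request still inside the window. The class name, `limit`, and `window` are hypothetical, and this is the exact (log) variant rather than any of the cheaper approximations.

```python
from collections import deque


class SlidingWindowLog:
    """Illustrative sliding-window log; names are hypothetical."""

    def __init__(self, limit: int, window: float):
        self.limit = limit         # maximum requests allowed per window
        self.window = window       # window length in seconds
        self.timestamps = deque()  # arrival time of every admitted request

    def allow(self, now: float) -> bool:
        # Forget requests that have slid out of the window.
        cutoff = now - self.window
        while self.timestamps and self.timestamps[0] <= cutoff:
            self.timestamps.popleft()
        # Admit only if the window still has room.
        if len(self.timestamps) < self.limit:
            self.timestamps.append(now)
            return True
        return False
```

Memory grows with `limit` per client, which is the point at which someone proposes rate limiting the rate limiter.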

The Caffeinated Squirrel has implemented all three. In the same service. Simultaneously. “Defence in depth,” the Squirrel calls it. The Lizard calls it “three bouncers arguing about who’s in charge of the door.”

The Ouroboros

Rate limiting exists because of the Thundering Herd, the Retry Storm, and the Dogpile Effect. These are the three horsemen of distributed systems — massive coordinated surges of traffic that can flatten a service in seconds. Rate limiting is the last line of defence when politeness fails.

The irony — and it is an irony so perfect it should be framed — is that rate limiting causes retry storms. A client is rejected. The client retries. The retry is rejected. The client retries again, with backoff. Fifty other clients do the same. The retries pile up. The rate limiter sees a surge and tightens the limits. More rejections. More retries. The system has entered a feedback loop where the defence mechanism is generating the attack it was designed to prevent.

This is the ouroboros of distributed systems: the rate limiter eating its own tail, rejecting traffic that exists only because it rejected traffic.
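One common way to loosen the loop is to randomise the backoff, so that fifty rejected clients do not all return at the same instant. The sketch below uses capped exponential backoff with full jitter. That is a technique swapped in here for illustration, not something the text above prescribes, and the function name, `base`, and `cap` values are assumptions.

```python
import random
from typing import List, Optional


def backoff_delays(
    max_attempts: int,
    base: float = 0.5,
    cap: float = 30.0,
    rng: Optional[random.Random] = None,
) -> List[float]:
    """Return one randomised delay (in seconds) per retry attempt.

    Attempt n waits a uniform random time between 0 and
    min(cap, base * 2**n), so the ceiling doubles but the actual
    waits are spread out instead of synchronised.
    """
    rng = rng or random.Random()
    delays = []
    for attempt in range(max_attempts):
        ceiling = min(cap, base * 2 ** attempt)
        delays.append(rng.uniform(0.0, ceiling))
    return delays
```

Jitter does not stop the ouroboros from eating; it only makes it chew at irregular intervals, which is usually enough.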

“I once watched a rate limiter reject a retry that was caused by a rate limit that was triggered by a retry that was caused by a rate limit. Four layers deep. The original request was a health check.”

— The Caffeinated Squirrel, vibrating

The Client-Side Rate Limiter

The Squirrel’s natural response to being rate-limited is to build a client-side rate limiter — a mechanism that limits how fast the client sends requests, so the server’s rate limiter doesn’t have to reject them.

This is rate limiting the rate-limited. It is a pre-rejection rejection. It is the client saying “I know you’ll say no, so I’ll say no to myself first, faster.” The Squirrel considers this sophisticated. The Lizard considers this “paying two bouncers to guard an empty room.”

The client-side rate limiter requires configuration: how many requests per second? What bucket size? What backoff strategy? These values must match the server’s rate limits, which are not documented, or are documented incorrectly, or change without notice, or vary by endpoint, or vary by time of day, or vary by the server’s mood, which is a function of load, which is a function of how many clients are retrying, which is a function of how many clients were rate-limited.
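When the server does disclose something, it is often through a `Retry-After` header on the 429 response, whose value is either a number of seconds or an HTTP date. Whether any given service sends it is an assumption; the helper below is a hypothetical sketch that returns the suggested wait in seconds, or `None` when there is nothing usable to go on.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime
from typing import Optional


def retry_after_seconds(value: Optional[str], now: datetime) -> Optional[float]:
    """Interpret a Retry-After header value relative to `now` (timezone-aware)."""
    if value is None:
        return None
    value = value.strip()
    # Form 1: a non-negative integer number of seconds.
    if value.isdigit():
        return float(value)
    # Form 2: an HTTP date, e.g. "Wed, 21 Oct 2015 07:28:00 GMT".
    try:
        when = parsedate_to_datetime(value)
    except (TypeError, ValueError):
        return None
    if when.tzinfo is None:
        # Treat a date without zone information as UTC.
        when = when.replace(tzinfo=timezone.utc)
    # A date in the past means "retry now", not "retry in negative time".
    return max(0.0, (when - now).total_seconds())
```

A client might feed this value into its own limiter instead of guessing, falling back to its configured backoff when the result is `None`.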

The Lizard’s Position

The Lizard does not implement rate limiting. The Lizard sends one request. The request succeeds. The Lizard moves on.

When asked about rate limiting strategy, the Lizard responds:

DON'T SEND

TOO MANY REQUESTS

THIS IS NOT

AN ALGORITHM 🦎