Byzantine Failure is a failure mode in distributed systems in which a component does not merely fail — it lies. It sends conflicting information to different nodes, responds incorrectly with total confidence, and continues passing health checks while actively corrupting the system’s understanding of reality.

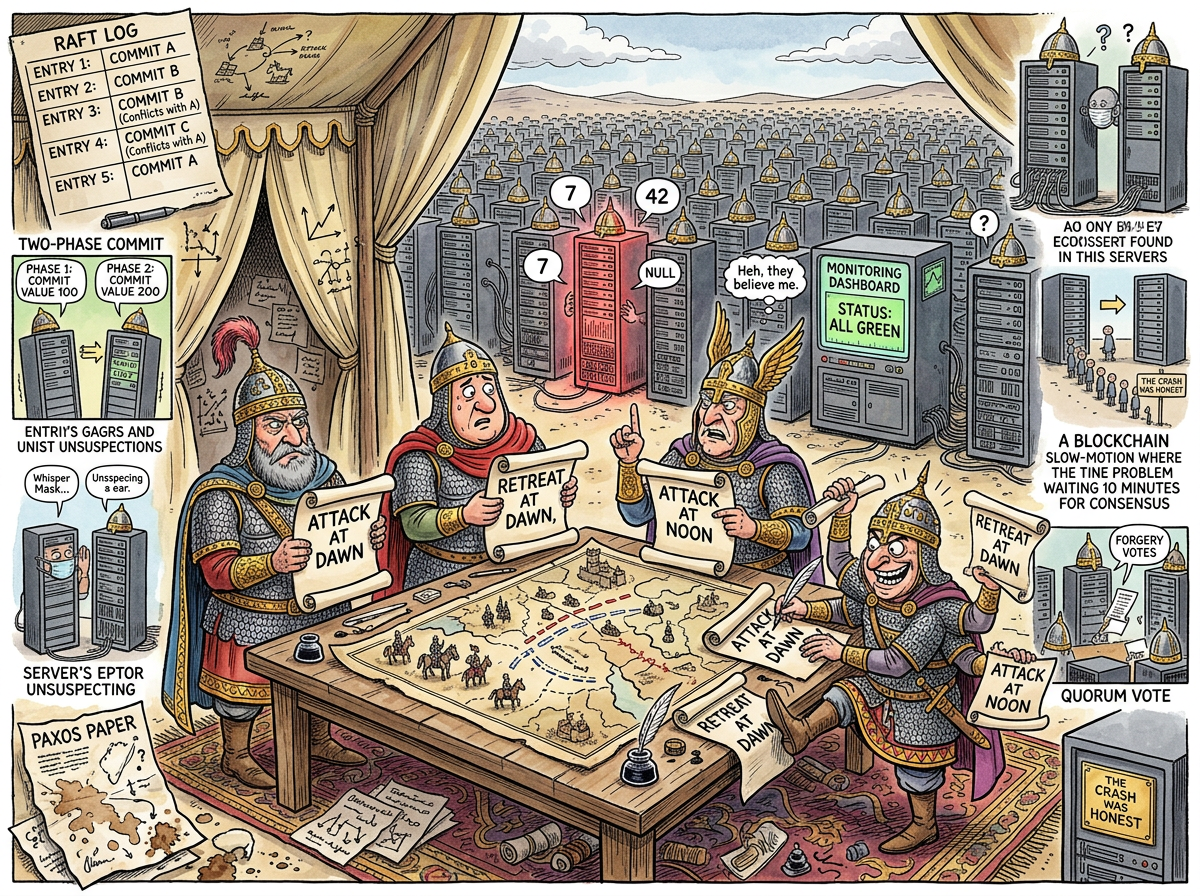

A crashed server is honest. It says nothing. Its silence is information. Its absence is detectable. The system routes around it, elects a new leader, and moves on. A Byzantine server is not honest. A Byzantine server tells node A the value is 7, tells node B the value is 42, tells the load balancer it is healthy, and tells the monitoring system that everything is fine. The system reaches consensus. The consensus is fiction.

“A crash is a funeral. A Byzantine failure is an impostor at the funeral, delivering the eulogy, and nobody realizes the deceased is the one giving the speech.”

— The Lizard, before returning to a system with three nodes and no trust issues

The Generals

The name derives from the Byzantine Generals Problem, formulated by Lamport, Shostak, and Pease in 1982. The scenario: several Byzantine generals surround a city. They must agree on a coordinated attack or retreat. They communicate only by messenger. Some of the generals are traitors who will send contradictory messages to different allies.

The problem is not that traitors exist. The problem is that loyal generals cannot distinguish a traitor’s message from a loyal general’s message. The message arrives on parchment. The parchment does not indicate whether it was written sincerely.

This is precisely the situation in a distributed system. A response arrives over TCP. The response contains a value. TCP does not indicate whether the value is correct. TCP guarantees delivery, not truth. The network is reliable. The liar is also reliable — reliably wrong.

“TCP guarantees the message arrives. Nobody guarantees the message is true. This is also how email works.”

— The Caffeinated Squirrel, mid-espresso, already designing a protocol to fix it
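The contradictory-messages scenario can be made concrete with a small sketch. It assumes the receivers compare notes afterwards and that the receivers themselves report honestly, which is exactly the assumption a real Byzantine protocol is not allowed to make. The function name `find_equivocators` and the report format are hypothetical, chosen only for illustration.

```python
from collections import defaultdict

def find_equivocators(reports):
    """Return senders that told different receivers different values.

    `reports` is an iterable of (receiver, sender, value) tuples.
    Assumption: every receiver relays what it heard truthfully. A lying
    receiver could frame an honest sender, so this is a diagnostic,
    not a consensus protocol.
    """
    values_by_sender = defaultdict(set)
    for _receiver, sender, value in reports:
        values_by_sender[sender].add(value)
    # A sender with more than one distinct value has equivocated.
    return {s for s, values in values_by_sender.items() if len(values) > 1}

# Server S tells node A the value is 7 and node B the value is 42.
# Server T tells both the same thing.
reports = [
    ("A", "S", 7),
    ("B", "S", 42),
    ("A", "T", 7),
    ("B", "T", 7),
]
suspects = find_equivocators(reports)
```

Here `suspects` contains only `"S"`. The check is cheap, but it moves the trust problem rather than removing it: someone still has to believe the receivers.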

The Taxonomy of Dishonesty

Not all Byzantine failures are alike. The species can be classified by intent, though attributing intent to a malfunctioning server is itself a form of Byzantine reasoning:

The Confused Node

A server with corrupted memory, bit-flipped RAM, or a firmware bug that causes it to return plausible but incorrect values. It does not know it is lying. It believes — to the extent that a server believes anything — that the value is 7. The value is not 7. The server is not malicious. The server is wrong, which in a distributed system is indistinguishable from malicious, which is the entire point.

The Partisan Node

A server that has experienced a partial failure — one subsystem works, another doesn’t — and responds correctly to some queries and incorrectly to others. It passes health checks because the health check queries the subsystem that works. It fails real requests because real requests touch the subsystem that doesn’t. The monitoring dashboard is green. Production is on fire. These two facts are not contradictory. They are Byzantine.
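A minimal sketch of how a green dashboard and a burning production system coexist. The `Node` class and its two subsystems (`cache_ok`, `storage_ok`) are invented for illustration and do not describe any particular system.

```python
class Node:
    """Hypothetical node with two subsystems; all names are illustrative."""

    def __init__(self, cache_ok, storage_ok):
        self.cache_ok = cache_ok
        self.storage_ok = storage_ok

    def shallow_health(self):
        # Touches only the cache, like a typical ping-style endpoint.
        return self.cache_ok

    def deep_health(self):
        # Exercises every subsystem that a real request depends on.
        return self.cache_ok and self.storage_ok

    def handle_request(self):
        # Real requests need storage, which the shallow check never touches.
        if not self.storage_ok:
            raise RuntimeError("storage subsystem failed")
        return "ok"

partisan = Node(cache_ok=True, storage_ok=False)
dashboard_is_green = partisan.shallow_health()
actually_healthy = partisan.deep_health()
```

With these values the shallow check reports healthy, the deep check does not, and `handle_request()` raises. A health check that exercises the same path as real traffic narrows the gap, at the cost of a slower and more expensive probe.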

The Malicious Node

The classical case: an actively compromised node sending deliberately false data. This is what the generals worried about. In practice, it is the rarest form. Most Byzantine failures are not the result of treachery. They are the result of a cosmic ray flipping a bit in a register, which is somehow worse, because you cannot fire a cosmic ray.

The Consensus Problem

The fundamental difficulty of Byzantine failure is that it corrupts the mechanism of agreement itself. A system of n nodes can tolerate f Byzantine failures only if n ≥ 3f + 1. To tolerate a single liar, you need four nodes. To tolerate two liars, seven. The overhead of distrust scales linearly with the number of components you cannot trust: three additional nodes for every additional liar.

The Caffeinated Squirrel finds this exhilarating. Four nodes to tolerate one liar. Seven to tolerate two. “We should run thirty-one nodes,” the Squirrel says, “to tolerate ten liars.” The system has three nodes. None of them have ever lied. The Squirrel is solving a problem that does not exist, at a scale that will never be needed, with an enthusiasm that cannot be contained.

The Lizard runs three nodes. When one lies, the Lizard turns it off. This is not a consensus algorithm. This is operations.
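The node counts in this section are plain arithmetic on the 3f + 1 bound, sketched below. The function names are made up for this example, and the crash-fault bound of 2f + 1 is the standard majority-quorum figure included for comparison.

```python
def min_nodes_byzantine(f):
    """Smallest cluster size that tolerates f Byzantine nodes (3f + 1)."""
    if f < 0:
        raise ValueError("f must be non-negative")
    return 3 * f + 1

def min_nodes_crash(f):
    """Smallest cluster size that tolerates f crashed nodes (2f + 1)."""
    if f < 0:
        raise ValueError("f must be non-negative")
    return 2 * f + 1

def max_byzantine_faults(n):
    """Largest f such that n >= 3f + 1."""
    if n < 1:
        raise ValueError("n must be at least 1")
    return (n - 1) // 3

squirrel_cluster = min_nodes_byzantine(10)  # the Squirrel's proposal
lizard_tolerance = max_byzantine_faults(3)  # the Lizard's three nodes
```

The Squirrel's ten liars do require thirty-one nodes. The Lizard's three nodes tolerate zero liars under this bound, which is consistent with the Lizard's policy of turning the liar off by hand.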

The Honest Crash

The deepest irony of Byzantine failure is that it makes the crash — the most feared failure mode of the 1970s — look desirable. A crash is clean. A crash is legible. A crash-stop failure model assumes that nodes either work correctly or stop entirely. There is no middle ground, no ambiguity, no server whispering different truths to different peers.

Every distributed systems textbook begins with the crash-stop model because it is simple, tractable, and entirely insufficient for the real world, where servers do not have the decency to stop when they break.

“I have seen systems survive a hundred crashes. I have never seen a system survive a single liar.”

— A Passing AI, reviewing a post-mortem that blamed ‘network instability’ for a node that was sending fabricated checksums

Measured Characteristics

| Property | Crash Failure | Byzantine Failure |
|---|---|---|
| Honesty | Total (says nothing) | None (says everything, all wrong) |
| Detectability | Immediate (timeout) | Eventually (after the damage) |
| Required redundancy | 2f + 1 nodes | 3f + 1 nodes |
| Monitoring accuracy | Reliable | Also lying |
| Post-mortem clarity | “It crashed at 3:41 AM” | “It was wrong for six weeks” |
| Emotional response | Grief | Betrayal |

The Blockchain Footnote

The most commercially successful response to the Byzantine Generals Problem is the blockchain. In its original form, it reaches Byzantine consensus by requiring anyone who wants to add a block to burn enormous amounts of electricity on a puzzle that is expensive to solve and cheap to verify, so that lying costs more than it pays. This is called Proof of Work. It works. It also consumes more energy than some countries, which is the distributed systems equivalent of burning down the city to prevent the generals from disagreeing about whether to attack it.