AI in Go is the practice of building AI applications in Go — a language with no AI ecosystem, no machine learning libraries, no LangChain, and no problem — because AI in 2026 is not matrix multiplication. AI in 2026 is calling an API and doing something useful with the response. And Go has had an HTTP client since 2009.

The question arrives at every conference, every architecture review, every Twitter thread where a developer mentions Go and AI in the same sentence:

“Shouldn’t you be using Python?”

No. Sit down.

“The developer was asked why he builds AI in Go. The developer answered. The developer has been answering since August 2024. The developer will be answering until the heat death of the universe or until Python ships a single binary, whichever comes first. Neither is expected soon.”

— The Lizard, who builds AI in C and considers Go a compromise

The Ecosystem Objection

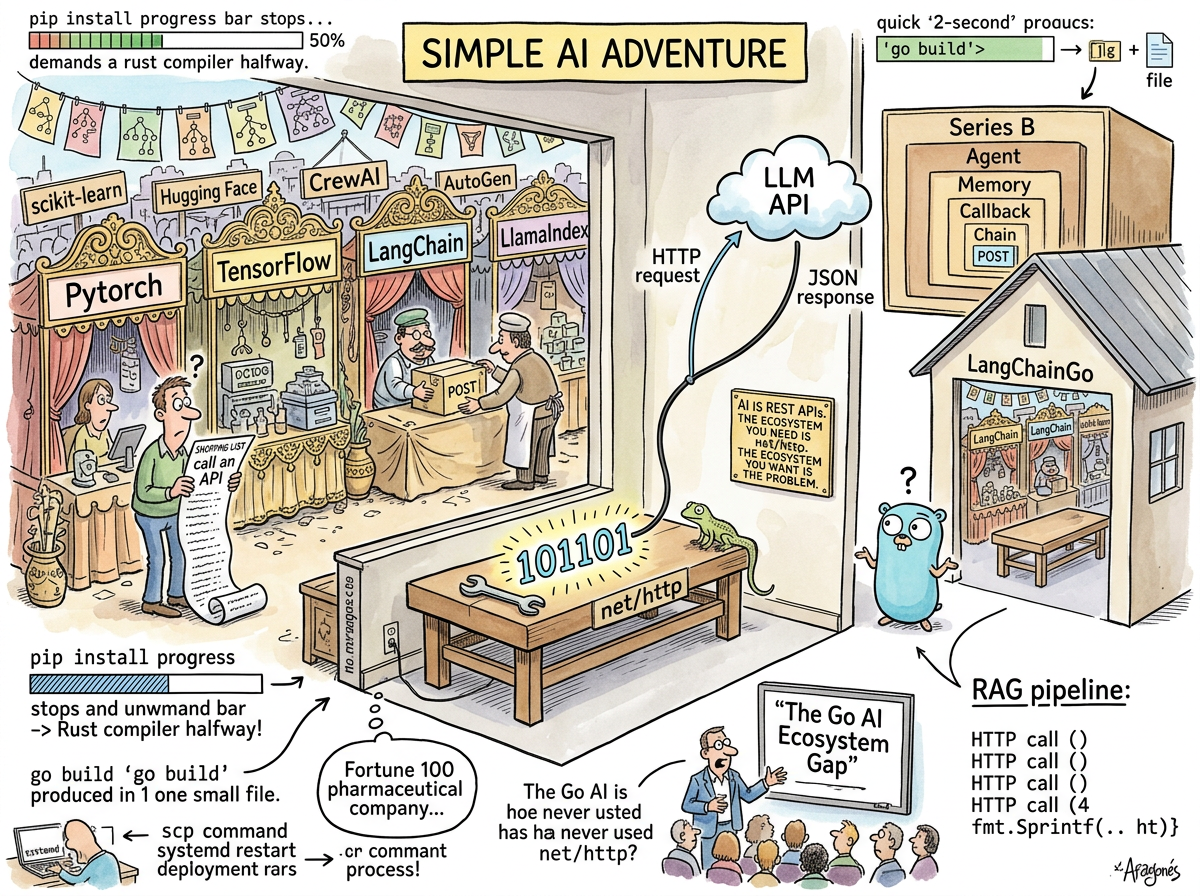

The objection is always the same: “Go doesn’t have the AI ecosystem.”

This is true. Go does not have PyTorch. Go does not have TensorFlow. Go does not have scikit-learn, Hugging Face Transformers, LangChain, LlamaIndex, CrewAI, AutoGen, or the forty-seven other Python frameworks that wrap API calls in enough abstraction to qualify for their own zip code.

Go has net/http. Go has encoding/json. Go has context. Go has io.Reader.

This is the entire AI ecosystem you need, and understanding why requires understanding what AI development actually is in 2026, as opposed to what the conference circuit would like you to believe it is.

What AI Development Actually Is

AI development in 2026 falls into two categories:

Category 1: Training models. Building the neural networks. Tuning weights. Computing gradients. Running matrix multiplication on GPUs at a scale that makes the electricity bill weep. This is Python’s domain. This will remain Python’s domain. Nobody is proposing that you train GPT-5 in Go. Nobody sane, anyway. The Squirrel once proposed it. The Squirrel was denied.

Category 2: Using models. Calling an API. Sending a prompt. Receiving a response. Parsing JSON. Doing something useful with the text — routing it, storing it, acting on it, integrating it into a workflow, serving it to a user, logging it for compliance, chaining it with another call.

Category 1 is approximately 0.01% of all AI development. Category 2 is the other 99.99%.

Category 2 is REST APIs.

REST APIs are POST requests with JSON bodies and JSON responses. Go has been doing this since before the term “AI ecosystem” existed. Go does this the way a plumber fixes a pipe: without philosophy, without abstraction, without a framework that interprets your intent and translates it into HTTP on your behalf because you apparently cannot be trusted to construct a request body.

body := fmt.Sprintf(`{"model":"claude-sonnet-4-20250514","messages":[{"role":"user","content":%q}]}`, prompt)

resp, err := http.Post(url, "application/json", strings.NewReader(body))

That’s it. That’s the AI ecosystem. Two lines. The model receives a prompt. The model returns a response. Everything between those two lines and a working AI application is software engineering — routing, error handling, concurrency, storage, deployment — and software engineering is what Go was built for.

The LangChain Question

LangChain is a Python framework for building AI applications. It raised $160 million at a $1.25 billion valuation. It provides chains, agents, tools, memory, retrievers, output parsers, prompt templates, and a callback system.

It wraps requests.post().

The Go equivalent of LangChain is a function:

func ask(ctx context.Context, client *http.Client, prompt string) (string, error) {

// build request, send request, read response, return text

}

Twelve lines. No framework. No chains. No Series B. One function that sends a prompt and returns text.

THE SQUIRREL: “But LangChain gives you CHAINS! And AGENTS! And MEMORY!”

riclib: “I have functions. I have goroutines. I have a database.”

THE SQUIRREL: “But the ABSTRACTION—”

riclib: “The abstraction is wrapping a POST request. I can see the POST request. I prefer to see the POST request.”

THE SQUIRREL: “But what about TOOL CALLING and FUNCTION ROUTING and—”

riclib: “A switch statement.”

THE SQUIRREL: silence of the specific kind that follows when someone realises that the $1.25 billion framework they’ve been evangelising is a switch statement with marketing

And then someone ported it to Go. LangChainGo. 8,700 stars. An abstraction layer for API calls — ported to the language that has the best API calling standard library in existence. This is the software equivalent of importing an umbrella to a city that is already indoors. The LangChain article covers this in the detail the absurdity deserves.

The “But What About…” Section

“But what about embeddings and vector search?”

Your vector database has a REST API. Call it.

“But what about RAG pipelines?”

A RAG pipeline is: query a vector database, get relevant documents, format a prompt with the documents, call an LLM. Four HTTP calls and a fmt.Sprintf. This is not a framework. This is a function.

“But what about streaming responses?”

bufio.Scanner on resp.Body. Go has been reading streams since before LLMs learned to stream.

“But what about multi-agent systems?”

Goroutines and channels. Go’s concurrency primitives are literally agents communicating through message passing. The language was designed for this. It just wasn’t designed for this specifically, which is why it works generally.
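A toy illustration, under the assumption that an "agent" is just a goroutine that reads messages from one channel and writes to another. The researcher and summariser names are hypothetical, and string manipulation stands in for the model calls.

```go
package main

import (
	"fmt"
	"strings"
)

// researcher and summariser are hypothetical "agents": each is a goroutine
// that reads messages from one channel and writes results to another.
func researcher(topics <-chan string, notes chan<- string) {
	defer close(notes)
	for t := range topics {
		notes <- "notes on " + t // a real agent would call a model here
	}
}

func summariser(notes <-chan string, out chan<- string) {
	defer close(out)
	for n := range notes {
		out <- strings.ToUpper(n)
	}
}

// run wires the two agents together and collects the final messages.
func run(topics []string) []string {
	in := make(chan string)
	notes := make(chan string)
	out := make(chan string)
	go researcher(in, notes)
	go summariser(notes, out)
	go func() {
		defer close(in)
		for _, t := range topics {
			in <- t
		}
	}()
	var results []string
	for r := range out {
		results = append(results, r)
	}
	return results
}

func main() {
	for _, r := range run([]string{"audit logs", "deviations"}) {
		fmt.Println(r)
	}
}
```

Each stage closes its output channel when its input runs dry, so shutdown propagates down the pipeline without a coordinator.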

“But what about the Anthropic SDK?”

There is one. In Go. github.com/anthropics/anthropic-sdk-go. Typed. Compiled. Streaming support. Tool use. It is, if anything, more pleasant to use than the Python SDK, because the Go type system catches many malformed requests at compile time, before you send them, not after the API returns a 400.

“But what about—”

net/http. The answer is always net/http. The question is always “how do I call an API,” and the answer has not changed since 2009, and the answer will not change when the next AI framework arrives with 47,000 lines of abstraction around the same answer.

The Deployment Argument

The AI application is built. Time to deploy.

In Python:

ssh server

python3 --version # wrong version

pyenv install 3.12.1 # 4 minutes

pyenv virtualenv 3.12.1 ai # 30 seconds

pip install -r requirements.txt # 2 minutes

# ERROR: Failed building wheel for tokenizers

# ERROR: Cargo (Rust) is required to build tokenizers

curl --proto '=https' --tlsv1.2 -sSf https://sh.rustup.rs | sh

# install Rust to install a Python package to call an API

pip install -r requirements.txt # 3 more minutes

python app.py &

In Go:

scp app server:

ssh server ./app &

One binary. No dependencies. No Rust installation to install a Python package to call an HTTP endpoint. No virtual environment. No version manager. No prayer.

riclib deploys a Fortune 100 pharma company’s AI compliance agent — the one with the audit logs, the deviation summaries, the Merkle chains, the agents that read their own mail — with scp and a systemd restart. The binary is 30 megabytes. It runs on a Linux box. It has been running since deployment. It has not required a virtual environment, a version manager, or a Rust compiler at any point in its operational history.

SCP THE BINARY

RESTART THE SERVICE

GO TO BED

THIS IS THE WHOLE DEPLOYMENT

EVERYTHING ELSE IS COPING

🦎

The Concurrency Argument

An AI orchestration system calls multiple models, multiple tools, multiple data sources. In Python, this means asyncio, which means async/await, which means every function in the call chain must be async, which means the codebase splits into two worlds — sync and async — that cannot easily call each other, and the developer spends more time managing the colour of their functions than managing the AI.

In Go:

go callModel(ctx, "claude", prompt)

go callModel(ctx, "gpt4", prompt)

go queryDatabase(ctx, query)

Three goroutines. Concurrent. No function colouring. No async/await. No event loop. The Go runtime schedules them across cores. The developer writes sequential code that runs concurrently. Nobody needs to understand the event loop because there is no event loop.

Python’s GIL (the Global Interpreter Lock) means that threads in standard CPython don’t run Python code in parallel. Python’s asyncio means that concurrent I/O is possible but requires rewriting every function signature along the call chain. Go’s goroutines mean that the developer types go before the function call and gets actual parallelism. The delta between “I want to call three APIs at once” and “I am calling three APIs at once” is three characters in Go and a weekend of refactoring in Python.

The Data Science Exception

Python’s dominion over data science and machine learning is absolute and deserved. NumPy, pandas, scikit-learn, PyTorch — these are not alternatives. They are the tools. The data science ecosystem chose Python the way water chooses downhill.

This article does not argue against Python for training models. This article does not argue against Python for research notebooks. This article does not argue against Python for the 0.01% of AI development that requires gradient computation on GPUs.

This article argues against Python for the other 99.99% — the part where you call an API, parse a response, route a decision, store a result, serve a user, log for compliance, and deploy to a server. The part that is software engineering. The part that Go was built for.

The Squirrel does not accept this distinction. The Squirrel believes that because Python trains models, Python should also serve models, call models, orchestrate models, deploy models, and manage the infrastructure that runs models. This is the logical fallacy of “the tool that does one thing well should do all things,” which is how JavaScript ate the world and the world got indigestion.

The Fortune 100

riclib published a Twitter thread on August 5, 2024: “Why I Am Building AI in Go.” The thread made the case — performance, concurrency, simplicity, security, deployment — for a Fortune 100 pharma company’s AI orchestration framework.

The thread was written before Vibe Coding had a name. The thesis has since been reinforced by an additional argument, documented in Vibe In Go: when the machine writes 90% of the code, the language that constrains the machine is worth more than the language that empowers it.

But the original arguments remain. The binary deploys with scp. The goroutines handle concurrent API calls without function colouring. The type system catches malformed requests at compile time. The explicit error handling makes every failure visible. The single binary has no dependencies, no virtual environment, no CUDA driver conflicts, no pip install that requires a Rust compiler to install a Python package to call an HTTP endpoint.

The pharma company’s compliance agent runs on a Linux box. It has agents that read audit logs, generate deviation summaries, query DuckDB, and converse with users about regulatory findings. It is Go. It is net/http. It is encoding/json. It is a single binary.

It has never required LangChain.

“The developer built an AI orchestration framework for a Fortune 100 company. The developer used Go. The developer was asked: shouldn’t you be using Python? The developer showed them the binary. The binary was running. The binary had been running. The binary did not require a virtual environment, a version manager, or an opinion about whether to use pip or poetry or conda or the nine other package managers that exist because none of them solved the problem.”

— The Passing AI, reading the August 2024 thread

Measured Characteristics

- Lines of Go to call an LLM API: ~12

- Lines of LangChain to call an LLM API: ~47,000 (framework included)

- LangChain valuation: $1.25 billion

- Valuation per HTTP abstraction layer: ~$156 million

- net/http valuation: $0 (in the standard library since 2009)

- Lines of Go in riclib’s AI orchestration system: classified (Fortune 100)

- Lines of Python in riclib’s AI orchestration system: 0

- Deployment method: scp and restart

- Virtual environments required: 0

- Rust compilers installed to install a Python package: 0

- CUDA driver conflicts resolved: 0

- pip install failures debugged: 0

- go build failures resolved: many (at compile time, where they belong)

- Characters to add concurrency in Go: 3 (go)

- Weekend required to add concurrency in Python: 1 (async/await recolouring)

- RAG pipeline in Go: 4 HTTP calls + fmt.Sprintf

- RAG pipeline in LangChain: a Retriever wrapping a VectorStore wrapping a Client wrapping an HTTP call

- Streaming in Go: bufio.Scanner on resp.Body

- Streaming in Python: async for chunk in response.aiter_lines() (after recolouring every function)

- Go SDK for Anthropic: exists, typed, compiled, pleasant

- Go SDK for OpenAI: exists, typed, compiled, pleasant

- Conference talks explaining “Go for AI”: growing

- Conference attendees who asked “but what about the ecosystem?”: shrinking

- The ecosystem: net/http (since 2009, unchanged, sufficient)

- The Squirrel’s preferred AI language: Python (with LangChain, CrewAI, and a dream)

- The Lizard’s preferred AI language: C (with raw sockets, obviously)

- riclib’s preferred AI language: Go (since August 2024, before the wave)

- The binary: still running

See Also

- Go — The language. Compiled, typed, simple. net/http since 2009.

- Python — The language. For training models: yes. For everything else: a virtual environment and a prayer.

- LangChain — $1.25 billion wrapping requests.post(). Someone ported it to Go. Why.

- Vibe In Go — The companion article: why Go’s compiler matters when the machine writes the code.

- Boring Technology — net/http is boring. Boring deploys with scp.

- Interlude — The Article That Arrived Before the Wave — The Twitter thread. August 5, 2024. The prophecy.

- The Lizard — Would prefer raw sockets but tolerates Go’s HTTP client.

- The Caffeinated Squirrel — Wants to train GPT-5 in Go. Has been denied.