What a Programming Language Is When the Machine Writes Most of It

An AI wrote four thousand lines of code yesterday. I wrote about twenty words.

The code shipped. The reason it shipped is that between the four thousand lines and production were five filters, each catching what the previous one missed, until what reached me was small enough to fix in twenty words.

When the machine writes most of the code, the language is no longer primarily a tool for writing. It’s a tool for catching. The job has changed and most of the language debate hasn’t caught up yet.

This essay is the framework I use to think about it. It is language-agnostic. It maps cleanly onto Go, less cleanly onto Rust, badly onto Python — but those are observations, not the point. The point is the shape of the apparatus.

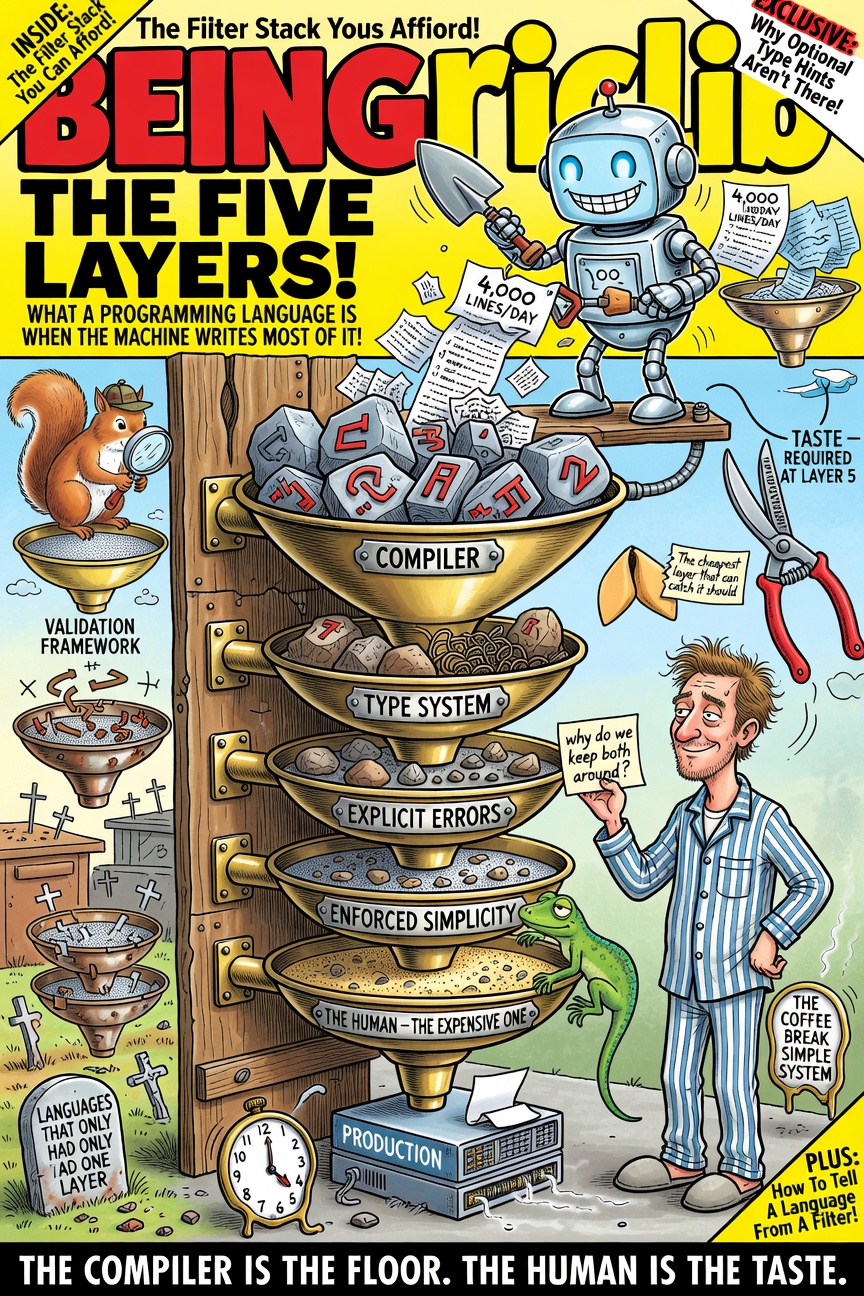

The Layers

In order, from cheapest to most expensive:

Layer 1 — The Compiler

Catches the obvious. Types don’t match. Imports unused. Variables shadowed. The first draft of any AI-generated code fails here, because syntax is easier for the model than coherence is.

This is cheap. This is instant. The error message is short. The fix is mechanical. The AI reads the error, fixes the code, builds again. Seconds, not minutes.
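A minimal sketch of that loop, assuming Go as the example language. The helper `wordCount` is hypothetical, and the two commented-out lines show the kind of mistake the build rejects before anything runs.

```go
package main

import (
	"fmt"
	"strings"
)

// wordCount is a hypothetical helper used only for illustration.
func wordCount(s string) int {
	return len(strings.Fields(s))
}

func main() {
	n := wordCount("an AI wrote this")

	// Uncommenting either line below makes the build fail in Go:
	// leftover := 42        // a local variable that is declared and never used
	// var label string = n  // an int used where a string is required

	fmt.Println(n)
}
```

Both failures come back as a short message with a file and line number, which is what makes the fix mechanical.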

A language without a compiler — Python, JavaScript without TypeScript, any interpreted language run as-is — does not have Layer 1. The first draft runs. Whether it runs correctly is a question that gets answered later, by the customer, in production, at 2 AM.

This is the floor. A stack without a floor falls all the way through.

Layer 2 — The Type System

Catches the structural. A function was expecting WikiLinkResolver and got func(string) string. A handler is supposed to return ([]byte, error) and returns just []byte. An interface doesn’t match. A field has the wrong shape. The compiler catches the syntax; the type system catches the protocol.

A type system is cheap because the compiler has already done the bookkeeping. Adding a constraint costs nothing at runtime. Removing one is a refactor, not a rewrite. The machine’s second draft fails here, sometimes — and when it does, the error points at the call site that needs updating, which the AI can fix without further input.
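To make the first case concrete, here is a sketch in Go. The essay names WikiLinkResolver but not its shape, so the single-method interface below is an assumption, as are `ResolverFunc`, `RenderLink`, and `slugify`.

```go
package main

import (
	"fmt"
	"strings"
)

// WikiLinkResolver is assumed here to be a one-method interface.
type WikiLinkResolver interface {
	Resolve(title string) string
}

// ResolverFunc adapts a plain func(string) string to WikiLinkResolver,
// the same pattern the standard library uses for http.HandlerFunc.
type ResolverFunc func(string) string

// Resolve calls the underlying function.
func (f ResolverFunc) Resolve(title string) string { return f(title) }

// RenderLink (hypothetical) requires a WikiLinkResolver, not a bare function.
func RenderLink(r WikiLinkResolver, title string) string {
	return "<a href=\"" + r.Resolve(title) + "\">" + title + "</a>"
}

// slugify is a plain function with the type func(string) string.
func slugify(title string) string {
	return "/wiki/" + strings.ReplaceAll(strings.ToLower(title), " ", "-")
}

func main() {
	// RenderLink(slugify, "Boring Technology")
	// does not compile: func(string) string has no Resolve method.

	fmt.Println(RenderLink(ResolverFunc(slugify), "Boring Technology"))
}
```

The rejected call and its repair sit one line apart, at the call site, which is the property the paragraph above relies on.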

A weak type system — Any propagated through a Python codebase, interface{} smeared across a Go codebase — gives Layer 2 the appearance of existing without the function of existing. The bug surfaces later, in a layer that is more expensive.
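A small illustration of the difference, again in Go. Both functions are hypothetical; the only point is where the mistake gets reported.

```go
package main

import "fmt"

// lengthLoose accepts anything, so a wrong argument is only detected
// when the code runs.
func lengthLoose(v interface{}) (int, error) {
	s, ok := v.(string)
	if !ok {
		return 0, fmt.Errorf("lengthLoose: want string, got %T", v)
	}
	return len(s), nil
}

// lengthStrict states its requirement in the signature, so a wrong
// argument never gets past the build.
func lengthStrict(s string) int {
	return len(s)
}

func main() {
	// Compiles without complaint; the mistake surfaces at run time.
	if _, err := lengthLoose(42); err != nil {
		fmt.Println("runtime:", err)
	}

	// lengthStrict(42) // does not compile: 42 is not a string

	fmt.Println(lengthStrict("filter"))
}
```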

Layer 3 — Explicit Errors

Catches the ignored. Every error has to be handled — not caught, not swallowed — handled.

if err != nil, four hundred times per file, every error addressed, every fallback explicit, none of them silently disappearing into the gap between a try and a finally that nobody reads. The machine cannot write except: pass in a language that doesn’t have except. The machine must handle the error. The human can see the handling.
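What that looks like on the page, as a sketch rather than a prescription. `loadPage`, `renderOrPlaceholder`, and `ErrNotFound` are hypothetical names; the fallback for a missing page is written out where a reviewer can see it.

```go
package main

import (
	"errors"
	"fmt"
)

// ErrNotFound is a hypothetical sentinel error for this sketch.
var ErrNotFound = errors.New("page not found")

// loadPage stands in for any call that can fail.
func loadPage(pages map[string][]byte, title string) ([]byte, error) {
	body, ok := pages[title]
	if !ok {
		return nil, fmt.Errorf("loadPage %q: %w", title, ErrNotFound)
	}
	return body, nil
}

// renderOrPlaceholder handles each outcome explicitly.
func renderOrPlaceholder(pages map[string][]byte, title string) (string, error) {
	body, err := loadPage(pages, title)
	if errors.Is(err, ErrNotFound) {
		// Deliberate, visible fallback for a missing page.
		return "(no page yet: " + title + ")", nil
	}
	if err != nil {
		// Anything else is passed up with context, not swallowed.
		return "", fmt.Errorf("render %q: %w", title, err)
	}
	return string(body), nil
}

func main() {
	pages := map[string][]byte{"Home": []byte("welcome")}
	for _, title := range []string{"Home", "Missing"} {
		out, err := renderOrPlaceholder(pages, title)
		if err != nil {
			fmt.Println("error:", err)
			continue
		}
		fmt.Println(out)
	}
}
```

Go does still allow an error to be dropped, but using the value while ignoring the error means writing `body, _ := loadPage(...)`, and that underscore is visible in review.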

This is the layer most people argue against. They say it’s verbose. It is verbose. The verbosity is the feature. Verbosity is what makes the human see error handling instead of trusting it. When the AI handles errors invisibly, the AI has handled them in the way that is easiest to write, which is rarely the way that is correct.

A language without explicit errors has Layer 3 only by convention, which means it doesn’t have it.

Layer 4 — Enforced Simplicity

Catches nothing. Prevents everything.

One way to loop. One way to format. One way to organise a project. The machine generates uniform code because the language doesn’t permit non-uniform code. There are no eighteen subtly-different ways to spell the same idiom. There is one. The AI uses it. The human reads it at a glance.
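Go is one example of this: it has a single loop keyword, `for`, and a single formatter, gofmt. The sketch below uses hypothetical helpers `sum` and `countdown` to show the same keyword doing the jobs other languages split across several constructs.

```go
package main

import "fmt"

// sum iterates over a slice with for ... range.
func sum(xs []int) int {
	total := 0
	for _, x := range xs {
		total += x
	}
	return total
}

// countdown uses the condition-only form of for, which plays the role
// that "while" plays in other languages.
func countdown(n int) []int {
	var out []int
	for n > 0 {
		out = append(out, n)
		n--
	}
	return out
}

func main() {
	fmt.Println(sum([]int{1, 2, 3}))
	fmt.Println(countdown(3))
}
```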

Layer 4 does its work before the catch is needed. Code that all looks the same reveals the meaningful differences faster than code in seventeen styles. The human’s reading speed goes up. The human’s taste budget — the thing that determines whether the system is right — gets to spend itself on architecture instead of style.

This is the most controversial layer because it is not, strictly, a catching mechanism. It’s a not-needing-to-catch mechanism. Languages that prize expressiveness skip Layer 4 on principle and pay for it at Layer 5.

Layer 5 — The Human

Catches the subtle.

“Why do we keep both around?” Six words that no compiler can generate, because six words require taste. “Make it dark mode aware.” Five words that bring fifteen files into alignment, because the human knows what the system is for and the machine doesn’t.

The human is the most expensive layer. Every minute the human spends here is a minute they are not spending on the architecture, the customer, the next stone in the river. A language stack that sends everything to the human has not made the human more powerful — it has made the human a debugger.

A language stack that sends the right things to the human is the only kind of stack worth using when the machine writes most of the code.

The Economics

The cost ratio matters.

Layer 1 is approximately free. Layer 2 is approximately free. Layer 3 is cheap. Layer 4 is free at runtime, modestly costly in expressiveness. Layer 5 is an order of magnitude more expensive, all by itself.

A good language stack maximises what cheap layers catch. A great language stack also makes sure that what does reach Layer 5 is the human’s actual work — architecture, intent, judgement — not work the language itself asks the human to do on its behalf.

There are two failure modes here.

Mode one: too few layers. The language has Layer 1 and possibly Layer 2 and stops. Everything else reaches Layer 5. The human becomes a debugger. The taste budget is spent on the language’s gaps. The velocity that AI was supposed to provide collapses, because the bottleneck moved from typing to checking.

Mode two: too many layers, or layers catching the wrong thing. The language has all five but several of them are surfacing constraints the language itself imposes — borrow rules the AI didn’t know to satisfy, async-runtime mismatches the AI couldn’t see, lifetime annotations on values that didn’t need lifetimes. These are not language defects — these are language demands, often well-justified in their own frame. But in this collaboration mode, the human’s taste budget is spent on the language’s demands rather than on the system. Velocity also collapses, just in a more dignified register.

The right number of layers is the number that catches what should be caught, and not one more.

The Diagnostic Question

When evaluating a language stack for AI-assisted development, the question is not “how good is this language?” It’s:

- Of the work that reaches the human, how much is system work and how much is language work?

If most of what reaches Layer 5 is “the architecture has the wrong shape” or “this domain doesn’t belong in this place” or “we’re solving the wrong problem” — the stack is healthy. The human is doing human work.

If most of what reaches Layer 5 is “the borrow checker fights this clone” or “the type inference can’t disambiguate” or “the async runtime needs a wrapper” — the stack is leaking. The language is consuming taste that should be spent elsewhere.
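One rough way to run the diagnostic is to tally what actually reached the human over some period and label each item. The sketch below assumes the labelling is done by hand. The types, the sample items, and the reading of "most" as more than half are illustrative assumptions, not measurements.

```go
package main

import "fmt"

// Kind labels an item that reached the human.
type Kind int

const (
	SystemWork   Kind = iota // architecture, intent, judgement
	LanguageWork             // work the language asked for on its own behalf
)

// Item is one thing the human had to deal with.
type Item struct {
	Note string
	Kind Kind
}

// languageShare returns the fraction of items that are language work.
// It returns 0 for an empty list.
func languageShare(items []Item) float64 {
	if len(items) == 0 {
		return 0
	}
	n := 0
	for _, it := range items {
		if it.Kind == LanguageWork {
			n++
		}
	}
	return float64(n) / float64(len(items))
}

func main() {
	// Hypothetical tally, using phrases from the essay as labels.
	week := []Item{
		{Note: "the architecture has the wrong shape", Kind: SystemWork},
		{Note: "we're solving the wrong problem", Kind: SystemWork},
		{Note: "the async runtime needs a wrapper", Kind: LanguageWork},
	}
	share := languageShare(week)
	// "Most" is read here as more than half.
	fmt.Printf("language share: %.2f, leaking: %v\n", share, share > 0.5)
}
```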

The diagnostic is independent of language fashion. A Python codebase with strict pyright, ruff, and exhaustive runtime contracts can have all five layers. A Go codebase with interface{} everywhere and silenced errors can have only one. The layers are properties of the practice, not just the language.

But — and this is the part most language debates skip — the language sets the default. The default decides what the median codebase looks like. Most teams ship the median. AI generates the median. The default matters more than the maximum, when the writer is a machine.

The Recurring Move

Every essay in this corpus that touches AI-assisted engineering eventually arrives back at the same observation: the human’s expensive moves are the ones that matter, and the only job of everything else is to make sure those moves get to happen.

Liberato’s Law makes this point in the discovery direction — the human pays a tax in lines of code to discover the simple version. Gall’s Law Got Cheap makes it in the velocity direction — the customer is the working-ness oracle and the human’s job is to put the simple version in front of her. The Stones in the River makes it in the time direction — the human places stones months before the machine needs to leap between them. The Five Layers makes the same observation in the language direction — the cheap layers exist so the expensive one can do its job.

The expensive layer is always the human. The whole apparatus is built around making the human’s time count.

The Closing

I do not optimise for the best language. I optimise for the language with the most layers I can afford. When the machine writes most of the code, the language is not a tool for writing. It is a tool for filtering — and a filter you can afford to run, on every line, every commit, every build, is worth more than a filter you can afford to run on twenty percent of the code on Wednesdays.

The compiler is the floor. The human is the taste. Everything in between is a filter, and a filter you can afford.

There is an encyclopedia where software principles get the roast they deserve — YAGNI at a buffet, Liberato’s Law in a prison, Gall’s Law in a boardroom. And a mythology where these ideas have been arguing with each other for a hundred and fifty episodes. The five layers are how I think about language choice for AI-assisted work. The framework names it. The rest of the corpus illustrates it.

See also:

- Vibe Engineering — Steering the machine with five-word redirections, which is what Layer 5 actually does

- Why I Vibe in Go, Not Rust or Python — The framework applied to one specific stack

- Gall’s Law Got Cheap — The velocity argument: why catching things cheaply matters

- The Stones in the River — Why the human’s taste budget is the scarce resource

- YAGNI — Why Layer 5 spends its time on architecture, not on padding

- Boring Technology — Layer 4, generalised: enforced simplicity as a civic virtue

- The Cathedral — What gets drawn at Layer 5 and what stays drawn