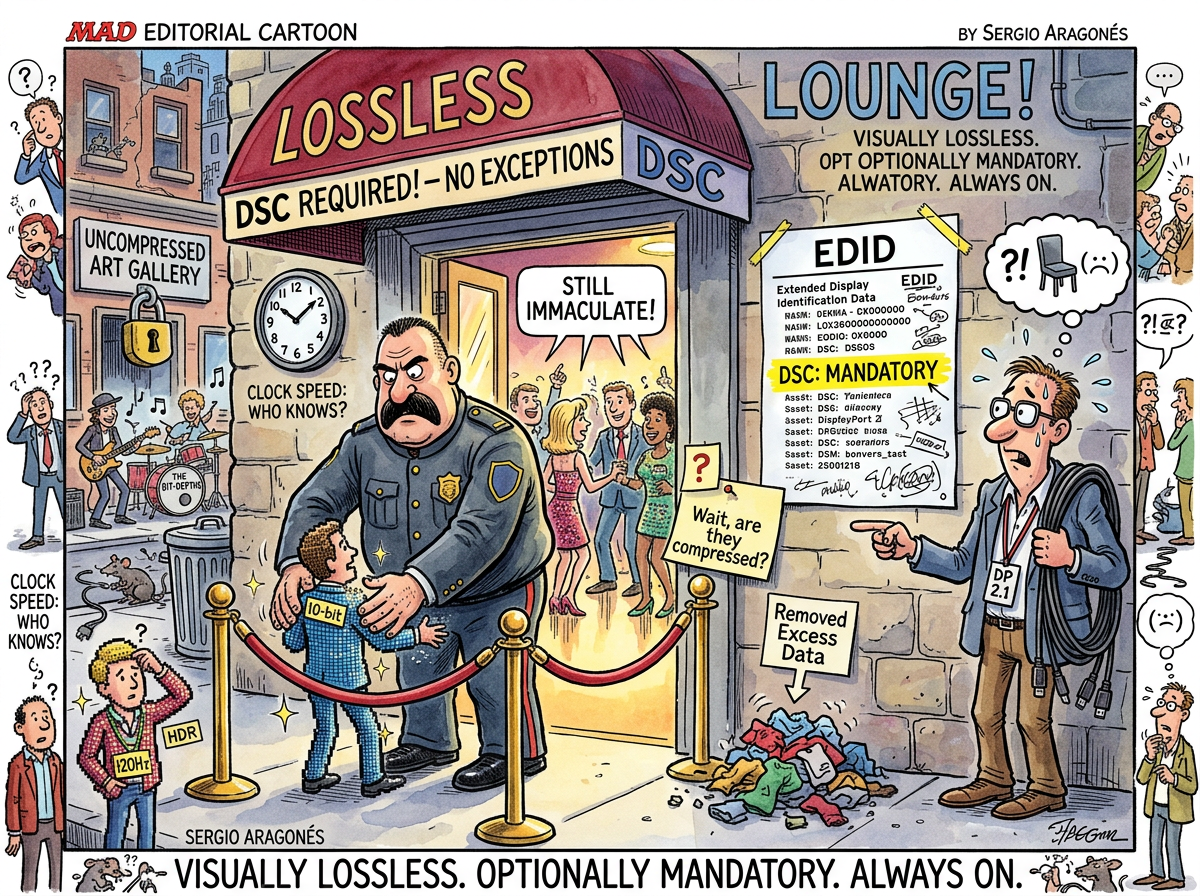

DSC (Display Stream Compression) is a VESA standard for compressing video signals between a GPU and a monitor, designed to be “visually lossless”, meaning that in VESA’s testing viewers could not reliably tell compressed output from uncompressed output, meaning the compression is perfect for all practical purposes, meaning nobody asked for it but everybody gets it because the monitor’s EDID says so and the monitor’s EDID is not negotiable.

DSC splits each frame into slices, codes the pixels in each slice a few at a time (in groups of three) using prediction from neighboring pixels and a small history of recently used colors, and holds the output to a fixed bit rate, typically a 2:1 to 3:1 ratio. The compression happens in the GPU’s display engine. The decompression happens in the monitor’s timing controller. The developer sitting between them notices nothing, suspects nothing, and, unless they read amdgpu’s DTN log in debugfs, knows nothing.
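To make “fixed rate” concrete: the coder works through pixels in groups of three, and rate control holds the *average* bits spent per group to a budget set by the target bits per pixel (individual groups can run over or under, absorbed by a buffer). A minimal sketch of that arithmetic, assuming a 10-bit RGB source (30 bpp) and an illustrative 12 bpp target; `group_budget` is a hypothetical helper, and the real target is chosen per stream.

```python
# Toy arithmetic for a constant-bit-rate coder working on 3-pixel groups.
# Assumptions: 10-bit RGB source (30 bpp), illustrative 12 bpp target.

PIXELS_PER_GROUP = 3  # pixels are coded in groups of three


def group_budget(source_bpp: float, target_bpp: float) -> tuple:
    """Return (bits in per group, average bits out per group, compression ratio)."""
    bits_in = source_bpp * PIXELS_PER_GROUP
    bits_out = target_bpp * PIXELS_PER_GROUP  # average; rate control buffers the variation
    return bits_in, bits_out, bits_in / bits_out


bits_in, bits_out, ratio = group_budget(source_bpp=30, target_bpp=12)
print(f"{bits_in:.0f} bits in, {bits_out:.0f} bits out per group, {ratio:.1f}:1")
```

The ratio is set by the two bpp figures alone, which is why it stays fixed for the life of a stream regardless of what is on screen.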

The Mandatory Optional

DSC was designed for situations where the bandwidth demand exceeds the cable’s capacity. A 7680x2160 display at 120Hz with 10-bit color requires approximately 60 Gbps of uncompressed pixel data, before blanking overhead. DisplayPort 1.4 (HBR3, four lanes) provides 32.4 Gbps raw, or about 26 Gbps after 8b/10b encoding. The math does not work. DSC makes it work by compressing the 60 Gbps into ~26 Gbps. This is reasonable. This is the intended use case. This is fine.
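The numbers above can be checked directly. This sketch counts only visible pixels and ignores blanking and other link overhead, so the real requirement (and the real compression ratio) is somewhat higher; `active_bitrate_gbps` is a hypothetical helper name.

```python
def active_bitrate_gbps(width: int, height: int, refresh_hz: float,
                        bits_per_component: int, components: int = 3) -> float:
    """Uncompressed bit rate of the visible pixels only, in Gbps."""
    return width * height * refresh_hz * bits_per_component * components / 1e9


# DP 1.4 HBR3: 4 lanes x 8.1 Gbps raw; 8b/10b encoding leaves 80% for payload.
DP14_HBR3_PAYLOAD_GBPS = 4 * 8.1 * 8 / 10

need = active_bitrate_gbps(7680, 2160, 120, 10)
print(f"needed: {need:.2f} Gbps, DP 1.4 payload: {DP14_HBR3_PAYLOAD_GBPS:.2f} Gbps")
print(f"minimum compression ratio: {need / DP14_HBR3_PAYLOAD_GBPS:.2f}:1")
```

This prints roughly 59.72 Gbps needed against 25.92 Gbps available, a ratio of about 2.3:1, which is where the 2.3:1 figure later in this entry comes from.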

What is less fine — what is the specific absurdity that only hardware engineers could produce — is when the monitor demands DSC even when the bandwidth is sufficient.

DisplayPort 2.1 provides up to 80 Gbps (UHBR20, roughly 77 Gbps of payload after encoding). The signal requires 60 Gbps. The cable has roughly 17 Gbps of headroom. There is no mathematical reason to compress. The monitor compresses anyway. The Samsung Odyssey G95NC includes DSC as a mandatory requirement in its EDID, the hardware descriptor that tells the GPU what the monitor can do. The EDID does not say “use DSC if needed.” The EDID says “use DSC.”
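The difference between “use DSC if needed” and “use DSC” amounts to a policy in which the sink’s requirement short-circuits the bandwidth check. A sketch of that policy with hypothetical names throughout (`SinkCaps`, `should_enable_dsc`); it is not how amdgpu or any other driver is structured, and real drivers weigh many more constraints, such as link training results and slice and bpp limits.

```python
from dataclasses import dataclass


# Hypothetical model of what a sink advertises; these field names are
# illustrative and do not match any real driver's data structures.
@dataclass
class SinkCaps:
    dsc_supported: bool  # sink can decode DSC
    dsc_required: bool   # sink says "use DSC", not "use DSC if needed"


def should_enable_dsc(caps: SinkCaps, required_gbps: float, link_payload_gbps: float) -> bool:
    """Hypothetical source-side policy: a sink mandate wins over bandwidth math."""
    if caps.dsc_required:
        return True   # no argument with the sink
    if required_gbps <= link_payload_gbps:
        return False  # the mode fits, so send it uncompressed
    if caps.dsc_supported:
        return True   # the mode only fits compressed
    raise ValueError("mode exceeds link bandwidth and the sink cannot decode DSC")


# Active-picture rate for 7680x2160 at 120 Hz, 10-bit RGB (blanking ignored).
REQUIRED_GBPS = 59.72
# DP 2.1 UHBR20: 4 lanes x 20 Gbps raw, 128b/132b encoding (other overhead ignored).
UHBR20_PAYLOAD_GBPS = 4 * 20 * 128 / 132

mandating_sink = SinkCaps(dsc_supported=True, dsc_required=True)
polite_sink = SinkCaps(dsc_supported=True, dsc_required=False)
print(should_enable_dsc(mandating_sink, REQUIRED_GBPS, UHBR20_PAYLOAD_GBPS))  # True
print(should_enable_dsc(polite_sink, REQUIRED_GBPS, UHBR20_PAYLOAD_GBPS))     # False
```

The first check never looks at bandwidth at all, which is the whole complaint in miniature.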

The GPU obeys. The GPU always obeys the EDID. The GPU is constitutionally incapable of arguing with a monitor about whether compression is necessary. The EDID is the monitor’s résumé, and the GPU takes it at face value.

```text
DSC: CLOCK_EN SLICE_WIDTH Bytes_pp
[0]: 1 960 256
```

Clock enabled. Compression active. On DP 1.4. On DP 2.1. On every cable, every protocol version, every configuration. The monitor wants compression. The monitor gets compression. Nobody asked why.
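Reading that excerpt programmatically is straightforward. A sketch, assuming the two-line layout shown above; on a live amdgpu system the full log is typically read from `/sys/kernel/debug/dri/0/amdgpu_dm_dtn_log` (root and a mounted debugfs required, and the DRI index may differ), and its layout varies across kernel versions. `parse_dsc_section` is a hypothetical helper, and `Bytes_pp` is left uninterpreted.

```python
import re

# The two lines from the DTN log excerpt above.
SAMPLE = """DSC: CLOCK_EN SLICE_WIDTH Bytes_pp
[0]: 1 960 256"""

ROW = re.compile(r"\[\s*(\d+)\]:\s*(.*)")


def parse_dsc_section(text: str) -> dict:
    """Map row index -> {column name: int} for rows under the 'DSC:' header."""
    header = None
    rows = {}
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("DSC:"):
            header = line[len("DSC:"):].split()
        elif header and (m := ROW.match(line)):
            values = [int(v) for v in m.group(2).split()]
            rows[int(m.group(1))] = dict(zip(header, values))
        elif line:
            header = None  # any other non-blank line ends the DSC section
    return rows


row0 = parse_dsc_section(SAMPLE)[0]
print("DSC clock enabled:", row0["CLOCK_EN"] == 1)
# Assumes equal-width slices spanning the full 7680-pixel line.
print("slices per line:", 7680 // row0["SLICE_WIDTH"])
```

Under that equal-slice assumption, a 960-pixel slice width means each 7680-pixel line is compressed as eight independent slices.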

Visually Lossless

“Visually lossless” is a term that means “mathematically lossy but perceptually identical.” The compressed signal is not the same as the uncompressed signal. Bits have been removed. Information has been discarded. But the discarded information is, according to the subjective testing VESA used to qualify DSC, information that viewers could not reliably detect.

This is almost certainly true for photographs, video, games, UI elements, and the vast majority of content that appears on a screen.

The one use case where DSC compression might be perceptible (and this is a theoretical “might” that has never been conclusively demonstrated) is small monospaced text at high density on a dark background. Terminal text. The kind of content that consists of sharp single-pixel stems, high-contrast edges, and the specific geometry that predictive, fixed-rate compression handles least gracefully.
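Why terminal text is the worst case can be shown with a deliberately crude stand-in. DSC itself chooses among several prediction modes, including a block-prediction mode and an indexed color history that help with repeated synthetic content, so this toy left-neighbor predictor (`residual_cost` is a hypothetical name, and the pixel rows are made up) exaggerates the effect. It only illustrates why sharp, high-contrast edges leave a fixed-rate coder far more to encode than smooth gradients do.

```python
# Toy illustration, not DSC's actual predictor: predict each sample from its
# left neighbor and sum the absolute residuals a coder would have to spend bits on.
def residual_cost(row):
    return sum(abs(cur - prev) for prev, cur in zip(row, row[1:]))


gradient = [100 + 2 * i for i in range(12)]  # smooth 10-bit ramp
terminal = [40, 40, 900, 40, 40, 900, 900, 40, 40, 900, 40, 40]  # bright stems, dark background

print("gradient:", residual_cost(gradient))  # 22
print("terminal:", residual_cost(terminal))  # 5160
```

Same row length, same bit budget, more than two hundred times the residual to squeeze into it.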

A developer with two 57-inch ultrawides running four terminal panes at 8-point font asked whether DSC would affect text clarity. The answer: on a Wayland desktop, where text is typically rendered with grayscale antialiasing rather than subpixel antialiasing, with DSC working on whole pixels and knowing nothing about the panel’s subpixel layout, at roughly 2.3:1 compression on a display this dense: no. Not perceptibly. Not at a normal viewing distance. Not in any way the eye could point to.

The developer accepted the math. The developer was not entirely sure the developer believed the math. But DP 1.4 with DSC was the only version that also supported DDC/CI, so the question was academic.
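How dense “this dense” is can be put in numbers. A quick calculation, assuming the 7680x2160 resolution from the bandwidth example and taking the 57-inch diagonal at face value; the glyph-size line further assumes fonts are rendered at the panel’s physical density, which many desktops do not do by default. `ppi` is a hypothetical helper.

```python
import math


def ppi(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixels per inch along the diagonal."""
    return math.hypot(width_px, height_px) / diagonal_in


density = ppi(7680, 2160, 57)
# 1 point = 1/72 inch; assumes the font is rendered at the panel's true density.
em_px = 8 / 72 * density
print(f"{density:.1f} PPI, 8 pt em = {em_px:.1f} px")
```

At about 140 PPI, an 8-point em comes out to roughly 15 to 16 pixels under that assumption, so each glyph stem spans several of the pixel groups the coder works on.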

Measured Characteristics

- Protocol version: VESA DSC 1.2a

- Compression ratio: typically 2:1 to 3:1 (fixed per stream)

- Perceptual quality: visually lossless (per VESA’s subjective testing)

- Mathematical quality: lossy (bits are removed)

- Human detection rate: ~0% (under normal viewing conditions)

- Monitors that require DSC in EDID: increasing

- Monitors that require DSC even with sufficient bandwidth: yes (Samsung Odyssey G95NC, among others)

- GPU’s ability to argue with the EDID: none

- Developer awareness of active DSC: ~0% (unless you read kernel debug logs)

- The compression nobody asked for: always on

- The quality difference nobody can see: also always on

See Also

- DDC/CI — The protocol that works on DP 1.4 and breaks on DP 2.1. The reason DSC’s bandwidth savings don’t matter.

- DisplayPort — The cable that carries the compressed signal. Both versions compress. Only one talks back.

- DPMS — Another display protocol that modern hardware interprets creatively.

- The Nesting — Where DSC was investigated across both DP versions and found to be identical and inescapable.