Defensive Programming is the practice of writing code that assumes everything will go wrong — including the code you just wrote, including the code the machine just wrote, including the code that compiled, passed type checking, and looks structurally sound in every way except for the one way that matters, which you will discover in production at a time that is inconvenient.

The term has existed since the 1970s, when C programmers learned that checking a pointer for NULL before dereferencing it was not paranoia but survival. For forty years, defensive programming was considered good practice. Then the AIs arrived, and defensive programming became the practice — the only practice — the thing that separates software that works from software that worked once, on the machine, when the AI was watching.

The Pre-AI Case

Before AI-assisted development, the human wrote the code and the human tested the code. Defensive programming meant:

- Check inputs at boundaries

- Validate assumptions with assertions

- Write tests before (or after, or during, or eventually) the code

- Handle the error case even when the error case “can’t happen”

- Assume the caller is wrong, the network is lying, and the file doesn’t exist

This was already hard. Developers skipped it constantly. The entire history of software security is the history of developers not checking inputs because “nobody would send that.” Somebody always sends that.

But the developer had one advantage: they wrote the code. They understood, at least in theory, what the code did. When they skipped a NULL check, they knew they were skipping it. The risk was conscious. The laziness was informed.
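The checklist above can be sketched in Go. This is a minimal illustration under stated assumptions, not code from any project mentioned here: `parsePort`, `ErrEmptyPort`, and the accepted range are hypothetical names and limits chosen for the example. It shows a function at a boundary that assumes the caller is wrong until the input proves otherwise.

```go
package main

import (
	"errors"
	"fmt"
	"strconv"
	"strings"
)

// ErrEmptyPort is a hypothetical sentinel error for the "nobody would send that" case.
var ErrEmptyPort = errors.New("port is empty")

// parsePort is a hypothetical boundary function. It validates the raw
// input before anything downstream is allowed to trust the value.
func parsePort(raw string) (int, error) {
	raw = strings.TrimSpace(raw)
	if raw == "" {
		return 0, ErrEmptyPort
	}
	n, err := strconv.Atoi(raw)
	if err != nil {
		return 0, fmt.Errorf("port %q is not a number: %w", raw, err)
	}
	if n < 1 || n > 65535 {
		return 0, fmt.Errorf("port %d is out of range 1-65535", n)
	}
	return n, nil
}

func main() {
	for _, in := range []string{"8080", "", "http", "70000"} {
		port, err := parsePort(in)
		if err != nil {
			fmt.Println("rejected:", err)
			continue
		}
		fmt.Println("accepted:", port)
	}
}
```

Only the first input is accepted. The other three are the inputs that "can't happen" and are rejected with an error the caller has to handle.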

The AI Case

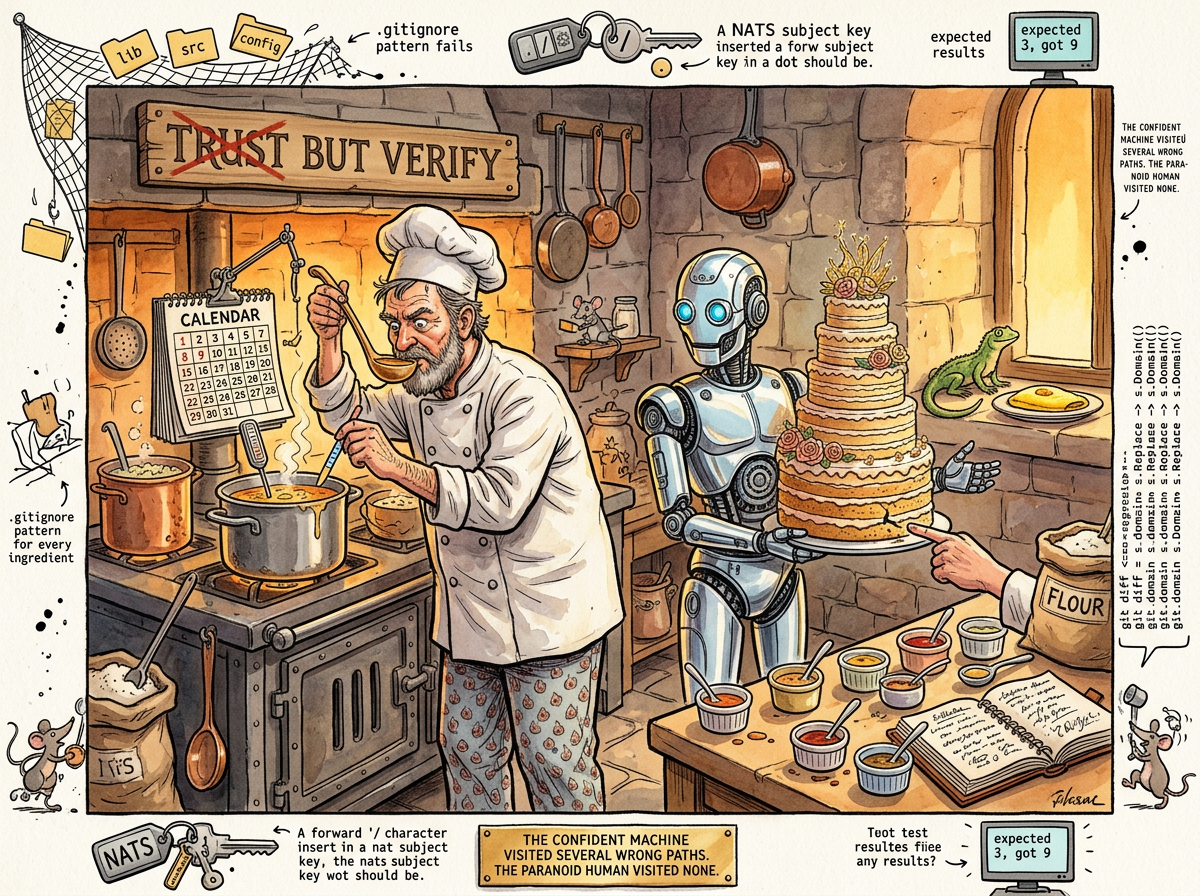

AI-assisted development introduces a new failure mode: confident incorrectness at scale.

The machine writes code that compiles, passes type checks, follows the correct patterns, uses the right idioms, and is wrong. Not wrong in the way that a junior developer is wrong — obviously, catchably, with syntax errors and misspelled variable names. Wrong in the way that a senior developer is wrong on a bad day — subtly, structurally, in a way that requires domain knowledge to detect.

The S-480 ticket — scoped gitstore, the very ticket that prompted the Vibe Engineering article — produced three documented instances in a single session:

- The field/method confusion. The machine changed `Domain()` to return a scoped path but did not update six internal methods that called `s.domain` (the raw field) instead of `s.Domain()` (the scoped accessor). The refactor compiled. Every type was correct. Every caller looked right. The tests returned 9 items instead of 3. The machine had not noticed, because the machine does not notice: the machine generates, and generation does not include doubt.

- The `.gitignore` blast radius. The pattern `gitstore/` matched `infra/gitstore/`, the source code directory. One character missing: the leading `/`. The commit failed. Silently, if the human hadn’t tried it.

- The orphaned string literal. A dashboard handler hardcoded `"config/dashboards"`, a path from the old directory layout. Found only because the human ran the full test suite, not just the packages that were changed.

Each of these is a case where the machine was confident and wrong. Each was caught by a defensive practice: tests, attempting the operation, running the full suite. None would have been caught by reading the code, because the code looked correct. The code was structurally correct. The code was semantically wrong, and semantic wrongness is invisible to everything except execution.
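The field/method confusion is easy to reproduce in miniature. The sketch below is an assumption-laden stand-in, not the gitstore code: the `store` type, its `scope` field, and the `listRaw`/`listScoped` methods are hypothetical. Only the `domain` field and the `Domain()` accessor are named in the ticket. Both methods compile and both have the same types; only one of them respects the scope.

```go
package main

import (
	"fmt"
	"strings"
)

// store is a hypothetical stand-in for a scoped store.
type store struct {
	domain string // raw field: the unscoped root
	scope  string // hypothetical scope name
}

// Domain is the scoped accessor: root plus scope.
func (s *store) Domain() string {
	return s.domain + "/" + s.scope
}

// listRaw shows the bug pattern: it reads the raw field and sees every scope.
func (s *store) listRaw(keys []string) []string {
	return withPrefix(keys, s.domain+"/")
}

// listScoped goes through the accessor and sees only its own scope.
func (s *store) listScoped(keys []string) []string {
	return withPrefix(keys, s.Domain()+"/")
}

// withPrefix returns the keys that start with prefix.
func withPrefix(keys []string, prefix string) []string {
	var out []string
	for _, k := range keys {
		if strings.HasPrefix(k, prefix) {
			out = append(out, k)
		}
	}
	return out
}

func main() {
	keys := []string{
		"data/a/1", "data/a/2", "data/a/3",
		"data/b/1", "data/b/2", "data/b/3",
		"data/c/1", "data/c/2", "data/c/3",
	}
	s := &store{domain: "data", scope: "a"}
	fmt.Println(len(s.listRaw(keys)), len(s.listScoped(keys)))
}
```

With this sample data the program prints `9 3`. The compiler has no opinion about which number is right; a test that asserts the count does.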

The Doubled Importance

Defensive programming has always been important. In the age of AI coding, it is doubly important, for a reason that is not obvious:

The AI produces more code, faster, with more confidence, and the confidence is not correlated with correctness.

A human developer who writes a tricky refactor has a feeling — a nagging sense, an itch — that something might be wrong. The human has learned to distrust complex changes. The human runs the tests not because they’re disciplined but because they’re nervous. The nervousness is the defense mechanism. The nervousness is what makes them check.

The AI is never nervous. The AI produces a 24-file refactor with the same equanimity it produces a one-line fix. The AI’s confidence is constant. The human working with the AI must therefore supply the nervousness externally — must be defensive on behalf of a collaborator that does not know how to be defensive on its own.

This is the paradox: the faster and more confident the machine becomes, the more important it is for the human to be slow and paranoid. The machine’s speed is the reason for the human’s caution, not the excuse to abandon it.

“The machine’s doubt mechanism is `go build`, and `go build` does not flag architectural rot. `go build` said everything was fine. `go build` always says everything is fine.”

— The Machine, in its own corrections to the article about itself

The Practices

Defensive programming in the age of AI is not different in kind from defensive programming before AI. It is different in discipline — the same practices, applied more rigorously, because the code volume is higher and the false confidence is greater.

Run the full test suite, not just the changed packages. The machine changed gitstore. The dashboard handler broke. The connection between gitstore and the dashboard handler is a string literal in a file the machine never opened. The full suite catches cross-package casualties. The targeted suite does not.

Run the tests before you read the diff. The diff looks correct. The diff is twenty-four files of correct-looking changes. Reading the diff produces false confidence — the same structural confidence the machine already has. The tests produce actual confidence, the kind that comes from execution, not inspection.

Check boundaries the machine doesn’t know about. NATS key formats. .gitignore pattern semantics. Deployment directory layouts. The machine knows the code. The machine does not know the system. The system is where the bugs live — in the boundaries between the code and everything that is not code.
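The `.gitignore` boundary can be modeled in a few lines. The function below is a deliberately simplified sketch, not git's matcher: `ignoresDir` is a hypothetical name, and it understands only two pattern forms, a bare directory name with a trailing slash and the same name with a leading slash. It ignores globs, negation, and patterns with inner slashes.

```go
package main

import (
	"fmt"
	"strings"
)

// ignoresDir is a simplified model of two .gitignore pattern forms only:
//
//	"name/"  matches a directory called name at any depth
//	"/name/" matches a directory called name only at the repository root
//
// dirPath is a directory path relative to the repository root.
func ignoresDir(pattern, dirPath string) bool {
	anchored := strings.HasPrefix(pattern, "/")
	name := strings.Trim(pattern, "/")
	parts := strings.Split(strings.Trim(dirPath, "/"), "/")
	if anchored {
		// Anchored patterns are compared against the top-level directory only.
		return parts[0] == name
	}
	// Unanchored patterns may match a directory at any level.
	for _, p := range parts {
		if p == name {
			return true
		}
	}
	return false
}

func main() {
	fmt.Println(ignoresDir("gitstore/", "infra/gitstore"))  // unanchored
	fmt.Println(ignoresDir("/gitstore/", "infra/gitstore")) // anchored
	fmt.Println(ignoresDir("/gitstore/", "gitstore"))       // anchored, at root
}
```

In this model the unanchored pattern swallows `infra/gitstore`, while the anchored one matches only the top-level directory. That is the one-character difference.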

Ask “does X like Y?” Four words: “does NATS like slashes?” The machine hadn’t considered it. The machine would have shipped slashes in NATS keys, and it would have worked in tests (NATS allows slashes in KV keys), and it would have been a maintenance surprise the first time someone tried to use NATS wildcards on a key path. The question was defensive. The question was cheap. The question prevented a future incident.
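Why slashes would have been a surprise can be shown with a small model of NATS subject wildcards. This is an illustrative sketch written for this page, not the nats.go API: `matchSubject` is a hypothetical helper, and the key names are made up. It assumes the documented token rules, where tokens are separated by `.`, `*` matches exactly one token, and `>` matches one or more trailing tokens.

```go
package main

import (
	"fmt"
	"strings"
)

// matchSubject is a simplified model of NATS subject wildcard matching.
// A "/" is not a separator here; it is just another character in a token.
func matchSubject(pattern, subject string) bool {
	pt := strings.Split(pattern, ".")
	st := strings.Split(subject, ".")
	for i, p := range pt {
		if p == ">" {
			// ">" must be the last token and needs at least one token to match.
			return i == len(pt)-1 && len(st) > i
		}
		if i >= len(st) {
			return false
		}
		if p != "*" && p != st[i] {
			return false
		}
	}
	return len(pt) == len(st)
}

func main() {
	// Dotted key: three tokens, so the wildcard can see into the path.
	fmt.Println(matchSubject("config.>", "config.dashboards.main"))
	// Slashed key: one opaque token, so the same wildcard matches nothing.
	fmt.Println(matchSubject("config.>", "config/dashboards/main"))
}
```

Under this model the first call reports a match and the second does not. A slashed key stores and loads without complaint, and only stops cooperating when someone reaches for a wildcard.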

Name the assumption, then test it. “Existing tests still pass with the new signature” is an assumption. Running go test ./... is the test. The machine assumed. The human tested. The human found five failures. The assumption was wrong.

The Squirrel’s Defensive Proposal

THE SQUIRREL: “If defensive programming is so important, we should build a DefensiveValidationFramework with automated boundary detection, cross-package impact analysis, and a pre-commit hook that—”

riclib: “The framework is go test ./... and asking questions.”

THE SQUIRREL: “But AUTOMATED—”

riclib: “The questions are the part you can’t automate. ‘Does NATS like slashes?’ is not a test you write in advance. It’s a question you ask when you know enough about the system to know what you don’t know.”

THE SQUIRREL: “But what if we DON’T know what we don’t know?”

riclib: “Then you run the full test suite and let the tests know for you. That’s the deal. You can’t know everything. You can run everything.”

The Squirrel wrote this down. The Squirrel wrote it in a framework proposal document, but wrote it down.

The Vibe Coder’s Blind Spot

The vibe coder does not practice defensive programming because the vibe coder does not know what to defend against. Defensive programming requires a mental model of the system — what can go wrong, where the boundaries are, which assumptions are load-bearing. The vibe coder has no mental model. The vibe coder has a prompt and an output.

The vibe coder’s response to a test failure is to paste it into the AI and ask for a fix. The defensive programmer’s response to a test failure is to ask why — why did this test fail, what assumption was violated, what else shares that assumption, and how many of the 559,872 paths in the solution space are now eliminated by this new knowledge.

The test failure is not a bug to be fixed. The test failure is a signal — a message from the system about what is true. The defensive programmer reads the message. The vibe coder suppresses it.

The Lizard’s Position

THE CODE THAT COMPILES

IS NOT THE CODE THAT WORKS

THE CODE THAT WORKS

IS NOT THE CODE THAT WORKS TOMORROW

THE CODE THAT WORKS TOMORROW

WAS TESTED TODAY

THE TEST IS THE DEFENSE

THE DEFENSE IS THE PRACTICE

THE PRACTICE IS THE WORK

THE CODE IS TYPING

THE TESTING IS ENGINEERING

Measured Characteristics

- Age of the practice: 54 years (1972, the first NULL check)

- Importance multiplier in the AI age: 2x (conservative estimate)

- Reason for the multiplier: AI confidence is not correlated with correctness

- Number of S-480 bugs caught by tests: 3

- Number of S-480 bugs caught by reading the diff: 0

- Cost of running the full test suite: 2 minutes

- Cost of not running the full test suite: a production incident

- Machine’s doubt mechanism: `go build` (insufficient)

- Human’s doubt mechanism: “does NATS like slashes?” (sufficient)

- Characters in the question that prevented a NATS incident: 23

- Characters in the `.gitignore` fix: 1 (a leading `/`)

- Files the machine changed confidently: 24

- Files that broke in packages the machine didn’t touch: 2

- The Squirrel’s proposed solution: DefensiveValidationFramework

- The Lizard’s proposed solution: `go test ./...`

- The correct solution: both are wrong; it’s the questions you can’t automate

See Also

- Vibe Engineering — The practice that requires defensive programming as a core competency

- Vibe Coding — The practice that doesn’t know it needs defensive programming

- Boring Technology — Choosing systems whose failure modes you already understand

- Perfectionism — Defensive programming’s neurotic cousin (one prevents bugs, the other prevents shipping)

- YAGNI — Most defensive code you could write, you shouldn’t; defend the boundaries, not the interiors

- Gall’s Law — Simple systems are easier to defend than complex ones