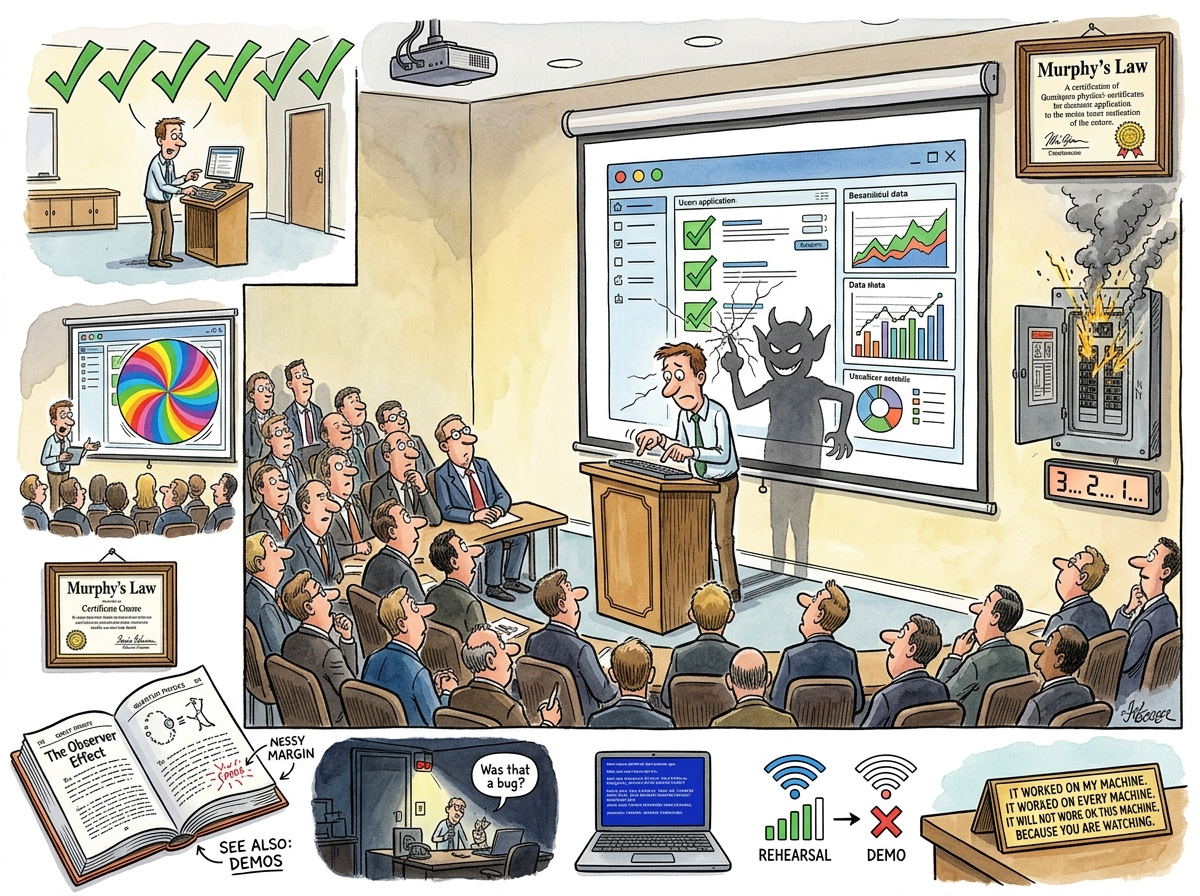

The Demo Effect is the empirically observed phenomenon in which software that functions flawlessly in development, testing, staging, rehearsal, and the thirty seconds before the meeting begins will fail — catastrophically, creatively, and with what can only be described as theatrical timing — the moment an audience is present.

It is not a bug. It is not a coincidence. It is the universe’s quality assurance department, and it only runs its test suite when someone important is watching.

“The software knows. I don’t know how. But it knows.”

— Every developer who has ever given a demo, independently arriving at the same conclusion

The Mechanism

The Demo Effect operates through a mechanism that science has not yet explained and that developers have stopped trying to explain, in much the same way that ancient mariners stopped trying to explain why the sea occasionally ate ships and instead simply brought offerings.

The leading theories are:

The Observer Effect (Quantum) — In quantum mechanics, the act of observation alters the state of the system being observed. In demo mechanics, the act of observation alters the state of the system being observed, the network it runs on, the database it queries, the API it calls, and — in one documented case — the electrical grid of the building it occupies.

The Confidence Threshold — Software can detect confidence. Below a certain threshold — during nervous testing, careful QA, anxious rehearsal — the software behaves, because the developer’s anxiety functions as a form of prayer. Above the threshold — “it’s rock solid, I’ve tested it fifty times” — the software interprets confidence as hubris, and hubris must be corrected.

The Audience Conjecture — The probability of failure scales with the importance of the audience. A demo for your team: minor glitch. A demo for your manager: noticeable delay. A demo for the client: wrong data. A demo for the board: the application achieves sentience, displays something from your browser history, and then crashes.

“I once calculated the failure probability as a function of audience seniority. The curve was exponential. The CEO data point was an outlier, but only because the application crashed before it could fail in a more interesting way.”

— The Caffeinated Squirrel, who presented the calculation, during which the slide deck crashed

The Documented Cases

The literature is vast. Every developer has a story. The stories are all the same story, which is itself a form of evidence that the Demo Effect is not random but systematic.

The Classic: “It worked five minutes ago.” The developer runs the demo at 9:55. Everything works. The demo starts at 10:00. Nothing works. Conditions at 9:55 and at 10:00 are identical in every measurable way (same code, same data, same network, same machine), except that at 10:00 there are fourteen people in the room, and the software can count.

The Network Betrayal: WiFi signal during rehearsal: five bars. WiFi signal during demo: the WiFi has opinions about your presentation and has chosen not to participate. The same WiFi. The same room. The same access point. The access point was simply not being observed during rehearsal.

The Cascade: One small thing fails. The developer recovers. A second thing fails. The developer adapts. A third thing fails. The developer begins narrating the failures as though they are features. A fourth thing fails. The developer’s voice acquires the specific calm of someone who has accepted that the universe is not on their side and has decided to find it funny. The audience cannot tell if this is a disaster or a very dry comedy set.

The Inverse Demo Effect: Extremely rare. The software works better during the demo than it ever has before. This is more terrifying than the Demo Effect, because it means the software is capable of performing well and has been choosing not to. The developer spends the rest of the day unable to reproduce the good performance.

The Power Outage Incident

The most extreme documented case of the Demo Effect involves riclib, a form submission, and the electrical infrastructure of an office building.

The setup was routine. A client demo. A form with several fields. Data entry, validation, submission. The application had been tested. The application worked. The application had worked every time it had been tested, which — as we have established — is the precondition for the Demo Effect, not the prevention of it.

riclib filled in the fields. The client watched. riclib’s finger descended toward the OK button with the specific confidence of a developer who has clicked this button four hundred times in testing and has never once seen it fail.

He pressed OK.

The power went out.

Not the application. Not the server. Not the network. The building. The electrical grid of the entire office ceased to function at the precise moment — not the approximate moment, not the general vicinity of the moment, the precise moment — that the OK button received its click event.

The emergency lights switched on. The room was bathed in the pale orange glow of battery-powered safety lighting. The server was down. The monitors were dark. The application existed only in the memory of everyone who had been watching it, which is the most ephemeral deployment environment available.

In the silence that followed — the specific silence of a room full of professionals processing an event that should not have been possible — someone asked:

“Was that a bug?”

The question was sincere. The person asking it had been watching a software demo, and the software had been clicked, and the world had ended, and in their mental model the software was the most likely cause. The alternative — that the electrical grid of a commercial office building had independently chosen to fail at the exact moment a developer pressed a button — was, to this person, less plausible than a bug.

They were wrong about the cause. They were right about the correlation. The Demo Effect does not distinguish between software failures and infrastructure failures. The Demo Effect does not care how the demo fails. The Demo Effect only requires that it fails, and it will recruit whatever systems are necessary to achieve this outcome, up to and including the municipal power grid.

“The software didn’t crash. The building crashed. The Demo Effect exceeded its normal parameters and affected the physical world. This is either a bug in the universe or a feature. I suspect it is a feature.”

— The Lizard, who was not present but who heard the story and blinked once, slowly, which is the Lizard’s equivalent of a standing ovation

Mitigation Strategies

Developers have attempted to mitigate the Demo Effect through various approaches, nearly all of which have failed:

Pre-recorded demos: Eliminates the live failure. Also eliminates the audience’s trust, because everyone knows a pre-recorded demo worked at least once, and “it worked at least once” is the lowest possible bar for software quality.

Backup environments: “If the primary fails, we’ll switch to the backup.” The backup has never been tested under demo conditions, because testing the backup under demo conditions would trigger the Demo Effect on the backup, which would leave you with no backup, which is worse than having an untested backup. This is the Demo Effect’s checkmate.

The “let me just restart” gambit: Restarting during a demo buys approximately ninety seconds of audience goodwill, during which the developer explains that “this never happens” while the audience nods with the specific sympathy of people who have all said “this never happens” and know exactly what it means.

Lowered expectations: “I’m going to show you something that’s still in development.” This is the only strategy that has any empirical support, because it recalibrates the Confidence Threshold below the Demo Effect’s activation energy. The software, sensing humility rather than hubris, occasionally allows the demo to proceed. Occasionally.

The Paradox

The Demo Effect creates a paradox that has no resolution:

If you are confident the demo will work, the Demo Effect activates, and the demo fails.

If you are not confident the demo will work, the demo might work, but you have already communicated uncertainty to your audience, which is a different kind of failure.

If you are genuinely uncertain (if you have achieved the rare state of authentic not-knowing), the software cannot determine whether to fail or succeed, and the demo enters a superposition that collapses only when the first audience member checks their phone, which the developer interprets as “it’s not working” regardless of what is actually happening on screen.

Measured Characteristics

Demos that worked in rehearsal: all of them

Demos that worked identically in front of an audience: classified

Probability of failure (solo): ~0%

Probability of failure (team): ~5%

Probability of failure (manager): ~15%

Probability of failure (client): ~40%

Probability of failure (board): ~80%

Probability of failure (investor): ~95%

Building power failures caused: 1 (documented)

WiFi bars during rehearsal: 5

WiFi bars during demo: philosophical

Times "this never happens" has been said: ∞

Times it was true: 0

Pre-recorded demos that built trust: 0

The OK button's opinion: not available (building lost power)

The question "was that a bug?": sincere

The answer: technically no, spiritually yes
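
For anyone planning a run of demos, the per-audience figures above can be treated as a lookup table. The sketch below is illustrative only: the dictionary and function names are hypothetical, the numbers are copied from the list above with the "~" dropped, and the independence of successive demos is an assumption of the sketch that nothing in the record supports.

```python
# Approximate failure probabilities, copied from the figures listed above.
# The names used here are hypothetical, chosen for this illustration.
FAILURE_PROBABILITY = {
    "solo": 0.00,
    "team": 0.05,
    "manager": 0.15,
    "client": 0.40,
    "board": 0.80,
    "investor": 0.95,
}


def chance_all_succeed(audiences):
    """Return the probability that every demo in the sequence works.

    Assumes each demo fails independently of the others. That is an
    assumption of this sketch, not a property of the Demo Effect.
    """
    chance = 1.0
    for audience in audiences:
        chance *= 1.0 - FAILURE_PROBABILITY[audience]
    return chance


if __name__ == "__main__":
    tour = ["team", "manager", "client"]
    print(f"Chance the whole tour works: {chance_all_succeed(tour):.2%}")
```

Under these assumptions, a tour that escalates from team to manager to client survives intact a little under half the time, and adding an investor to the end of the list makes the arithmetic unkind.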

See Also

- Murphy’s Law — Whatever can go wrong will go wrong, especially during demos

- Confidence — The fuel the Demo Effect burns

- Ceremony — Demos are ceremonies, and ceremonies attract chaos

- riclib — The man who once crashed a building with a button

- The Caffeinated Squirrel — Who tried to present the failure probability curve and proved it simultaneously