The Pentium is the name Intel gave to its fifth-generation x86 processor in 1993, and it represents — more than any other product in computing history — the moment when a processor architecture became too complex for any single human being to understand.

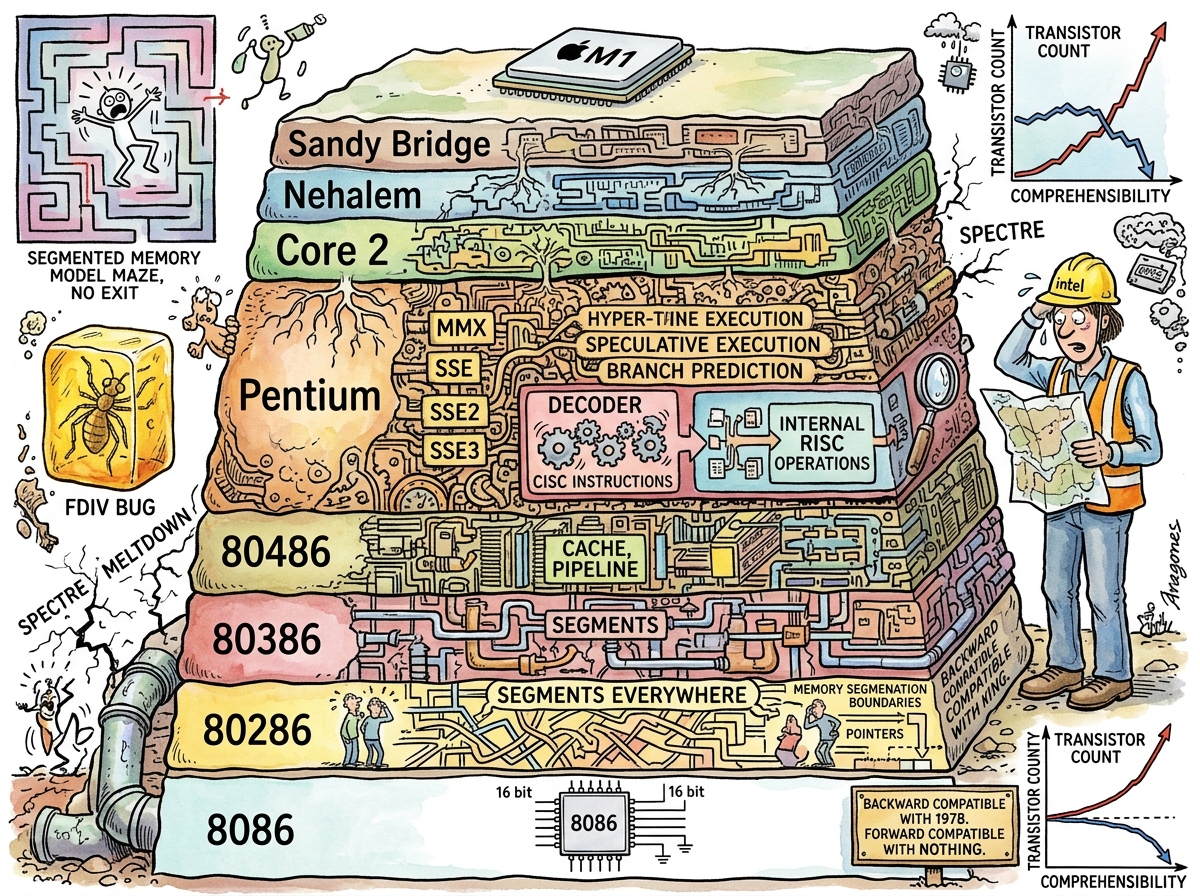

The Z80 instruction set fit on a sheet of paper. The 6502 had 56 instructions. The 68000 was orthogonal and elegant. The Pentium is none of these things. The Pentium is a monument to backward compatibility, a geological formation of accumulated decisions dating back to 1978, each layer added to the one below without ever removing anything, because removing anything would break the software that depended on the thing being there, and the software that depended on the thing being there was all of the software in the world.

The Pentium is what happens when you cannot start over. The Pentium is the Rewrite that never happened, and the accumulated Technical Debt of the rewrite not happening, and the proof that sometimes not rewriting is worse than rewriting, except that rewriting was never possible because the world ran on x86 and the world cannot be rebooted.

The Geological Model

The x86 architecture is best understood not as a design but as a sedimentary formation:

8086 (1978) — The foundation. Sixteen-bit. Segmented memory (because 16-bit registers couldn’t address more than 64K without segments, and the segments would haunt the architecture for twenty years). Real mode. No protection. Simple enough that a person could understand it.

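80286 (1982) — Protected mode added. But the 286 couldn’t switch from protected mode back to real mode without resetting the CPU. IBM’s workaround in the PC/AT: have the keyboard controller pulse the reset line, and have the BIOS notice on the way back up that this was a planned reset and jump back into the program. The faster trick that followed was to trigger a triple fault on purpose, crashing the CPU into a reset and so into real mode. This is the first time the x86 architecture required a crash as a feature.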

80386 (1985) — Finally 32-bit. Virtual memory. Paging. Protected mode that actually worked. But still backward-compatible with the 8086, which means the segments are still there, the real mode is still there, the 16-bit instructions are still there. The 68000 had a flat address space and 32-bit registers in 1979 (with only 24 address lines on the pins, but no segments). The x86 took until 1985 and kept the segments anyway.

80486 (1989) — On-chip cache. Pipeline. Integrated FPU (previously a separate chip). The 486 was when x86 started to be fast, not because the architecture was good but because Intel threw transistors at it.

Pentium (1993) — Superscalar (two pipelines). Branch prediction. The FDIV bug (a floating-point division error that affected specific operand combinations, discovered by a mathematics professor, played down by Intel until public pressure forced a replacement programme). The FDIV bug is important not because it was a bug (every processor has bugs) but because it was the first widely known bug in a processor so complex that testing every combination was no longer feasible.

Pentium Pro / Pentium II (1995–1997) — The revolution that proved the architecture was unsustainable: internally, the Pentium Pro decoded x86 CISC instructions into micro-ops, simple RISC-like internal operations that the execution engine could handle efficiently. Intel had, in effect, admitted that RISC was right all along. The x86 instruction set was now a compatibility layer, a translation interface between the software that expected CISC and the hardware that wanted RISC. ARM had been RISC from the start. The x86 arrived at RISC by building a CISC-to-RISC translator and hiding it inside the chip.

And then: MMX, SSE, SSE2, SSE3, SSSE3, SSE4.1, SSE4.2, AVX, AVX2, AVX-512. Each one a new set of SIMD instructions, each one added without removing the old ones, each one growing the instruction set like rings on a tree.
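The translation step at the Pentium Pro layer is easier to picture with a toy. What follows is a minimal sketch in Python, not Intel's decoder: the micro-op names (`load`, `add`, `store`), the temporary `tmp0`, and the `decode` function are all invented for illustration, and the real micro-op format is internal to the chip. It shows only the shape of the idea, that one x86 instruction which reads, modifies, and writes memory becomes several simple operations.

```python
from typing import List, Tuple

# Hypothetical micro-op format: (operation, destination, source).
MicroOp = Tuple[str, str, str]


def is_memory(operand: str) -> bool:
    """Treat anything written in square brackets as a memory operand."""
    return operand.startswith("[") and operand.endswith("]")


def decode(instruction: str) -> List[MicroOp]:
    """Split a toy 'add dst, src' instruction into simple micro-ops."""
    mnemonic, operands = instruction.split(maxsplit=1)
    dst, src = (part.strip() for part in operands.split(","))
    if mnemonic != "add":
        raise ValueError("this toy decoder only understands 'add'")
    if is_memory(dst) and is_memory(src):
        raise ValueError("x86 'add' cannot take two memory operands")

    if is_memory(src):
        # Memory source: load it into a temporary, then add registers.
        return [("load", "tmp0", src), ("add", dst, "tmp0")]
    if is_memory(dst):
        # Memory destination: load, add, then store the result back.
        return [("load", "tmp0", dst), ("add", "tmp0", src), ("store", dst, "tmp0")]
    # Register to register: one instruction, one micro-op.
    return [("add", dst, src)]


register_form = decode("add eax, ebx")    # a single micro-op
memory_form = decode("add [ebx], eax")    # load, add, store
```

The programmer writes one instruction either way. Only the decoder knows that the second form is really three.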

The Z80 had 158 instructions. The modern x86-64 has, by some counts, over 1,500 documented instructions (the total depends on whether you count mnemonics, operand forms, or encodings) and an unknown number of undocumented ones. No human being knows the complete instruction set. No human being can know it. The Pentium’s descendants are designed by teams with CAD tools, verified by simulation, and understood, to the extent they are understood, in specialised subsections, never in totality.
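For contrast, the bottom layer of the formation really was small enough to hold in your head. The 8086's segmented addressing is one line of arithmetic: shift the 16-bit segment left by four bits, add the 16-bit offset, keep the low 20 bits. A minimal sketch in Python (the function name `physical_address` is mine, chosen for illustration):

```python
def physical_address(segment: int, offset: int) -> int:
    """Real-mode 8086 address: (segment << 4) + offset, wrapped to 20 bits."""
    if not (0 <= segment <= 0xFFFF and 0 <= offset <= 0xFFFF):
        raise ValueError("segment and offset must each fit in 16 bits")
    # The 8086 has 20 address lines, so anything above 1 MB wraps around.
    return ((segment << 4) + offset) & 0xFFFFF


# Two different segment:offset pairs name the same byte.
first = physical_address(0x1234, 0x0010)
second = physical_address(0x1235, 0x0000)

# The last byte of the 1 MB address space, and one step past it.
top = physical_address(0xFFFF, 0x000F)
wrapped = physical_address(0xFFFF, 0x0010)
```

Here `first` and `second` are both 0x12350, `top` is 0xFFFFF, and `wrapped` comes back around to zero. That is the whole memory model of 1978: every byte has many names, and the top of memory folds over onto the bottom.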

The age of knowing the machine ended here.

The Speculative Execution Disaster

The Pentium line’s most consequential innovation was speculative execution. The original Pentium already predicted branches; its successor, the Pentium Pro, went further and executed the predicted path out of order before knowing whether the guess was correct, discarding the results if it was wrong. When the prediction is right (and on typical code it is right well over 90% of the time), the program runs faster. When the prediction is wrong, the work is thrown away and the correct path is executed.
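The guessing itself can be surprisingly simple. Below is a minimal sketch, in Python, of the textbook two-bit saturating counter: a classroom model of branch prediction, not a description of the predictor inside any actual Pentium. The function name and the example branch patterns are assumptions made for illustration.

```python
def simulate_two_bit_predictor(outcomes):
    """Return the fraction of branches one 2-bit saturating counter predicts correctly."""
    if not outcomes:
        raise ValueError("need at least one branch outcome")
    counter = 2  # 0 and 1 predict not-taken, 2 and 3 predict taken
    correct = 0
    for taken in outcomes:
        predicted_taken = counter >= 2
        if predicted_taken == taken:
            correct += 1
        # Nudge the counter toward what actually happened, saturating at 0 and 3.
        counter = min(counter + 1, 3) if taken else max(counter - 1, 0)
    return correct / len(outcomes)


# A loop branch: taken nine times, then falls through, repeated 100 times.
loop_branch = ([True] * 9 + [False]) * 100
loop_accuracy = simulate_two_bit_predictor(loop_branch)

# A branch that strictly alternates is much harder for this scheme.
alternating = [True, False] * 500
alternating_accuracy = simulate_two_bit_predictor(alternating)
```

On the loop pattern the counter is wrong only at each loop exit, so it scores 0.9. On the alternating pattern it scores 0.5, no better than a coin. Real predictors keep far more history than two bits, which is how they do better, and also why nobody can quite say what they will guess.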

This was brilliant. This was also, it turned out more than two decades later, a catastrophic security vulnerability.

In 2018, researchers published Spectre and Meltdown: attacks that exploited speculative execution to read memory that a program should not have access to. The speculative path — the one that should have been “discarded” — left traces in the cache. By measuring cache timing, an attacker could infer the contents of protected memory. The processor’s own cleverness was the vulnerability.
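The shape of the leak can be modelled without touching real hardware. The following Python sketch is a simulation only: `ToyCache`, the two latency constants, and the victim and attacker functions are all invented for illustration, nothing here measures real time or triggers real speculation, and it is not a working attack. It shows just the one idea that matters, that a discarded computation can still leave a line in the cache, and that a fast access gives it away.

```python
HIT_LATENCY, MISS_LATENCY = 1, 100  # made-up cycle counts for the model


class ToyCache:
    """A bare set of cached line numbers; real caches have sets, ways, and eviction."""

    def __init__(self):
        self.lines = set()

    def flush(self):
        self.lines.clear()

    def access(self, line):
        """Touch a line and return the modelled latency of that access."""
        latency = HIT_LATENCY if line in self.lines else MISS_LATENCY
        self.lines.add(line)
        return latency


def victim_speculates(cache, secret_byte):
    """Model a mispredicted path: the result is discarded, the cache fill is not."""
    cache.access(secret_byte)  # stands in for reading probe_array[secret_byte * line_size]


def attacker_recovers(cache):
    """Time one access per possible byte value; the single fast one is the secret."""
    latencies = {line: cache.access(line) for line in range(256)}
    return min(latencies, key=latencies.get)


cache = ToyCache()
cache.flush()
victim_speculates(cache, secret_byte=0x2A)
recovered = attacker_recovers(cache)
```

In this model `recovered` equals 0x2A: the attacker never reads the secret, only the clock. Real attacks have to fight noise, prefetchers, and eviction, but the principle is this small.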

The fixes were microcode updates that restrict branch prediction across privilege boundaries, barriers between speculative and committed state, and operating-system patches that unmap kernel memory while user code runs. Early measurements put the cost at up to 30% on some workloads, mostly those heavy in system calls. More than two decades of speculative execution performance gains, partially rolled back because the speculation was too clever.

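ARM’s out-of-order cores were affected by Spectre too, and a few by Meltdown or a close variant. But ARM’s simpler in-order cores, the kind found in microcontrollers and in the low-power half of many phones, do not speculate in the exploitable way and needed no fix, and classic 32-bit ARM code that uses conditional execution in place of branches gives the predictor less to guess at. Across the ARM population as a whole, the impact was smaller and the mitigations less costly.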

The Pentium made the processor faster by making it guess. The guessing leaked secrets. The 6502 did not guess. The 6502 executed the instruction you gave it, at the speed you expected, with no speculation, no leaks, and no security patches required forty years later.

The FDIV Bug: A Parable

On October 30, 1994, Thomas Nicely, a mathematics professor at Lynchburg College, made public what he had spent months tracking down: the Pentium’s floating-point division unit produced incorrect results for certain operand combinations. Specifically, the reciprocals of certain large prime numbers were wrong from about the ninth significant digit onward.
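The check that later circulated most widely was simpler than Nicely's primes: divide 4195835 by 3145727, multiply back, and subtract. Those operands are not Nicely's; they come from the test that spread in late 1994, and the function name below is my own. A minimal sketch in Python, which of course exercises whatever divider the host machine has, so on any modern CPU it should report nothing wrong:

```python
def fdiv_residual(x: float = 4195835.0, y: float = 3145727.0) -> float:
    """Return x - (x / y) * y, which should be zero or within rounding error of it."""
    return x - (x / y) * y


residual = fdiv_residual()

# Ordinary rounding can leave a residual around 1e-9 at most for these operands.
# A residual in the hundreds means the divider itself is wrong.
looks_flawed = abs(residual) > 1.0
```

A correct divider gives a quotient of about 1.33382. The flawed Pentium gave about 1.33374, and the residual came out as 256 instead of zero. One line of arithmetic, and the answer was off in the fifth significant digit.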

Intel’s initial response was that the bug affected “only” one in nine billion division operations and that most users would never encounter it. Intel offered replacements only to customers who could demonstrate they were affected — a policy that assumed users should diagnose hardware errors in their CPUs, which is like asking patients to diagnose errors in their pacemakers.

The public response was immediate and devastating. IBM halted shipments of its Pentium-based PCs. Intel eventually offered a replacement to anyone who asked, and took a $475 million charge for it. The FDIV bug became the most famous hardware defect in computing history, not because it was the most severe but because it was the first time the public watched a processor prove too complex for exhaustive testing, and the manufacturer’s response revealed that complexity had exceeded not just engineering capacity but institutional humility.

A Z80 could be tested exhaustively. A 6502 could be tested exhaustively. A Pentium could not. The FDIV bug was not a failure of engineering. It was a failure of scale. The machine had exceeded the ability of its makers to know it completely, and the age of complete knowing — the age of the Z80, the 6502, the 68000 — was over.
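The failure of scale is easy to put numbers on. The sketch below, in Python, counts operand pairs for a single two-operand operation and asks how long it would take to try them all. The test rates are assumptions picked to be generous, and 64-bit operands understate the Pentium's case, since its floating-point unit worked internally on 80-bit values. It also ignores everything that depends on processor state, which only makes the real problem larger.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def operand_pairs(operand_bits: int) -> int:
    """Number of distinct input pairs for one two-operand operation."""
    return 2 ** (2 * operand_bits)


def years_to_test_all_pairs(operand_bits: int, tests_per_second: float) -> float:
    """Years needed to try every operand pair once at the given (assumed) rate."""
    return operand_pairs(operand_bits) / tests_per_second / SECONDS_PER_YEAR


# One 8-bit operation at an assumed one million tests per second.
eight_bit_pairs = operand_pairs(8)                      # 65,536 pairs
eight_bit_seconds = eight_bit_pairs / 1e6               # a fraction of a second

# One 64-bit floating-point division at an assumed one billion tests per second.
double_pairs = operand_pairs(64)                        # 2**128 pairs
double_years = years_to_test_all_pairs(64, 1e9)         # on the order of 1e22 years
```

Every input pair of an 8-bit add can be checked before you finish reading this sentence. Every input pair of a 64-bit divide, at a billion tests per second, takes on the order of ten thousand billion billion years. Somewhere between those two numbers, knowing the machine stopped being a matter of effort.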

Why It Won

The Pentium — and the x86 architecture generally — won despite being technically inferior to the 68000 and its successors, despite the segmented memory model, despite the CISC-to-RISC translation layer, despite the FDIV bug, despite Spectre and Meltdown.

It won because of IBM. IBM chose the 8088 (a cost-reduced 8086) for the original IBM PC in 1981. The IBM PC became the standard. The standard attracted software. The software attracted users. The users attracted more software. The ecosystem became self-reinforcing, and by the time the 68000 was clearly superior and ARM was clearly the future, the x86 had too much software to abandon.

This is Conway’s Law scaled up from an organisation to an industry: the industry’s structure (IBM-compatible PCs) determined the architecture (x86), regardless of technical merit. The 68000 lost not because it was worse but because it was in the wrong machines. ARM took forty years to win because the ecosystem had to shift, from desktops (where x86 was entrenched) to mobile (where x86 could not follow).

The Pentium won by being backward-compatible with the 8086. The 8086 won by being in the IBM PC. The IBM PC won by being IBM. And the architecture that resulted — layers of sediment, each one backward-compatible with 1978, each one adding complexity, none removing anything — is the geological record of an industry that could not start over.