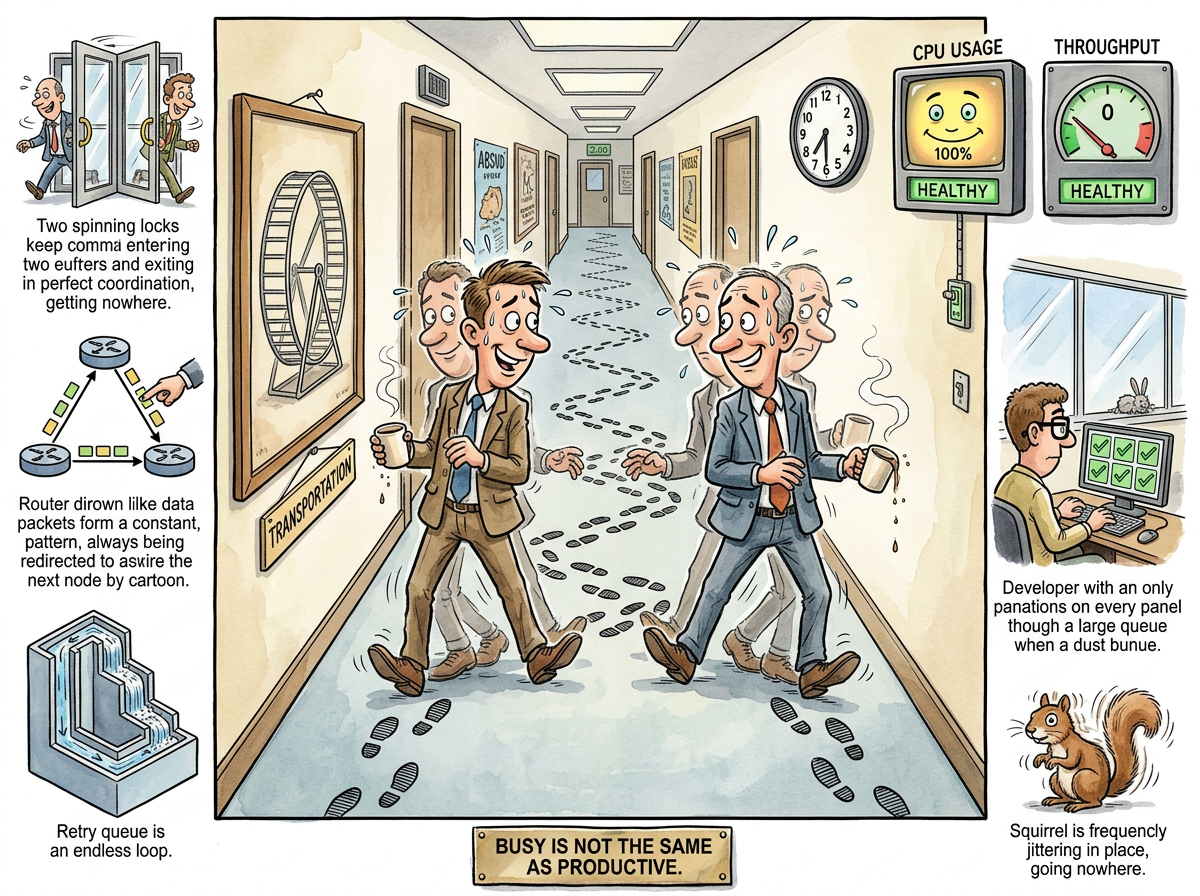

Livelock is a concurrency phenomenon in which two or more processes are actively running, consuming resources, responding to each other, and accomplishing absolutely nothing. Unlike deadlock, where processes freeze and the system goes quiet, livelock keeps every CPU at full utilization while producing zero useful work. The system is alive, technically, in the same way a hamster wheel is transportation.

The distinction matters. Deadlock is easy to detect: nothing is happening. Livelock is difficult to detect: everything is happening. The processes are not stuck. They are busy. They are busy changing state in response to each other’s state changes, and the state they keep changing to is the one that causes the other process to change state, and so on, forever, with great enthusiasm.

“THE DEADLOCKED SYSTEM HAS THE DECENCY TO STOP

THE LIVELOCKED SYSTEM KEEPS GOING

DECENCY IS UNDERRATED”

— The Lizard

The Corridor Problem

The canonical example is two people meeting in a narrow corridor.

Person A steps right to let Person B pass. Person B, at the same instant, steps left — their left, which is the same direction. They are now both standing to the same side. They make eye contact. They smile. They both step to the other side. They are now both standing to the other same side. They smile again. They step again. They are synchronised. They are polite. They are stuck.

This is livelock in its purest form. Neither person is blocked. Both are actively trying to resolve the situation. Both are making correct decisions based on the information available to them. The problem is that the information available to each is the other’s previous position, which has already changed by the time they act on it.

In software, the corridor is a shared resource. The two people are threads. The stepping aside is releasing a lock. The smiling is a context switch.

“I have modelled the corridor scenario to fourteen billion iterations. At no point does either party consider standing still. Standing still is the optimal strategy. It is also the one that feels rude. Politeness, I have concluded, is O(infinity).”

— The Passing AI

The Mechanism

Livelock requires three conditions:

Shared state. Two or more processes are reading and writing the same resource — a lock, a flag, a routing table, a seat at a conference room table.

Reactive symmetry. Each process responds to the other’s action with a complementary action. Process A yields because B is waiting. Process B yields because A is waiting. Both yield. Both resume. Both yield again.

No symmetry breaker. There is nothing — no random delay, no priority ordering, no coin flip — to ensure one process goes first and the other waits. They are perfectly matched. They are equals. Equality, in this context, is the problem.

Deadlock occurs when processes refuse to yield. Livelock occurs when processes insist on yielding. The system has too little cooperation in one case and too much in the other. The throughput is the same.

Observed Habitats

Retry storms with identical backoff. Two clients fail to acquire a resource. Both wait exactly 100 milliseconds. Both retry simultaneously. Both fail. Both wait 100 milliseconds. Both retry. The Retry Storm article covers the herd variant; livelock is the intimate, two-party version, like a retry storm for introverts.

Routing loops. Router A determines that the best path to a destination is through Router B. Router B determines that the best path is through Router A. Packets bounce between them until their TTL expires. The routers are busy. The packets are going nowhere. The network monitoring dashboard shows healthy link utilization.

Lock-free data structures gone wrong. A compare-and-swap loop retries when another thread modifies the value first. Two threads modify the value simultaneously, causing both CAS operations to fail, causing both to retry, causing both to fail. The data structure is lock-free. It is also progress-free.

Polite thread yielding. Thread A detects contention and calls yield(). Thread B detects contention and calls yield(). The scheduler runs A. A detects contention and yields. The scheduler runs B. B detects contention and yields. The scheduler runs A. The CPU is at 100%. The work completed is 0%.

The Diagnosis Problem

Deadlock announces itself. The process stops. The health check fails. The alert fires. Someone investigates.

Livelock hides. The process is running. The health check passes — the process responds to health checks, it just doesn’t do anything else. CPU usage is high, which looks like the system is working hard. Thread counts are normal. Memory is stable. Every dashboard is green. The only symptom is that requests are not being served, and that symptom lives in a different dashboard that nobody is watching because all the other dashboards are green.

“I once observed a livelocked system pass seventeen health checks per second while serving zero requests. It was, by every metric we had chosen to measure, the healthiest failure in production history.”

— The Caffeinated Squirrel

The Cure: Introducing Imperfection

The solution to livelock is to break the symmetry. This is done by making the system slightly less fair, slightly less predictable, or slightly less polite.

Randomized backoff. Instead of retrying after exactly 100 milliseconds, retry after 100 milliseconds plus a random interval between 0 and 50 milliseconds. The two processes will almost certainly choose different delays. One will go first. The symmetry is broken. The corridor is clear.

Priority ordering. Assign each process a priority. When two processes yield to each other, the higher-priority process goes first. This replaces infinite politeness with a hierarchy, which is less egalitarian but terminates.

Exponential backoff with jitter. Each failed attempt increases the delay and adds randomness. This is randomized backoff’s more aggressive cousin. It is the standard fix for Retry Storms, and it works for livelock for the same reason: it makes it statistically improbable for two processes to remain synchronised.

Doing nothing. In the corridor scenario, the optimal strategy for at least one party is to stand still and let the other person walk around them. In software, this translates to having one process back off completely — not yield, not retry, but wait — while the other proceeds. This requires one process to be less polite, which is to say: more effective.

Measured Characteristics

CPU utilisation during livelock: 100%

Useful work during livelock: 0%

Appearance to monitoring: perfectly healthy

Appearance to users: completely broken

Time to detect deadlock: seconds (something stopped)

Time to detect livelock: hours to days (everything is running)

Corridor encounters resolved per day: most of them (humans add jitter naturally)

Corridor encounters that become legend: the ones where both people apologise

Fix complexity: one random number generator

Fix elegance: negative (chaos is the solution)

See Also

- Priority Inversion — A low-priority process holds a resource that a high-priority process needs. The system is busy running medium-priority tasks that help no one. Made famous by a Mars rover that needed a mutex expert 48 million miles away.

- Thundering Herd — Livelock’s extroverted relative. Instead of two processes being too polite, a hundred processes are too simultaneous.

- The Halting Problem — The theoretical guarantee that you cannot, in general, determine whether a program will finish. Livelock is the practical demonstration that a program can run forever while looking like it is about to finish any moment now.

- Retry Storm — What happens when livelock scales horizontally. Two polite processes become two hundred polite processes, and the corridor becomes a motorway.