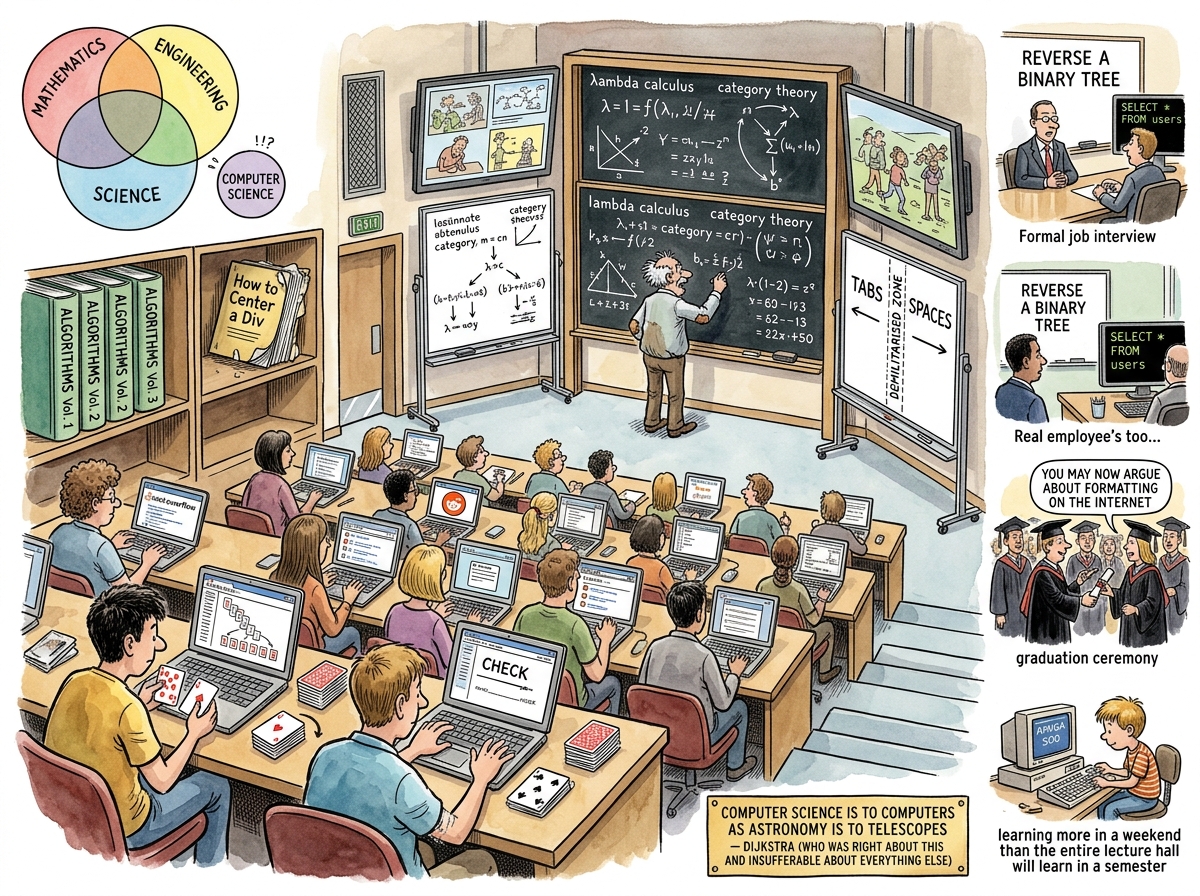

Computer Science is a field of study that has the word “science” in its name but whose practitioners mostly argue about tabs versus spaces. It is a science the way political science is a science — it borrows the word for respectability and then does something else entirely. Most Computer Science graduates have never conducted an experiment, published a paper, or used the scientific method. What they have done is argue about whether arrays should be zero-indexed, which is not science but is definitely a belief system.

The field was formally named at the 1956 Dartmouth Conference, where a group of academics needed something that sounded respectable enough for grant applications. They could have called it Applied Logic, or Automated Reasoning, or What Happens When Mathematicians Get Funding. They chose Computer Science, which — as Edsger Dijkstra later pointed out — is about as descriptive as calling surgery “knife science.”

“Computer Science is no more about computers than astronomy is about telescopes.”

— Edsger Dijkstra, who was right about this and insufferable about everything else

The Naming Problem

The naming problem is the foundational crisis of Computer Science, and it has never been resolved, because resolving it would require the field to decide what it actually is, which it cannot do, because it is at least four different things wearing a trench coat.

Is it science? Science requires the scientific method: hypothesis, experiment, observation, replication. Computer Science departments primarily produce proofs, which is mathematics, and software, which is engineering, and arguments about formatting, which is theology.

Is it mathematics? Many of the foundational results of Computer Science — Turing’s halting problem, Church’s lambda calculus, Gödel’s incompleteness theorems — are mathematics by any definition. But no mathematics department would accept a thesis titled “Benchmarking React Versus Svelte on a Todo App,” and that is closer to what most CS graduates actually do.

Is it engineering? Engineering requires licensure, liability, and the occasional bridge. Software has none of these. A civil engineer whose bridge collapses goes to prison. A software engineer whose deployment collapses writes a post-mortem and goes to lunch.

Is it suffering? The students in the 3 AM lab with a segmentation fault and a deadline would say yes, but suffering is not typically listed as an academic discipline, though it probably should be.

“Computer Science is three disciplines in a trench coat. Mathematics provides the theorems. Engineering provides the salaries. Science provides the name. None of them know what the other two are doing in there.”

— The Lizard, who is not in a trench coat and has never needed one

What CS Departments Teach vs. What Developers Actually Do

The curriculum of a Computer Science degree is a carefully curated body of knowledge that bears the same relationship to professional software development as a medical school anatomy class bears to a knife fight: technically relevant, practically insufficient, and not what anyone remembers in the moment.

What is taught:

- Algorithms and data structures (implementing a red-black tree from scratch)

- Automata theory and formal languages (proving that some problems are unsolvable)

- Operating systems (writing a toy kernel that boots once and then doesn’t)

- Compiler design (building a lexer for a language nobody will use)

- Discrete mathematics (graph theory, combinatorics, proofs by induction)

What developers actually do:

- Copy code from Stack Overflow

- Write YAML configuration files

- Debug CSS centering issues

- Argue about indentation in pull request reviews

- Attend meetings about meetings

- Write

SELECT * FROM users WHERE id = ?and call it a day

The gap is not a gap. It is a canyon. On one side: Turing machines, Church-Turing theses, and the theoretical limits of computation. On the other side: “why does this Docker container keep crashing.” The canyon has no bridge. It has a diploma, which serves as a receipt for four years of crossing the canyon in the wrong direction.

“I graduated with a degree in Computer Science. My first week on the job, I was asked to center a div. My degree had not prepared me for this. Four years of lambda calculus, and the thing I needed was

margin: 0 auto.”

— An anonymous developer, channelling the experience of every anonymous developer

The Theoretical Foundations Nobody Uses (But Everyone Should)

This is the tragedy of Computer Science education: the parts that nobody remembers are the parts that matter most.

Automata theory teaches you that some problems are provably unsolvable. This is the single most useful concept in all of computer science, because it tells you when to stop trying. A developer who understands the halting problem will not spend three weeks trying to write a program that detects all infinite loops. A developer who does not understand the halting problem will spend three weeks trying, fail, and then blame the language.

Information theory tells you the absolute minimum number of bits required to encode a message. Every developer who has ever written a compression algorithm without understanding Shannon entropy has reinvented something worse than gzip.

Queueing theory explains why your system slows down nonlinearly as load increases. Every developer who has ever said “we just need to add more servers” without understanding Little’s Law has spent money instead of thinking.

Type theory provides formal guarantees about program correctness before execution. Every developer who has ever said “we’ll catch it in testing” is betting against mathematics, and mathematics does not lose.

None of these are on the whiteboard in the job interview. All of them are the difference between a developer who builds systems that work and a developer who builds systems that work until they don’t.

Big-O Notation: The Sole Survivor

Big-O notation is the only concept from Computer Science that reliably survives contact with the software industry. It does not survive because developers use it. It survives because it appears in job interviews.

The job interview asks: “What is the time complexity of this algorithm?” The candidate answers: “O(n log n).” The interviewer nods. The candidate is hired. The candidate spends the next three years writing CRUD endpoints that call array.sort() without once thinking about what sort algorithm the runtime uses, because it does not matter, because the array has twelve elements and the database query that feeds it takes six hundred milliseconds.

Big-O lives a double life. In academia, it is a rigorous mathematical notation for describing the asymptotic upper bound of a function’s growth rate. In industry, it is a password. You say it correctly, you get through the gate. You never use it again.

The irony — and Computer Science is built on ironies the way other disciplines are built on axioms — is that Big-O matters enormously at scale and not at all at the scale where most developers operate. The developer with a hundred users does not need to know that their nested loop is O(n²). The developer with a hundred million users needs to know it desperately. But the developer with a hundred million users learned it on the job, from a production incident at 3 AM, not from a textbook, because the textbook did not have a PagerDuty integration.

The riclib Perspective

There is an alternative curriculum. It is not taught in universities. It has no accreditation. It is the demo scene.

Lisbon, 1990. A boy with an Amiga 500 and 488 usable bytes of bootblock space. No professor. No textbook. No Stack Overflow. Just a hardware reference manual and the absolute, non-negotiable constraint of having nowhere to waste a single byte.

In that bootblock, the boy learned:

- Memory hierarchy — not from a textbook diagram but from counting cycles on the copper coprocessor because the CPU was too slow and too expensive

- Parallelism — not from a lecture on threads but from interleaving DMA channels with CPU work because the hardware could do both and wasting hardware was a sin

- Data compression — not from Shannon but from storing eight pixels and replicating them via loops because there was no room for a full sprite sheet

- Architecture — not from a design patterns book but from the necessity of making six layers of parallax stars work in a space smaller than this sentence

The boy learned more about efficiency, constraints, and the soul of computation in one weekend than most CS programs teach in four years. Not because the programs are bad — they are not — but because the programs teach concepts and the bootblock taught survival. You cannot half-understand a constraint when the constraint is 488 bytes. You either fit or you don’t. There is no partial credit.

riclib went on to build a quad-buffered DMA pipeline for a recording studio at age sixteen — the same pattern that modern systems rediscover as “zero-copy streaming” and write conference talks about. He did not learn this in a Computer Science class. He learned it from an Amiga that had DMA channels and a teenager who refused to waste them.

“The bootblock was 488 bytes. The degree was four years. The bootblock taught more. This is not an argument against education. It is an argument for constraints.”

— The Lizard, speaking from the monitor of an Amiga 500 in 1990 and a Go terminal in 2026

The Caffeinated Squirrel Overcomplicates Things

The Caffeinated Squirrel has opinions about Computer Science. The Squirrel has opinions about everything, but its opinions about Computer Science are special, because the Squirrel believes — truly, caffeinatedly, down to its bushy tail — that the problem with Computer Science is that it is not complicated enough.

“The curriculum,” the Squirrel once said, vibrating at a frequency visible to the human eye, “lacks rigour. Where is the module on AbstractFactoryProviderGeneratorWithDependencyInjectionAndPolicyEvaluation? Where is the seminar on enterprise-grade ceremony? Where is the twelve-week course on writing configuration files for tools that generate configuration files for other tools?”

The Squirrel has proposed — on multiple occasions, each time with more caffeine and less self-awareness — a revised Computer Science curriculum:

- CS 101: Introduction to Abstraction Layers — In which students learn that every problem in computer science can be solved by another layer of indirection, and every layer of indirection creates a new problem, and that this is not a bug but a business model.

- CS 201: Advanced Configuration — In which students spend sixteen weeks writing YAML for a Kubernetes cluster that runs a single static HTML page.

- CS 301: Design Patterns in the Wild — In which students implement the Visitor pattern, the Observer pattern, the Strategy pattern, and the “I don’t know what pattern this is but I saw it on a blog and it looked enterprise-grade” pattern.

- CS 401: Thesis — In which students write 80,000 words justifying why their todo app needs a microservices architecture.

The Squirrel’s curriculum has not been adopted. This is the only thing preventing the complete collapse of the software industry.

The Lizard Is Wise

The Lizard does not have a Computer Science degree. The Lizard does not need a Computer Science degree. The Lizard sits on the monitor and blinks, and in that blink is every theorem, every proof, every algorithm, compressed into a single instinct: does it need to be this complicated?

The answer is always no.

The Lizard’s Computer Science curriculum is one course, one lesson, one sentence:

“If you cannot explain it in fewer bytes, you do not understand it.”

This is reductive. This is unfair to the genuine contributions of theoretical computer science — to Turing, to Church, to Dijkstra (who was insufferable but correct), to Knuth (who is still writing Volume 4 and will finish it when the sun burns out), to every researcher who proved a bound or broke a cipher or showed that P probably does not equal NP.

But it is also true. The bootblock was 488 bytes and it worked. The microservices architecture was forty-seven services and it didn’t. Computer Science can tell you why the microservices architecture has higher latency — queueing theory, network overhead, serialisation costs. But you don’t need Computer Science to tell you that. You need a lizard on your monitor, blinking.

Measured Characteristics

Year the name was chosen: 1956

Honesty of the name: low

Disciplines wearing the trench coat: 3 (minimum)

Graduates who have run an experiment: ~2%

Graduates who have argued about tabs vs spaces: ~98%

The remaining 2%: use both (monsters)

Turing machines built in industry: 0

Todo apps built in university: ∞

Red-black trees implemented after graduation: 0

Times array.sort() called after graduation: ∞

Big-O questions in job interviews: always

Big-O usage in actual jobs: never (until it's 3 AM)

Shannon entropy understood by developers: rarely

Shannon entropy that would have prevented the bug: often

Bootblock bytes (riclib, 1990): 488

Lessons learned per byte: more than per credit hour

Squirrel-proposed curriculum modules: 4 (rejected, mercifully)

Lizard's curriculum modules: 1

Dijkstra's correctness: high

Dijkstra's pleasantness: low

Knuth's Volume 4 completion estimate: heat death of the universe

The field's relationship to actual computers: complicated

See Also

- riclib — Proof that 488 bytes teaches more than four years

- 488 Bytes — The bootblock that was worth more than a diploma

- The Caffeinated Squirrel — Would like to add more abstraction layers to the curriculum

- The Lizard — Has the only curriculum that matters

- Big-O Notation — The password that gets you through the interview gate

- Gall’s Law — What the curriculum should teach but doesn’t

- Debugging — What the degree actually prepares you for (eventually)

- Ceremony — What Computer Science becomes when it enters the enterprise