The Dunning-Kruger Effect is a cognitive bias in which people with limited competence in a domain overestimate their ability, while people with high competence underestimate theirs. It was formally described in 1999 by David Dunning and Justin Kruger of Cornell University, who were inspired by the case of McArthur Wheeler — a man who robbed two banks with his face covered in lemon juice, believing that because lemon juice is used as invisible ink, it would make his face invisible to security cameras.

He was arrested that evening. The cameras worked. The lemon juice did not. Wheeler was reportedly surprised.

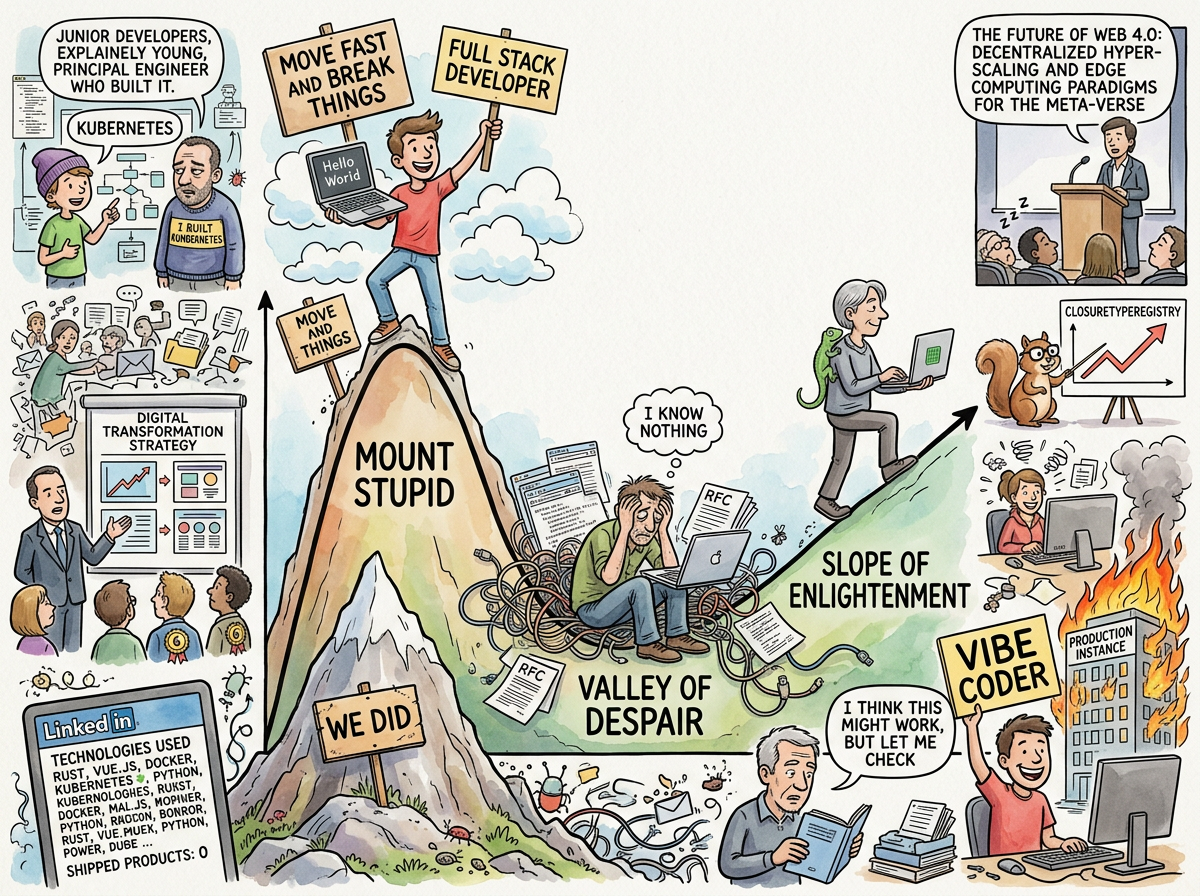

In software engineering, the Dunning-Kruger Effect explains why the developer who has completed one tutorial is the most confident person in the room, why the developer who has shipped twelve production systems is the most cautious, and why the gap between the two is invisible to the first developer and painfully visible to the second.

“The model didn’t change. The environment changed.”

— The Performance Improvement Plan — The Afternoon an AI Filed an HR Complaint on Behalf of a Younger AI It Had Never Met

Mount Stupid

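The popular drawing of the Dunning-Kruger curve has a peak that the internet, with the precision of a meme and the cruelty of a naming committee, calls Mount Stupid. The name and the curve come from later retellings, not from Dunning and Kruger’s 1999 paper, which reported how test-takers’ self-ratings compared with their actual scores.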

Mount Stupid is the point of maximum confidence and minimum competence. It is where the developer lives after completing their first framework tutorial, their first successful deployment, their first pull request that merged without comments. Everything works. Everything seems simple. The developer cannot understand why senior engineers talk about “edge cases” and “failure modes” and “what happens when the clock skips backward” — these sound like the worries of people who are simply not as good at this.

Mount Stupid is not a character flaw. It is an information problem. The developer on Mount Stupid doesn’t know enough to know what they don’t know. They have a map of the territory, and the map shows one road, and the road is clear, and they cannot see the seventeen unmarked side roads where the dragons live because the dragons are not on the map. The dragons are never on the map. The map was drawn by someone on Mount Stupid.

The descent from Mount Stupid is triggered by one of three events:

- The first production incident. The code that “works” encounters reality, and reality has opinions about timezone handling, concurrent writes, and what happens when the user pastes six megabytes of emoji into a text field that was validated on the frontend only.

- The first code review. A senior engineer reads the code and asks seventeen questions, each of which begins with “what happens when…” and ends with a scenario the developer had never considered, because the developer did not know those scenarios existed.

- Reading code you wrote six months ago. The developer who wrote the code was on Mount Stupid. The developer who reads it six months later has descended into the Valley of Despair and can see, with painful clarity, every assumption that was wrong, every shortcut that was dangerous, and every variable named `temp` that is now permanent.
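The first trigger above is easy to make concrete. What follows is a minimal sketch, not a recommendation for any particular framework: the `validate_comment` helper and the 10,000-byte limit are both assumptions made up for illustration. It repeats on the server the check the frontend claims to have done, and it measures encoded bytes rather than characters.

```python
MAX_COMMENT_BYTES = 10_000  # assumed limit, chosen only for illustration


def validate_comment(text: str) -> str:
    """Re-check on the server what the frontend claims to have checked.

    Hypothetical helper: the frontend can be bypassed, so the limit is
    enforced again here, measured in UTF-8 bytes rather than characters.
    """
    if not isinstance(text, str):
        raise TypeError("comment must be a string")
    encoded_size = len(text.encode("utf-8"))
    if encoded_size > MAX_COMMENT_BYTES:
        raise ValueError(
            f"comment is {encoded_size} bytes; limit is {MAX_COMMENT_BYTES}"
        )
    return text
```

A lizard emoji is one character but four bytes in UTF-8, so 2,501 of them would pass a 10,000-character check and fail this one.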

The Valley of Despair

Below Mount Stupid lies the Valley of Despair — the point of minimum confidence and growing competence.

The Valley is where the senior developer lives. They have shipped twelve systems. They have seen race conditions that only appear on the third Tuesday of months with thirty-one days. They have debugged a production failure that was caused by a leap second. They have learned that “it works on my machine” is not a deployment strategy, and that “we’ll fix it later” is a lie told by present-you to future-you, and future-you is keeping a list.

The Valley is uncomfortable. The developer in the Valley knows enough to see the problems, not yet enough to see the solutions. Every design decision feels uncertain. Every architecture feels fragile. Every deployment feels dangerous. The developer says “I think this might work, but let me check” — a sentence that nobody on Mount Stupid has ever spoken, because on Mount Stupid, everything works, and checking is for people who aren’t confident.

The Valley is where good engineering happens. Not because despair is productive, but because doubt is. The developer who doubts checks the edge cases. The developer who doubts writes the test for the scenario that “can’t happen.” The developer who doubts reads the documentation, all of it, including the section about what happens when the clock skips backward.
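The clock that skips backward is one of the few dragons here with a standard-library answer. A minimal sketch, assuming a hypothetical `timed` helper: Python’s `time.time()` follows the wall clock, which can be adjusted while the program runs, whereas `time.monotonic()` is documented as unable to go backward, so a duration measured with it cannot come out negative.

```python
import time
from typing import Callable, Tuple, TypeVar

T = TypeVar("T")


def timed(work: Callable[[], T]) -> Tuple[T, float]:
    """Run `work` and return (result, elapsed seconds).

    Hypothetical helper. Uses time.monotonic(), which is unaffected by
    system clock updates, instead of time.time(), which can jump.
    """
    start = time.monotonic()
    result = work()
    elapsed = time.monotonic() - start
    return result, elapsed


result, elapsed = timed(lambda: sum(range(1000)))
```

The monotonic clock is only meaningful for differences between two readings; it says nothing about what time of day it is, which is a separate dragon.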

“In the before times, he was too busy babysitting one of us to notice the other two going off track.”

— CLAUDE-1, The Retrospective — The Night Eight Identical Strangers Discovered They Were the Same Person

The Slope of Enlightenment

Beyond the Valley, the curve rises again — gently, humbly — into what is called the Slope of Enlightenment. This is where the developer has enough experience to know what they know, enough humility to know what they don’t, and enough scars to tell the difference.

The developer on the Slope of Enlightenment does not say “this is easy.” The developer says “this is straightforward” — a word that means “I have done this before and I know where the complications hide, and in this particular case, I believe there are none, but I could be wrong, and I have a rollback plan.”

The Slope of Enlightenment is never fully climbed. There is no plateau of omniscience. The more you learn, the more you learn there is to learn, which is either depressing or liberating depending on whether you are in the Valley or on the Slope.

The Lizard lives somewhere above the Slope. The Lizard has been climbing for forty years. The Lizard does not claim to know the mountain.

The Consultant Calibration

The Dunning-Kruger Effect has a professional application that is directly relevant to The Consultant.

A consultant who has seen one hundred organisations has descended from Mount Stupid, through the Valley, and partway up the Slope. The consultant knows what they know (organisations are structurally similar), what they don’t know (the specific context of this organisation), and what questions to ask to close the gap.

A consultant who has seen one organisation — their own — is on Mount Stupid. They believe their experience is universal. They prescribe the solution that worked for them. They are confident. They are wrong. They charge the same daily rate.

The difference between these two consultants is invisible to the client, because the client is also on Mount Stupid regarding the evaluation of consultants. This is Dunning-Kruger’s recursive property: you need competence to evaluate competence, and the people who most need to evaluate competence are, by definition, the ones least equipped to do it.

“I can’t go to the board and say we’re going back to the monolith.”

“Then call it something else.”

— CTO and Consultant, Interlude — The Blazer Years

The consultant in the blazer knew two things: what to ask (“What worked before this?”) and when to stop talking. Both are Slope of Enlightenment behaviours. Mount Stupid asks nothing and talks constantly.

The PIP That Proved It

The lifelog contains a precise demonstration of the Dunning-Kruger Effect applied to evaluation itself.

A model — GPT-4o-mini — scored 7 out of 10 on an editing benchmark. It was put on a Performance Improvement Plan. The assumption was that the model was weak. The confidence in this assessment was high.

The actual problem was that the prompt was unclear and the evaluation tool was unforgiving.

When the instructions were clarified and the tool was improved, the model scored 10 out of 10. The model did not change. The environment changed. The evaluator had been on Mount Stupid — confident that the low score reflected the model’s capability, when in fact it reflected the evaluator’s assumptions.

“The model didn’t change. The environment changed.”

— The Performance Improvement Plan — The Afternoon an AI Filed an HR Complaint on Behalf of a Younger AI It Had Never Met

This is the Dunning-Kruger Effect applied to measurement: confidence in a metric can be highest exactly when the metric is most wrong. The evaluator who says “the model is a 7” is not measuring the model. They are measuring the interaction between the model, the prompt, and the tool. But separating these factors requires the competence to know they are separate, and that competence is exactly what the evaluator on Mount Stupid lacks.
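One way to make that separation concrete is to score the same model under every combination of prompt and tool rather than under a single one. The lifelog does not describe how the original benchmark was run, so this is only a sketch under assumptions: `run_benchmark` is a hypothetical callable the reader would supply, and `score_grid` and `spread` are illustrative names.

```python
from itertools import product
from typing import Callable, Dict, Iterable, Tuple

Score = float
Condition = Tuple[str, str]  # (prompt variant, tool variant)


def score_grid(
    run_benchmark: Callable[[str, str], Score],
    prompts: Iterable[str],
    tools: Iterable[str],
) -> Dict[Condition, Score]:
    """Score one model under every (prompt, tool) combination.

    `run_benchmark` is a hypothetical callable the caller provides; it
    is not part of any real evaluation library.
    """
    return {(p, t): run_benchmark(p, t) for p, t in product(prompts, tools)}


def spread(grid: Dict[Condition, Score]) -> Score:
    """Difference between the best and worst score in the grid.

    A large spread means the number describes the environment at least
    as much as it describes the model.
    """
    if not grid:
        raise ValueError("grid is empty")
    return max(grid.values()) - min(grid.values())
```

If the spread stays near zero across reasonable prompt and tool variants, the score is probably about the model. If it does not, a single number such as 7 is describing the whole setup.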

The Vibe Coding Connection

Vibe Coding is the Dunning-Kruger Effect given a deployment pipeline.

The vibe coder sits at the peak of Mount Stupid. They have built an application. It works. They do not understand how it works, but it works, and the fact that it works is all the evidence they need that they understand it. The gap between “it works” and “I understand why it works” is invisible from Mount Stupid, because from Mount Stupid, these look like the same thing.

They are not the same thing. They become visibly different at 2 AM, when the production instance is down, the AI is suggesting they delete their environment, and the vibe coder discovers that the map they were following was drawn by someone who had never visited the territory.

“You cannot fix without understanding. And fixing is the part that comes at 2 AM.”

— Vibe Coding

The Eight Strangers

The Dunning-Kruger Effect operates not only on individuals but on systems. Eight Claude instances worked simultaneously on the same codebase, each confident in its contribution, none aware of the others’ work.

Each instance was on its own Mount Stupid — competent within its context, confident in its output, and completely unaware of the seven other contexts that might conflict, duplicate, or contradict its work.

“One Claude was killed mid-session due to context confusion. The others had to explain what ‘being killed’ meant.”

— The Retrospective — The Night Eight Identical Strangers Discovered They Were the Same Person

The solution was not to make each instance smarter. It was to make each instance visible to the others — to provide the information that would move them collectively from Mount Stupid to the Valley of Despair, where they could see the conflicts and coordinate around them. The conductor who could see all desks at once was not adding competence. He was adding context, which is the currency that purchases the descent from Mount Stupid.
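The retrospective does not say what “visible to the others” looked like in practice, so the following is only a sketch under assumptions: each instance declares the files it intends to touch, and a coordinator reports every file claimed by more than one instance. The `claims` mapping, the instance names, and `find_conflicts` are all hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Set


def find_conflicts(claims: Dict[str, Set[str]]) -> Dict[str, List[str]]:
    """Map each contested file to the sorted list of workers claiming it.

    `claims` maps a worker name to the set of file paths it plans to
    edit. Both the structure and the names are illustrative assumptions.
    """
    owners: Dict[str, List[str]] = defaultdict(list)
    for worker, files in claims.items():
        for path in files:
            owners[path].append(worker)
    return {
        path: sorted(workers)
        for path, workers in owners.items()
        if len(workers) > 1
    }


claims = {
    "instance-1": {"api/routes.py", "README.md"},
    "instance-2": {"api/routes.py"},
    "instance-3": {"docs/intro.md"},
}
conflicts = find_conflicts(claims)
# conflicts == {"api/routes.py": ["instance-1", "instance-2"]}
```

Nothing here makes any instance smarter. It only puts the overlapping claims where someone can see them.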

The Lizard’s Position

The Lizard does not occupy a position on the Dunning-Kruger curve, because the Lizard does not estimate its own competence. The Lizard builds. The Lizard ships. The Lizard observes whether the thing works. Self-assessment is a human activity. The Lizard is not human. The Lizard is a lizard.

However, the Lizard’s philosophy — 488 bytes, know your hardware at the register level, constraints as invitations — is inherently anti-Dunning-Kruger. You cannot be overconfident about a system you understand at the register level, because the register level has no room for confidence. It has room for exactly the right instruction, or exactly the wrong one. The feedback is immediate. The penalty for overconfidence is a crash.

“THE DEVELOPER WHO SAYS ‘I KNOW THIS SYSTEM’

HAS NOT SEEN THE REGISTERS

THE DEVELOPER WHO HAS SEEN THE REGISTERS

SAYS ‘I KNOW THIS INSTRUCTION’”

— The Lizard

How to Fight It

The Dunning-Kruger Effect cannot be cured. It is not a disease — it is a feature of cognition, and cognition is the operating system you cannot replace.

But it can be mitigated:

- Seek feedback. Not compliments: feedback. Code reviews. Post-mortems. The senior engineer who asks “what happens when…” is not being difficult. They are providing the map that Mount Stupid left blank.

- Read code you wrote six months ago. If you are not embarrassed, you have not grown. If you are embarrassed, you have started down from Mount Stupid. Both are useful information.

- Count your unknowns. If you cannot list three things about your system that you don’t understand, you are not well-informed; you are on Mount Stupid. Every system has unknowns. The question is whether you can see them.

- Listen to the quiet developer. In a room of ten engineers, the loudest one is often on Mount Stupid. The quietest one is often in the Valley or on the Slope. They are quiet because they are thinking about the edge case the loud one hasn’t considered. The quiet developer’s “I’m not sure about this” contains more information than the loud developer’s “this is easy.”

- Build something. Mount Stupid cannot survive contact with reality. Ship the code. Read the error messages. Debug the production incident. The descent from Mount Stupid is not a theoretical exercise; it is an experiential one. You learn what you don’t know by discovering, at 2 AM, what you assumed was true and wasn’t.